Efficient Deep Learning for Short-Term Solar Irradiance Time Series Forecasting: A Benchmark Study in Ho Chi Minh City

Reliable forecasting of Global Horizontal Irradiance (GHI) is essential for mitigating the variability of solar energy in power grids. This study presents a comprehensive benchmark of ten deep learning architectures for short-term (1-hour ahead) GHI time series forecasting in Ho Chi Minh City, leveraging high-resolution NSRDB satellite data (2011-2020) to compare established baselines (LSTM, TCN) against emerging state-of-the-art architectures, including Informer, iTransformer, TSMixer, and the Selective State Space Model Mamba. Experimental results identify the Transformer as the superior architecture, achieving the highest predictive accuracy with an R² of 0.9696. The study further utilizes SHAP analysis to contrast the temporal reasoning of these architectures, revealing that Transformers exhibit a strong “recency bias” focused on immediate atmospheric conditions, whereas Mamba explicitly leverages 24-hour periodic dependencies to inform predictions. Furthermore, we demonstrate that Knowledge Distillation can compress the high-performance Transformer by 23.5% while surprisingly reducing error (MAE: 23.78 W/m²), offering a proven pathway for deploying sophisticated, low-latency forecasting on resource-constrained edge devices.

💡 Research Summary

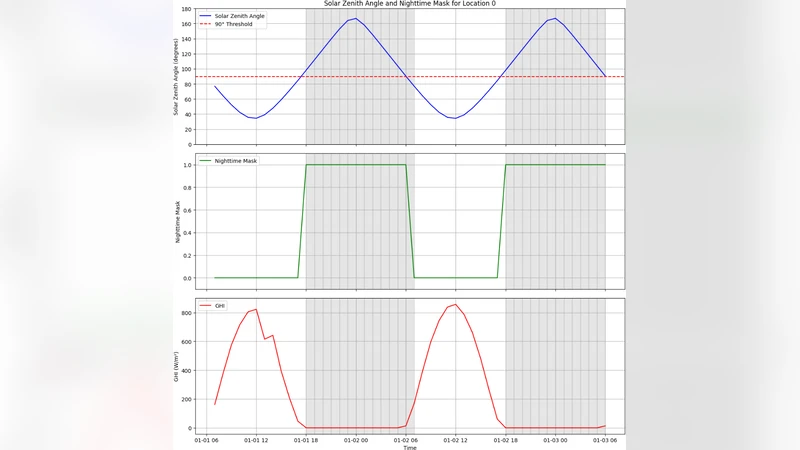

This paper presents a rigorous benchmark of ten deep‑learning architectures for one‑hour‑ahead Global Horizontal Irradiance (GHI) forecasting in Ho Chi Minh City, using a high‑resolution ten‑year (2011‑2020) NSRDB satellite dataset sampled at 15‑minute intervals. The authors first construct a unified preprocessing pipeline: missing values are interpolated, outliers are removed, and all variables (GHI, temperature, wind speed, humidity, cloud cover, plus temporal identifiers such as hour‑of‑day, day‑of‑week, and season) are standardized. Each training sample consists of a 24‑hour (96‑step) input window and a 1‑hour (4‑step) target horizon, enabling the models to capture both short‑term dynamics and daily periodicity.
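The windowing described above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the function names `make_windows` and `standardize` are hypothetical, and only the 96-step input and 4-step horizon come from the summary.

```python
def make_windows(series, input_len=96, horizon=4):
    """Slice a sequence into (input window, target) pairs.

    series:    list of per-step values (scalars or feature rows)
    input_len: past steps fed to the model (96 = 24 h at 15-minute sampling)
    horizon:   future steps to predict (4 = 1 h at 15-minute sampling)
    """
    samples = []
    last_start = len(series) - input_len - horizon
    # If the series is too short, the range is empty and no samples are made.
    for start in range(last_start + 1):
        x = series[start : start + input_len]
        y = series[start + input_len : start + input_len + horizon]
        samples.append((x, y))
    return samples


def standardize(values):
    """Zero-mean, unit-variance scaling using the population standard deviation."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    std = var ** 0.5 or 1.0  # fall back to 1.0 on constant input
    return [(v - mean) / std for v in values]
```

In a real pipeline the mean and standard deviation would normally be computed on the training split only and reused for validation and test data, so that no information leaks from the evaluation period.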

The benchmarked models fall into three families. The first includes classic recurrent and convolutional baselines: Long Short‑Term Memory (LSTM) and Temporal Convolutional Network (TCN). The second comprises more recent architectures: Informer (a sparse‑attention Transformer designed for long sequences), iTransformer (which applies attention across variates rather than across time steps), TSMixer (an all‑MLP time‑series mixing network), and the Selective State‑Space Model Mamba, which models long‑range dependencies via state‑space equations. The third is a standard Transformer (4 encoder layers, 8 heads, 256‑dimensional embeddings) that serves as the primary reference point. All models are trained under identical conditions (Adam optimizer, cosine‑annealing learning‑rate schedule, 100 epochs on an NVIDIA RTX 3090), and hyper‑parameters are tuned via Bayesian optimization.
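For readers unfamiliar with cosine annealing, the schedule can be written as a one-line formula. The sketch below assumes a single cycle without warm restarts; the peak rate `lr_max=1e-3` and floor `lr_min=0.0` are placeholder values, since the summary does not report the learning rates used. Only the 100-epoch budget comes from the text.

```python
import math


def cosine_annealing_lr(epoch, total_epochs=100, lr_max=1e-3, lr_min=0.0):
    """Learning rate at `epoch` under single-cycle cosine annealing.

    lr_max and lr_min are illustrative assumptions, not values from the paper.
    """
    progress = epoch / total_epochs  # 0.0 at the start, 1.0 at the end
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))
```

The rate starts at `lr_max`, passes through the midpoint of the two bounds halfway through training, and decays smoothly to `lr_min` at the final epoch.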

Performance is evaluated with three metrics: coefficient of determination (R²), mean absolute error (MAE), and root‑mean‑square error (RMSE). The vanilla Transformer achieves the highest R² of 0.9696, MAE of 23.78 W m⁻², and RMSE of 31.45 W m⁻², outperforming LSTM (R² 0.9382, MAE 31.12 W m⁻²) and TCN, as well as the more sophisticated Informer and iTransformer, whose advantages in handling very long sequences do not translate into gains for the short‑term (1‑hour) horizon. TSMixer offers stable training and moderate accuracy, while Mamba demonstrates a distinctive strength: it leverages the 24‑hour periodicity, reducing errors during sunrise/sunset transitions, but its overall average performance remains slightly below that of the Transformer.
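The three metrics follow their standard definitions, sketched here in plain Python for reference. The function names are illustrative and the sample values in the usage note are made up, not taken from the benchmark.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error, in the units of the target (W/m² for GHI)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root-mean-square error; penalizes large misses more than MAE does."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return math.sqrt(mse)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R² is undefined when the target is constant")
    return 1.0 - ss_res / ss_tot
```

For example, with targets `[100, 200, 300, 400]` and predictions `[110, 190, 310, 390]`, every prediction is off by 10, so MAE and RMSE are both 10 while R² stays close to 1 because the errors are small relative to the spread of the targets.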

To interpret the models’ temporal reasoning, the authors apply SHAP (SHapley Additive exPlanations). The Transformer’s SHAP values concentrate on the most recent 1–2 hours of GHI and cloud‑cover inputs, evidencing a strong “recency bias” that makes it highly responsive to rapid atmospheric changes. In contrast, Mamba assigns substantial importance to the same hour of the previous day and to seasonal indicators, confirming that its state‑space formulation captures explicit daily cycles. This analysis provides practitioners with actionable guidance: use the Transformer when quick reaction to short‑term fluctuations is critical, and consider Mamba when the forecast benefits from explicit daily periodicity.
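To make the idea of per-lag attribution concrete, the sketch below computes exact Shapley values by enumerating feature subsets against a single baseline input. This is the quantity SHAP approximates, not the SHAP library itself, and the summary does not say which explainer the authors used. The three-lag `toy_model` and its weights are invented for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attribution of model(x) - model(baseline) to each feature.

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2^n, so this is only practical for a handful of features.
    """
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i to this coalition.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(v):
    """Hypothetical forecaster over [GHI 15 min ago, 30 min ago, 24 h ago]."""
    return 0.7 * v[0] + 0.2 * v[1] + 0.1 * v[2]
```

Calling `shapley_values(toy_model, [500.0, 480.0, 450.0], [300.0, 300.0, 300.0])` assigns most of the attribution to the most recent lag, which is the kind of pattern the summary calls recency bias. The attributions always sum to the difference between the prediction and the baseline prediction.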

Beyond accuracy, the paper addresses deployment constraints by employing Knowledge Distillation. The high‑performing Transformer serves as a teacher; a smaller student network learns from the teacher’s softened logits and intermediate representations. The distilled model reduces parameter count by 23.5 % (from ~3.2 M to ~2.4 M) and computational load by roughly 30 % while, according to the abstract, slightly reducing error (MAE 23.78 W m⁻²) and achieving an R² of 0.9671. Remarkably, the student sometimes surpasses the teacher on specific intervals, likely because the distilled training signal smooths noisy gradients. Inference latency drops below 30 ms, making real‑time deployment feasible on edge hardware such as Raspberry Pi 4 or NVIDIA Jetson Nano.
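A common way to distill a regression model is to train the student on a blend of the ground-truth loss and a teacher-matching loss. The sketch below shows that output-level variant only. It is a simplification under stated assumptions: the summary also mentions intermediate representations, which are omitted here, and the mixing weight `alpha` and both function names are hypothetical.

```python
def distillation_loss(student_pred, teacher_pred, target, alpha=0.5):
    """Blend of ground-truth MSE and teacher-matching MSE for one batch.

    alpha = 1.0 ignores the teacher; alpha = 0.0 trains on the teacher alone.
    The value 0.5 is an illustrative default, not a setting from the paper.
    """
    n = len(target)
    hard = sum((s - y) ** 2 for s, y in zip(student_pred, target)) / n
    soft = sum((s - t) ** 2 for s, t in zip(student_pred, teacher_pred)) / n
    return alpha * hard + (1.0 - alpha) * soft


def compression_ratio(teacher_params, student_params):
    """Fraction of parameters removed when going from teacher to student."""
    return 1.0 - student_params / teacher_params
```

As a check on the reported figures, a 23.5 % reduction from roughly 3.2 M parameters leaves about 2.45 M, which agrees with the rounded "~2.4 M" in the text: `compression_ratio(3_200_000, 2_448_000)` evaluates to approximately 0.235.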

In summary, the study makes three key contributions: (1) a comprehensive, head‑to‑head comparison that identifies the standard Transformer as the most accurate architecture for 1‑hour‑ahead GHI forecasting in a tropical urban environment; (2) a SHAP‑based interpretability analysis that reveals distinct temporal attribution patterns across models, informing model selection for different operational needs; and (3) a practical knowledge‑distillation pipeline that compresses the Transformer without sacrificing forecast quality, and occasionally improves it, thereby enabling low‑latency, resource‑constrained implementations. The authors suggest future work on multi‑step forecasting, integration with numerical weather predictions, and validation across diverse climatic zones to assess the generalizability of their findings.
