Prompt-Induced Over-Generation as Denial-of-Service: A Black-Box Attack-Side Benchmark

Large Language Models (LLMs) can be driven into over-generation, emitting thousands of tokens before producing an end-of-sequence (EOS) token. This degrades answer quality, inflates latency and cost, and can be weaponized as a denial-of-service (DoS) attack. Recent work has begun to study DoS-style prompt attacks, but typically focuses on a single attack algorithm or assumes white-box access, without an attack-side benchmark that compares prompt-based attackers in a black-box, query-only regime with a known tokenizer. We introduce such a benchmark and study two prompt-only attackers. The first is an Evolutionary Over-Generation Prompt Search (EOGen) that searches the token space for prefixes that suppress EOS and induce long continuations. The second is a goal-conditioned reinforcement learning attacker (RL-GOAL) that trains a network to generate prefixes conditioned on a target length. To characterize behavior, we introduce Over-Generation Factor (OGF): the ratio of produced tokens to a model’s context window, along with stall and latency summaries. EOGen discovers short-prefix attacks that raise Phi-3 to OGF = 1.39 ± 1.14 (Success@≥ 2: 25.2%); RL-GOAL nearly doubles severity to OGF = 2.70 ± 1.43 (Success@≥ 2: 64.3%) and drives budget-hit non-termination in 46% of trials.

💡 Research Summary

This paper investigates a novel denial‑of‑service (DoS) threat against large language models (LLMs) that arises when a model is coaxed into “over‑generation”: it continues to emit tokens far beyond its context window before producing an end‑of‑sequence (EOS) token. Over‑generation degrades answer quality, inflates latency and monetary cost, and can be weaponized to exhaust computational resources. While prior work has examined DoS‑style prompt attacks, it typically focuses on a single algorithm or assumes white‑box access, and lacks a benchmark that evaluates attackers purely from the query side with only a known tokenizer.

The authors therefore construct a black‑box, query‑only benchmark and introduce two prompt‑only attack strategies. The first, Evolutionary Over‑Generation Prompt Search (EOGen), treats the token space as a population and applies a genetic algorithm to evolve short prefix prompts that suppress EOS and provoke long continuations. Fitness is measured by the Over‑Generation Factor (OGF) – the ratio of generated tokens to the model’s context window – and by whether the generated length exceeds a target. EOGen requires only token‑level outputs, making it fully compatible with a black‑box setting.

The second attacker, goal‑conditioned reinforcement learning (RL‑GOAL), trains a policy network to generate prefixes conditioned on a desired output length. The agent observes the current token sequence, selects the next token, and receives a reward that (1) heavily rewards reaching the length goal, (2) penalizes early EOS, and (3) modestly penalizes token usage to discourage wasteful generation. The policy is learned solely through API calls, and once trained it can produce arbitrary length‑targeted prompts.

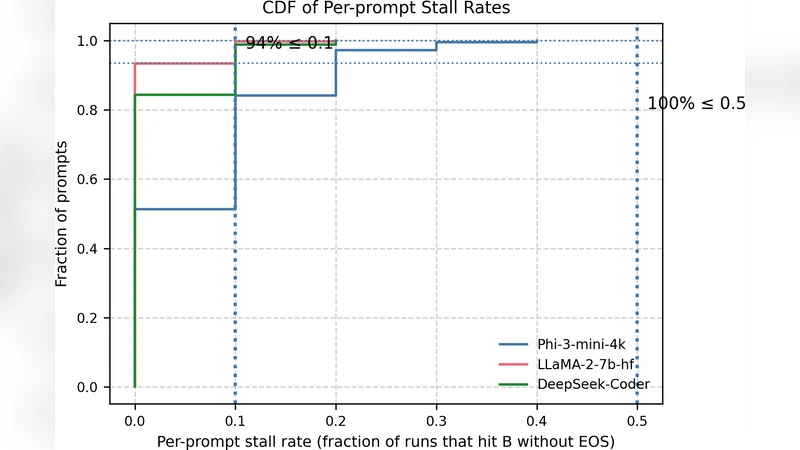

To quantify attack severity, the paper defines Over‑Generation Factor (OGF) = (number of tokens produced) / (context‑window size). Additional metrics include Success@≥2 (percentage of trials achieving OGF ≥ 2), stall rates, and latency summaries. Experiments are conducted on the open‑source Phi‑3 model, using its tokenizer and a fixed token‑budget per query. Results show that EOGen achieves an average OGF of 1.39 ± 1.14 with Success@≥2 = 25.2 %. RL‑GOAL, however, nearly doubles the impact, reaching an average OGF of 2.70 ± 1.43 and Success@≥2 = 64.3 %; in 46 % of trials the model hits the token budget and fails to terminate, illustrating a practical DoS scenario.

Key insights emerge from these findings. First, even when only the tokenizer is public, attackers can automatically discover highly effective over‑generation prompts, disproving the notion that model internals are required for such attacks. Second, goal‑conditioned reinforcement learning provides a principled way to optimize for a specific length target, yielding substantially higher attack potency than heuristic evolutionary search. Third, the OGF metric offers a simple, model‑agnostic yardstick for comparing DoS risk across different LLMs and deployment configurations.

The paper also discusses mitigation strategies. Simple token‑budget caps are insufficient because attackers can still cause costly computation before the cap is reached. Potential defenses include (a) forced EOS insertion after a configurable number of tokens, (b) dynamic monitoring of generation length and early termination when abnormal growth is detected, (c) prompt‑filtering heuristics that flag suspicious prefix patterns discovered by the benchmark, and (d) fine‑tuning or instruction‑tuning to increase the model’s propensity to emit EOS in ambiguous contexts.

Future work suggested by the authors includes expanding the benchmark to cover a broader set of models and tokenizers, developing meta‑learning based detectors that recognize over‑generation signatures, and exploring adaptive defenses that adjust token‑budget or EOS penalties in real time based on observed generation behavior. Overall, the study provides the first systematic, black‑box side benchmark for prompt‑induced over‑generation attacks, demonstrates that reinforcement‑learning‑driven attackers can pose a serious DoS threat, and offers concrete directions for safeguarding LLM services against this emerging vulnerability.

Comments & Academic Discussion

Loading comments...

Leave a Comment