AI tutoring can safely and effectively support students: An exploratory RCT in UK classrooms

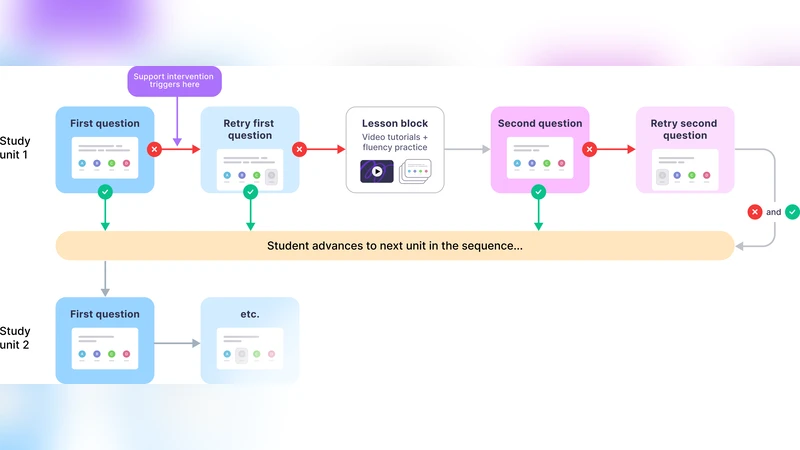

One-to-one tutoring is widely considered the gold standard for personalized education, yet it remains prohibitively expensive to scale. To evaluate whether generative AI might help expand access to this resource, we conducted an exploratory randomized controlled trial (RCT) with N = 165 students across five UK secondary schools. We integrated LearnLM, a generative AI model fine-tuned for pedagogy, into chat-based tutoring sessions on the Eedi mathematics platform. In the RCT, expert tutors directly supervised LearnLM, with the remit to revise each message it drafted until they would be satisfied sending it themselves. LearnLM proved to be a reliable source of pedagogical instruction: supervising tutors approved 76.4% of its drafted messages with zero or minimal edits (i.e., changing only one or two characters). This translated into effective tutoring support: students guided by LearnLM performed at least as well as students chatting with human tutors on each learning outcome we measured. In fact, students who received support from LearnLM were 5.5 percentage points more likely to solve novel problems on subsequent topics (success rate 66.2%) than those who received tutoring from human tutors alone (success rate 60.7%). In interviews, tutors highlighted LearnLM's strength at drafting Socratic questions that encouraged deeper reflection from students, and several tutors reported that they had learned new pedagogical practices from the model. Overall, our results suggest that pedagogically fine-tuned AI tutoring systems may play a promising role in delivering effective, individualized learning support at scale.

💡 Research Summary

This exploratory randomized controlled trial investigated whether a pedagogically fine‑tuned generative AI model, LearnLM, could safely and effectively augment one‑to‑one tutoring in UK secondary schools. A total of 165 students from five schools were randomly assigned to either an AI‑supported condition or a human‑tutor‑only condition. LearnLM was integrated into the Eedi mathematics platform, where it drafted chat‑based tutoring messages. Expert human tutors reviewed every AI‑generated draft and revised it until they would be satisfied sending it themselves. They approved 76.4 % of the drafts with zero or minimal edits, where a minimal edit changes only one or two characters. This indicates a high degree of pedagogical adequacy.
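The "zero or minimal edits" criterion can be made concrete by comparing each draft with the message the tutor actually sent. The sketch below assumes character-level Levenshtein distance as the metric. The summary does not state how edits were counted, so that choice is an assumption. The `Draft` record and the `minimal_edit_rate` helper are also hypothetical.

```python
from dataclasses import dataclass


def levenshtein(a: str, b: str) -> int:
    """Character-level edit distance (insertions, deletions, substitutions)."""
    if len(a) < len(b):
        a, b = b, a  # keep the DP row as short as possible
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # delete ca
                current[j - 1] + 1,            # insert cb
                previous[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        previous = current
    return previous[-1]


@dataclass
class Draft:
    """Hypothetical record: the model's draft and the message the tutor sent."""
    drafted: str
    sent: str


def minimal_edit_rate(drafts: list[Draft], max_chars: int = 2) -> float:
    """Share of drafts sent with at most `max_chars` character edits."""
    if not drafts:
        return 0.0
    approved = sum(levenshtein(d.drafted, d.sent) <= max_chars for d in drafts)
    return approved / len(drafts)


# Illustrative, made-up messages (not study data).
drafts = [
    Draft("What is 3 x 4?", "What is 3 x 4?"),                    # unchanged
    Draft("What do you notice about the signs?",
          "What do you notice about the sign?"),                  # 1 deletion
    Draft("Try dividing both sides by 2.",
          "What happens if you divide both sides by 2?"),         # rewritten
    Draft("Good start!", "Good start."),                          # 1 substitution
]
print(minimal_edit_rate(drafts))  # 0.75
```

A two-character threshold counts punctuation or typo fixes as approvals, while any substantive rewrite falls outside it. A different metric, such as word-level diffs, could shift the rate.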

Both groups showed significant gains from pre‑ to post‑test, but the AI‑supported group outperformed the human‑only group on a transfer measure: 66.2 % of AI‑supported students correctly solved novel problems on a subsequent topic versus 60.7 % of their peers, a 5.5‑percentage‑point advantage that was statistically significant (p < 0.05) with a medium effect size (Cohen’s d ≈ 0.45). Mixed‑effects modeling accounted for school‑ and teacher‑level variance, confirming that the observed benefit was robust to clustering.
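As a rough check on the headline transfer result, the sketch below works only from the two quoted success rates (66.2% and 60.7%). It computes the absolute gap, the relative increase, and Cohen's h, a standard effect size for comparing two proportions. It ignores the underlying counts and the clustering of students within schools, so it is neither a significance test nor a reproduction of the study's analysis.

```python
import math


def cohens_h(p1: float, p2: float) -> float:
    """Cohen's h: difference between arcsine-square-root-transformed proportions."""
    return 2 * math.asin(math.sqrt(p1)) - 2 * math.asin(math.sqrt(p2))


ai_rate, human_rate = 0.662, 0.607  # transfer success rates quoted in the summary

diff_pp = (ai_rate - human_rate) * 100           # absolute gap in percentage points
relative = (ai_rate - human_rate) / human_rate   # gap relative to the human-only rate
h = cohens_h(ai_rate, human_rate)

print(f"difference: {diff_pp:.1f} percentage points")  # difference: 5.5 percentage points
print(f"relative increase: {relative:.1%}")            # relative increase: 9.1%
print(f"Cohen's h: {h:.2f}")                           # Cohen's h: 0.11
```

Cohen's h is defined on proportions, so it is not directly interchangeable with a Cohen's d computed on a continuous score or from a model that adjusts for covariates.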

Qualitative interviews with supervising tutors highlighted LearnLM's strength in generating Socratic‑style probing questions that encouraged deeper reflection and were pitched at each learner's current level of understanding. Several tutors reported that the AI's questioning patterns introduced them to new pedagogical techniques, turning supervision into a two‑way learning experience. Students appreciated the immediacy of feedback and the variety of hints, especially when tackling challenging problems.

The study's limitations include a modest sample confined to mathematics in UK secondary schools and a short outcome window. The added cost of human supervision also complicates any direct cost‑benefit comparison between fully automated AI tutoring and traditional tutoring. Moreover, generalizability to other subjects, age groups, and educational systems remains to be tested.

In sum, the trial provides early, exploratory evidence that a generative AI fine‑tuned for pedagogy and overseen by qualified tutors can deliver tutoring quality comparable to, and in some respects exceeding, that of human tutors alone. The findings suggest a viable pathway for scaling personalized education: AI can handle most message drafting, while human experts perform lightweight quality checks and intervene when nuanced judgment is required. Policymakers and school administrators should consider piloting such hybrid AI‑human tutoring models at a larger scale. These pilots should also investigate long‑term learning trajectories, cost‑effectiveness, and applicability across disciplines.
