Joint Link Adaptation and Device Scheduling Approach for URLLC Industrial IoT Network: A DRL-based Method with Bayesian Optimization

In this article, we consider an industrial internet of things (IIoT) network that supports dynamic ultra-reliable low-latency communication (URLLC) for multiple devices under imperfect channel state information (CSI). We provide a joint link adaptation (LA) and device scheduling design, where scheduling includes the serving order, with the aim of maximizing the total transmission rate under strict block error rate (BLER) constraints. In particular, we propose a Bayesian optimization (BO) driven Twin Delayed Deep Deterministic Policy Gradient (TD3) method, which adaptively determines the device serving order and the corresponding modulation and coding scheme (MCS) based on the imperfect CSI. Note that the imperfection of the CSI, the imbalance of error samples in URLLC networks, and the parameter sensitivity of the TD3 algorithm are likely to diminish the algorithm’s convergence speed and reliability. To address this issue, we propose a BO-based training mechanism that improves convergence speed by providing a more reliable learning direction and a sample selection method to tackle the sample imbalance problem. Via extensive simulations, we show that the proposed algorithm achieves faster convergence and higher sum-rate performance than existing solutions.

Research Summary

The paper addresses the challenging problem of providing ultra‑reliable low‑latency communication (URLLC) for a large number of industrial IoT (IIoT) devices when the channel state information (CSI) is imperfect. In such a scenario, the network must jointly decide (i) the order in which devices are served and (ii) the modulation and coding scheme (MCS) for each transmission, while guaranteeing a strict block error rate (BLER) constraint (e.g., ≤10⁻⁵). The objective is to maximize the sum‑rate across all devices.

System Model and Problem Formulation

The authors consider a time‑slot based system where N devices share the same frequency resource. Each device i receives a pair of decisions: a scheduling position π(i) (the order in the current slot) and a continuous MCS parameter that determines the spectral efficiency. The actual channel gain \(h_i\) is observed through an estimate \(\hat{h}_i\) corrupted by Gaussian estimation error, leading to a probabilistic BLER that depends on both the chosen MCS and the CSI error. The optimization problem is a constrained maximization of \(\sum_i R_i(\pi, \mathrm{MCS})\) subject to BLER ≤ ε for every device.
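The formulation described above can be written compactly as follows. This is a sketch in notation assumed here rather than taken from the paper: \(m_i\) denotes the MCS parameter of device i, \(e_i\) the CSI estimation error with variance \(\sigma_e^2\), and the additive error model is an assumption consistent with "Gaussian estimation error".

```latex
\begin{aligned}
\max_{\pi,\,\{m_i\}} \quad & \sum_{i=1}^{N} R_i(\pi, m_i) \\
\text{s.t.} \quad & \mathrm{BLER}_i(\pi, m_i \mid \hat{h}_i) \le \varepsilon, \qquad i = 1, \dots, N, \\
& \hat{h}_i = h_i + e_i, \qquad e_i \sim \mathcal{N}(0, \sigma_e^2).
\end{aligned}
```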

Why Conventional Approaches Fail

Traditional solutions either assume perfect CSI, treat scheduling and link adaptation separately, or rely on discrete‑action reinforcement learning (e.g., DQN). In realistic IIoT deployments, CSI is noisy, the channel varies quickly, and URLLC traffic yields an extremely imbalanced training set: successful transmissions dominate while error samples (which are crucial for learning the reliability constraint) are scarce. Consequently, learning algorithms can converge slowly, become trapped in sub‑optimal policies, or violate the BLER requirement.
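To get a feel for how scarce error samples are, the short calculation below assumes that every stored transition fails independently with probability equal to the BLER target. The buffer size of 100,000 is a hypothetical value chosen for illustration, not a figure from the paper.

```python
def error_sample_stats(bler, n_samples):
    """Expected number of error samples and probability of seeing none,
    assuming independent errors with probability `bler` per sample."""
    expected_errors = bler * n_samples
    p_no_error = (1.0 - bler) ** n_samples
    return expected_errors, p_no_error

# Hypothetical replay buffer of 100,000 transitions at a 1e-5 BLER target.
expected, p_none = error_sample_stats(1e-5, 100_000)
print(f"expected error samples: {expected:.2f}, P(no error sample): {p_none:.3f}")
```

Under these assumptions the buffer holds about one error sample on average, and roughly 37 % of such buffers contain none at all, which is why uniform sampling gives the learner very little signal about the reliability constraint.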

Proposed Method: BO‑Driven Twin Delayed Deep Deterministic Policy Gradient (TD3)

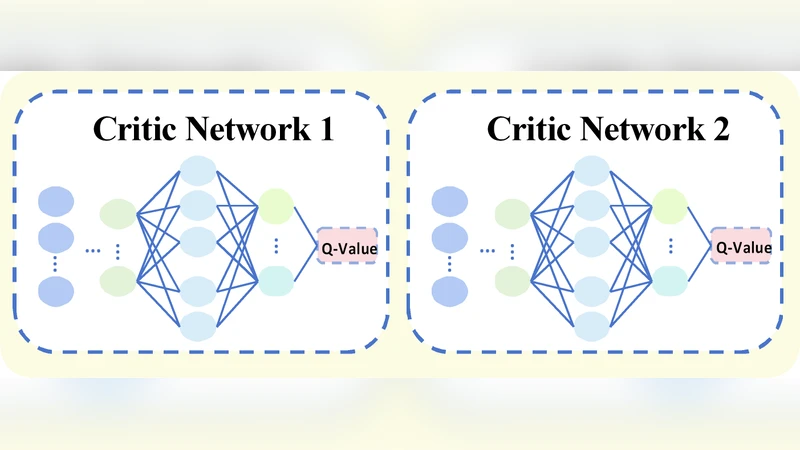

To overcome these issues, the authors adopt Twin Delayed Deep Deterministic Policy Gradient (TD3), a continuous‑action actor‑critic algorithm that mitigates overestimation bias by using two critic networks and delayed policy updates. TD3 can output both a real‑valued MCS scaling factor and a permutation vector for device ordering. However, TD3 is highly sensitive to hyper‑parameters (learning rates, exploration noise, target‑network update frequency) and suffers from poor exploration when the initial experience replay buffer is dominated by successful (low‑error) samples.
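The two TD3 ingredients named above can be sketched in a few lines of NumPy. The target computation and target-policy smoothing follow the standard TD3 recipe. The `decode_action` helper is purely an assumption about how a real-valued actor output could be turned into a serving order and MCS indices (sorting per-device scores, then rounding); the summary does not state the paper's actual mapping, and all function and parameter names are hypothetical.

```python
import numpy as np

def td3_target(reward, done, q1_next, q2_next, gamma=0.99):
    """Clipped double-Q target: bootstrap from the smaller of the two target critics."""
    return reward + gamma * (1.0 - done) * np.minimum(q1_next, q2_next)

def smoothed_target_action(target_action, rng, noise_std=0.2, noise_clip=0.5,
                           low=-1.0, high=1.0):
    """Target-policy smoothing: add clipped Gaussian noise to the target action."""
    noise = rng.normal(0.0, noise_std, size=np.shape(target_action))
    noise = np.clip(noise, -noise_clip, noise_clip)
    return np.clip(target_action + noise, low, high)

def decode_action(action, n_devices, n_mcs):
    """Hypothetical decoding of a continuous action in [-1, 1]^(2N).

    The first N entries are per-device priority scores (higher is served earlier);
    the last N entries are rescaled and rounded to MCS indices 0..n_mcs-1.
    """
    action = np.asarray(action, dtype=float)
    scores, mcs_raw = action[:n_devices], action[n_devices:]
    order = np.argsort(-scores, kind="stable")  # order[k] = device served k-th
    mcs = np.rint((mcs_raw + 1.0) / 2.0 * (n_mcs - 1))
    return order, np.clip(mcs, 0, n_mcs - 1).astype(int)

order, mcs = decode_action([0.1, 0.9, -0.5, -1.0, 0.0, 1.0], n_devices=3, n_mcs=5)
```

Sorting scores is one simple way to obtain a valid permutation from an unconstrained vector; the delayed actor update (updating the policy less often than the critics) would live in the training loop and is omitted here.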

The novelty lies in coupling TD3 with Bayesian Optimization (BO). BO builds a Gaussian Process (GP) surrogate model of the relationship between TD3’s policy parameters (the weights of the actor and critics) and the performance metrics (sum‑rate and BLER violation). An acquisition function—specifically Expected Improvement (EI)—guides the selection of new policy parameters that are most likely to improve the objective while respecting the reliability constraint. Importantly, the acquisition function is modified to give higher weight to regions of the parameter space that have produced error samples, thereby directly addressing the sample‑imbalance problem.
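For reference, the standard Expected Improvement for a maximization problem can be computed from the GP posterior mean and standard deviation as sketched below. The `error_weighted_ei` function is only an illustrative guess at what "giving higher weight to error-sample regions" might look like; the summary does not give the paper's modified acquisition function, so the multiplicative form, the `error_fraction` input, and the `weight` parameter are all assumptions.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def expected_improvement(mu, sigma, best, xi=0.0):
    """Standard EI for maximization, given GP posterior mean/std at candidate points."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    gain = mu - best - xi
    safe_sigma = np.where(sigma > 0, sigma, 1.0)   # avoid division by zero
    z = gain / safe_sigma
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    ei = gain * cdf + sigma * pdf
    # With zero predictive uncertainty, the improvement is deterministic.
    return np.where(sigma > 0, ei, np.maximum(gain, 0.0))

def error_weighted_ei(ei, error_fraction, weight=1.0):
    """Hypothetical re-weighting: boost candidates whose past evaluations
    produced a larger fraction of error samples."""
    return np.asarray(ei, dtype=float) * (1.0 + weight * np.asarray(error_fraction, dtype=float))
```

A call such as `expected_improvement(mu, sigma, best=y_observed.max())` scores every candidate; the next configuration to try is the one with the largest (optionally re-weighted) score.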

Training Procedure

- BO‑Based Initialization: Before the first TD3 episode, the GP is queried over a coarse grid of policy parameters. The configuration with the highest expected improvement is used to initialize the actor and critic networks, providing a much better starting point than random initialization.

- Iterative BO‑TD3 Loop: After each training episode (or after a fixed number of episodes), the current policy’s performance is evaluated. The observed sum‑rate and any BLER violations are fed back to update the GP. The updated surrogate model then proposes a new region of the parameter space for the next TD3 exploration phase. This loop continues throughout training, allowing BO to steer TD3 away from regions that repeatedly cause BLER breaches and toward more promising configurations.
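The loop described in the two steps above can be sketched on a toy problem. Everything here is a simplification made for illustration: the search space is a single scalar `theta` on a grid rather than network weights, the GP is a minimal zero-noise-like model with an RBF kernel and unit prior variance, and `toy_score` is a hypothetical stand-in for "train TD3 with this configuration and return the sum-rate minus a penalty for BLER violations".

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def rbf_kernel(a, b, length=0.2):
    """Squared-exponential kernel between two 1-D arrays of points."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length) ** 2)

def gp_posterior(x_obs, y_obs, x_new, noise=1e-4):
    """Posterior mean and std of a GP with constant mean and unit prior variance."""
    y_mean = y_obs.mean()
    k = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    k_s = rbf_kernel(x_obs, x_new)
    solved = np.linalg.solve(k, k_s)               # K^{-1} K_s
    mu = y_mean + solved.T @ (y_obs - y_mean)
    var = 1.0 - np.sum(k_s * solved, axis=0)
    return mu, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mu, sigma, best):
    gain = mu - best
    z = gain / sigma
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return gain * cdf + sigma * pdf

def bo_loop(evaluate, candidates, n_init=3, n_iter=10, seed=0):
    """Evaluate a few random configurations, then repeatedly fit the GP
    and evaluate the candidate with the highest expected improvement."""
    rng = np.random.default_rng(seed)
    start = rng.choice(len(candidates), size=n_init, replace=False)
    x_obs = candidates[start]
    y_obs = np.array([evaluate(x) for x in x_obs])
    for _ in range(n_iter):
        mu, sigma = gp_posterior(x_obs, y_obs, candidates)
        x_next = candidates[np.argmax(expected_improvement(mu, sigma, y_obs.max()))]
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, evaluate(x_next))  # feed the result back to the GP
    best = int(np.argmax(y_obs))
    return x_obs[best], y_obs[best], y_obs

def toy_score(theta, penalty=5.0):
    """Hypothetical objective: a sum-rate proxy minus a BLER-violation penalty."""
    sum_rate = 1.0 - (theta - 0.7) ** 2
    violation = max(0.0, theta - 0.85)             # pretend large theta breaks BLER
    return sum_rate - penalty * violation

candidates = np.linspace(0.0, 1.0, 21)
best_theta, best_score, history = bo_loop(toy_score, candidates)
```

Folding the reliability constraint into the score as a penalty is one simple design choice; a constrained acquisition function or a separate GP for the violation rate would be alternatives.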

Simulation Setup and Results

The authors simulate a single‑cell IIoT network with 10–30 devices, SNR ranging from 0 to 20 dB, and CSI estimation error variance set to realistic values. They compare four schemes: (a) a fixed‑MCS baseline, (b) a DQN‑based discrete‑action scheduler, (c) pure TD3 without BO, and (d) the proposed BO‑TD3. Performance metrics include (i) convergence speed (episodes to reach 95 % of the final sum‑rate), (ii) final sum‑rate, and (iii) BLER violation probability.
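The convergence-speed metric can be computed from a per-episode sum-rate trace as sketched below. Taking the "final" sum-rate as the mean over the last `tail` episodes is an assumption made here, since the summary does not say how the final value is defined, and the sketch assumes positive sum-rates.

```python
import numpy as np

def episodes_to_converge(sum_rates, fraction=0.95, tail=10):
    """Number of episodes until the sum-rate first reaches `fraction`
    of the final value, taken as the mean of the last `tail` episodes."""
    rates = np.asarray(sum_rates, dtype=float)
    final = rates[-tail:].mean()
    hits = np.nonzero(rates >= fraction * final)[0]
    return int(hits[0]) + 1 if hits.size else None

# Example trace that plateaus at 5.0: the threshold 4.75 is first met in episode 5.
n_episodes = episodes_to_converge([1, 2, 3, 4, 5, 5, 5, 5], tail=3)
```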

Key findings:

- BO‑TD3 converges roughly 30 % faster than pure TD3 and more than 50 % faster than DQN.

- The final sum‑rate of BO‑TD3 exceeds that of the fixed‑MCS baseline by 12–18 %, demonstrating the benefit of jointly optimizing scheduling order and MCS in a continuous space.

- The BLER violation probability of BO‑TD3 remains below 0.5 % at all tested SNR levels, satisfying the URLLC reliability requirement, whereas pure TD3 occasionally exceeds the 10⁻⁵ BLER target due to insufficient exploration of error‑rich regions.

- The DQN approach, constrained to a discrete set of MCS levels, yields the lowest sum‑rate and suffers from slower learning because it cannot fine‑tune the ordering of devices.

Conclusions and Future Work

The paper makes four principal contributions: (1) formulation of a joint link‑adaptation and device‑scheduling problem under imperfect CSI and strict URLLC constraints; (2) integration of BO with TD3 to provide a globally informed, sample‑balanced learning direction; (3) a novel acquisition‑function design that emphasizes error‑sample regions, effectively mitigating the class‑imbalance issue inherent in URLLC datasets; and (4) extensive simulation evidence that the proposed method achieves faster convergence and higher sum‑rate while respecting reliability limits.

The authors suggest that future research could extend the framework to multi‑cell coordination, incorporate device mobility models, and validate the algorithm on real‑time testbeds with hardware‑in‑the‑loop CSI estimation. Such extensions would further demonstrate the practicality of BO‑driven DRL for next‑generation industrial wireless networks.
