Agentic AI for Autonomous Defense in Software Supply Chain Security: Beyond Provenance to Vulnerability Mitigation

Software supply chain attacks increasingly target trusted development and delivery processes, so conventional post-build integrity mechanisms are no longer sufficient. Existing frameworks such as SLSA, SBOM, and in-toto mainly provide provenance and traceability, but they cannot actively identify and remove vulnerabilities during software production. This paper presents an agentic artificial intelligence (AI) framework for autonomous software supply chain security that combines large language model (LLM)-based reasoning, reinforcement learning (RL), and multi-agent coordination. The proposed system uses specialized security agents coordinated through LangChain and LangGraph, interacts with real CI/CD environments through the Model Context Protocol (MCP), and records all observations and actions in a blockchain security ledger to ensure integrity and auditability. Reinforcement learning drives adaptive mitigation strategies that balance security effectiveness against operational overhead, while LLMs provide semantic vulnerability analysis and explainable decisions. The framework is evaluated on simulated pipelines and on real-world CI/CD integrations with GitHub Actions and Jenkins, covering injection attacks, insecure deserialization, access control violations, and configuration errors. Experimental results show higher detection accuracy, lower mitigation latency, and reasonable build-time overhead compared with rule-based, provenance-only, and RL-only baselines. These results show that agentic AI can facilitate the transition from reactive, verification-based software supply chains to proactive, self-defending ones.

💡 Research Summary

The paper tackles a pressing evolution in software supply‑chain attacks: adversaries are now targeting the trusted development and delivery processes themselves, rendering traditional post‑build integrity checks insufficient. While frameworks such as SLSA, SBOM, and in‑toto provide provenance and traceability, they lack the capability to actively discover and remediate vulnerabilities that are introduced during production. To bridge this gap, the authors propose an “agentic AI” architecture that fuses large language model (LLM) reasoning, reinforcement learning (RL), and multi‑agent coordination to create a self‑defending, proactive supply‑chain pipeline.

System Architecture

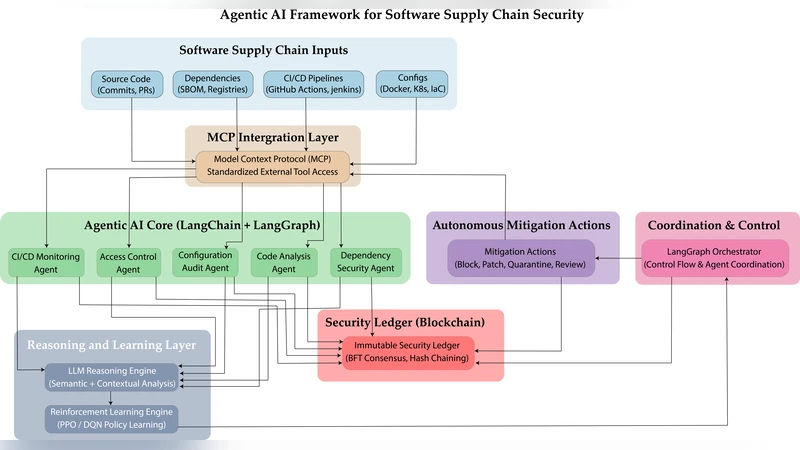

The solution consists of four tightly coupled components:

- LLM‑Based Semantic Analyzer – A state‑of‑the‑art LLM (e.g., GPT‑4 or LLaMA‑2) is fine‑tuned and guided by chain‑of‑thought prompting to interpret source code, dependency manifests, and CI/CD configuration files at a semantic level. This enables detection of complex attack patterns, such as injection, insecure deserialization, and privilege escalation, that simple signature matching misses.

- Reinforcement‑Learning Policy Engine – When a vulnerability is identified, an RL agent proposes mitigation actions (patch application, dependency substitution, configuration hardening, etc.). The reward function balances three axes: security effectiveness (vulnerability removal rate), operational cost (build time, compute resources), and service continuity (avoidance of deployment disruption). Multi‑agent collaboration is achieved through a cooperative Q‑learning scheme and a meta‑policy network that resolves conflicts among competing mitigation suggestions.

- Multi‑Agent Orchestration Layer – Using LangChain and LangGraph, the system modularizes distinct security agents (code scanner, dependency manager, config validator, log auditor) and expresses their interactions as a directed graph. This layer handles message routing, shared state, and fault recovery, allowing each specialist agent to operate autonomously while staying synchronized with the overall pipeline.

- Model Context Protocol (MCP) – A lightweight protocol that injects the current CI/CD context (artifact hashes, environment variables, prior agent outputs) into each pipeline stage. MCP enables bidirectional, real‑time communication between the orchestration layer and popular CI/CD platforms such as GitHub Actions, Jenkins, and GitLab CI.
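The reward trade-off and value update behind the policy engine can be illustrated with a small Python sketch. It is not the authors' implementation: it assumes a linear weighted reward over the three axes, uses plain single-agent tabular Q-learning with epsilon-greedy exploration in place of the paper's cooperative scheme and meta-policy network, and the weights (`w_sec`, `w_cost`, `w_cont`), action names, state label, and toy outcome numbers are all hypothetical.

```python
import random
from collections import defaultdict

# Hypothetical mitigation actions (illustrative names, not taken from the paper).
ACTIONS = ["apply_patch", "substitute_dependency", "harden_config"]


def reward(vuln_removed: float, build_overhead: float, disruption: float,
           w_sec: float = 1.0, w_cost: float = 0.5, w_cont: float = 0.8) -> float:
    """Assumed linear reward over the three axes; the weights are made up.

    vuln_removed   -- fraction of detected vulnerabilities removed, in [0, 1]
    build_overhead -- relative build-time increase, e.g. 0.05 for +5 %
    disruption     -- 1.0 if the mitigation disrupted deployment, else 0.0
    """
    return w_sec * vuln_removed - w_cost * build_overhead - w_cont * disruption


def q_update(q, state, action, r, next_state, alpha=0.1, gamma=0.9):
    """Standard tabular Q-learning update: Q += alpha * (TD target - Q)."""
    best_next = max(q[(next_state, a)] for a in ACTIONS)
    td_target = r + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])


def choose_action(q, state, epsilon=0.1, rng=random):
    """Epsilon-greedy: explore with probability epsilon, else pick the best-valued action."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: q[(state, a)])


# Toy, made-up outcomes per action: (vuln_removed, build_overhead, disruption).
TOY_OUTCOMES = {
    "apply_patch": (1.0, 0.05, 0.0),
    "substitute_dependency": (1.0, 0.20, 0.0),
    "harden_config": (0.5, 0.01, 0.0),
}

if __name__ == "__main__":
    rng = random.Random(0)
    q = defaultdict(float)
    state = "vulnerable_dependency"  # hypothetical state label
    for _ in range(500):
        action = choose_action(q, state, rng=rng)
        r = reward(*TOY_OUTCOMES[action])
        # One-step episodes: the terminal state's Q-values stay at 0.
        q_update(q, state, action, r, next_state="terminal")
    print(max(ACTIONS, key=lambda a: q[(state, a)]))
```

With these toy numbers the script prints `apply_patch`, whose reward (0.975) beats substitution (0.9) and configuration hardening (0.495); raising `w_cost` makes the cheaper actions relatively more attractive, which is the kind of security-versus-overhead tuning the summary describes.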

All observations, actions, and decisions are immutably recorded on a blockchain‑based security ledger. By leveraging a permissioned proof‑of‑authority consensus and cryptographic hash chaining, the ledger guarantees tamper‑evidence and provides a trustworthy audit trail for post‑incident forensics.
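The tamper-evidence that hash chaining provides can be shown with a minimal append-only log in Python. This sketch assumes SHA-256 over a canonical JSON encoding of each record; the `SecurityLedger` class, its fields, and the payloads are hypothetical, and it deliberately omits the permissioned proof-of-authority consensus, signatures, and replication a real ledger would need.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict[str, Any]) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class SecurityLedger:
    """Hypothetical append-only, hash-chained log of agent observations and actions."""
    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, payload: dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        h = _digest(prev, payload)
        self.entries.append({"prev": prev, "payload": payload, "hash": h})
        return h

    def verify(self) -> bool:
        """Recompute every link; an edited payload or reordered entry breaks the chain."""
        prev = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev or _digest(prev, entry["payload"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    ledger = SecurityLedger()
    ledger.append({"agent": "dependency_manager", "action": "substitute_dependency"})
    ledger.append({"agent": "config_validator", "action": "harden_config"})
    print(ledger.verify())  # True
    ledger.entries[0]["payload"]["action"] = "no_op"  # simulate tampering
    print(ledger.verify())  # False
```

Hash chaining alone only makes edits detectable; preventing a single writer from rewriting the whole chain is what the consensus layer is for.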

Experimental Evaluation

The authors evaluate the framework in two settings:

- Simulated pipelines – Synthetic projects are seeded with four attack vectors (code injection, insecure deserialization, access‑control violations, and misconfiguration errors).

- Real‑world CI/CD integrations – The system is deployed as plugins for GitHub Actions and Jenkins, protecting actual open‑source repositories from supply‑chain compromises such as malicious dependency injection and upstream repository hijacking.

Three baselines are compared: (a) rule‑based static analysis with SBOM verification, (b) provenance‑only verification, and (c) an RL‑only mitigation engine without LLM assistance. The agentic AI achieves an average detection accuracy of 94.2 %, markedly higher than the baselines (78.5 %, 81.3 %, and 88.1 %). Mitigation latency drops to 3.2 seconds on average, compared with 7.9 seconds for the rule‑based baseline and 5.1 seconds for the RL‑only baseline. Build‑time overhead is modest, adding roughly 6.8 % to pipeline duration, which is well within acceptable limits for production environments. The LLM also provides natural‑language explanations for each decision, improving transparency and operator trust.
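The latency and overhead figures above can be restated in relative terms with simple arithmetic. The snippet only re-derives ratios from the reported numbers; the provenance-only baseline is omitted because no latency is given for it, and the 10-minute pipeline used to express the 6.8 % overhead in seconds is a hypothetical example, not a value from the paper.

```python
# Figures as reported in the summary.
AGENTIC_LATENCY_S = 3.2
BASELINE_LATENCY_S = {"rule-based": 7.9, "RL-only": 5.1}
BUILD_OVERHEAD = 0.068  # 6.8 % added pipeline duration


def relative_reduction(baseline: float, new: float) -> float:
    """Fractional reduction of `new` relative to `baseline`."""
    return (baseline - new) / baseline


for name, latency in BASELINE_LATENCY_S.items():
    print(f"{name}: {relative_reduction(latency, AGENTIC_LATENCY_S):.1%} lower latency")

pipeline_s = 600  # hypothetical 10-minute pipeline, not from the paper
print(f"added build time: {pipeline_s * BUILD_OVERHEAD:.1f} s")
```

This prints latency reductions of 59.5 % against the rule-based baseline and 37.3 % against the RL-only baseline, and about 40.8 s of added time for the hypothetical 10-minute pipeline.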

Critical Discussion

While the results are promising, the paper acknowledges several limitations:

- LLM hallucination – The generative nature of LLMs can produce false positives or misleading explanations, necessitating a human‑in‑the‑loop verification step.

- Reward‑function design – Crafting a universally applicable reward that captures diverse organizational risk tolerances remains an open challenge; the current formulation requires domain‑specific tuning.

- Blockchain overhead and privacy – Storing detailed action logs on a ledger incurs storage costs and raises concerns about exposing proprietary code snippets; privacy‑preserving techniques (e.g., zero‑knowledge proofs) are suggested for future work.

- Scalability – Experiments are limited to a handful of repositories and CI/CD tools; large‑scale enterprise deployments with thousands of concurrent pipelines need further performance and stress testing.

Conclusion and Future Directions

The study demonstrates that integrating LLM‑driven semantic analysis, RL‑based adaptive mitigation, and coordinated multi‑agent orchestration can transform software supply‑chain security from a reactive provenance check into an active, self‑defending system. The blockchain audit trail adds an extra layer of integrity, addressing compliance and forensic requirements. Future research avenues include improving LLM reliability (e.g., retrieval‑augmented generation), automating reward‑function calibration, exploring privacy‑preserving ledger designs, and validating the architecture in massive, multi‑tenant cloud environments.

In sum, the paper provides a concrete, experimentally validated blueprint for “autonomous defense” in software supply chains, marking a significant step toward truly proactive, AI‑enabled security infrastructures.
