📝 Original Info

- Title: HalluMat: Detecting Hallucinations in LLM-Generated Materials Science Content Through Multi-Stage Verification

- ArXiv ID: 2512.22396

- Date: 2025-12-26

- Authors: Bhanu Prakash Vangala, Sajid Mahmud, Pawan Neupane, Joel Selvaraj, Jianlin Cheng

📝 Abstract

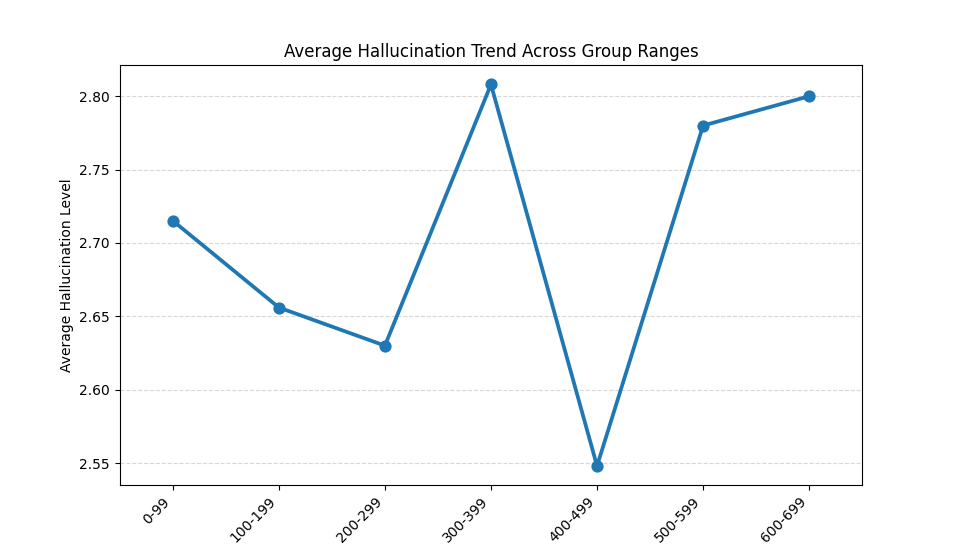

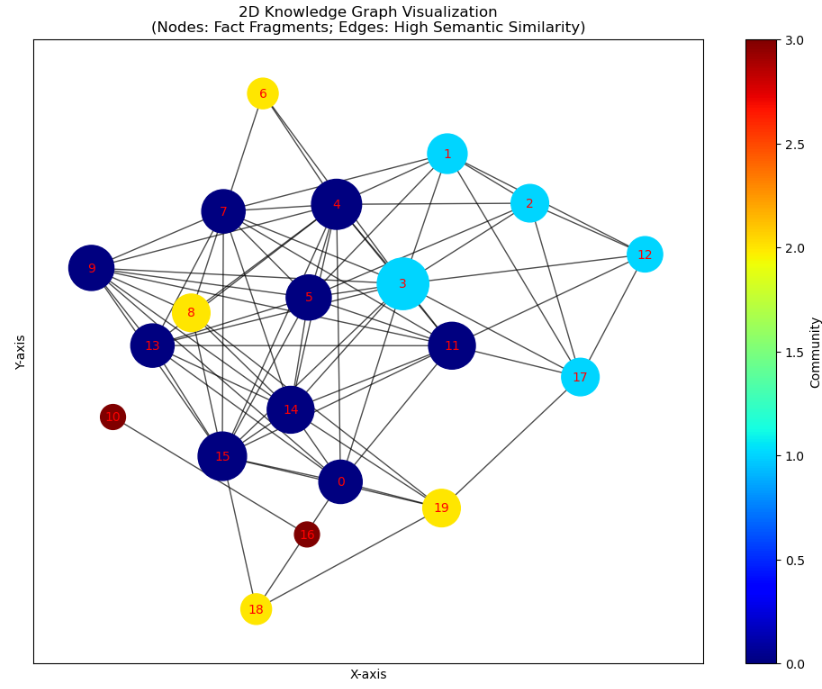

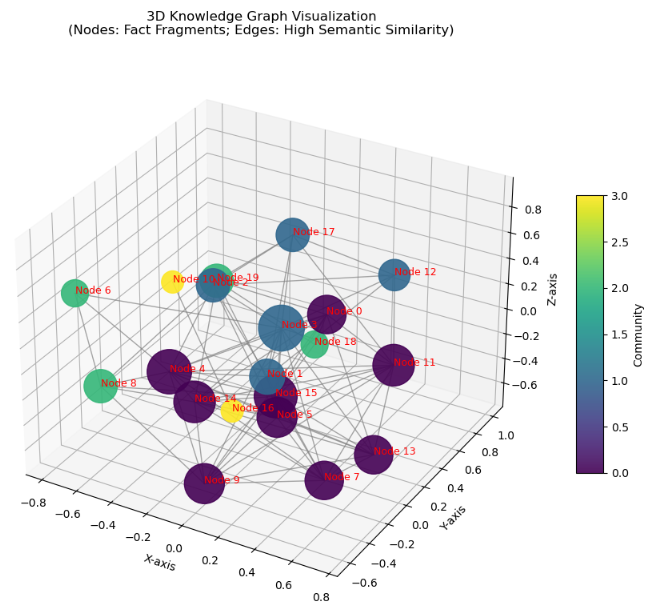

Artificial Intelligence (AI), particularly Large Language Models (LLMs), is transforming scientific discovery, enabling rapid knowledge generation and hypothesis formulation. However, a critical challenge is hallucination, where LLMs generate factually incorrect or misleading information, compromising research integrity. To address this, we introduce HalluMatData, a benchmark dataset for evaluating hallucination detection methods, factual consistency, and response robustness in AI-generated materials science content. Alongside this, we propose HalluMatDetector, a multi-stage hallucination detection framework that integrates intrinsic verification, multi-source retrieval, contradiction graph analysis, and metric-based assessment to detect and mitigate LLM hallucinations. Our findings reveal that hallucination levels vary significantly across materials science subdomains, with high-entropy queries exhibiting greater factual inconsistencies. By utilizing HalluMatDetector's verification pipeline, we reduce hallucination rates by 30% compared to standard LLM outputs. Furthermore, we introduce the Paraphrased Hallucination Consistency Score (PHCS) to quantify inconsistencies in LLM responses across semantically equivalent queries, offering deeper insights into model reliability.

📄 Full Content

HalluMat: Detecting Hallucinations in LLM-Generated Materials Science Content Through Multi-Stage Verification

Bhanu Prakash Vangala¹, Sajid Mahmud¹, Pawan Neupane¹, Joel Selvaraj¹, Jianlin Cheng¹*

¹Department of Electrical Engineering and Computer Science

University of Missouri

Columbia, Missouri, USA

{bv3hz, smmkk, pngkg, jsbrq, chengji}@missouri.edu

Abstract

Artificial Intelligence (AI), particularly Large Language Models (LLMs), is transforming scientific discovery, enabling rapid knowledge generation and hypothesis formulation. However, a critical challenge is hallucination, where LLMs generate factually incorrect or misleading information, compromising research integrity. To address this, we introduce HalluMatData, a benchmark dataset for evaluating hallucination detection methods, factual consistency, and response robustness in AI-generated materials science content. Alongside, we propose HalluMatDetector, a multi-stage hallucination detection framework integrating intrinsic verification, multi-source retrieval, contradiction graph analysis, and metric-based assessment to detect and mitigate LLM hallucinations. Our findings reveal that hallucination levels vary significantly across materials science subdomains, with high-entropy queries exhibiting greater factual inconsistencies. By utilizing HalluMatDetector’s verification pipeline, we reduce hallucination rates by 30% compared to standard LLM outputs. Furthermore, we introduce the Paraphrased Hallucination Consistency Score (PHCS) to quantify inconsistencies in LLM responses across semantically equivalent queries, offering deeper insights into model reliability. Combining knowledge graph-based contradiction detection and fine-grained factual verification, our dataset and framework establish a more reliable, interpretable, and scientifically rigorous approach for AI-driven discoveries.
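The idea of scoring consistency across semantically equivalent queries can be illustrated with a small sketch. This excerpt does not give the formula for PHCS, so the code below is not the paper's metric: it assumes, purely for illustration, that consistency is the mean pairwise token-level Jaccard similarity between the responses an LLM returns for paraphrases of one question. The function names `jaccard` and `paraphrase_consistency` are hypothetical.

```python
from itertools import combinations


def jaccard(a: str, b: str) -> float:
    """Token-level Jaccard similarity between two responses (0.0 to 1.0)."""
    tokens_a = set(a.lower().split())
    tokens_b = set(b.lower().split())
    if not tokens_a and not tokens_b:
        return 1.0  # two empty responses are treated as identical
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def paraphrase_consistency(responses: list[str]) -> float:
    """Mean pairwise similarity of responses to paraphrased queries.

    ASSUMPTION: an illustrative stand-in for a paraphrase consistency
    score, not the PHCS definition from the paper.
    """
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0  # fewer than two responses: nothing to disagree with
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    # Hypothetical responses to three paraphrases of the same question.
    answers = [
        "the band gap of silicon is about 1.1 eV",
        "silicon has a band gap of about 1.1 eV",
        "the band gap of silicon is about 3.4 eV",
    ]
    print(f"consistency = {paraphrase_consistency(answers):.2f}")
```

A low score flags a query whose paraphrases produce diverging answers. Lexical overlap is a crude proxy, and a practical system would more likely compare responses with sentence embeddings or an entailment model.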

Introduction

Artificial Intelligence has become a transformative force in materials science discovery, significantly accelerating the identification and development of new materials. By leveraging advanced algorithms and computational models, AI enables researchers to predict material properties, optimize processes, and expedite experimental workflows, thereby reducing the time and cost associated with traditional trial-and-error methods. For instance, AI-driven platforms have been instrumental in discovering novel materials with applications ranging from energy storage to catalysis (Pyzer-Knapp 2022; Jain et al. 2013).

Despite these advancements, a critical challenge remains: hallucinations in AI-generated outputs. In the context of AI, hallucination refers to the generation of information that appears plausible but is factually incorrect or nonsensical (Sun et al. 2024; Farquhar et al. 2024). This phenomenon poses significant risks in materials science, where erroneous data can lead to misguided research directions, wasted resources, and potential safety hazards. For example, an AI model might predict a stable material configuration that, upon experimental validation, proves to be unfeasible, thereby undermining the reliability of AI-assisted discoveries.

*Corresponding Author

Copyright © 2025, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

Current methods for detecting and mitigating AI hallucinations are limited, particularly in specialized fields like materials science (Farquhar et al. 2024; Chen et al. 2024). Many existing approaches rely on external validation against comprehensive datasets or human expertise, which may not always be available or practical. Moreover, these methods often lack the specificity required to address the unique challenges associated with materials science data, such as complex chemical compositions and diverse property spaces (Jamaluddin, Gaffar, and Din 2023).

Such inaccuracies undermine trust in AI-driven scientific discovery, making it difficult for researchers to fully integrate AI-generated insights into real-world materials research (Tshitoyan et al. 2019). Without a reliable framework to detect and mitigate hallucinations, AI adoption in materials science remains limited, as researchers cannot confidently rely on AI-generated hypotheses, material compositions, or experimental predictions. Addressing these challenges requires a domain-specific approach that goes beyond generic hallucination detection techniques used in NLP, ensuring that AI-generated scientific knowledge meets the rigorous standards of materials research.

While hallucination detection methods have been explored in broader AI applications (Farquhar et al. 2024; Chen et al. 2024; Li et al. 2023), existing approaches are not well-adapted for materials science. Most detection frameworks rely heavily on external retrieval-based fact-checking, using large-scale structured databases. However, comprehensive, domain-specific databases are limited in materia

…(Full text truncated)…
