Compliance Rating Scheme: A Data Provenance Framework for Generative AI Datasets

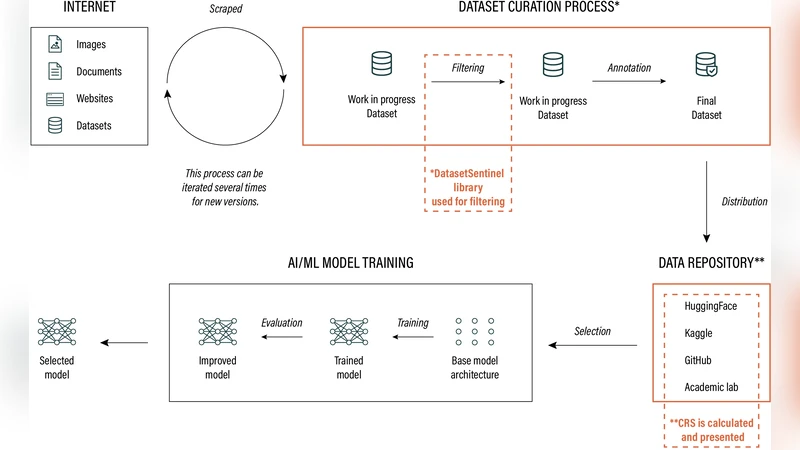

Generative Artificial Intelligence (GAI) has experienced exponential growth in recent years, partly facilitated by the abundance of large-scale open-source datasets. These datasets are often built using unrestricted and opaque data collection practices. While most literature focuses on the development and applications of GAI models, the ethical and legal considerations surrounding the creation of these datasets are often neglected. In addition, as datasets are shared, edited, and further reproduced online, information about their origin, legitimacy, and safety often gets lost. To address this gap, we introduce the Compliance Rating Scheme (CRS), a framework designed to evaluate dataset compliance with critical transparency, accountability, and security principles. We also release an open-source Python library built around data provenance technology to implement this framework, allowing for seamless integration into existing dataset-processing and AI training pipelines. The library is both reactive and proactive: in addition to evaluating the CRS of existing datasets, it also informs the responsible scraping and construction of new datasets.

💡 Research Summary

The paper addresses a critical gap in the generative AI (GAI) ecosystem. While most research concentrates on model architectures and applications, the provenance, legality, and ethical soundness of the massive datasets that fuel these models receive far less attention. Unrestricted and opaque data-collection practices produce datasets whose origin, licensing, and safety information is often lost as the datasets are shared, edited, and redistributed across the internet. To remedy this, the authors propose the Compliance Rating Scheme (CRS), a structured framework that evaluates datasets against three pillars (Transparency, Accountability, and Security), and accompany it with an open-source Python library that operationalizes the framework through data-provenance technology.

CRS defines twelve concrete metrics grouped under the three pillars. Transparency metrics require explicit metadata such as source URLs, collection timestamps, licensing terms, and copyright notices. Accountability metrics capture contractual obligations between data providers and downstream users, logs of cleaning or labeling operations, and version-control histories that record every modification. Security metrics assess, among other things, the removal rate of personally identifiable information (PII), the likelihood of copyright infringement, and the detection of malicious or illegal content using automated scanners. Each metric is scored on a 0-5 scale, and a weighted average of these scores yields the overall CRS compliance score.
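The summary does not list the individual metric names or their weights, so the sketch below uses hypothetical ones (`source_urls`, `license_terms`, `change_log`, `pii_removal`) purely to illustrate the aggregation step: each 0-5 score is multiplied by its weight, the products are summed, and the sum is divided by the total weight. The `MetricScore` structure, the `deficiencies` helper, and its threshold of 3 are likewise assumptions, standing in for the kind of gap list a compliance report might surface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricScore:
    """One CRS metric result (hypothetical structure, not the library's)."""
    name: str
    pillar: str    # "transparency", "accountability" or "security"
    score: int     # 0-5, as described in the summary
    weight: float  # assumed non-negative importance weight


def crs_score(metrics: list[MetricScore]) -> float:
    """Weighted average of 0-5 metric scores (assumed aggregation rule)."""
    for m in metrics:
        if not 0 <= m.score <= 5:
            raise ValueError(f"{m.name}: score {m.score} is outside 0-5")
        if m.weight < 0:
            raise ValueError(f"{m.name}: weight must be non-negative")
    total_weight = sum(m.weight for m in metrics)
    if total_weight == 0:
        raise ValueError("at least one metric needs a positive weight")
    return sum(m.score * m.weight for m in metrics) / total_weight


def deficiencies(metrics: list[MetricScore], threshold: int = 3) -> list[str]:
    """Names of metrics scoring below a (hypothetical) remediation threshold."""
    return sorted(m.name for m in metrics if m.score < threshold)


# Illustrative values only; they are not taken from the paper.
example = [
    MetricScore("source_urls", "transparency", 5, 2.0),
    MetricScore("license_terms", "transparency", 2, 2.0),
    MetricScore("change_log", "accountability", 1, 1.0),
    MetricScore("pii_removal", "security", 4, 3.0),
]
print(crs_score(example))     # 27 / 8 = 3.375
print(deficiencies(example))  # ['change_log', 'license_terms']
```

A weighted mean keeps the overall score on the same 0-5 scale as the individual metrics, which makes it directly comparable to them.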

The accompanying library implements these concepts by embedding a hash chain and a structured JSON-LD metadata block directly into dataset files. The hash chain works like a lightweight blockchain: each change-log entry is linked to the hash of the previous one, making the log tamper-evident so that integrity can be verified whenever a dataset is copied, altered, or redistributed. The metadata block stores the required provenance information in a machine-readable format, facilitating automated downstream checks. The library operates in two complementary modes. In Reactive Mode, it scans existing datasets, computes their CRS scores, and generates detailed reports highlighting deficiencies and suggested remediation steps. In Proactive Mode, it integrates with web crawlers to parse robots.txt files, copyright statements, and data-use policies in real time; if a potential violation is detected, the crawler can abort the scrape or raise a warning, thereby enforcing responsible data acquisition from the outset.
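To make the hash-chain idea concrete, here is a minimal sketch, assuming SHA-256 over canonical JSON. Neither choice, nor the schema.org `Dataset` vocabulary used for the JSON-LD block, is confirmed by the summary, and `append_entry`, `verify`, and the record fields are hypothetical names. Each entry stores the hash of its predecessor, so rewriting an earlier record without recomputing every later hash is detected.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so that equal records
    # always serialize, and therefore hash, identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain: list[dict], record: dict) -> list[dict]:
    """Return a new chain with `record` linked to the current head."""
    prev = chain[-1]["hash"] if chain else GENESIS
    return chain + [{"record": record, "prev": prev, "hash": _digest(prev, record)}]


def verify(chain: list[dict]) -> bool:
    """Recompute every link; any edited record or broken link fails."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


# Hypothetical JSON-LD provenance block using schema.org terms.
metadata = {
    "@context": "https://schema.org",
    "@type": "Dataset",
    "name": "toy-corpus",
    "license": "https://creativecommons.org/licenses/by/4.0/",
    "isBasedOn": "https://example.org/source-page",
}

chain = append_entry([], {"op": "create", "metadata": metadata})
chain = append_entry(chain, {"op": "remove_pii", "rows_dropped": 12})
print(verify(chain))  # True

# Silently rewriting an earlier record is detected.
tampered = [chain[0], {**chain[1], "record": {"op": "remove_pii", "rows_dropped": 0}}]
print(verify(tampered))  # False
```

Note that a bare hash chain is tamper-evident rather than tamper-proof: anyone able to rewrite the whole chain can recompute every hash, which is why such logs are usually anchored by a signed or externally published head hash.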

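For the robots.txt part of Proactive Mode, Python's standard-library `urllib.robotparser` already answers whether a URL may be fetched. The sketch below parses an inline robots.txt (so it runs offline) and then either aborts or warns, mirroring the two behaviours described above. The `guard` function, the `ScrapeBlocked` exception, and the user-agent string are hypothetical, and checks on copyright statements or data-use policies are not covered.

```python
import warnings
from urllib.robotparser import RobotFileParser


class ScrapeBlocked(Exception):
    """Raised when a URL is disallowed and the crawler is set to abort."""


def guard(rp: RobotFileParser, agent: str, url: str, abort: bool = True) -> bool:
    """Return True if `url` may be fetched; otherwise abort or warn."""
    if rp.can_fetch(agent, url):
        return True
    message = f"robots.txt disallows {url} for {agent!r}"
    if abort:
        raise ScrapeBlocked(message)
    warnings.warn(message + "; skipping")
    return False


# An inline robots.txt keeps the example offline; a live crawler would call
# rp.set_url("https://example.org/robots.txt") and rp.read() instead.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

AGENT = "crs-demo-bot"  # hypothetical user-agent string
rp = RobotFileParser()
rp.parse(ROBOTS_TXT.splitlines())

print(guard(rp, AGENT, "https://example.org/public/data.csv"))  # True
try:
    guard(rp, AGENT, "https://example.org/private/data.csv")
except ScrapeBlocked as exc:
    print("aborted:", exc)
```

Passing `abort=False` turns the hard stop into a warning, which suits exploratory crawls where a human reviews skipped URLs afterwards.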
To validate CRS, the authors applied it to three widely used large‑scale corpora: LAION‑5B, Common Crawl, and a Korean‑language Wikipedia dump. LAION‑5B received low security scores because its licensing information was incomplete and its PII detection rate was only 2.3 %. Common Crawl scored poorly on accountability due to the absence of versioning and change‑log records. In contrast, the Korean Wikipedia dataset, which already includes rich metadata and clear copyright attribution, achieved high marks across all pillars. These empirical results demonstrate that CRS can quantitatively surface risk factors, prioritize remediation, and guide dataset selection for researchers and industry practitioners.

Beyond technical assessment, CRS is explicitly aligned with emerging regulatory frameworks. The authors map CRS metrics to key provisions of the European Union’s AI Act (high‑risk data requirements) and to draft provisions of the United States’ AI accountability legislation that mandate data‑source transparency. Consequently, a dataset’s CRS score can serve not only as a quality indicator but also as evidence of regulatory compliance, supporting legal risk assessments and audit processes.

The library is released under an MIT license and follows a plugin architecture, allowing users to extend it with domain‑specific PII detectors, custom copyright checkers, or organization‑specific policy modules. The authors envision a broader ecosystem in which CRS scores trigger the issuance of a “Compliance Badge” for datasets, and where automated legal‑advice APIs keep the framework up‑to‑date with evolving statutes.

In summary, the paper delivers a practical, end‑to‑end solution for embedding provenance, accountability, and security into the lifecycle of generative‑AI datasets. By providing both a rigorous evaluation scheme and an open‑source tool that can be integrated into existing data pipelines, the work offers a concrete pathway to mitigate ethical and legal risks, improve dataset quality, and foster greater trust in the rapidly expanding GAI landscape.
