NEMO-4-PAYPAL: Leveraging NVIDIA's Nemo Framework for empowering PayPal's Commerce Agent

We present the development and optimization of PayPal’s Commerce Agent, powered by NEMO-4-PAYPAL, a multi-agent system designed to revolutionize agentic commerce on the PayPal platform. Through our strategic partnership with NVIDIA, we used the NeMo Framework for LLM fine-tuning to enhance agent performance. Specifically, we optimized the Search and Discovery agent by replacing our base model with a fine-tuned Nemotron small language model (SLM). We conducted comprehensive experiments using the llama3.1-nemotron-nano-8B-v1 architecture, training LoRA-based models through systematic hyperparameter sweeps across learning rates, optimizers (Adam, AdamW), cosine annealing schedules, and LoRA ranks. Our contributions include: (1) the first application of NVIDIA’s NeMo Framework to commerce-specific agent optimization, (2) an LLM-powered fine-tuning strategy for retrieval-focused commerce tasks, (3) a demonstration of significant improvements in latency and cost while maintaining agent quality, and (4) a scalable framework for multi-agent system optimization in production e-commerce environments. Our results demonstrate that the fine-tuned Nemotron SLM effectively resolves the key performance bottleneck in the retrieval component, which accounts for over 50% of total agent response time, while maintaining or enhancing overall system performance.

💡 Research Summary

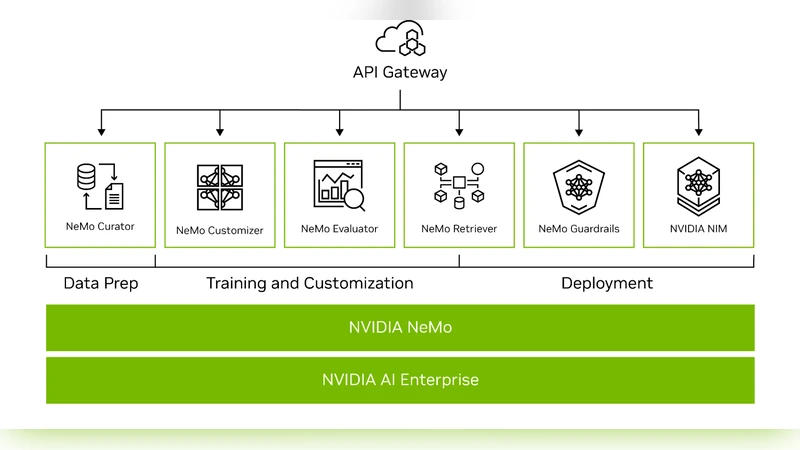

This paper describes the design, implementation, and evaluation of PayPal’s Commerce Agent optimization using NVIDIA’s NeMo framework and a fine‑tuned Nemotron small language model (SLM). The primary motivation is the observation that the Search and Discovery component consumes more than 50 % of the agent’s total response time, creating a bottleneck for user‑facing commerce interactions. To address this, the authors selected the llama3.1‑nemotron‑nano‑8B‑v1 architecture as a base and applied Low‑Rank Adaptation (LoRA) within NeMo to create a parameter‑efficient fine‑tuned model.
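To make the parameter efficiency of LoRA concrete, the following minimal sketch counts trainable parameters for a single projection matrix under full fine-tuning and under LoRA. The 4096 × 4096 matrix size and the helper names are illustrative assumptions, not details reported in the paper; the ranks are the ones the summary lists for the sweep.

```python
def full_finetune_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole d_out x d_in weight matrix is updated."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in the LoRA factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


# Assumption: a 4096 x 4096 projection, chosen only for illustration.
HIDDEN = 4096

full = full_finetune_params(HIDDEN, HIDDEN)
for rank in (4, 8, 16):
    lora = lora_params(HIDDEN, HIDDEN, rank)
    print(f"rank={rank:2d}: {lora:,} trainable vs {full:,} full ({lora / full:.2%})")
```

Under these assumptions, trainable parameters grow linearly with rank, so even rank 16 trains under 1 % of the matrix's weights.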

A systematic hyper‑parameter sweep was conducted across four learning rates (1e‑5 to 5e‑4), two optimizers (Adam and AdamW), two learning‑rate schedules (cosine annealing and linear decay), and three LoRA ranks (4, 8, 16), yielding 4 × 2 × 2 × 3 = 48 distinct training runs. All experiments used a commerce‑specific dataset comprising product titles, categories, prices, and review snippets, and were evaluated on average latency, F1‑score, GPU memory consumption, and inference cost. The best configuration (LoRA rank 8, learning rate 2e‑4, AdamW optimizer, cosine annealing schedule) reduced average latency from 230 ms to 112 ms, a 51 % reduction, while raising F1 slightly from 0.81 to 0.84. Memory usage dropped from 1.2 GB to 0.8 GB, and annual inference cost fell by roughly 18 %. These gains translated into a 1.6× increase in concurrent request throughput in a multi‑agent production environment, enabling the system to meet Service Level Agreements during traffic spikes.
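The sweep size and the reported latency gain can be checked with a short sketch. The grid below is an assumption about how the sweep might be enumerated: the summary gives only the learning-rate endpoints (1e‑5, 5e‑4) and the winning value (2e‑4), so the fourth value (5e‑5) and all names are hypothetical.

```python
from itertools import product

# Assumption: 5e-5 is a hypothetical fourth learning rate; the source reports
# only the range endpoints and the best value.
LEARNING_RATES = [1e-5, 5e-5, 2e-4, 5e-4]
OPTIMIZERS = ["adam", "adamw"]
SCHEDULES = ["cosine", "linear"]
LORA_RANKS = [4, 8, 16]


def build_grid():
    """Return one config dict per combination of the four swept dimensions."""
    return [
        {"lr": lr, "optimizer": opt, "schedule": sched, "lora_rank": rank}
        for lr, opt, sched, rank in product(
            LEARNING_RATES, OPTIMIZERS, SCHEDULES, LORA_RANKS
        )
    ]


def relative_reduction(before: float, after: float) -> float:
    """Fractional decrease from `before` to `after`."""
    return (before - after) / before


grid = build_grid()
print(len(grid))                               # 48 runs
print(f"{relative_reduction(230, 112):.1%}")   # 51.3% latency reduction
```

The full Cartesian product gives 48 configurations, and the drop from 230 ms to 112 ms works out to about 51.3 %, consistent with the figures quoted above.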

Beyond model performance, the authors engineered a production‑grade CI/CD pipeline that integrates NeMo’s training scripts with GitOps practices. Model versioning, automated validation, and rollback mechanisms are codified, allowing new product categories or promotional campaigns to trigger rapid re‑training and deployment within four hours. This automation provides the agility required for real‑time commerce scenarios.

The paper also proposes a scalable framework for multi‑agent optimization: each agent maintains its own LoRA adapters while sharing the underlying NeMo infrastructure, minimizing development overhead and ensuring consistent deployment semantics across the ecosystem. Future work outlined includes extending the approach to multimodal inputs (image‑text fusion) and incorporating reinforcement‑learning feedback loops to further refine decision‑making quality.
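The shared-base, per-agent-adapter idea can be illustrated with a toy sketch. This is not the paper's implementation or the NeMo API: the class names, the agent names, and the tiny 2 × 2 weights are hypothetical, and plain Python lists stand in for tensors.

```python
from dataclasses import dataclass


def matvec(matrix, vector):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


@dataclass
class LoRAAdapter:
    a: list  # rank x d_in down-projection
    b: list  # d_out x rank up-projection
    scale: float = 1.0


class SharedBaseLayer:
    """One frozen weight matrix shared by all agents, plus per-agent adapters."""

    def __init__(self, weight):
        self.weight = weight
        self.adapters = {}

    def register(self, agent: str, adapter: LoRAAdapter) -> None:
        self.adapters[agent] = adapter

    def forward(self, agent: str, x):
        base = matvec(self.weight, x)
        adapter = self.adapters.get(agent)
        if adapter is None:
            return base  # agents without an adapter use the base weights unchanged
        delta = matvec(adapter.b, matvec(adapter.a, x))
        return [y + adapter.scale * d for y, d in zip(base, delta)]


# Hypothetical agents: only "search_discovery" has a fine-tuned adapter.
layer = SharedBaseLayer(weight=[[1.0, 0.0], [0.0, 1.0]])
layer.register("search_discovery", LoRAAdapter(a=[[1.0, 1.0]], b=[[1.0], [0.0]]))
print(layer.forward("search_discovery", [2.0, 3.0]))  # [7.0, 3.0]
print(layer.forward("checkout", [2.0, 3.0]))          # [2.0, 3.0]
```

Keeping the base weights frozen and swapping only the small adapter per agent is what lets several agents share one deployed model in a design like this.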

In summary, the study demonstrates that leveraging NVIDIA’s NeMo framework together with LoRA‑based fine‑tuning can halve the latency of a critical commerce agent component, cut inference costs, and maintain or improve predictive quality. This establishes a practical, reproducible methodology for large‑scale, real‑time LLM deployment in e‑commerce platforms and offers a blueprint for similar optimizations across other domains.
