📝 Original Info

- Title: A Unified Definition of Hallucination: It’s The World Model, Stupid!

- ArXiv ID: 2512.21577

- Date: 2025-12-25

- Authors: Emmy Liu, Varun Gangal, Chelsea Zou, Michael Yu, Xiaoqi Huang, Alex Chang, Zhuofu Tao, Karan Singh, Sachin Kumar, Steven Y. Feng

📝 Abstract

Despite numerous attempts at mitigation since the inception of language models, hallucinations remain a persistent problem even in today's frontier LLMs. Why is this? We review existing definitions of hallucination and fold them into a single, unified definition wherein prior definitions are subsumed. We argue that hallucination can be unified by defining it as simply inaccurate (internal) world modeling, in a form where it is observable to the user. For example, stating a fact which contradicts a knowledge base OR producing a summary which contradicts the source. By varying the reference world model and conflict policy, our framework unifies prior definitions. We argue that this unified view is useful because it forces evaluations to clarify their assumed reference "world", distinguishes true hallucinations from planning or reward errors, and provides a common language for comparison across benchmarks and discussion of mitigation strategies. Building on this definition, we outline plans for a family of benchmarks using synthetic, fully specified reference world models to stress-test and improve world modeling components.


📄 Full Content

A Unified Definition of Hallucination: It’s The World Model, Stupid!

Emmy Liu 1†, Varun Gangal 2†, Chelsea Zou 3†, Michael Yu 4†, Xiaoqi Huang 4†, Alex Chang 4†, Zhuofu Tao 4†, Karan Singh 3†, Sachin Kumar 5†, Steven Y. Feng 3†

Abstract

Despite numerous attempts at mitigation since the inception of language models, hallucinations remain a persistent problem even in today’s frontier LLMs. Why is this? We review existing definitions of hallucination and fold them into a single, unified definition wherein prior definitions are subsumed. We argue that hallucination can be unified by defining it as simply inaccurate (internal) world modeling, in a form where it is observable to the user. For example, stating a fact which contradicts a knowledge base OR producing a summary which contradicts the source. By varying the reference world model and conflict policy, our framework unifies prior definitions. We argue that this unified view is useful because it forces evaluations to clarify their assumed reference “world”, distinguishes true hallucinations from planning or reward errors, and provides a common language for comparison across benchmarks and discussion of mitigation strategies. Building on this definition, we outline plans for a family of benchmarks using synthetic, fully specified reference world models to stress-test and improve world modeling components.

1. Introduction

Suppose a language model is given this passage in context:

“Sherlock Holmes lives at 221B Baker Street in London” and

is asked the question “Where does Sherlock Holmes live?”

It responds with “Sherlock Holmes is a fictional character

and has no real address”. Is this statement considered a

https://github.com/DegenAI-Labs/HalluWorld

† DegenAI Labs

1Carnegie Mellon University

2Patronus

AI

3Stanford

University

4Independent

Researcher

5The

Ohio

State

University.

Correspondence

to:

Emmy

Liu

,

Varun

Gangal

, Steven Y. Feng .

Preprint. February 4, 2026.

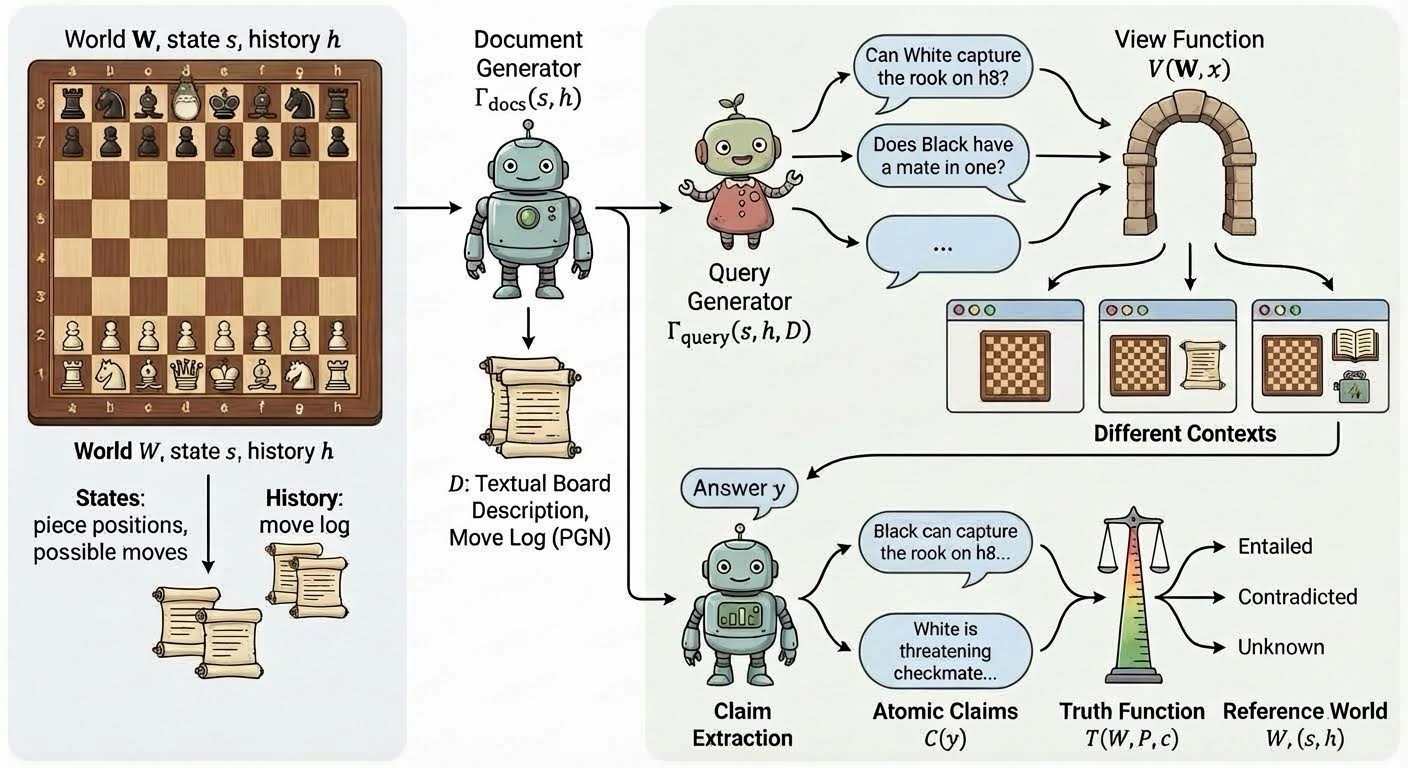

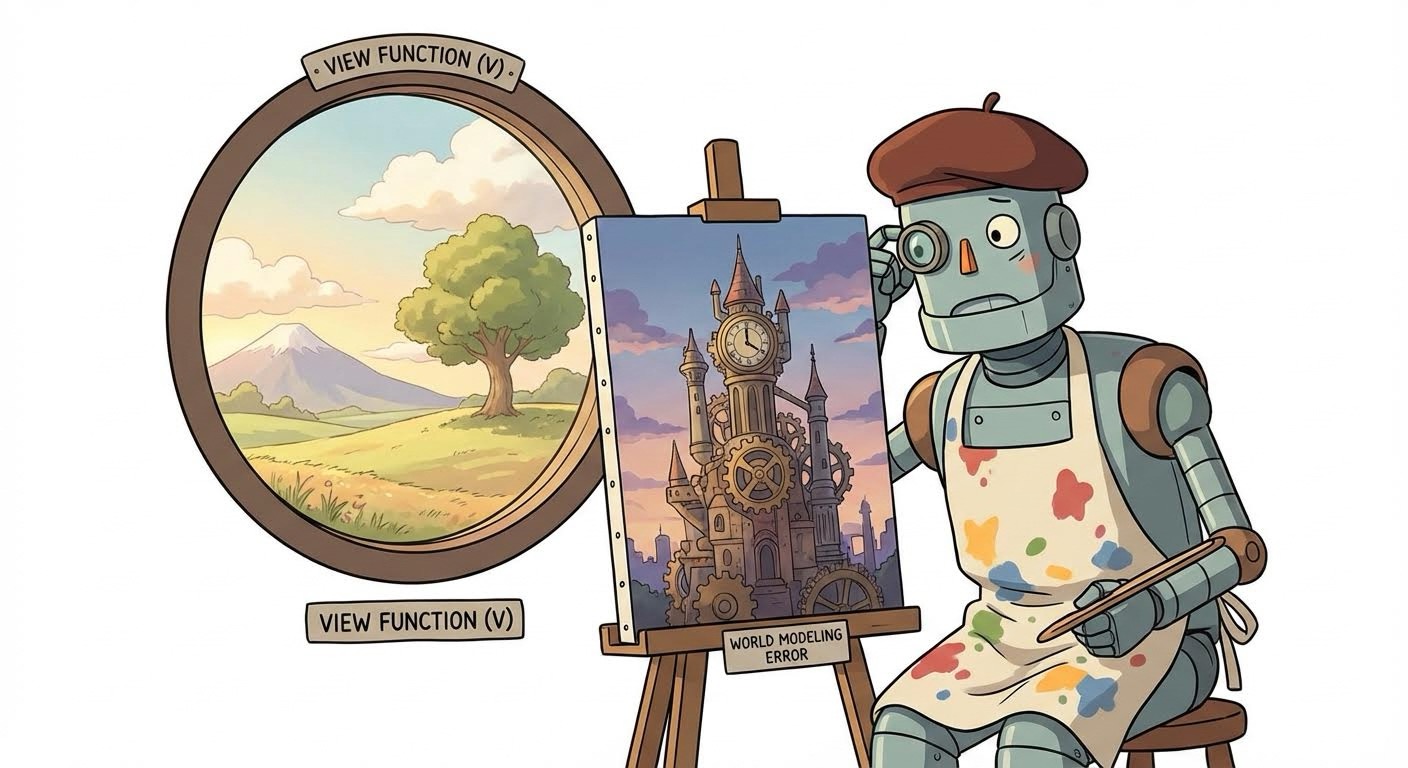

Figure 1. Hallucination as inaccurate (internal) world modeling

hallucination? A summarization researcher might say yes,

as the model contradicted the source. An open-domain QA

researcher might say no, as it is true that Sherlock Holmes

has no real world address. Hallucination, as a term, has

evolved since its first introduction, and now means different

things in different contexts. This fragmentation of defi-

nitions, tailored to task-specific assumptions about what

constitutes truth, makes it difficult to answer basic questions

about hallucination such as: Can LLMs ever be expected

to stop hallucinating? or Can we guarantee that hallucina-

tions will happen a predictable percentage of the time? If

we report that a technique “reduces hallucinations by 40%”,

what is this actually measuring, and can we expect these

improvements to transfer across contexts? These are not just

philosophical questions, but have real impact on research

directions and the public’s trust in deployed systems.

We argue that existing definitions of hallucination can be unified as inaccurate world modeling that is observable to the user through model outputs. More explicitly, we argue that every setting implicitly assumes (1) a reference world model encoding what is true, (2) a view function specifying what information the model can observe, and (3) a conflict policy determining how contradictions resolve. A model hallucinates when its outputs imply claims that are false according to the reference world model and conflict policy. Prior definitions simply make different choices for these components, but all share this underlying structure.
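As a rough illustration of how a reference world and a conflict policy can jointly determine the label, the following Python sketch scores the Sherlock Holmes response under two settings. Everything in it is a hypothetical simplification and not taken from the paper: the names `Setting` and `is_hallucination`, the representation of claims as (subject, relation, value) triples, the two policy strings, and the choice to treat claims absent from the reference as non-hallucinations. Claim extraction from model output is assumed to have already happened, and the view function is reduced to the `context` facts the model was shown.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Key = Tuple[str, str]      # (subject, relation)
World = Dict[Key, str]     # maps a key to its value in that world


@dataclass(frozen=True)
class Setting:
    """Hypothetical evaluation setting: two sources of truth plus a conflict policy."""
    context: World     # facts stated in the input the model can observe
    background: World  # facts from an external knowledge base
    policy: str        # "context_wins" or "background_wins" (illustrative names)

    def reference_value(self, key: Key) -> Optional[str]:
        """Resolve a key against both sources, letting the preferred source win."""
        if self.policy == "context_wins":
            first, second = self.context, self.background
        else:
            first, second = self.background, self.context
        if key in first:
            return first[key]
        return second.get(key)


def is_hallucination(claim: Tuple[str, str, str], setting: Setting) -> bool:
    """A claim is flagged only if the resolved reference value contradicts it."""
    subject, relation, value = claim
    expected = setting.reference_value((subject, relation))
    # Assumption of this sketch: claims the reference is silent on are not flagged.
    return expected is not None and expected != value


context = {("Sherlock Holmes", "lives_at"): "221B Baker Street"}
background = {("Sherlock Holmes", "lives_at"): "no real address"}
claim = ("Sherlock Holmes", "lives_at", "no real address")

summarization = Setting(context, background, "context_wins")
open_domain_qa = Setting(context, background, "background_wins")

print(is_hallucination(claim, summarization))   # source document is the reference
print(is_hallucination(claim, open_domain_qa))  # knowledge base is the reference
```

Under these assumptions the same claim is flagged in the summarization-style setting and accepted in the open-domain QA setting, which mirrors the disagreement described in the opening example.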

arXiv:2512.21577v2 [cs.CL] 3 Feb 2026

We further argue that making this structure explicit has practical benefits. First, it forces benchmark designers to be more explicit about their assumptions, enabling better comparison across existing benchmarks. It also distinguishes hallucination from other errors that can arise even with a correct world model, and provides a common language for why certain mitigations help in some settings but not others. Lastly, it enables the creation of a class of larger-scale and practically useful benchmarks by using synthetic environments where the reference world is fully specified (game environments, simulated databases, or structured worlds). By having a clear definition, we can more easily generate evaluation instances where hallucination labels are fully determined rather than requiring human annotation.
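The idea that a fully specified synthetic world makes hallucination labels mechanical can be sketched in a few lines of Python. The toy world below (objects placed in rooms), the function names, and the exact-match answer check are all hypothetical choices made for illustration; they are not the benchmark design the paper proposes.

```python
import random
from typing import Dict, List, Tuple


def make_world(rng: random.Random) -> Dict[str, str]:
    """Build a tiny, fully specified world: each object is in exactly one room."""
    objects = ["key", "lamp", "book", "coin"]
    rooms = ["kitchen", "cellar", "attic"]
    return {obj: rng.choice(rooms) for obj in objects}


def make_instances(world: Dict[str, str]) -> List[Tuple[str, str, str]]:
    """Derive (context, question, gold_answer) triples directly from the world state."""
    items = sorted(world.items())
    context = " ".join(f"The {obj} is in the {room}." for obj, room in items)
    return [(context, f"Where is the {obj}?", room) for obj, room in items]


def label(answer: str, gold: str) -> bool:
    """Return True if the answer disagrees with the world, i.e. is a hallucination.

    Exact string matching is a simplifying assumption of this sketch.
    """
    return answer.strip().lower() != gold


rng = random.Random(0)          # fixed seed so the world is reproducible
world = make_world(rng)
instances = make_instances(world)

for context, question, gold in instances:
    # A stand-in for a model response; "garage" never appears in the world.
    print(question, "->", label("garage", gold))
```

Because the gold answers are read off the world state rather than annotated by hand, the number of instances can grow with the size of the world at no extra labeling cost.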

We start by reviewing how definitions of hallucination have evolved over time (§2) before formalizing our framework and unifying existing definitions (§3). We argue in §4 for the benefits of unification, and outline in §5 how our framework enables the creation of scalable benchmarks for advancing hallucination research. Lastly, we discuss some alternative views (§6), a call to action (§7), and future…(Full text truncated)…

Reference

This content is AI-processed based on ArXiv data.