A Multi-fidelity Double-Delta Wing Dataset and Empirical Scaling Laws for GNN-based Aerodynamic Field Surrogate

Data-driven surrogate models are increasingly adopted to accelerate vehicle design. However, open-source multi-fidelity datasets and empirical guidelines linking dataset size to model performance remain limited. This study investigates the relationship between training data size and prediction accuracy for a graph neural network (GNN)-based surrogate model for aerodynamic field prediction. We release an open-source, multi-fidelity aerodynamic dataset for double-delta wings, comprising 2448 flow snapshots across 272 geometries evaluated at angles of attack from 11° to 19° at Ma = 0.3 using both the Vortex Lattice Method (VLM) and Reynolds-Averaged Navier-Stokes (RANS) solvers. The geometries are generated using a nested Saltelli sampling scheme to support future dataset expansion and variance-based sensitivity analysis. Using this dataset, we conduct a preliminary empirical scaling study of the MF-VortexNet surrogate by constructing six training datasets with sizes ranging from 40 to 1280 snapshots and training models with 0.1 to 2.4 million parameters under a fixed training budget. We find that the test error decreases with data size following a power law with an exponent of -0.6122, indicating efficient data utilization. Based on this scaling law, we estimate that the optimal sampling density is approximately eight samples per dimension in a d-dimensional design space. The results also suggest improved data utilization efficiency for larger surrogate models, implying a potential trade-off between dataset generation cost and model training budget.

💡 Research Summary

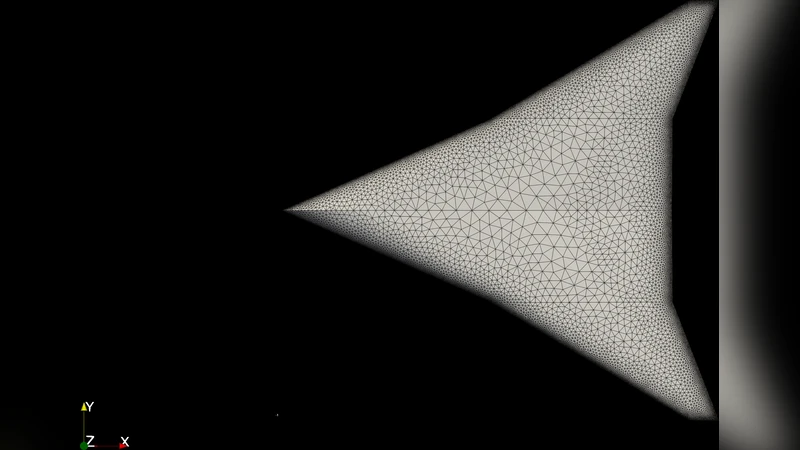

The paper addresses two pressing needs in data-driven aerodynamic design: (1) the lack of open-source, multi-fidelity datasets that span a realistic design space, and (2) the absence of empirical guidelines relating training-set size to the predictive performance of a surrogate model. To fill these gaps, the authors construct and release a comprehensive dataset for double-delta wings. Using a nested Saltelli sampling scheme, they generate 272 distinct wing geometries, each evaluated at angles of attack from 11° to 19° (an 8° range) at Mach 0.3. For each geometry, they compute flow fields with both a low-fidelity Vortex Lattice Method (VLM) and a high-fidelity Reynolds-Averaged Navier-Stokes (RANS) solver, yielding a total of 2,448 snapshots. The data are stored as graph representations (nodes = mesh points, edges = connectivity) so they can be ingested directly by graph neural networks (GNNs).
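To make the graph representation concrete, here is a minimal sketch assuming a structured ni × nj surface grid, such as a vortex-lattice panel grid. The released dataset's actual mesh layout and file format are not described in this summary, and `structured_grid_graph` is a hypothetical helper. Nodes are grid points, and each pair of adjacent points is joined by an edge stored in both directions, the usual convention for undirected message passing in GNNs.

```python
from typing import List, Tuple


def structured_grid_graph(ni: int, nj: int) -> Tuple[int, List[Tuple[int, int]]]:
    """Hypothetical helper: convert an ni x nj structured surface grid into a graph.

    Node k = i * nj + j is the grid point (i, j). Each pair of neighbouring
    points gets an edge stored in both directions, the usual convention for
    undirected message passing in GNNs.
    """

    def node(i: int, j: int) -> int:
        return i * nj + j

    edges: List[Tuple[int, int]] = []
    for i in range(ni):
        for j in range(nj):
            if i + 1 < ni:  # neighbour along the first grid direction
                a, b = node(i, j), node(i + 1, j)
                edges += [(a, b), (b, a)]
            if j + 1 < nj:  # neighbour along the second grid direction
                a, b = node(i, j), node(i, j + 1)
                edges += [(a, b), (b, a)]
    return ni * nj, edges


# Example: a small 3 x 4 grid. Node features such as coordinates or a
# pressure coefficient would be stored in a separate per-node array.
num_nodes, edges = structured_grid_graph(3, 4)
print(num_nodes, len(edges))
```

For a 3 × 4 grid this gives 12 nodes and 17 neighbouring pairs, i.e. 34 directed edges.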

With this dataset in hand, the authors conduct a systematic scaling study of a GNN‑based surrogate, dubbed MF‑VortexNet, which is designed to predict full aerodynamic fields from geometry and flight condition inputs. Six training sets of increasing size (40, 80, 160, 320, 640, and 1,280 snapshots) are assembled, and for each set five model configurations ranging from 0.1 M to 2.4 M trainable parameters are trained under a fixed computational budget (same number of epochs, learning‑rate schedule, and batch size). Performance is evaluated on a held‑out test set of high‑fidelity RANS solutions using mean‑squared error (MSE) of the predicted pressure and velocity fields.
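The summary does not state whether the six training sets are nested. One common way to build such a ladder of dataset sizes, shown here as an assumption rather than the authors' procedure, is to shuffle a pool of candidate snapshot indices once with a fixed seed and take prefixes of length 40·2^k, so every smaller set is contained in every larger one. `nested_training_sets` and the pool size of 2,000 are hypothetical.

```python
import random
from typing import Dict, List


def nested_training_sets(pool_size: int, base: int = 40, levels: int = 6,
                         seed: int = 0) -> Dict[int, List[int]]:
    """Hypothetical sketch: nested training subsets of sizes base * 2**k.

    One seeded permutation of the pool is drawn; each subset is a prefix of
    it, so every smaller subset is contained in every larger one.
    """
    sizes = [base * 2 ** k for k in range(levels)]  # 40, 80, ..., 1280
    if sizes[-1] > pool_size:
        raise ValueError("pool is too small for the largest training set")
    order = list(range(pool_size))
    random.Random(seed).shuffle(order)  # fixed permutation for reproducibility
    return {n: order[:n] for n in sizes}


# Hypothetical pool of 2,000 candidate snapshot indices (test set held out).
subsets = nested_training_sets(pool_size=2000)
print(sorted(subsets))
```

Nesting keeps comparisons across dataset sizes from being confounded by which particular snapshots happened to be drawn at each size.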

The key empirical finding is that test error follows a power‑law decay with respect to training‑set size N:

MSE ∝ N^(−0.6122)

This exponent is steeper than the −0.5 scaling typical of simple regression models, which the authors interpret as efficient data utilization: the GNN extracts substantial information from each additional sample. Interpreting the exponent in the context of a d-dimensional design space, the authors derive an "optimal sampling density" of roughly eight samples per dimension (i.e., N_opt ≈ 8^d). This rule of thumb offers a practical guideline for estimating how many CFD runs are needed as the design space grows.
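An exponent like −0.6122 is typically estimated by a straight-line least-squares fit in log-log space, log(error) = log(c) + α·log(N). The sketch below, using only the Python standard library, recovers α from synthetic errors generated by an exact power law; these numbers are illustrative and are not the paper's measurements, and `fit_power_law` is a hypothetical helper. It also shows the practical meaning of the exponent: each doubling of N multiplies the error by 2^α, about 0.65 for α = −0.6122.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(sizes: Sequence[float], errors: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of log(error) = log(c) + alpha * log(N); returns (c, alpha)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(e) for e in errors]
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    alpha = sxy / sxx                 # slope in log-log space = power-law exponent
    c = math.exp(my - alpha * mx)     # intercept mapped back to linear scale
    return c, alpha


# Synthetic errors from an exact power law (illustrative, NOT the paper's data).
sizes = [40, 80, 160, 320, 640, 1280]
errors = [0.5 * n ** -0.6122 for n in sizes]
c, alpha = fit_power_law(sizes, errors)
print(round(alpha, 4), round(c, 4))

# Factor by which the error shrinks each time the training set is doubled.
print(round(2 ** alpha, 3))
```

With real, noisy errors the fitted α carries uncertainty, so fitting over as many dataset sizes as are available (six here) makes the estimate more stable.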

Another important observation is that larger GNNs (with more parameters) achieve lower errors for a given data size, suggesting that model capacity and data quantity are not independent: increasing model size appears to improve data utilization efficiency. This implies a potential trade-off: investing in a larger surrogate model may reduce the number of expensive high-fidelity simulations required, but it also raises the training cost.

The paper's contributions are threefold: (i) the release of a high-quality, multi-fidelity double-delta wing dataset that can serve as a benchmark for future aerodynamic surrogates; (ii) a preliminary empirical scaling analysis that quantifies how surrogate accuracy improves with data and model size; and (iii) a concrete guideline for constructing cost-effective training sets: approximately eight samples per design dimension.
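Reading "eight samples per dimension" as N_opt ≈ 8^d, the arithmetic below shows how quickly the required number of samples grows with the design-space dimension d and, conversely, how many points per axis a given budget N buys, N^(1/d). The dimension of the double-delta wing parameterization is not given in this summary, so the values of d are illustrative, and both function names are hypothetical.

```python
def samples_for_density(d: int, per_dim: float = 8.0) -> float:
    """Samples needed for `per_dim` points per axis in d dimensions: per_dim ** d."""
    return per_dim ** d


def density_per_dimension(n_samples: float, d: int) -> float:
    """Equivalent points per axis when n_samples fill a d-dimensional space: n ** (1/d)."""
    return n_samples ** (1.0 / d)


# Illustrative dimensions only; the wing parameterization's d is not given here.
for d in (1, 2, 3, 4):
    print(d, samples_for_density(d))

# Points per axis that 1,280 snapshots would provide if they filled a
# hypothetical 3-dimensional space.
print(round(density_per_dimension(1280, 3), 2))
```

Because N_opt grows exponentially in d, each added design variable multiplies the simulation budget by about eight under this guideline.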

From an application perspective, the results imply that engineers could substantially reduce CFD expense during early-stage design exploration by leveraging MF-VortexNet or similar GNN surrogates, while still retaining sufficient fidelity for downstream optimization or uncertainty quantification. Moreover, the dataset's nested sampling structure enables variance-based sensitivity analysis, opening avenues for integrated design-space exploration and model-based decision making. In summary, the study provides both a valuable public resource and actionable insights into the data-model trade-offs that underpin modern, AI-augmented aerodynamic design workflows.
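The variance-based sensitivity analysis that a Saltelli design enables can be sketched as follows. From two random base matrices A and B (N rows, d columns), the scheme builds d cross matrices AB_i, each equal to A with column i taken from B, for a total of N(d + 2) model evaluations; first-order Sobol indices are then estimated from products of these outputs. This is a simplified stand-in, not the authors' code: it uses pseudorandom numbers instead of the low-discrepancy Sobol sequence that Saltelli designs typically use, and the toy model x_1 + 2x_2 (with analytic first-order indices 0.2 and 0.8 for uniform inputs) replaces an aerodynamic quantity of interest. Both function names are hypothetical.

```python
import random
from typing import Callable, List, Sequence, Tuple

Point = List[float]


def saltelli_matrices(n: int, d: int, seed: int = 0
                      ) -> Tuple[List[Point], List[Point], List[List[Point]]]:
    """Base matrices A, B on [0, 1]^d and cross matrices AB_i (A with column i from B).

    Pseudorandom stand-in for a Sobol sequence; not a nested design.
    """
    rng = random.Random(seed)
    A = [[rng.random() for _ in range(d)] for _ in range(n)]
    B = [[rng.random() for _ in range(d)] for _ in range(n)]
    AB = [[a[:i] + [b[i]] + a[i + 1:] for a, b in zip(A, B)] for i in range(d)]
    return A, B, AB


def first_order_indices(f: Callable[[Sequence[float]], float], n: int, d: int,
                        seed: int = 0) -> List[float]:
    """Estimate first-order Sobol indices S_i = Var(E[Y | X_i]) / Var(Y)."""
    A, B, AB = saltelli_matrices(n, d, seed)
    fA = [f(x) for x in A]
    fB = [f(x) for x in B]
    pooled = fA + fB
    mean = sum(pooled) / len(pooled)
    var = sum((y - mean) ** 2 for y in pooled) / (len(pooled) - 1)
    indices = []
    for i in range(d):
        fABi = [f(x) for x in AB[i]]
        # Saltelli-type estimator: Var(E[Y | X_i]) ~ mean of f(B) * (f(AB_i) - f(A))
        vi = sum(yb * (yab - ya) for ya, yb, yab in zip(fA, fB, fABi)) / n
        indices.append(vi / var)
    return indices


# Toy model with a known answer for U(0, 1) inputs: S_1 = 0.2, S_2 = 0.8.
S = first_order_indices(lambda x: x[0] + 2.0 * x[1], n=50000, d=2)
print([round(s, 2) for s in S])
```

In practice the model calls would be surrogate or CFD evaluations of a quantity such as a force coefficient, which is where a cheap surrogate pays off, since the design needs N(d + 2) evaluations.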
