Item Region-based Style Classification Network (IRSN): A Fashion Style Classifier Based on Domain Knowledge of Fashion Experts

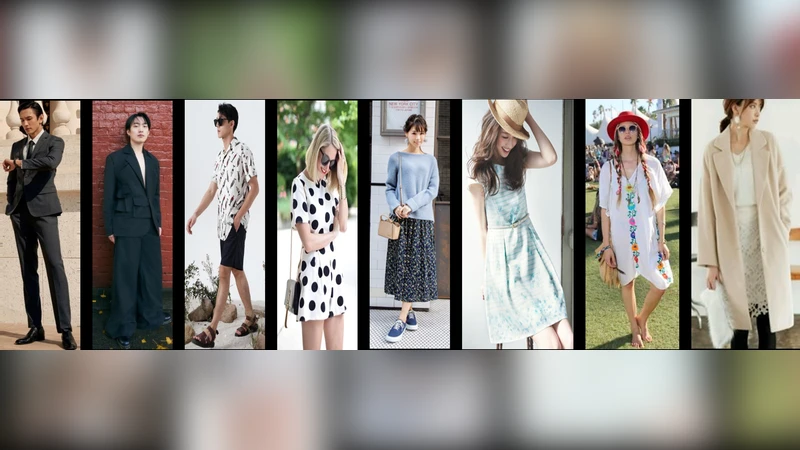

Fashion style classification is a challenging task because of the large visual variation within the same style and the existence of visually similar styles. Styles are expressed not only by the global appearance, but also by the attributes of individual items and their combinations. In this study, we propose an item region-based fashion style classification network (IRSN) to effectively classify fashion styles by analyzing item-specific features and their combinations in addition to global features. IRSN extracts features of each item region using item region pooling (IRP), analyzes them separately, and combines them using gated feature fusion (GFF). In addition, we improve the feature extractor by applying a dual-backbone architecture that combines a domain-specific feature extractor and a general feature extractor pre-trained with a large-scale image-text dataset. In experiments, applying IRSN to six widely-used backbones, including EfficientNet, ConvNeXt, and Swin Transformer, improved style classification accuracy by an average of 6.9% and a maximum of 14.5% on the FashionStyle14 dataset and by an average of 7.6% and a maximum of 15.1% on the ShowniqV3 dataset. Visualization analysis also supports that the IRSN models are better than the baseline models at capturing differences between similar style classes.

💡 Research Summary

The paper tackles the long‑standing challenge of fashion style classification, where large intra‑class visual variance and subtle inter‑class similarities make pure global‑appearance models insufficient. Drawing on the observation that fashion experts judge style not only by the overall silhouette but also by the attributes of individual garments and their combinations, the authors propose the Item Region‑based Style Classification Network (IRSN).

IRSN consists of three main components. First, an item-region detector isolates predefined garment parts (e.g., top, bottom, shoes, accessories). For each region, Item Region Pooling (IRP) extracts a fixed-size feature map using an RoI-Align-like operation, followed by a small dedicated MLP head that produces an item-specific embedding. Second, two parallel feature extractors form a dual-backbone architecture. One is a domain-specific backbone (e.g., EfficientNet, ConvNeXt) fine-tuned on fashion data to capture fine-grained texture, pattern, and color cues. The other is a general-purpose vision encoder pre-trained on a massive image-text dataset (CLIP-style), providing rich semantic context. Third, the resulting embeddings are projected to a common dimensionality and fed into a Gated Feature Fusion (GFF) module. GFF learns a sigmoid-based gate per channel that dynamically weights the contribution of global versus item embeddings, encouraging complementary use of the two information streams.
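The pooling and fusion steps described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: plain average pooling over integer cell boxes stands in for the RoI-Align-like operation, a spatial mean stands in for the MLP head, and the gate formula `g * global + (1 - g) * item` is one common form of gated fusion assumed here for illustration. The function names, box coordinates, and tensor sizes are all hypothetical.

```python
import numpy as np

def item_region_pool(feature_map, box, out_size=2):
    """Average-pool a box of a (C, H, W) map into a (C, out_size, out_size) grid.

    box = (x0, y0, x1, y1) in feature-map cells, half-open and non-empty.
    Simplified stand-in for RoI Align (no bilinear sampling).
    """
    channels = feature_map.shape[0]
    x0, y0, x1, y1 = box
    region = feature_map[:, y0:y1, x0:x1]
    # Bin edges that split the region into out_size x out_size cells.
    ys = np.linspace(0, region.shape[1], out_size + 1).astype(int)
    xs = np.linspace(0, region.shape[2], out_size + 1).astype(int)
    pooled = np.zeros((channels, out_size, out_size))
    for i in range(out_size):
        for j in range(out_size):
            # Guard so every bin covers at least one cell.
            cell = region[:, ys[i]:max(ys[i + 1], ys[i] + 1),
                             xs[j]:max(xs[j + 1], xs[j] + 1)]
            pooled[:, i, j] = cell.mean(axis=(1, 2))
    return pooled

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gated_fusion(global_emb, item_emb, w_gate, b_gate):
    """Per-channel gate computed from both embeddings (assumed form)."""
    gate = sigmoid(w_gate @ np.concatenate([global_emb, item_emb]) + b_gate)
    return gate * global_emb + (1.0 - gate) * item_emb

# Toy usage with random data; boxes are hypothetical item regions.
rng = np.random.default_rng(0)
fmap = rng.normal(size=(8, 14, 14))
boxes = {"top": (3, 1, 11, 7), "bottom": (4, 7, 10, 13)}
global_emb = fmap.mean(axis=(1, 2))
w_gate = rng.normal(size=(8, 16)) * 0.1
b_gate = np.zeros(8)
fused = {
    name: gated_fusion(global_emb,
                       item_region_pool(fmap, box).mean(axis=(1, 2)),
                       w_gate, b_gate)
    for name, box in boxes.items()
}
```

With an all-zero gate the output is the plain average of the two embeddings; training moves each channel's gate toward whichever stream is more informative.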

The training objective combines standard cross‑entropy with label smoothing and an auxiliary regularizer that promotes consistency among item embeddings belonging to the same style, helping the network distinguish subtle combinations such as “jeans + t‑shirt” versus “jeans + shirt”.
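As a reference point for the first term of this objective, the sketch below computes cross-entropy with label smoothing in its standard form, where the one-hot target is mixed with a uniform distribution over the K classes. The smoothing value `epsilon=0.1` is an assumption, not a value reported in the summary, and the auxiliary consistency regularizer is left out because its form is not specified here.

```python
import numpy as np

def label_smoothing_cross_entropy(logits, target, epsilon=0.1):
    """Cross-entropy against a smoothed target for a single example.

    Smoothed target: (1 - epsilon) on the true class plus epsilon / K
    spread uniformly over all K classes.
    """
    logits = np.asarray(logits, dtype=float)
    # Numerically stable log-softmax.
    shifted = logits - logits.max()
    log_probs = shifted - np.log(np.exp(shifted).sum())
    num_classes = logits.shape[0]
    soft_target = np.full(num_classes, epsilon / num_classes)
    soft_target[target] += 1.0 - epsilon
    return float(-(soft_target * log_probs).sum())
```

Setting `epsilon=0` recovers ordinary cross-entropy, which makes it easy to compare the two during training.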

Extensive experiments were conducted on two large-scale benchmarks: FashionStyle14 (14 styles, ~30 k images) and ShowniqV3 (30 styles, >100 k images). IRSN was grafted onto six widely used backbones, including EfficientNet-B0, ConvNeXt-Base, and Swin-Transformer-Tiny. Across both datasets, IRSN consistently outperformed the vanilla backbones, delivering average accuracy gains of 6.9% (FashionStyle14) and 7.6% (ShowniqV3), with peak improvements of 14.5% and 15.1%, respectively.

Qualitative analysis using Grad-CAM visualizations shows that IRSN focuses on discriminative item regions (e.g., shoes, accessories) when differentiating visually similar styles such as “street” and “casual,” whereas baseline models rely mainly on the overall silhouette. This supports the view that the item-level representations and their gated fusion contribute to the performance gain, although visualization alone does not establish causality.
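For readers unfamiliar with Grad-CAM, the heatmap computation can be sketched as follows. The sketch assumes the activations of a chosen convolutional layer and the gradients of the target class score with respect to them have already been obtained from a deep learning framework; it only shows the final weighting step and is not tied to the authors' visualization code.

```python
import numpy as np

def grad_cam(activations, gradients):
    """Grad-CAM heatmap from (C, H, W) activations and matching gradients.

    Channel weights are the spatially averaged gradients; the map is the
    ReLU of the weighted sum of activation channels, scaled to [0, 1].
    """
    weights = gradients.mean(axis=(1, 2))             # one weight per channel
    cam = np.tensordot(weights, activations, axes=1)  # weighted sum -> (H, W)
    cam = np.maximum(cam, 0.0)                        # keep positive evidence only
    peak = cam.max()
    return cam / peak if peak > 0 else cam
```

The resulting map is usually upsampled to the input resolution and overlaid on the image, which is how focus on regions such as shoes or accessories becomes visible.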

Key contributions are: (1) a novel architecture that explicitly models item‑region features and their combinations, (2) a dual‑backbone scheme that merges domain‑specific fine‑tuned CNNs with large‑scale vision‑language pre‑training, (3) empirical evidence of consistent gains across multiple modern backbones, and (4) interpretability results that align with fashion expert reasoning.

Suggested future work includes integrating more accurate pose-guided segmentation for item detection, extending the framework to multimodal style description generation, and exploring style-aware generative models for virtual try-on or recommendation systems. IRSN is thus positioned as a practical approach for real-world fashion applications such as e-commerce search, personalized styling assistants, and automated cataloging.