📝 Original Info

- Title: Advancing Multimodal Teacher Sentiment Analysis: The Large-Scale T-MED Dataset & The Effective AAM-TSA Model

- ArXiv ID: 2512.20548

- Date: 2025-12-23

- Authors: Zhiyi Duan, Xiangren Wang, Hongyu Yuan, Qianli Xing

📝 Abstract

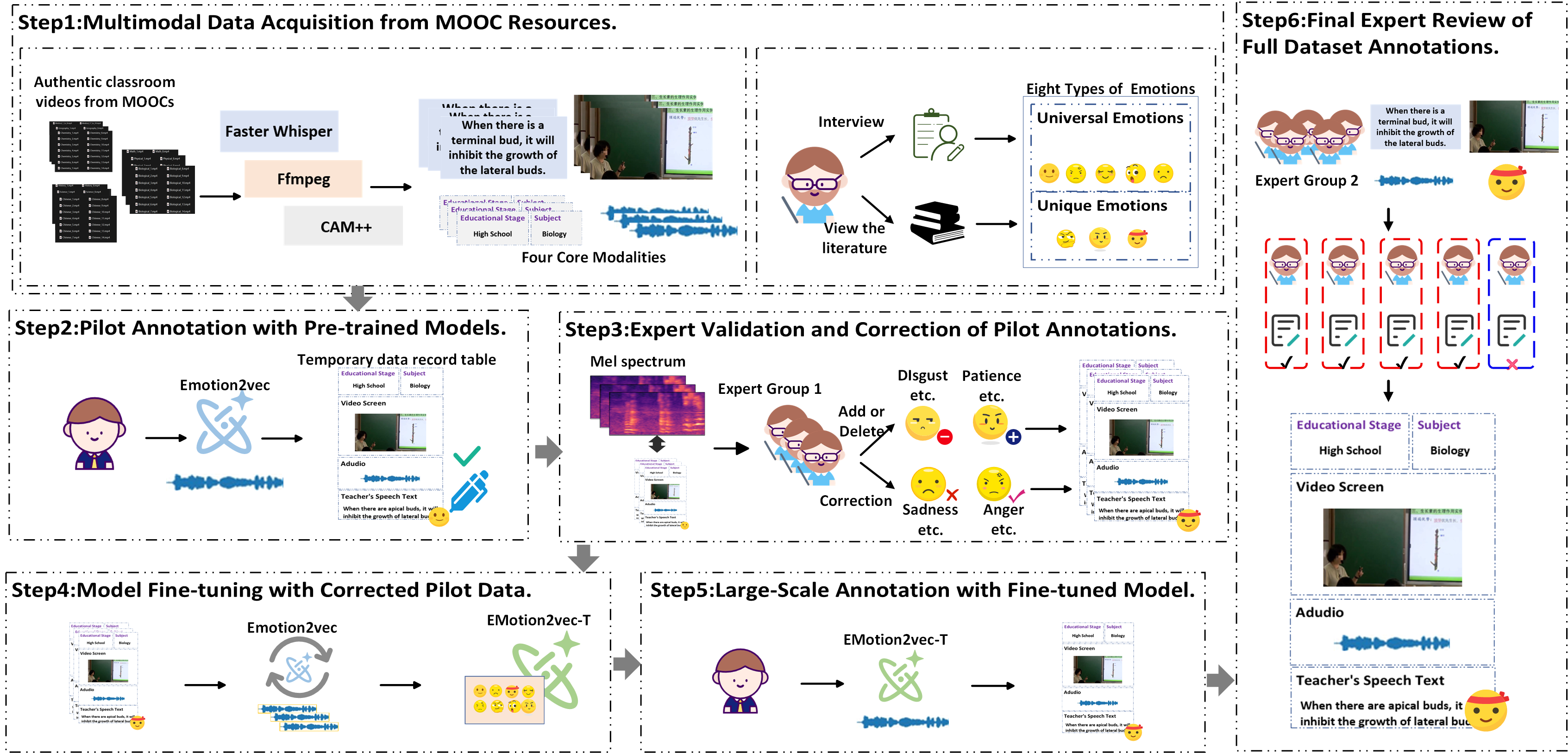

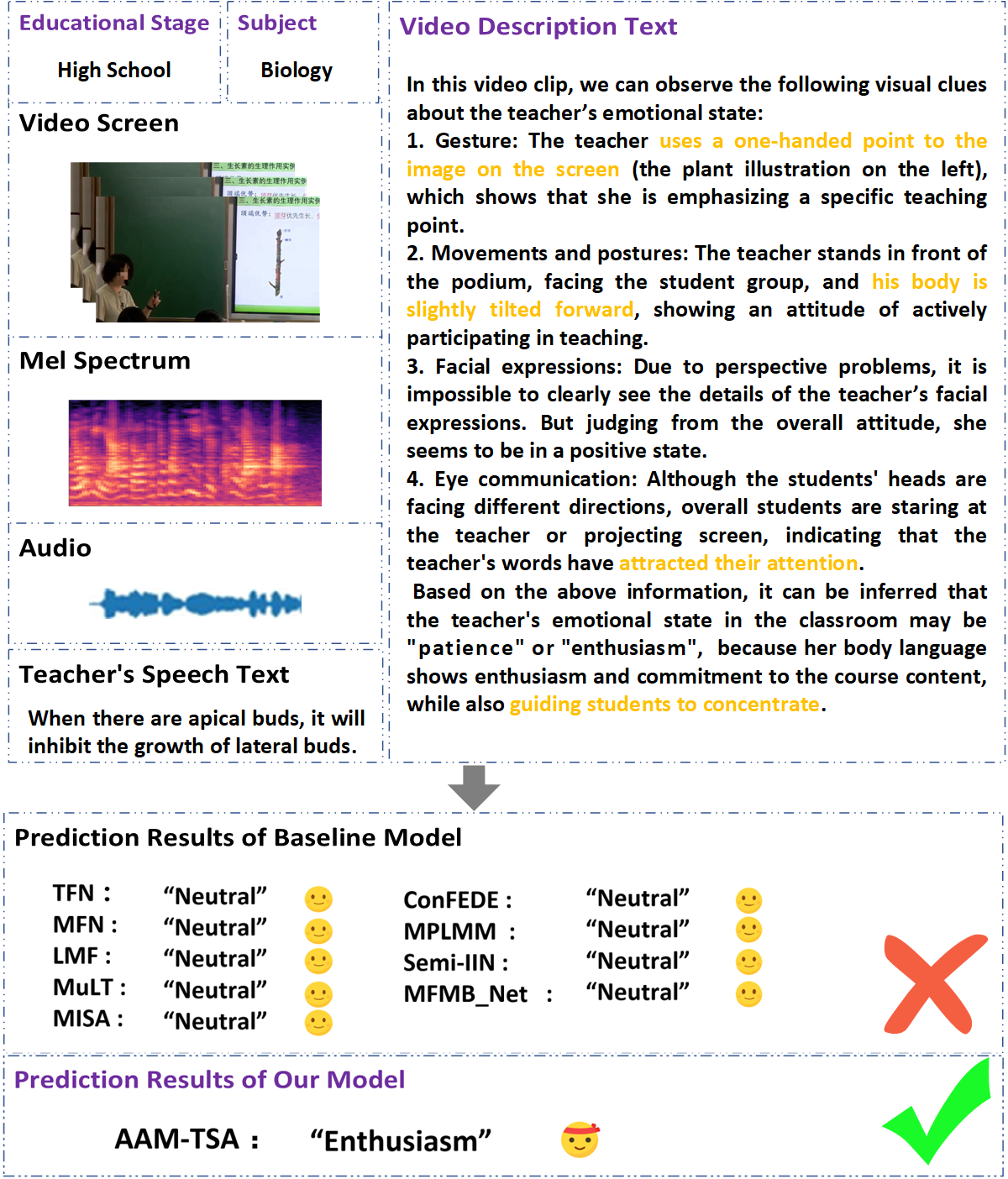

Teachers' emotional states are critical in educational scenarios, profoundly impacting teaching efficacy, student engagement, and learning achievements. However, existing studies often fail to accurately capture teachers' emotions because of the performative nature of teaching, and they overlook the critical impact of instructional information on emotional expression. In this paper, we systematically investigate teacher sentiment analysis by building both a dataset and a model. We construct the first large-scale teacher multimodal sentiment analysis dataset, T-MED. To ensure labeling accuracy and efficiency, we employ a human-machine collaborative labeling process. The T-MED dataset includes 14,938 instances of teacher emotional data from 250 real classrooms across 11 subjects ranging from K-12 to higher education, integrating multimodal text, audio, video, and instructional information. Furthermore, we propose a novel asymmetric attention-based multimodal teacher sentiment analysis model, AAM-TSA. AAM-TSA introduces an asymmetric attention mechanism and a hierarchical gating unit to enable differentiated cross-modal feature fusion and precise emotional classification. Experimental results demonstrate that AAM-TSA significantly outperforms existing state-of-the-art methods in terms of accuracy and interpretability on the T-MED dataset.

📄 Full Content

Advancing Multimodal Teacher Sentiment Analysis: The Large-Scale T-MED Dataset & The Effective AAM-TSA Model

Zhiyi Duan¹, Xiangren Wang¹, Hongyu Yuan¹, Qianli Xing²*

¹ Department of Computer Science, Inner Mongolia University, Hohhot, Inner Mongolia

² College of Computer Science and Technology, Jilin University, Changchun, Jilin

duanzy@imu.edu.cn, wangxr@mail.imu.edu.cn, yuanhongyu 1997@163.com, qianlixing@jlu.edu.cn

Abstract

Teachers’ emotional states are critical in educational scenar-

ios, profoundly impacting teaching efficacy, student engage-

ment, and learning achievements. However, existing studies

often fail to accurately capture teachers’ emotions due to the

performative nature and overlook the critical impact of in-

structional information on emotional expression. In this pa-

per, we systematically investigate teacher sentiment analy-

sis by building both the dataset and the model accordingly.

We construct the first large-scale teacher multimodal senti-

ment analysis dataset, T-MED. To ensure labeling accuracy

and efficiency, we employ a human-machine collaborative la-

beling process. The T-MED dataset includes 14,938 instances

of teacher emotional data from 250 real classrooms across 11

subjects ranging from K-12 to higher education, integrating

multimodal text, audio, video, and instructional information.

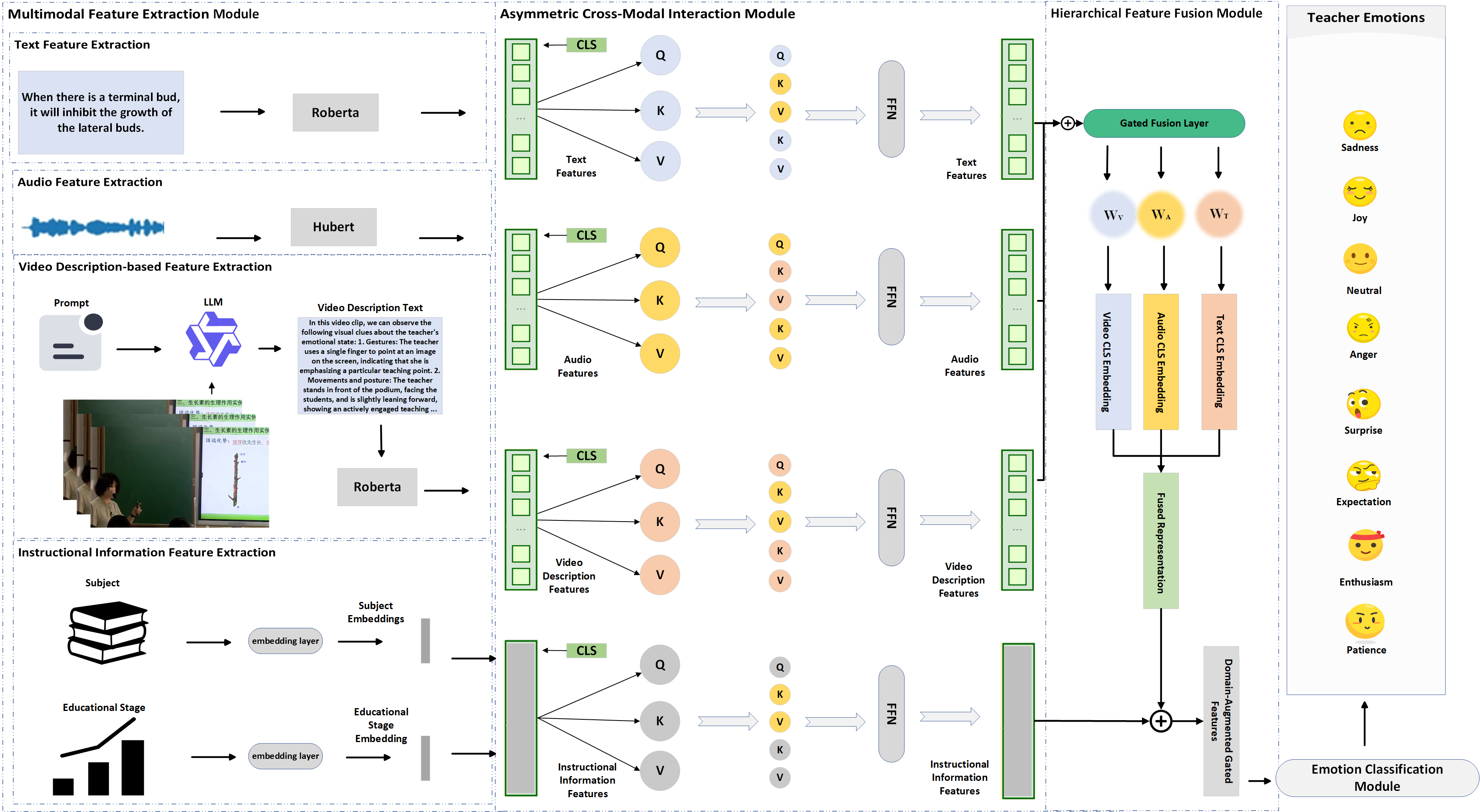

Furthermore, we propose a novel asymmetric attention-based

multimodal teacher sentiment analysis model, AAM-TSA.

AAM-TSA introduces an asymmetric attention mechanism

and hierarchical gating unit to enable differentiated cross-

modal feature fusion and precise emotional classification. Ex-

perimental results demonstrate that AAM-TSA significantly

outperforms existing state-of-the-art methods in terms of ac-

curacy and interpretability on the T-MED dataset.
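The excerpt available here does not describe the internals of AAM-TSA, so the following is only a generic, minimal sketch of what "asymmetric" cross-modal attention followed by a gate can look like. It is not the authors' architecture. Every name in it (`cross_attend`, `gated_fuse`, `asymmetric_fusion`) is hypothetical, there are no learned projections, and the single sigmoid gate is a simplification that does not attempt to reproduce the paper's hierarchical gating unit.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_attend(queries, keys_values):
    """Scaled dot-product attention: each query vector attends over the
    vectors of another modality (used as both keys and values)."""
    d = len(queries[0])
    attended = []
    for q in queries:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in keys_values])
        attended.append(
            [sum(w * kv[i] for w, kv in zip(weights, keys_values)) for i in range(d)]
        )
    return attended

def gated_fuse(primary, context, gate_bias=0.0):
    """Per position: g * primary + (1 - g) * context, where the scalar gate
    g = sigmoid(primary . context + gate_bias). Illustrative assumption only."""
    fused = []
    for p, c in zip(primary, context):
        g = 1.0 / (1.0 + math.exp(-(dot(p, c) + gate_bias)))
        fused.append([g * pi + (1.0 - g) * ci for pi, ci in zip(p, c)])
    return fused

def asymmetric_fusion(text, audio):
    """Asymmetric in the sense that text queries audio, while audio never
    queries text; the output has one fused vector per text position."""
    audio_for_text = cross_attend(text, audio)
    return gated_fuse(text, audio_for_text)

if __name__ == "__main__":
    # Toy 2-dimensional features: 2 text positions, 3 audio frames.
    text = [[1.0, 0.0], [0.0, 1.0]]
    audio = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    for vec in asymmetric_fusion(text, audio):
        print(vec)
```

In this toy setup the text stream is treated as the primary modality and audio only contributes context. Swapping the arguments gives a different result, which is the sense of "asymmetric" assumed here.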

Introduction

In the intricate scenarios of modern education, the emotional states of teachers emerge as a pivotal factor, profoundly influencing not only the efficacy of pedagogical delivery but also the crucial aspects of student engagement, motivation, and ultimately, learning achievements (Han, Jin, and Yin 2023). Consequently, the systematic analysis of teacher emotions has garnered increasing attention within educational psychology and human-computer interaction research (Wang and Frenzel 2025).

Unlike general sentiment analysis, analyzing teacher emotions is distinguished by several unique characteristics. On the one hand, the performative nature of teaching requires educators to project specific emotions, such as enthusiasm or composure. Thus, teachers frequently exhibit hidden emotions, deliberately suppressing feelings like frustration or stress to uphold professionalism or shield students from negative influences (King et al. 2024). On the other hand, teacher emotions are inherently affected by instructional information, including instructional subject and educational stage (Hargreaves 2000).

*Corresponding author.

Copyright © 2026, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

Current approaches to teacher sentiment analysis have predominantly relied on single-modal data modeling, primarily focusing on textual transcripts or isolated audio cues (Zhao, Zhang, and Chu 2024). While these methods have offered valuable preliminary insights, their inherent limitations become apparent when confronted with the complexity and richness of real-world teaching environments. The inadequacy of single-modal data is further amplified by the distinctive nature of teachers’ emotional expressions, characterized by deliberate deductiveness, e.g., emotive cues tailored to instructional goals, and strategic restraint, e.g., suppression of negative emotions to maintain classroom dynamics. In summary, any single data modality is insufficient to disentangle performative displays from underlying emotional states.

Teacher sentiment analysis is inherently multimodal, manifested through a confluence of text, audio, video, and instructional information (Cheng et al. 2024). This integration can effectively capture environment-induced variations in emotional expression and decode implicit affective cues, which are critical for understanding teachers’ true emotional experiences. Although current multimodal models try to integrate information from different modalities (Zhao, Zhang, and Chu 2024; Zheng et al. 2024), they often fail to effectively analyze teachers’ emotions because of the performative nature of teaching. Moreover, the existing multimodal datasets (McDuff and Soleymani 2017; Bagher Zadeh et al. 2018) are mostly designed for general sentiment analysis, which further hampers the performance of sentiment analysis models in this setting. Thus, there is an urgent need for both a high-quality multimodal dataset and specialized multimodal teacher sentiment analysis models.

To bridge this critical gap and facilitate a more holistic and accurate understanding of teacher emotional dynam

…(Full text truncated)…

Reference

This content is AI-processed based on ArXiv data.