As artificial intelligence (AI) rapidly advances, especially in multimodal large language models (MLLMs), research focus is shifting from single-modality text processing to the more complex domains of multimodal and embodied AI. Embodied intelligence focuses on training agents within realistic simulated environments, leveraging physical interaction and action feedback rather than conventionally labeled datasets. Yet, most existing simulation platforms remain narrowly designed, each tailored to specific tasks. A versatile, general-purpose training environment that can support everything from low-level embodied navigation to high-level composite activities, such as multi-agent social simulation and human-AI collaboration, remains largely unavailable. To bridge this gap, we introduce TongSIM, a high-fidelity, general-purpose platform for training and evaluating embodied agents. TongSIM offers practical advantages by providing over 100 diverse, multi-room indoor scenarios as well as an open-ended, interaction-rich outdoor town simulation, ensuring broad applicability across research needs. Its comprehensive evaluation framework and benchmarks enable precise assessment of agent capabilities, such as perception, cognition, decision-making, human-robot cooperation, and spatial and social reasoning. With features like customized scenes, task-adaptive fidelity, diverse agent types, and dynamic environmental simulation, TongSIM delivers flexibility and scalability for researchers, serving as a unified platform that accelerates training, evaluation, and advancement toward general embodied intelligence. The source code is publicly available at https://github.com/bigai-ai/tongsim.

Deep Dive into TongSIM, a General-Purpose High-Fidelity Simulation Platform.

The emergence of large language models (LLMs) has revolutionized the understanding of artificial intelligence (AI). Researchers quickly uncovered a diverse array of LLM capabilities within the text modality, including multi-turn dialogue agents [17,1,45], automatic customer services [29,42], novel generation and completion [41,25], text-based automatic non-player characters (NPCs) [5,57], role-playing [30,35], and AI-engaged educational systems [6,10,11]. As the competence of LLMs rapidly increases, expectations for these models have begun to shift from purely text-based applications toward multimodal extensions.

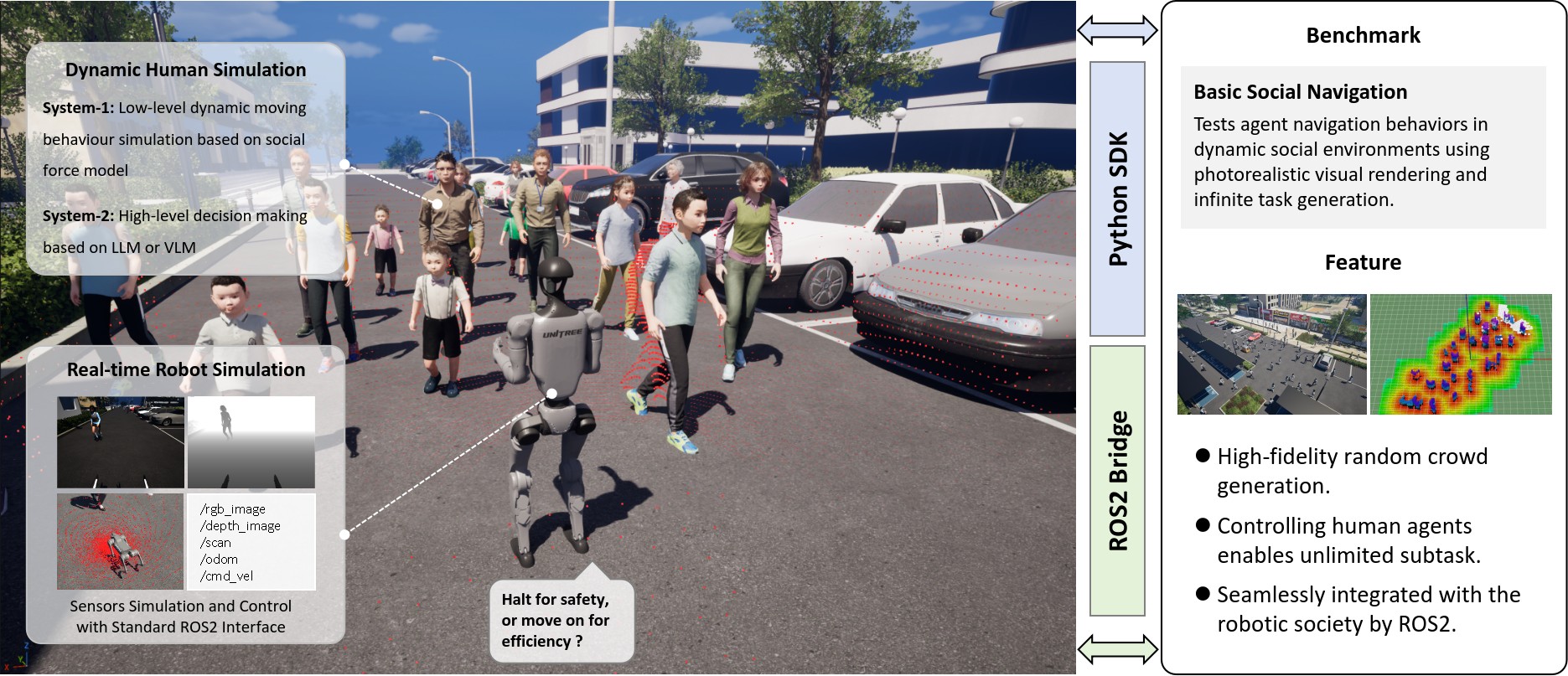

Consequently, a series of research works focused on connecting AI to the real world has emerged [40,30,18,59]. A particularly prominent area within this trend is Embodied AI. Rather than relying on conventional labeled data, researchers in this field propose that training agents in high-fidelity simulated environments and providing them with an embodiment and corresponding action-based feedback can inject new vitality into AI development [36]. A sufficiently realistic simulation environment is crucial to this field. This necessity has spurred the development of a variety of simulation platforms, such as Interactive Gibson [52] and MINOS [37] that focused on navigation, VRGym [54] addressing various human interfaces for multimodal interaction, and platforms dedicated to indoor household tasks [44,21,33]. While some simulation platforms primarily support only one or a Figure 1: TongSIM, a simulation platform for general-purpose embodied AI agent training and evaluation. We provide diverse high-fidelity indoor and outdoor scenes that suit a large range of tasks, as well as multiple embodiments, including not only human-like figures but also robotic ones. few categories of embodied AI tasks, the scope of supported task types is observably increasing [49,34,23].

Against this backdrop, a general-purpose simulation platform that can support both low-level tasks, such as embodied robot training, and high-level tasks, such as single-agent and even multi-agent social simulations, is essential. Such a platform can provide a highly consistent training environment for general-purpose embodied agents, enabling researchers from different fields to train and test models, and even develop new tasks, on the same platform. This shared foundation will help advance general-purpose embodied intelligence.

In this work, we present TongSIM, a high-fidelity, universal embodied intelligence training and testing platform that supports complex indoor and outdoor scene simulation, as shown in Figure 1. On this platform, we have constructed a diverse range of indoor and outdoor scenes and, leveraging them, proposed a series of benchmarks. These benchmarks cover a wide range of agent capabilities, including perception, cognition, decision-making, human-robot cooperation, and spatial and social understanding. They span both low-level tasks (such as navigation) and high-abstraction tasks (such as multi-agent games), forming a comprehensive evaluation system that covers the entire spectrum [31]. Users can select individual benchmarks to train or test specialized agents, or combine them to train or test general-purpose agents. Specifically, we propose 5 categories of benchmarking tasks: single-agent tasks, multi-agent tasks, human-robot interaction tasks, primary family composite tasks, and advanced family composite tasks.

Progress in Embodied AI is inextricably tied to developments in simulation technologies and evaluation benchmarks. High-fidelity simulation environments offer low-cost, safe, and reproducible training platforms for agents, while diverse benchmarks drive the evolution of these agents from foundational visual navigation to complex, long-horizon task execution.

The evolution of simulation platforms outlines a clear technical trajectory: transitioning from static, visually faithful environments designed for navigation, to physics-rich interactive worlds supporting fine-grained manipulation, and currently advancing towards generative, open-ended ecosystems powered by AI, aiming to reconcile the trade-off between training scalability and physical realism.

Table 1: Comparison of embodied AI simulators. We compare TongSIM (Ours) with state-of-the-art platforms across simulation engines, asset diversity, platform features, and supported tasks. TongSIM possesses the common features of current state-of-the-art simulators, and it stands out in city-level interaction, task-oriented fidelity, and the variety of the supported tasks.

The Habitat platform [38] epitomizes high-efficiency and large-scale simulation. Comprising the high-performance 3D simulator Habitat-Sim and the embodied reinforcement learning (RL) framework Habitat-Lab, its core advantage lies in extreme rendering throughput. Capable of running in parallel at thousands of frames per second, it significan

…(Full text truncated)…

This content is AI-processed based on ArXiv data.