SimpleCall: A Lightweight Image Restoration Agent in Label-Free Environments with MLLM Perceptual Feedback

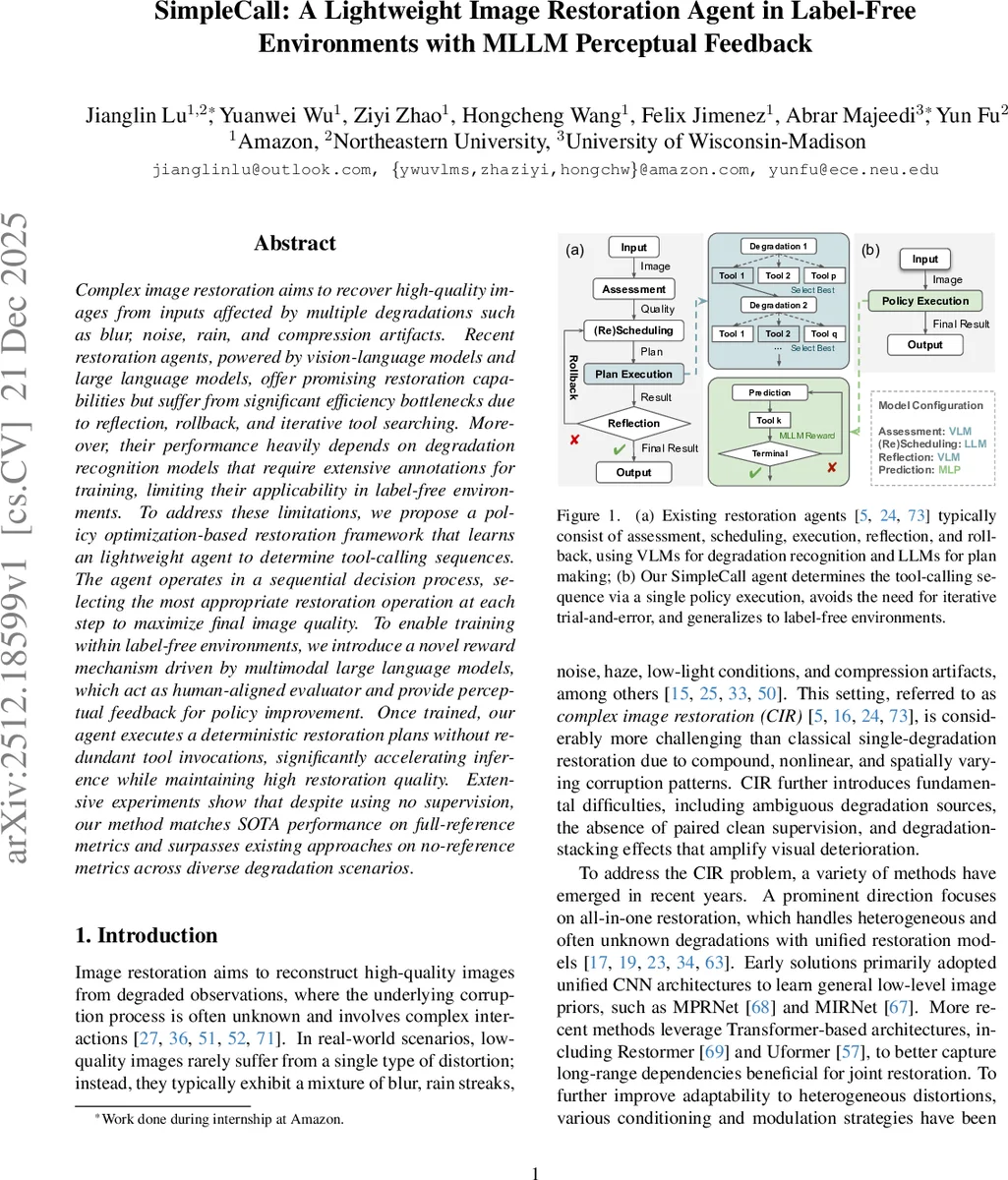

Complex image restoration aims to recover high-quality images from inputs affected by multiple degradations such as blur, noise, rain, and compression artifacts. Recent restoration agents, powered by vision-language models and large language models, offer promising restoration capabilities but suffer from significant efficiency bottlenecks due to reflection, rollback, and iterative tool searching. Moreover, their performance heavily depends on degradation recognition models that require extensive annotations for training, limiting their applicability in label-free environments. To address these limitations, we propose a policy optimization-based restoration framework that learns an lightweight agent to determine tool-calling sequences. The agent operates in a sequential decision process, selecting the most appropriate restoration operation at each step to maximize final image quality. To enable training within label-free environments, we introduce a novel reward mechanism driven by multimodal large language models, which act as human-aligned evaluator and provide perceptual feedback for policy improvement. Once trained, our agent executes a deterministic restoration plans without redundant tool invocations, significantly accelerating inference while maintaining high restoration quality. Extensive experiments show that despite using no supervision, our method matches SOTA performance on full-reference metrics and surpasses existing approaches on no-reference metrics across diverse degradation scenarios.

💡 Research Summary

SimpleCall tackles the challenging problem of complex image restoration (CIR), where a single degraded photograph may suffer from multiple, intertwined degradations such as blur, noise, rain streaks, haze, low‑light conditions, and compression artifacts. Traditional all‑in‑one restoration networks attempt to learn a universal mapping but often struggle with the diversity and non‑linear interactions of real‑world degradations. Recent agentic approaches have introduced large vision‑language models (VLMs) and large language models (LLMs) to recognize degradations, plan a sequence of specialized restoration tools, and iteratively refine the output. While promising, these methods suffer from two critical drawbacks: (1) they require costly iterative loops involving reflection, rollback, and tool re‑search, leading to high inference latency; (2) they depend on supervised degradation labels or optimal tool‑calling trajectories, which are unavailable in label‑free real‑world settings.

SimpleCall proposes a fundamentally different solution by casting CIR as a sequential decision‑making problem solved via reinforcement learning (RL). The core idea is to learn a lightweight policy (the “agent”) that directly predicts the optimal tool‑calling sequence in a single forward pass, without any external degradation recognizer or trial‑and‑error loops. The agent follows an actor‑critic architecture: a compact vision encoder extracts visual features from the current image state, which are concatenated with a record of previously taken actions and fed into a small multilayer perceptron (MLP) policy network (the actor). The critic network estimates the state‑value to compute advantage estimates for policy updates.

Training proceeds in a fully label‑free environment. After the agent selects a tool, the environment applies it to the image and then evaluates the restored result using a multimodal large language model (MLLM) such as LLaVA‑Llama3 or Qwen2‑VL. The MLLM receives a natural‑language prompt (e.g., “Describe how the image quality changed compared to the original degraded input”) and generates a textual assessment. This assessment is mapped to a scalar reward that reflects human‑aligned perceptual quality. Because the reward is derived from language understanding rather than ground‑truth pixel differences, no paired clean images or degradation annotations are required.

Policy optimization follows a PPO‑style clipped surrogate objective to keep updates stable, with generalized advantage estimation (GAE) for low‑variance advantage computation and an entropy bonus to encourage exploration. The overall loss combines the clipped policy loss, a value‑function loss, and the entropy term. During training, actions are sampled from the stochastic policy to explore diverse tool sequences; during inference, the agent deterministically picks the highest‑probability tool at each step, producing a fixed restoration pipeline.

The resulting inference pipeline is dramatically more efficient: a single deterministic pass replaces the multiple reflection‑rollback cycles of prior agents, and the total parameter count is reduced to 0.28 B (≈20–30× smaller than the 7–8 B models used in earlier works). Experiments on several benchmark datasets with mixed degradations (e.g., DIV2K, RealSR, Rain100H) demonstrate that SimpleCall, despite being trained without any supervision, attains full‑reference performance (PSNR, SSIM, LPIPS) comparable to state‑of‑the‑art supervised agents, and it surpasses them on no‑reference quality metrics (MANIQA, CLIP‑IQA, MUSIQ, DeQA). Moreover, inference time drops to roughly 0.12 seconds for 256×256 images, confirming the practical viability of the approach.

In summary, SimpleCall makes three key contributions: (1) it introduces the first label‑free, RL‑based framework for complex image restoration; (2) it designs a lightweight actor‑critic agent that eliminates costly iterative tool searches; (3) it leverages MLLMs as human‑aligned perceptual evaluators to generate rewards, enabling supervision‑free policy learning. By unifying these ideas, the paper establishes a new paradigm that combines high restoration quality with real‑time efficiency, opening the door for deployment of sophisticated restoration agents in truly unlabeled, real‑world environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment