EIRES: Training-free AI-Generated Image Detection via Edit-Induced Reconstruction Error Shift

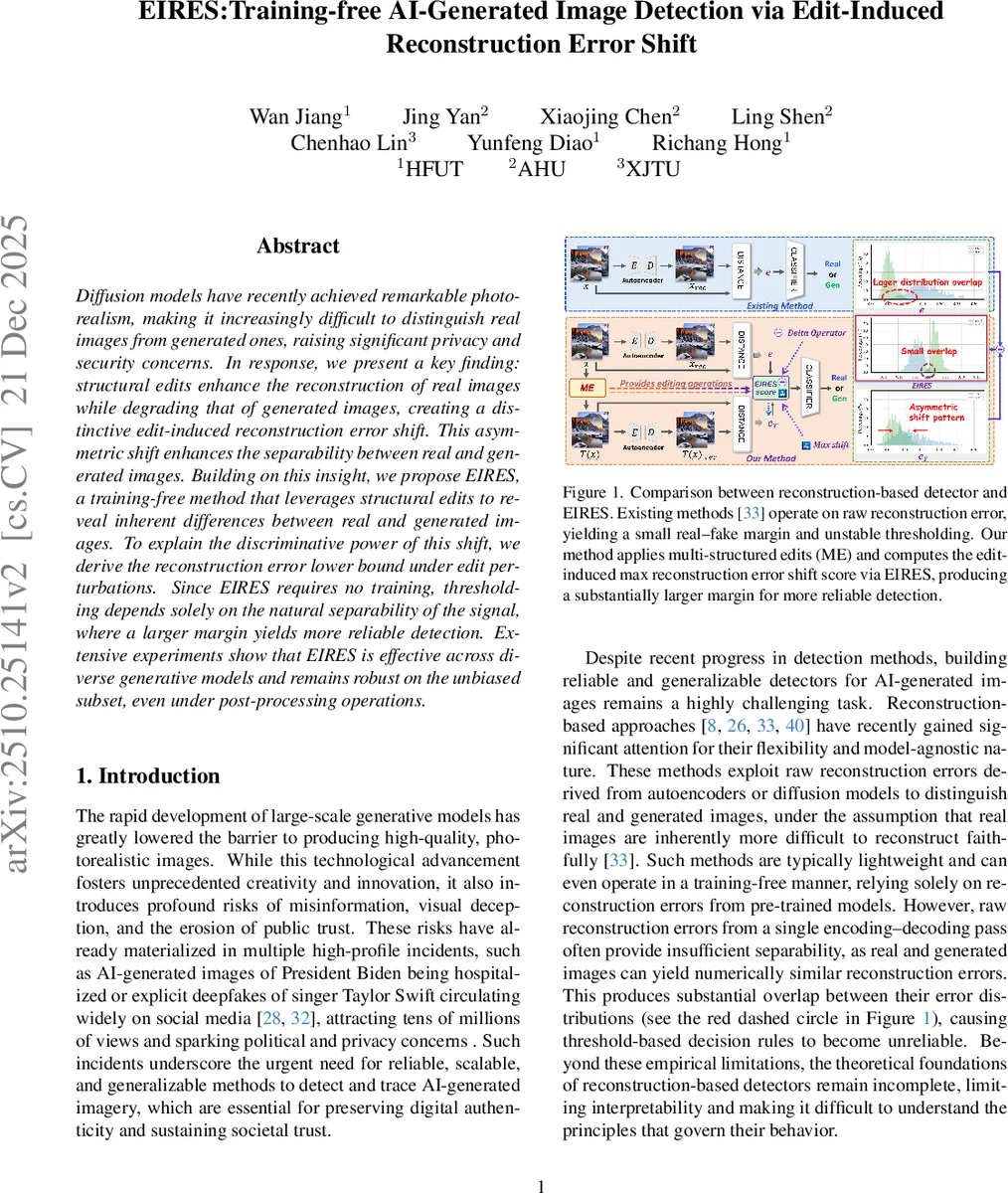

Diffusion models have recently achieved remarkable photorealism, making it increasingly difficult to distinguish real images from generated ones and raising significant privacy and security concerns. In response, we present a key finding: structural edits enhance the reconstruction of real images while degrading that of generated images, creating a distinctive edit-induced reconstruction error shift. This asymmetric shift enhances the separability between real and generated images. Building on this insight, we propose EIRES, a training-free method that leverages structural edits to reveal inherent differences between real and generated images. To explain the discriminative power of this shift, we derive a lower bound on the reconstruction error under edit perturbations. Since EIRES requires no training, thresholding depends solely on the natural separability of the signal, where a larger margin yields more reliable detection. Extensive experiments show that EIRES is effective across diverse generative models and remains robust on the unbiased subset, even under post-processing operations.

💡 Research Summary

The paper addresses the increasingly difficult problem of distinguishing real photographs from images generated by modern diffusion models, which have reached near‑photorealistic quality. Existing reconstruction‑based detectors rely on a single pass through a pre‑trained encoder‑decoder (or auto‑encoder) and measure raw reconstruction error (often using LPIPS). However, the error distributions of real and synthetic images heavily overlap, making threshold selection unstable, and the theoretical foundations of these methods are weak.

EIRES (Edit-Induced Reconstruction Error Shift) is a training-free, model-agnostic framework that actively probes reconstruction behavior by applying a set of structured edits to the input image before reconstruction. The edits are semantically meaningful transformations that preserve the overall layout while locally modifying content: (1) Add, which inserts an object; (2) Erase, which removes a region; and (3) SemR, semantic-guided regeneration. A Multi-Edit (ME) module applies all $K$ edits, producing edited variants $x_{T_k}$. For each edit, the change in reconstruction error $\Delta_k(x)=e(x_{T_k})-e(x)$ is computed, where $e(\cdot)$ is the LPIPS distance between an image and its reconstruction. The EIRES score is the maximum shift across all edits: $\text{score}(x)=\max_k \Delta_k(x)$. A single threshold $\tau$, calibrated on a small validation set of real images, separates the two classes. Because edits lower the reconstruction error of real images and raise that of generated ones, generated images fall above the threshold ($\text{score}>\tau$) and real images at or below it ($\text{score}\le\tau$).
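The score computation described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function names `edit_shifts` and `eires_score` are hypothetical, and the caller is assumed to supply the edit operations and a reconstruction-error function (for example, LPIPS between an image and its auto-encoder reconstruction). Which side of $\tau$ is labeled real is left to the caller.

```python
from typing import Callable, List, Sequence, TypeVar

# "Image" is a placeholder type: any object the supplied callables accept.
Image = TypeVar("Image")


def edit_shifts(
    x: Image,
    edits: Sequence[Callable[[Image], Image]],
    recon_error: Callable[[Image], float],
) -> List[float]:
    """Return Delta_k(x) = e(T_k(x)) - e(x) for every edit T_k."""
    base_error = recon_error(x)  # e(x): error of the unedited image
    return [recon_error(edit(x)) - base_error for edit in edits]


def eires_score(
    x: Image,
    edits: Sequence[Callable[[Image], Image]],
    recon_error: Callable[[Image], float],
) -> float:
    """Return score(x) = max_k Delta_k(x)."""
    shifts = edit_shifts(x, edits, recon_error)
    if not shifts:
        raise ValueError("at least one edit is required")
    return max(shifts)


if __name__ == "__main__":
    # Toy stand-ins (assumptions for illustration only): an "image" is a
    # float, its "reconstruction error" is its absolute value, and the
    # two "edits" are simple arithmetic maps.
    toy_edits = [lambda v: v + 0.5, lambda v: v * 0.5]
    print(edit_shifts(0.25, toy_edits, abs))  # [0.5, -0.125]
    print(eires_score(0.25, toy_edits, abs))  # 0.5
```

Taking the maximum means a single edit with a large shift is enough to drive the score, so the result depends on the most responsive edit, not on the average over all $K$.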

The authors provide a geometric analysis to explain why this maximum shift is highly discriminative. They model the reconstruction manifold $\mathcal{M}=\{D(z)\mid z\sim p_z\}$ induced by the decoder $D$. Real images typically lie off the manifold and carry a normal residual $\varepsilon^\perp = x - P_{\mathcal{M}}(x)$ that is orthogonal to the tangent space at the nearest point $P_{\mathcal{M}}(x)$. Generated images lie on or near $\mathcal{M}$ and have negligible residuals. Proposition 1 derives a lower bound on the raw reconstruction error in terms of the residual norm $\|\varepsilon^\perp\|$ and the decoder's condition number $\kappa_D$.
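To make the normal residual concrete, the following toy sketch computes $\varepsilon^\perp = x - P_{\mathcal{M}}(x)$ for the simplest possible case. It assumes a linear "decoder" whose manifold is a line through the origin spanned by a direction `d`; this is purely an illustrative assumption, since a real decoder manifold is nonlinear, and the sketch does not reproduce Proposition 1. All function names are hypothetical.

```python
import math
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean inner product."""
    return sum(ai * bi for ai, bi in zip(a, b))


def project_onto_line(x: Sequence[float], d: Sequence[float]) -> List[float]:
    """Orthogonal projection of x onto the line {z * d : z real}."""
    scale = dot(x, d) / dot(d, d)
    return [scale * di for di in d]


def normal_residual(x: Sequence[float], d: Sequence[float]) -> List[float]:
    """Residual x - P(x), which is orthogonal to the direction d."""
    projection = project_onto_line(x, d)
    return [xi - pi for xi, pi in zip(x, projection)]


def norm(v: Sequence[float]) -> float:
    """Euclidean norm."""
    return math.sqrt(dot(v, v))


if __name__ == "__main__":
    d = [1.0, 0.0]             # toy manifold: the horizontal axis
    off_manifold = [2.0, 3.0]  # plays the role of a real image
    on_manifold = [2.0, 0.0]   # plays the role of a generated image
    print(norm(normal_residual(off_manifold, d)))  # 3.0
    print(norm(normal_residual(on_manifold, d)))   # 0.0
```

In this toy setting the off-manifold point keeps a residual of norm 3 while the on-manifold point has none, mirroring the contrast the analysis draws between real and generated images.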
