A Plug-and-Play Method for Improving Imperceptibility and Capacity in Practical Generative Text Steganography

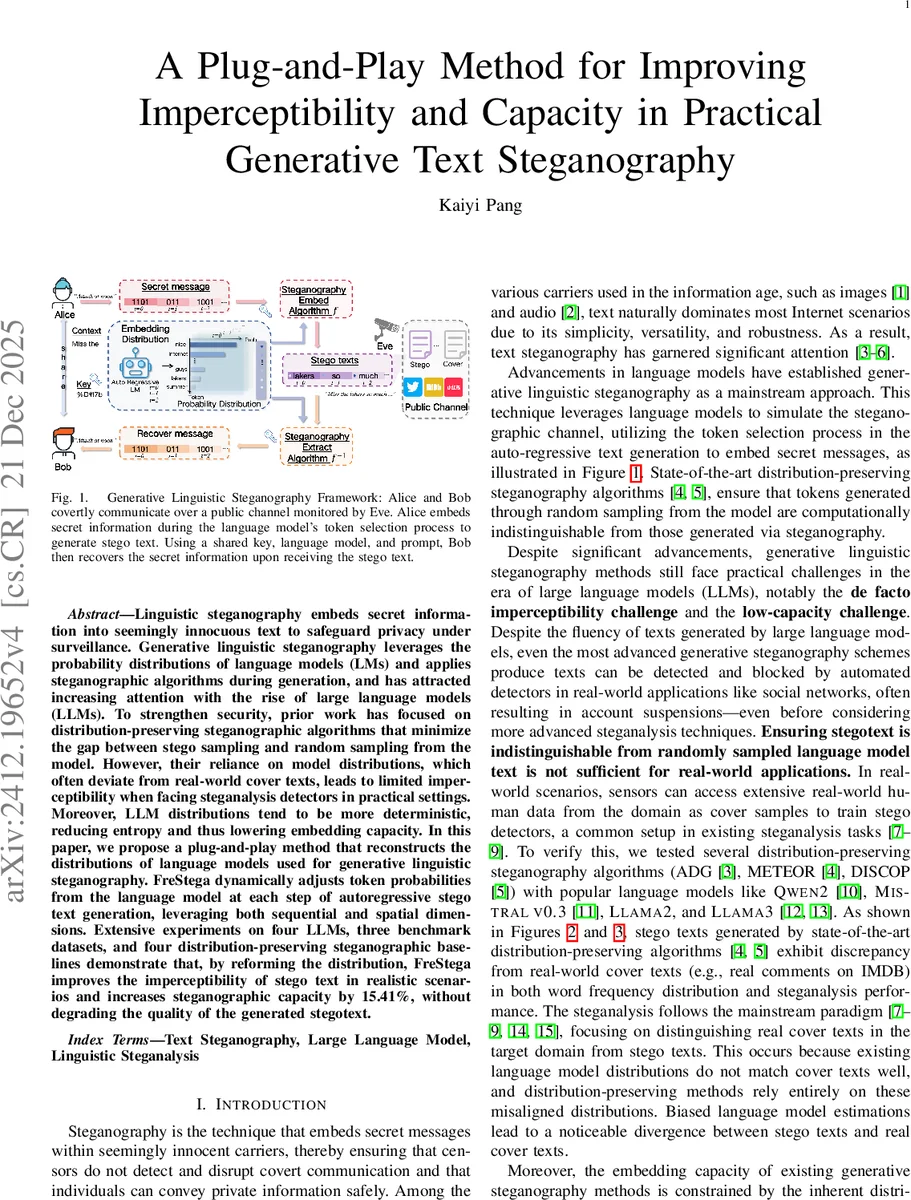

Linguistic steganography embeds secret information into seemingly innocuous text to safeguard privacy under surveillance. Generative linguistic steganography leverages the probability distributions of language models (LMs) and applies steganographic algorithms during generation, and has attracted increasing attention with the rise of large language models (LLMs). To strengthen security, prior work has focused on distribution-preserving steganographic algorithms that minimize the gap between stego sampling and random sampling from the model. However, their reliance on model distributions, which often deviate from real-world cover texts, leads to limited imperceptibility when facing steganalysis detectors in practical settings. Moreover, LLM distributions tend to be more deterministic, reducing entropy and thus lowering embedding capacity. In this paper, we propose a plug-and-play method that reconstructs the distributions of language models used for generative linguistic steganography. FreStega dynamically adjusts token probabilities from the language model at each step of autoregressive stego text generation, leveraging both sequential and spatial dimensions. Extensive experiments on four LLMs, three benchmark datasets, and four distribution-preserving steganographic baselines demonstrate that, by reforming the distribution, FreStega improves the imperceptibility of stego text in realistic scenarios and increases steganographic capacity by 15.41%, without degrading the quality of the generated stegotext.

💡 Research Summary

The paper addresses two practical shortcomings of current generative text steganography that rely on large language models (LLMs): (1) limited imperceptibility in real‑world settings because the probability distribution of an LLM often diverges from the distribution of genuine cover texts, and (2) reduced embedding capacity caused by the low entropy of LLM outputs, which become overly deterministic after reinforcement‑learning‑from‑human‑feedback (RLHF) fine‑tuning. Existing distribution‑preserving steganographic algorithms (e.g., ADG, METEOR, DISCOP) attempt to keep the stego sampling distribution close to the model’s own distribution, but this strategy fails when the model’s distribution itself is mismatched with the target domain. Consequently, stego texts are easily distinguished by modern steganalysis detectors trained on real‑world corpora (e.g., IMDB reviews, Shakespeare plays), and the amount of secret bits that can be embedded per token remains low.

To overcome these issues, the authors propose FreStega, a plug‑and‑play, model‑agnostic method that reforms the language model’s token probability distribution at generation time. FreStega operates along two complementary dimensions:

- Sequential Adjustment – At each generation step t, the instantaneous entropy (E_t = -\sum_{k} p(w_k|x_{0:t-1})\log p(w_k|x_{0:t-1})) is computed. A temperature function

\

Comments & Academic Discussion

Loading comments...

Leave a Comment