📝 Original Info

- Title: Controllable Probabilistic Forecasting with Stochastic Decomposition Layers

- ArXiv ID: 2512.18815

- Date: 2025-12-21

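- Authors: John S. Schreck, William E. Chapman, Charlie Becker, David John Gagne II, Dhamma Kimpara, Nihanth Cherukuru, Judith Berner, Kirsten J. Mayer, Negin Sobhani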

📝 Abstract

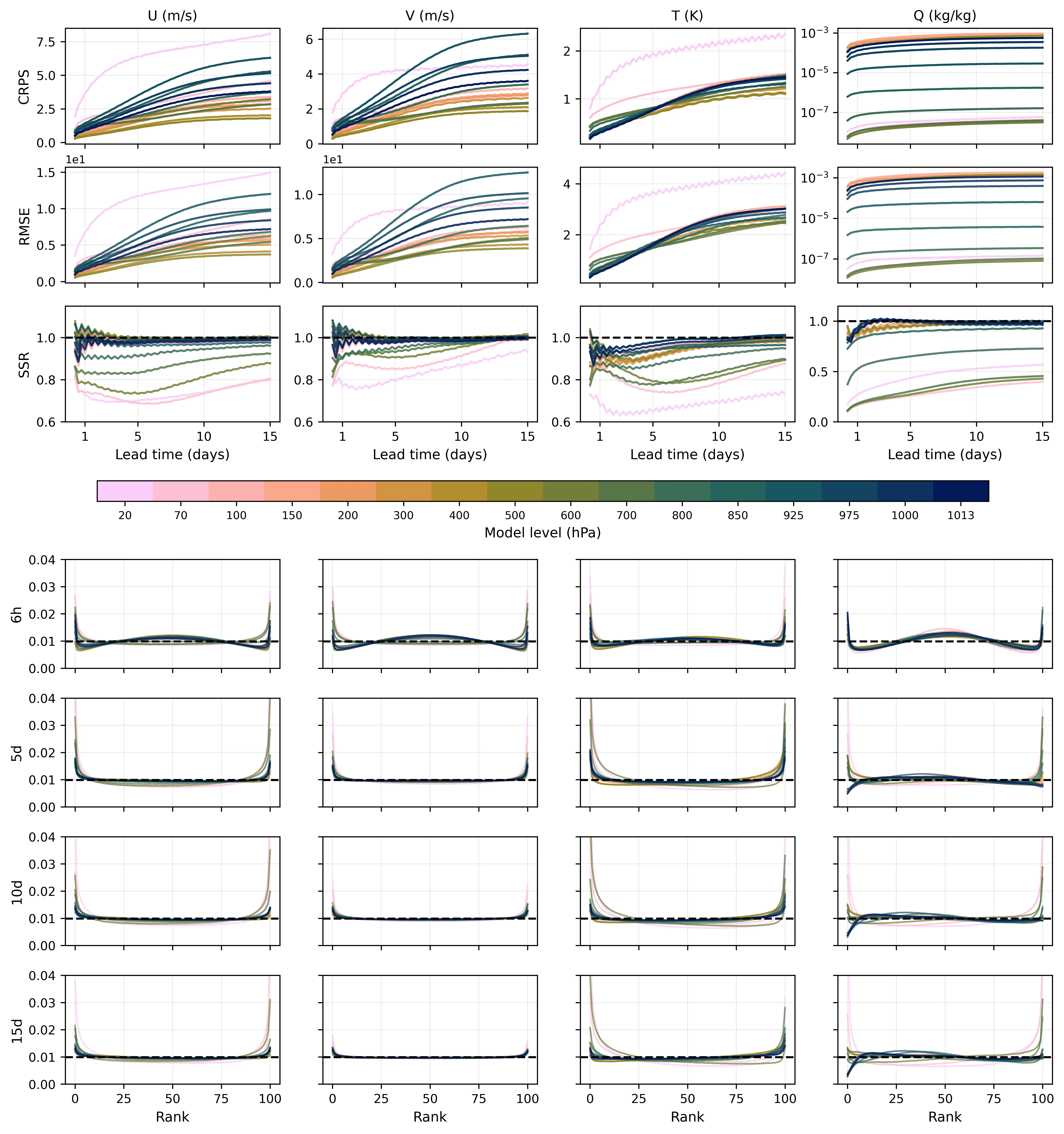

AI weather prediction ensembles with latent noise injection and optimized with the continuous ranked probability score (CRPS) have produced both accurate and well-calibrated predictions with far less computational cost compared with diffusion-based methods. However, current CRPS ensemble approaches vary in their training strategies and noise injection mechanisms, with most injecting noise globally throughout the network via conditional normalization. This structure increases training expense and limits the physical interpretability of the stochastic perturbations. We introduce Stochastic Decomposition Layers (SDL) for converting deterministic machine learning weather models into probabilistic ensemble systems. Adapted from StyleGAN's hierarchical noise injection, SDL applies learned perturbations at three decoder scales through latent-driven modulation, per-pixel noise, and channel scaling. When applied to WXFormer via transfer learning, SDL requires less than 2% of the computational cost needed to train the baseline model. Each ensemble member is generated from a compact latent tensor (5 MB), enabling perfect reproducibility and post-inference spread adjustment through latent rescaling. Evaluation on 2022 ERA5 reanalysis shows ensembles with spread-skill ratios approaching unity and rank histograms that progressively flatten toward uniformity through medium-range forecasts, achieving calibration competitive with operational IFS-ENS. Multi-scale experiments reveal hierarchical uncertainty: coarse layers modulate synoptic patterns while fine layers control mesoscale variability. The explicit latent parameterization provides interpretable uncertainty quantification for operational forecasting and climate applications.


📄 Full Content

Controllable Probabilistic Forecasting with Stochastic Decomposition Layers

John S. Schreck¹,∗, William E. Chapman², Charlie Becker¹, David John Gagne II¹, Dhamma Kimpara¹, Nihanth Cherukuru¹, Judith Berner¹, Kirsten J. Mayer¹, Negin Sobhani¹

¹ NSF National Center for Atmospheric Research, Boulder, CO, USA

² Atmospheric and Oceanic Sciences Department, University of Colorado, Boulder, CO, USA

∗ Corresponding author: schreck@ucar.edu

December 23, 2025

Abstract

AI weather prediction ensembles with latent noise injection and optimized with the continuous ranked probability score (CRPS) have produced both accurate and well-calibrated predictions with far less computational cost compared with diffusion-based methods. However, current CRPS ensemble approaches vary in their training strategies and noise injection mechanisms, with most injecting noise globally throughout the network via conditional normalization. This structure increases training expense and limits the physical interpretability of the stochastic perturbations. We introduce Stochastic Decomposition Layers (SDL) for converting deterministic machine learning weather models into probabilistic ensemble systems. Adapted from StyleGAN’s hierarchical noise injection, SDL applies learned perturbations at three decoder scales through latent-driven modulation, per-pixel noise, and channel scaling. When applied to WXFormer via transfer learning, SDL requires less than 2% of the computational cost needed to train the baseline model. Each ensemble member is generated from a compact latent tensor (5 MB), enabling perfect reproducibility and post-inference spread adjustment through latent rescaling. Evaluation on 2022 ERA5 reanalysis shows ensembles with spread-skill ratios approaching unity and rank histograms that progressively flatten toward uniformity through medium-range forecasts, achieving calibration competitive with operational IFS-ENS. Multi-scale experiments reveal hierarchical uncertainty: coarse layers modulate synoptic patterns while fine layers control mesoscale variability. The explicit latent parameterization provides interpretable uncertainty quantification for operational forecasting and climate applications.
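The two verification quantities named in the abstract, CRPS and the spread-skill ratio, can be made concrete with a minimal pure-Python sketch. This is not the authors' code. It assumes the standard ensemble estimator of CRPS, E|X − y| − ½E|X − X′|, and a spread-skill ratio that includes the common finite-ensemble factor sqrt((M+1)/M). The function names and the synthetic Gaussian test case are hypothetical, chosen only for illustration; the scoring conventions actually used in the paper (for example latitude weighting) are not given in this excerpt.

```python
import math
import random
import statistics


def crps_ensemble(members, obs):
    """Ensemble CRPS estimate for one scalar: E|X - y| - 0.5 * E|X - X'|."""
    m = len(members)
    # Mean absolute error of the members against the observation.
    skill = sum(abs(x - obs) for x in members) / m
    # Mean absolute difference over all member pairs (including i == j).
    spread = sum(abs(a - b) for a in members for b in members) / (m * m)
    return skill - 0.5 * spread


def spread_skill_ratio(ensembles, observations):
    """Root-mean ensemble variance over RMSE of the ensemble mean.

    Includes the finite-ensemble factor sqrt((M + 1) / M), so a
    statistically reliable ensemble gives a ratio close to 1.
    """
    n_cases = len(observations)
    n_members = len(ensembles[0])
    mean_variance = 0.0
    mean_sq_error = 0.0
    for members, obs in zip(ensembles, observations):
        mean_variance += statistics.variance(members) / n_cases
        mean_sq_error += (statistics.fmean(members) - obs) ** 2 / n_cases
    correction = math.sqrt((n_members + 1) / n_members)
    return correction * math.sqrt(mean_variance) / math.sqrt(mean_sq_error)


def synthetic_reliable_case(seed=0, n_cases=2000, n_members=10):
    """Hypothetical toy data: observation and members share one distribution."""
    rng = random.Random(seed)
    ensembles, observations = [], []
    for _ in range(n_cases):
        center = rng.gauss(0.0, 1.0)  # predictable part of the signal
        observations.append(center + rng.gauss(0.0, 1.0))
        ensembles.append([center + rng.gauss(0.0, 1.0) for _ in range(n_members)])
    return ensembles, observations


if __name__ == "__main__":
    ens, obs = synthetic_reliable_case()
    mean_crps = statistics.fmean(crps_ensemble(e, o) for e, o in zip(ens, obs))
    print("mean CRPS:", mean_crps)
    print("spread-skill ratio:", spread_skill_ratio(ens, obs))
```

For a single-member ensemble the spread term vanishes and the CRPS reduces to the absolute error, which is why CRPS is often described as a probabilistic generalization of mean absolute error. A ratio well below 1 would indicate an underdispersive ensemble, and a ratio above 1 an overdispersive one.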

arXiv:2512.18815v1 [cs.LG] 21 Dec 2025

1 Introduction

National Meteorological and Hydrological Services have increasingly incorporated ensemble forecasting methods to provide probabilistic predictions and quantify forecast uncertainty over the past 3 decades [1, 2]. Traditional ensemble numerical weather prediction (NWP) approaches perturb initial conditions and/or use perturbed model physics parameterizations [3–6], but these methods are computationally expensive, requiring multiple full model integrations. Operational NWP ensembles have a relatively small number of members, usually on the order of 30-50, and may run at a lower spatial resolution compared with deterministic flagship members [7]. Recent advances in machine learning for weather prediction [8–13] have demonstrated skill comparable to traditional numerical weather prediction models, yet extending these capabilities to probabilistic forecasting while respecting chaotic dynamics remains challenging.

The theoretical foundation for ensemble prediction stems from Lorenz’s discovery that the atmosphere is a chaotic system where small initial condition errors grow exponentially [14]. Quantitative estimates from early global circulation models established a practical deterministic predictability limit of roughly two weeks [15], later confirmed as a fundamental property of atmospheric dynamics [16–18]. Epstein and coauthors [19] proposed a stochastic-dynamic formulation of the primitive equations that predicted the mean and variance of each state variable but was viewed as computationally intractable at the time. Monte Carlo ensemble approximations [20] and optimization with least squares techniques [21] demonstrated that calibrated NWP ensembles could be produced and could improve on deterministic forecasts. In general, ensemble forecasting quantifies the fundamental predictability limitations of chaotic dynamics by sampling the phase space of possible atmospheric states, representing forecast uncertainty through trajectory divergence on the climate system’s attractor [2, 22].
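The exponential growth of small initial errors can be illustrated with a toy twin experiment. The sketch below is an assumption-laden illustration, not part of the paper: it uses the classic three-variable Lorenz (1963) system with its standard parameters as a stand-in for the atmosphere, a fourth-order Runge-Kutta integrator, and hypothetical function names. Two trajectories start 1e-8 apart, and their separation is tracked over time.

```python
import math


def lorenz63(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivative of the three-variable Lorenz (1963) system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = lorenz63(state)
    k2 = lorenz63(shifted(state, k1, dt / 2))
    k3 = lorenz63(shifted(state, k2, dt / 2))
    k4 = lorenz63(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def twin_separation(perturbation=1e-8, dt=0.01, n_steps=2500, spinup=1000):
    """Distance between a control run and a slightly perturbed twin run."""
    control = (1.0, 1.0, 1.0)
    # Spin up so the control trajectory settles onto the attractor.
    for _ in range(spinup):
        control = rk4_step(control, dt)
    # The twin differs from the control only by a tiny offset in x.
    twin = (control[0] + perturbation, control[1], control[2])
    distances = []
    for _ in range(n_steps):
        control = rk4_step(control, dt)
        twin = rk4_step(twin, dt)
        distances.append(math.dist(control, twin))
    return distances


if __name__ == "__main__":
    d = twin_separation()
    for step in (0, 500, 1000, 1500, 2000, 2499):
        print(f"t = {(step + 1) * 0.01:6.2f}  separation = {d[step]:.3e}")
```

In this toy setting the separation grows by many orders of magnitude before it saturates at roughly the size of the attractor, after which the two runs are no more alike than two random states. An ensemble generalizes the twin experiment by running many perturbed members and reading forecast uncertainty from their spread.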

Early ML weather models employed deterministic architectures trained with mean squared error (MSE) loss. When combined with multi-step training, these models produced overly smooth forecasts that underrepresented atmospheric variability. Generative modeling approaches, particularly diffusion-based weather models [23–26], address this limitation by iteratively denoising random fields to produce realistic ensemble members. However, diffusion-based weather models [26] require learning full denoising trajectories across hundreds of timesteps during training and at least 20-50 iterative refinement steps per forecast at inference, imposing substantial computational burden on operational sy

…(Full text truncated)…
