📝 Original Info

- Title: DASH: Deception-Augmented Shared Mental Model for a Human-Machine Teaming System

- ArXiv ID: 2512.18616

- Date: 2025-12-21

- Authors: Zelin Wan, Han Jun Yoon, Nithin Alluru, Terrence J. Moore, Frederica F. Nelson, Seunghyun Yoon, Hyuk Lim, Dan Dongseong Kim, Jin-Hee Cho

📝 Abstract

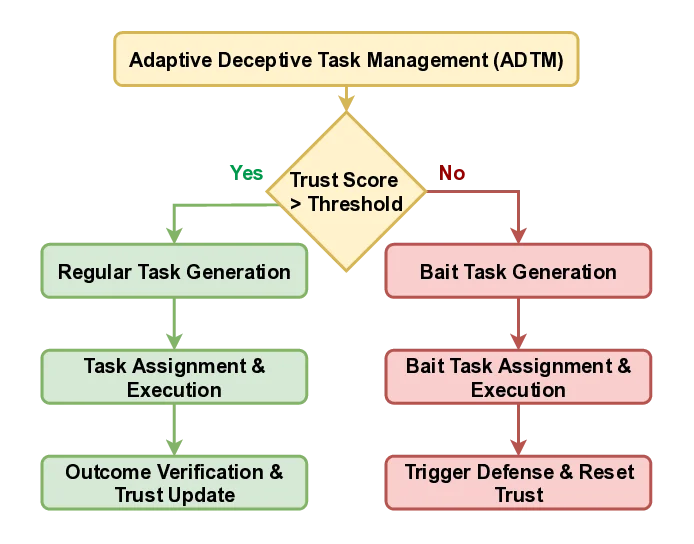

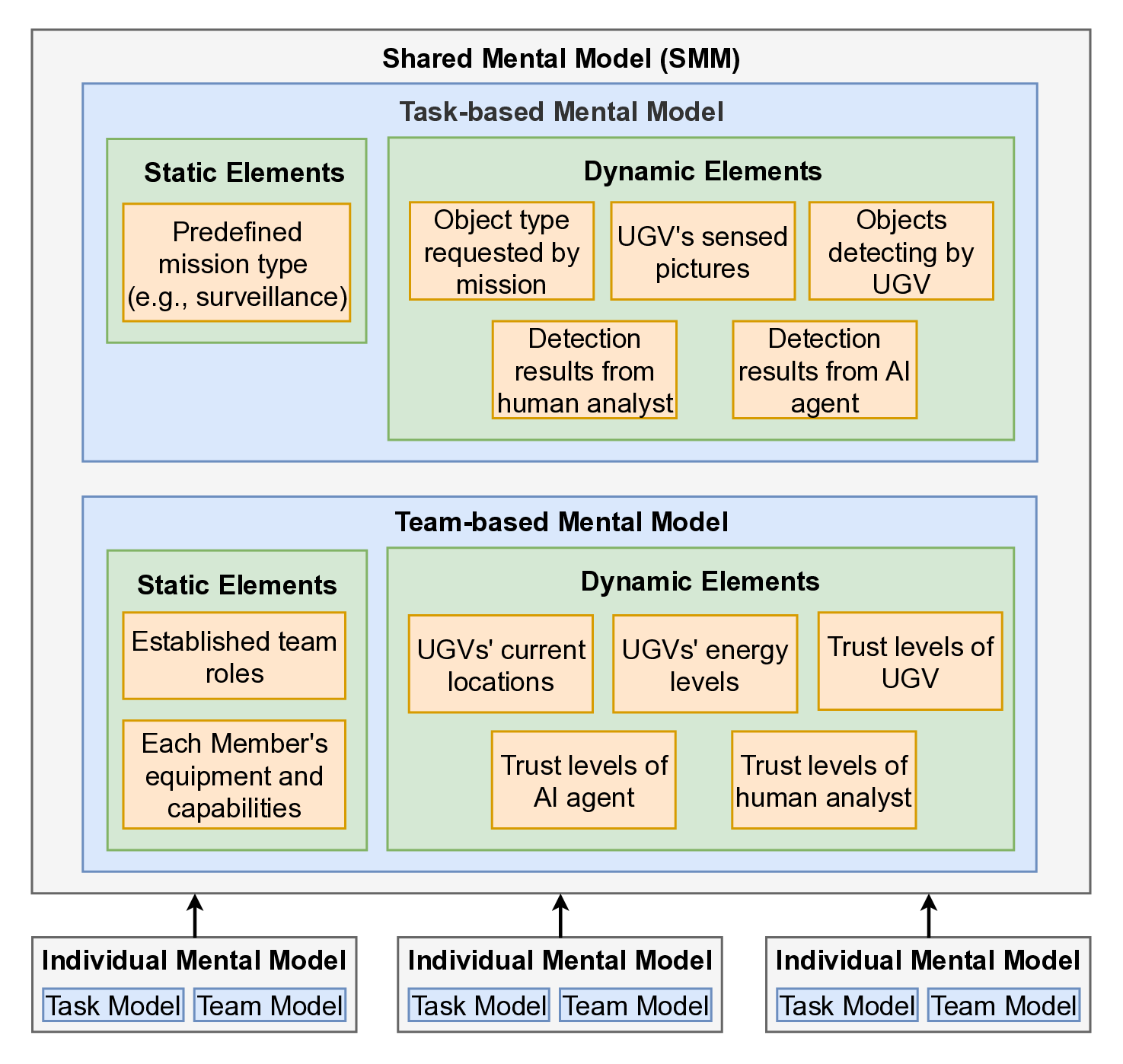

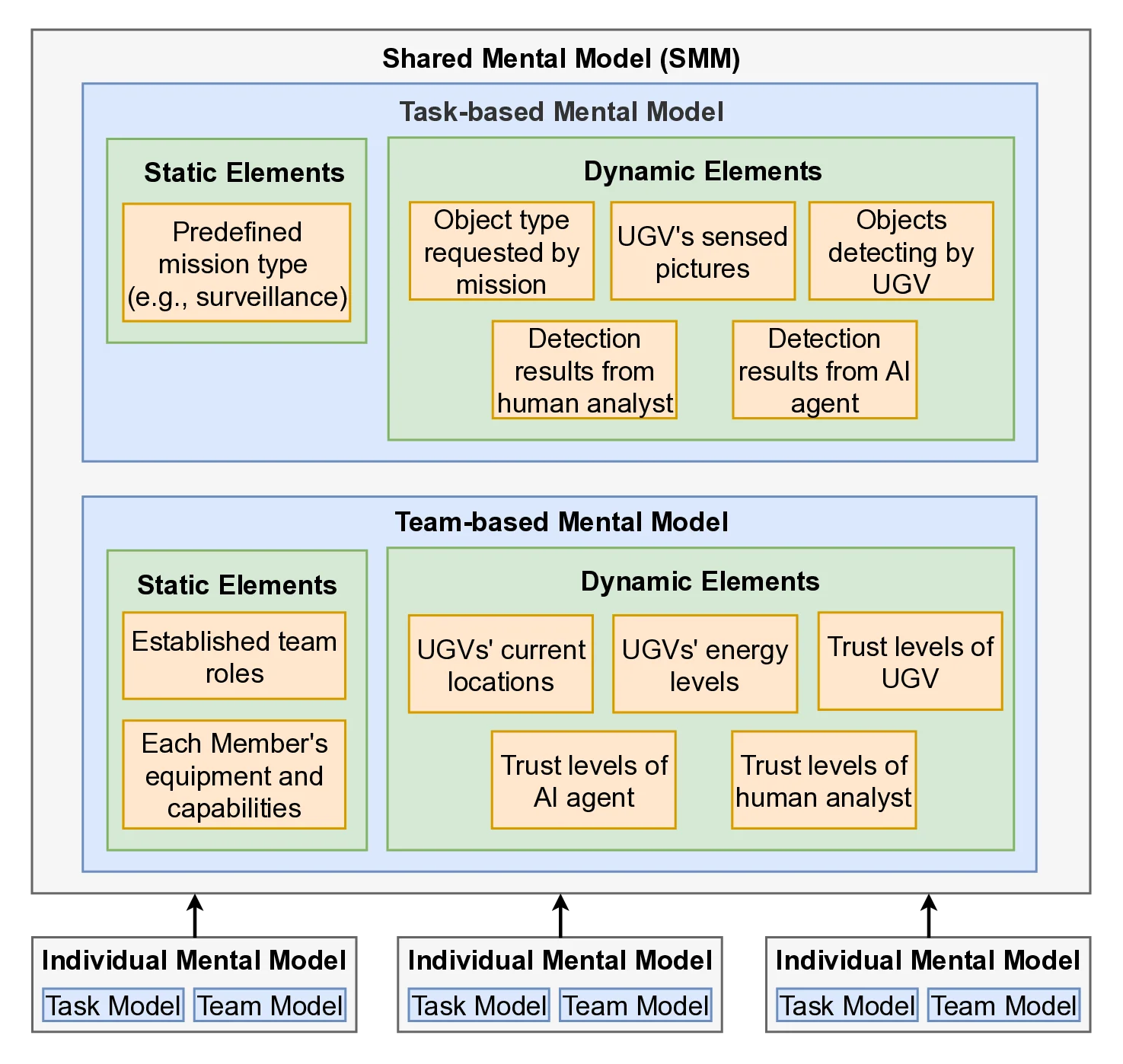

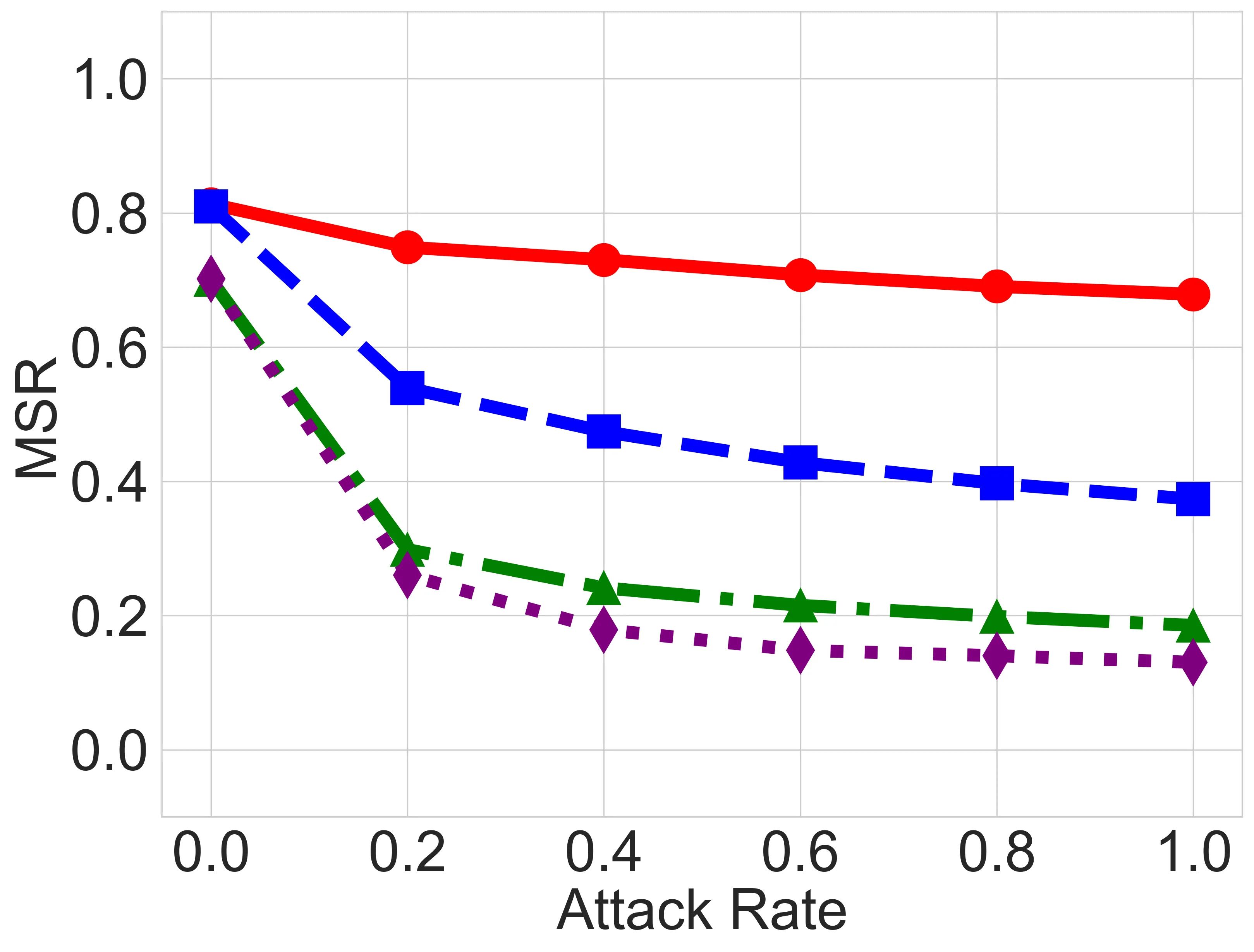

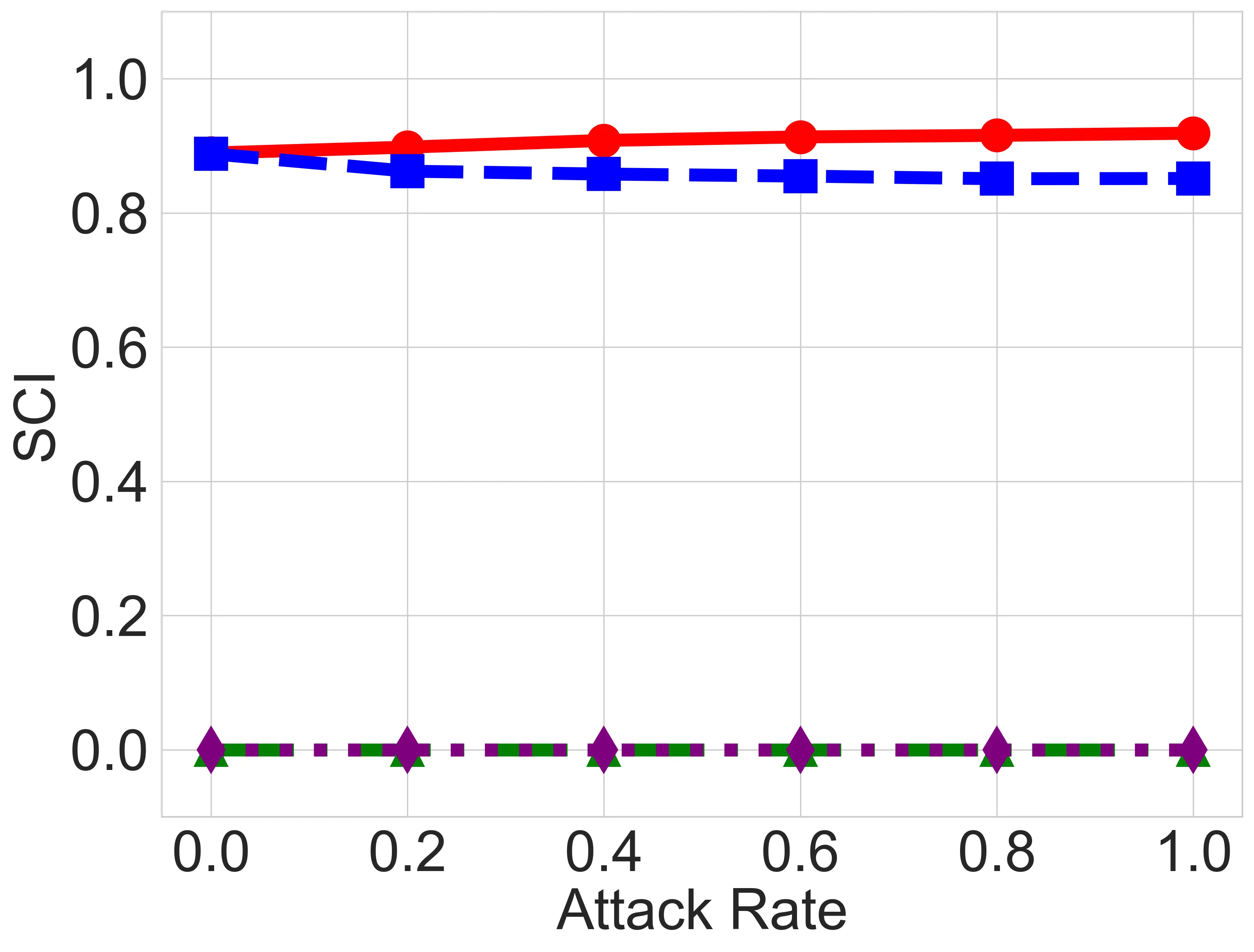

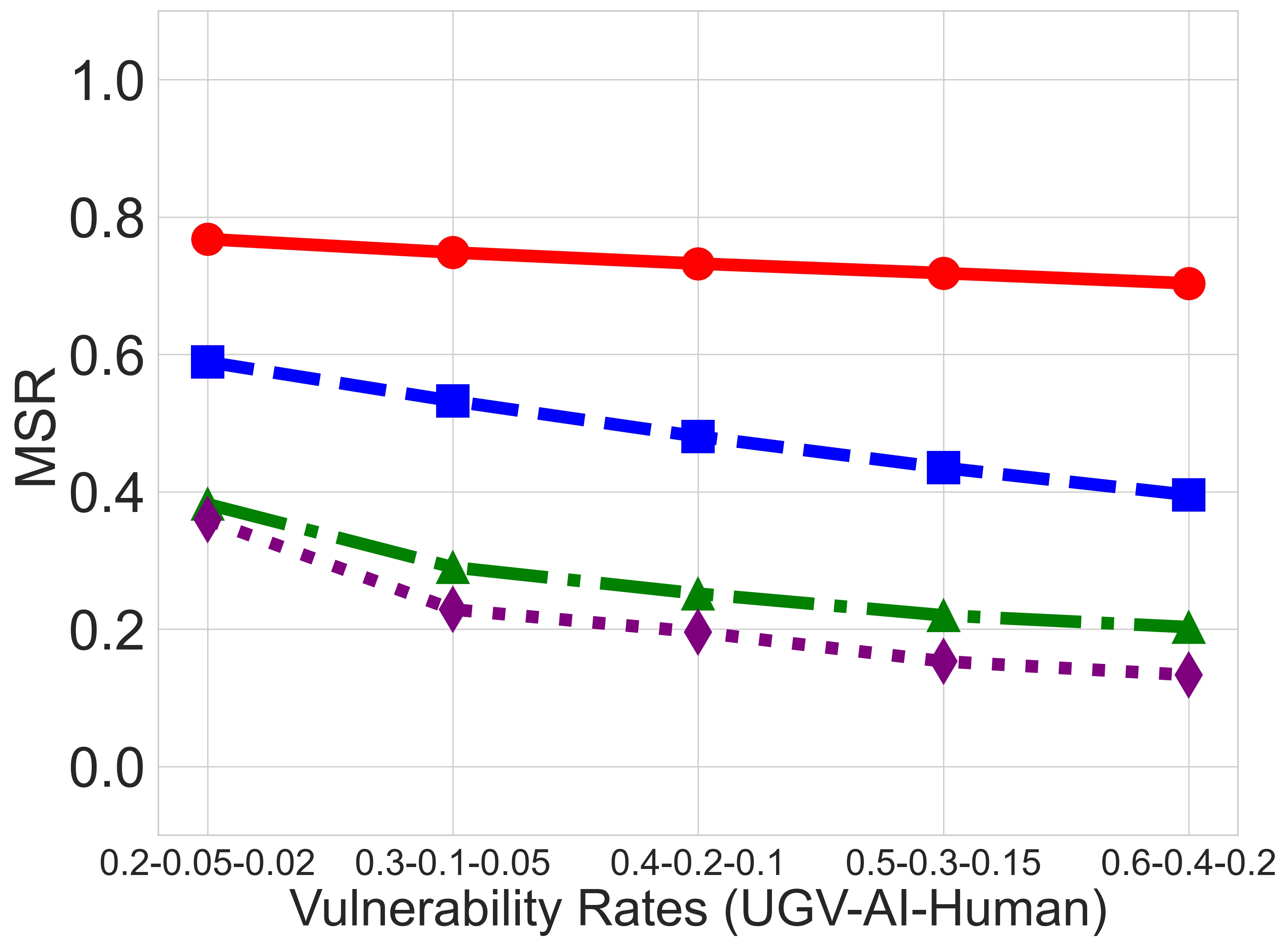

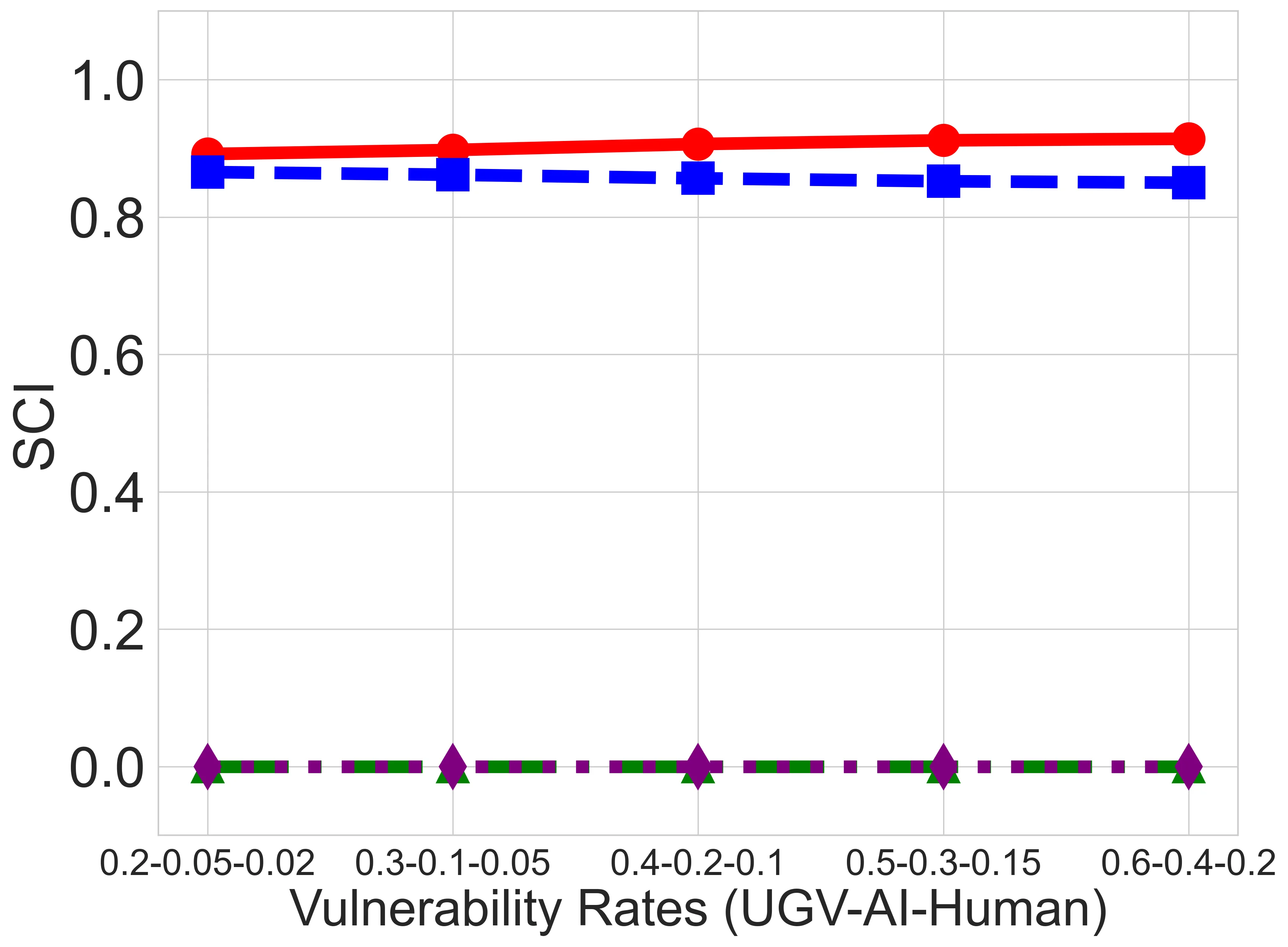

We present DASH (Deception-Augmented Shared mental model for Human-machine teaming), a novel framework that enhances mission resilience by embedding proactive deception into Shared Mental Models (SMM). Designed for mission-critical applications such as surveillance and rescue, DASH introduces "bait tasks" to detect insider threats, e.g., compromised Unmanned Ground Vehicles (UGVs), AI agents, or human analysts, before they degrade team performance. Upon detection, tailored recovery mechanisms are activated, including UGV system reinstallation, AI model retraining, or human analyst replacement. In contrast to existing SMM approaches that neglect insider risks, DASH improves both coordination and security. Empirical evaluations across four schemes (DASH, SMM-only, no-SMM, and baseline) show that DASH sustains approximately 80% mission success under high attack rates, eight times higher than the baseline. This work contributes a practical human-AI teaming framework grounded in shared mental models, a deception-based strategy for insider threat detection, and empirical evidence of enhanced robustness under adversarial conditions. DASH establishes a foundation for secure, adaptive human-machine teaming in contested environments.
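
The bait-task idea in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the class names, the `respond` behavior, the `"no-target"` answer, and the deterministic mismatch check are all assumptions made for illustration. Only the three member types and their recovery actions come from the abstract. Each team member receives a bait task whose correct answer is known in advance, and any member whose response deviates is flagged and mapped to its role-specific recovery action.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    UGV = "ugv"
    AI_AGENT = "ai_agent"
    HUMAN_ANALYST = "human_analyst"


# Recovery actions named in the abstract, keyed by member role.
RECOVERY = {
    Role.UGV: "system reinstallation",
    Role.AI_AGENT: "model retraining",
    Role.HUMAN_ANALYST: "analyst replacement",
}


@dataclass
class Member:
    name: str
    role: Role
    # Hidden ground truth, used here only to simulate behavior (hypothetical).
    compromised: bool = False

    def respond(self, expected: str) -> str:
        # Assumption: a compromised member returns a tampered result,
        # while an honest member returns the expected one.
        return "tampered" if self.compromised else expected


def run_bait_round(members, bait_expected="no-target"):
    """Give each member a bait task with a known answer and flag mismatches."""
    flagged = {}
    for member in members:
        if member.respond(bait_expected) != bait_expected:
            flagged[member.name] = RECOVERY[member.role]
    return flagged


team = [
    Member("ugv-1", Role.UGV, compromised=True),
    Member("ai-1", Role.AI_AGENT),
    Member("analyst-1", Role.HUMAN_ANALYST),
]
print(run_bait_round(team))  # {'ugv-1': 'system reinstallation'}
```

A realistic detector would likely be probabilistic, since a compromised member may behave correctly on some bait tasks and an honest one may occasionally err. The sketch shows only the control flow from bait task to detection to recovery.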

📄 Full Content

IEEE TRANSACTIONS ON HUMAN-MACHINE SYSTEMS, VOL. XX, NO. X, XXX 2025

DASH: Deception-Augmented Shared Mental Model for a Human-Machine Teaming System

Zelin Wan, Han Jun Yoon, Nithin Alluru, Terrence J. Moore, Frederica F. Nelson, Seunghyun Yoon, Hyuk Lim, Dan Dongseong Kim, and Jin-Hee Cho, Senior Member, IEEE

Abstract—We present DASH (Deception-Augmented Shared mental model for Human-machine teaming), a novel framework that enhances mission resilience by embedding proactive deception into Shared Mental Models (SMM). Designed for mission-critical applications such as surveillance and rescue, DASH introduces “bait tasks” to detect insider threats, e.g., compromised Unmanned Ground Vehicles (UGVs), AI agents, or human analysts, before they degrade team performance. Upon detection, tailored recovery mechanisms are activated, including UGV system reinstallation, AI model retraining, or human analyst replacement. In contrast to existing SMM approaches that neglect insider risks, DASH improves both coordination and security. Empirical evaluations across four schemes (DASH, SMM-only, no-SMM, and baseline) show that DASH sustains approximately 80% mission success under high attack rates, eight times higher than the baseline. This work contributes a practical human-AI teaming framework grounded in shared mental models, a deception-based strategy for insider threat detection, and empirical evidence of enhanced robustness under adversarial conditions. DASH establishes a foundation for secure, adaptive human-machine teaming in contested environments.

Index Terms—Human-machine teaming, shared mental model, cyber deception, unmanned ground vehicles, trust

I. INTRODUCTION

Why human-machine teaming (HMT) systems? As HMT systems are increasingly deployed in high-stakes operations, ensuring secure and efficient collaboration among human analysts, AI agents, and UGVs is critical [1]. A well-established Shared Mental Model (SMM) enhances coordination by synchronizing tasks, fosters verification and validation, promotes adaptation to dynamic conditions, and mitigates failures. Without an effective SMM, miscommunication, inefficient task allocation, and increased risks of mission failure arise [2]. Moreover, adversaries can exploit inconsistencies in trust and coordination, underscoring the need for a security-aware HMT framework that strengthens both collaboration and resilience.

This research is partially supported by the DEVCOM ARL Army Research Office (ARO) Award (W911NF-24-2-0241), the National Science Foundation (NSF) Secure and Trustworthy Cyberspace (SaTC) Award (2330940), and the Virtual Institutes for Cyber and Electromagnetic Spectrum Research and Employment (VICEROY) program under the Air Force Research Laboratory (AFRL) initiatives through The Griffiss Institute (419890). The views and conclusions contained in this document are those of the authors and should not be interpreted as representing the official policies, either expressed or implied, of the Army Research Laboratory or the U.S. Government. The U.S. Government is authorized to reproduce and distribute reprints for Government purposes, notwithstanding any copyright notation herein.

(Corresponding author: Zelin Wan). Zelin Wan, Han Jun Yoon, Nithin Alluru, and Jin-Hee Cho are with the Department of Computer Science, Virginia Tech, Arlington, VA, USA. Email: {zelin, godzmdi93, nithin, jicho}@vt.edu. Terrence J. Moore and Frederica F. Nelson are with the US Army DEVCOM Army Research Laboratory, Adelphi, MD, USA. Email: {terrence.j.moore.civ, frederica.f.nelson.civ}@army.mil. Seunghyun Yoon and Hyuk Lim are with the Korea Institute of Energy Technology (KENTECH), Naju-si, Jeollanam-do, Republic of Korea. Email: {syoon, hlim}@kentech.ac.kr. Dan Dongseong Kim is with the University of Queensland, Brisbane, Queensland, Australia. Email: dan.kim@uq.edu.au.

Why are SMMs critical? According to Cannon-Bowers’ team mental model framework [3, 4], an SMM establishes a shared understanding that enhances coherent task execution and adaptability. However, the application of SMMs in HMT systems remains underdeveloped [5]. Existing models [6, 7] fail to capture the dynamic interactions between human and AI teammates, and security remains largely unaddressed. Traditional static defenses [8] are insufficient against adversaries who manipulate trust dynamics or selectively target system components. To ensure mission integrity, a security-aware SMM is essential for proactively detecting and mitigating these evolving threats.

Cyber deception in HMT systems. Cyber deception has emerged as a promising proactive defense strategy [9], offering unique advantages in securing HMT systems. Unlike conventional security mechanisms that focus solely on perimeter defense or reactive threat detection [10, 11], cyber deception actively manipulates an adversary’s perception, inducing suboptimal decisions [9]. This is particularly valuable in HMT environments, where the diverse attack

…(Full text truncated)…
