Loom: Diffusion-Transformer for Interleaved Generation

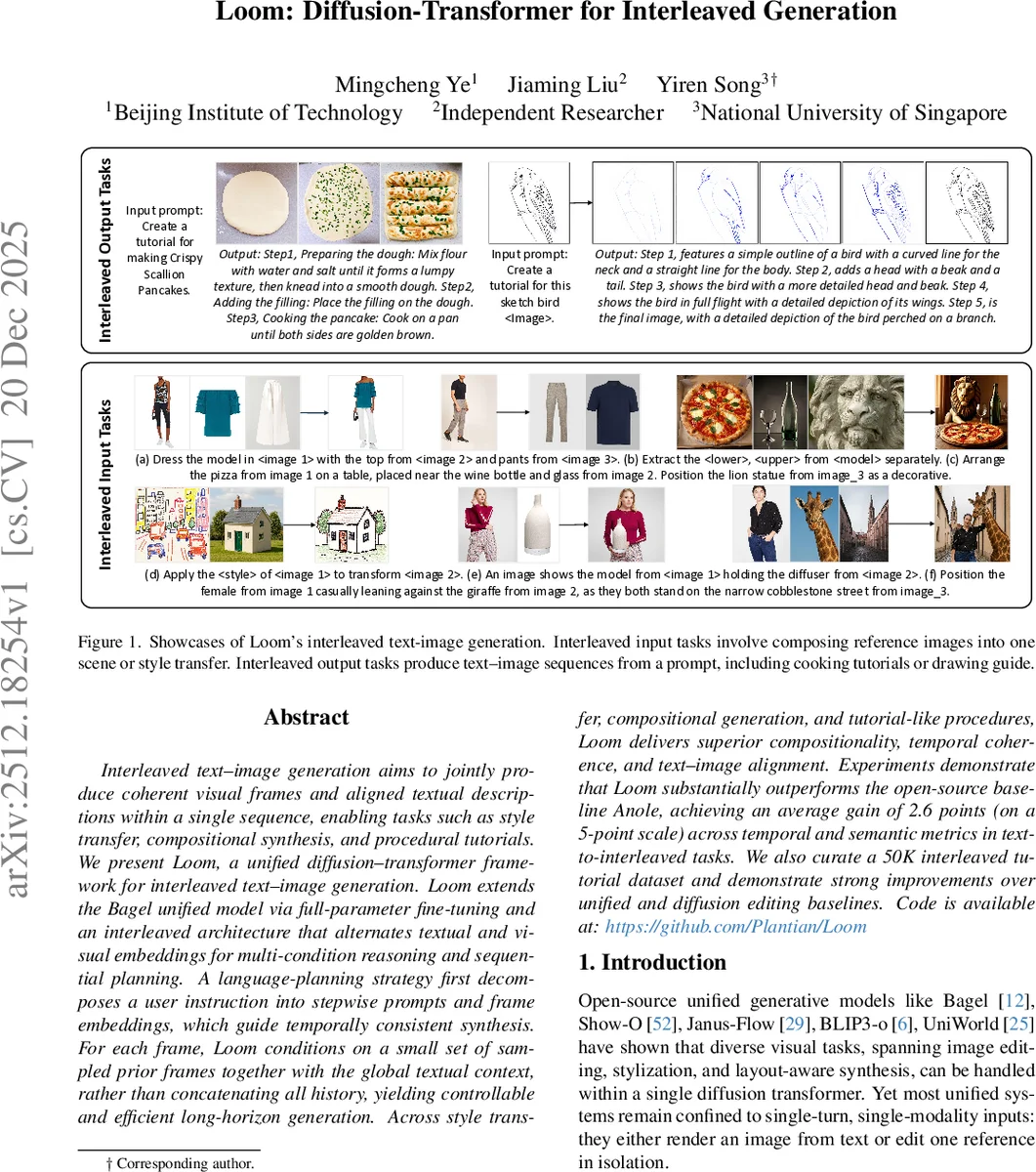

Interleaved text-image generation aims to jointly produce coherent visual frames and aligned textual descriptions within a single sequence, enabling tasks such as style transfer, compositional synthesis, and procedural tutorials. We present Loom, a unified diffusion-transformer framework for interleaved text-image generation. Loom extends the Bagel unified model via full-parameter fine-tuning and an interleaved architecture that alternates textual and visual embeddings for multi-condition reasoning and sequential planning. A language planning strategy first decomposes a user instruction into stepwise prompts and frame embeddings, which guide temporally consistent synthesis. For each frame, Loom conditions on a small set of sampled prior frames together with the global textual context, rather than concatenating all history, yielding controllable and efficient long-horizon generation. Across style transfer, compositional generation, and tutorial-like procedures, Loom delivers superior compositionality, temporal coherence, and text-image alignment. Experiments demonstrate that Loom substantially outperforms the open-source baseline Anole, achieving an average gain of 2.6 points (on a 5-point scale) across temporal and semantic metrics in text-to-interleaved tasks. We also curate a 50K interleaved tutorial dataset and demonstrate strong improvements over unified and diffusion editing baselines.

💡 Research Summary

Loom introduces a unified diffusion‑transformer architecture that can generate interleaved sequences of text and images within a single model. Building on the open‑source Bagel backbone, the authors fully fine‑tune all parameters and redesign the token stream so that textual and visual embeddings alternate, enabling the model to accept mixed‑modality inputs and produce mixed‑modality outputs. The core innovation is a “Planning‑First” strategy: given a complex user instruction, Loom first generates a complete textual plan (a list of stepwise prompts) in one autoregressive pass. Each step’s description is then used, together with a small, uniformly sampled subset of previously generated frames, to condition the diffusion process that creates the next image. This decouples high‑level semantic reasoning from low‑level pixel synthesis, reducing error propagation in long‑horizon tasks. Temporal embeddings are added to visual tokens to explicitly encode the position of each frame, further stabilizing sequential coherence. Sparse historical frame sampling keeps computational cost linear in a fixed budget Kmax while preserving global context. The training objective combines cross‑entropy loss for text prediction and mean‑squared error loss for latent‑space image denoising, allowing joint optimization of both modalities. To evaluate Loom, the authors curated a 50 K interleaved tutorial dataset covering cooking, drawing, and assembly workflows. Experiments on three task families—style transfer, compositional synthesis, and procedural tutorial generation—show that Loom outperforms the open‑source baseline Anole by an average of 2.6 points on a 5‑point scale across temporal consistency, semantic fidelity, and text‑image alignment metrics. Notably, Loom maintains high fidelity over sequences longer than ten steps and handles multiple reference images without the degradation seen in prior models. The paper releases code and data, providing a solid foundation for future research on multi‑turn, multi‑modal generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment