SG-RIFE: Semantic-Guided Real-Time Intermediate Flow Estimation with Diffusion-Competitive Perceptual Quality

Real-time Video Frame Interpolation (VFI) has long been dominated by flow-based methods like RIFE, which offer high throughput but often fail in complicated scenarios involving large motion and occlusion. Conversely, recent diffusion-based approaches (e.g., Consec. BB) achieve state-of-the-art perceptual quality but suffer from prohibitive latency, rendering them impractical for real-time applications. To bridge this gap, we propose Semantic-Guided RIFE (SG-RIFE). Instead of training from scratch, we introduce a parameter-efficient fine-tuning strategy that augments a pre-trained RIFE backbone with semantic priors from a frozen DINOv3 Vision Transformer. We propose a Split-Fidelity Aware Projection Module (Split-FAPM) to compress and refine high-dimensional features, and a Deformable Semantic Fusion (DSF) module to align these semantic priors with pixel-level motion fields. Experiments on SNU-FILM demonstrate that semantic injection provides a decisive boost in perceptual fidelity. SG-RIFE outperforms diffusion-based LDMVFI in FID/LPIPS and achieves quality comparable to Consec. BB on complex benchmarks while running significantly faster, proving that semantic consistency enables flow-based methods to achieve diffusion-competitive perceptual quality in near real-time.

💡 Research Summary

The paper addresses the long‑standing trade‑off in video frame interpolation (VFI) between real‑time speed and high perceptual quality. Flow‑based methods such as RIFE achieve impressive throughput (hundreds of frames per second) but struggle with large motions and occlusions, producing blur or ghosting artifacts. Diffusion‑based approaches (e.g., Consec. BB, TLB‑VFI) generate state‑of‑the‑art visual fidelity and temporal consistency, yet their iterative sampling pipelines incur prohibitive latency (seconds per frame), making them unsuitable for real‑time applications.

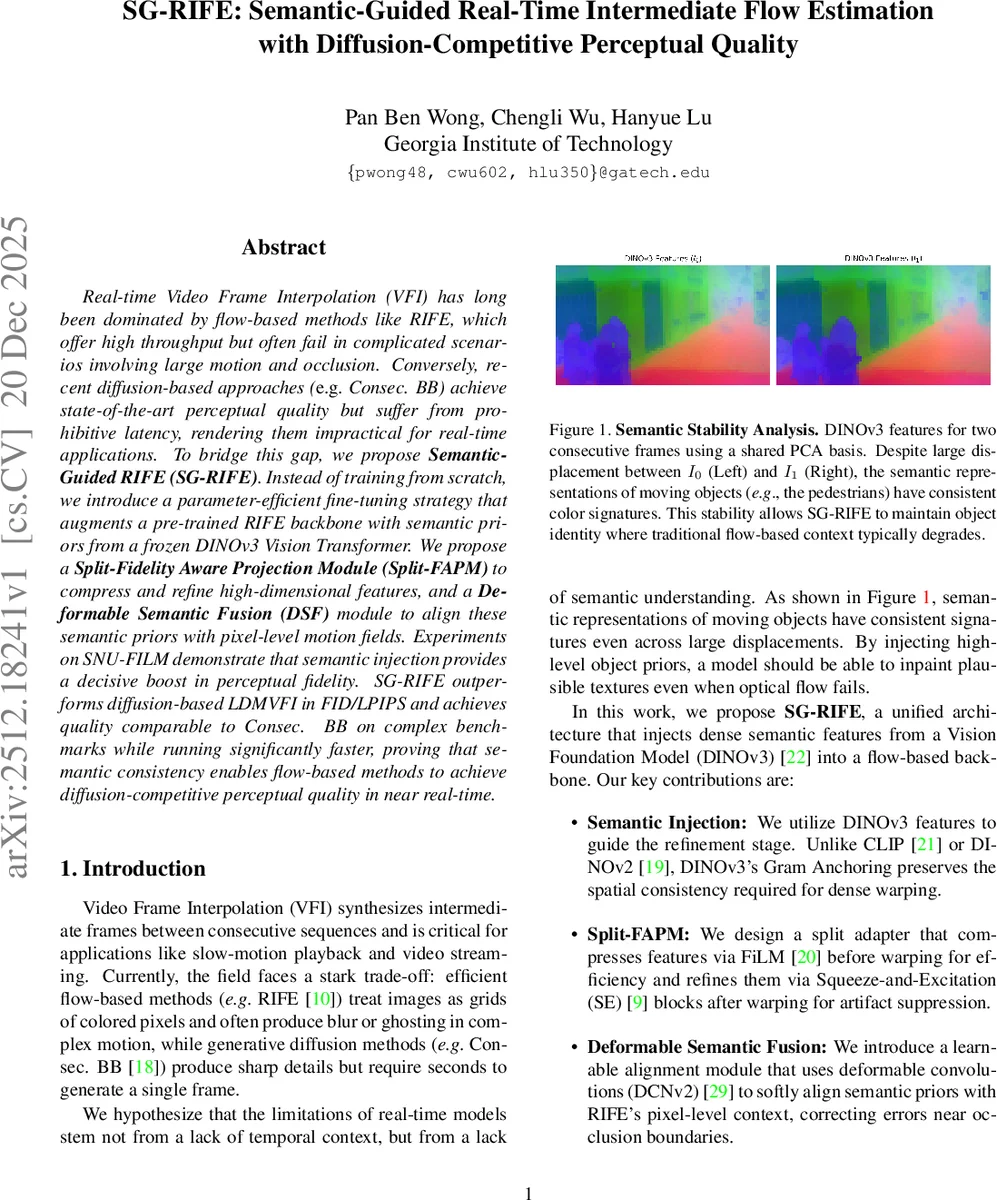

SG‑RIFE proposes to bridge this gap by injecting high‑level semantic priors from a frozen DINOv3 Vision Transformer into a pre‑trained RIFE backbone. The key insight is that semantic representations from foundation models remain stable across large displacements, providing object‑level consistency that can guide texture synthesis where optical flow fails. Rather than retraining the entire network, the authors adopt a parameter‑efficient fine‑tuning strategy: the IFNet (intermediate flow estimator) and ContextNet (feature extractor) are frozen, while only the FusionNet and a set of lightweight adapters are updated.

Two novel modules enable this semantic injection:

-

Split‑Fidelity‑Aware Projection Module (Split‑FAPM).

- Pre‑warp compression: DINOv3 features (C=384) are passed through two parallel 1×1 convolutions – a task‑specific “feature” branch and a shared “modulation” branch that predicts FiLM parameters (γ, β). This reduces dimensionality to 256 and aligns the semantics with the VFI domain.

- Post‑warp refinement: After warping the compressed features with the intermediate flow, a residual block consisting of depthwise separable convolutions and Squeeze‑and‑Excitation (SE) recalibrates channels and repairs spatial discontinuities introduced by warping. GELU replaces ReLU to maintain compatibility with the frozen DINOv3 backbone.

-

Deformable Semantic Fusion (DSF).

- Coarsely warped semantic priors are first refined by Split‑FAPM, then aligned to RIFE’s pixel‑level context using a deformable convolution (DCNv2) that predicts offset maps (Δp) and modulation scalars (Δm) from a shared query‑key‑value space.

- Two DSF blocks are placed in the FusionNet: one at the intermediate encoder level (S2) with 4 groups processing 128‑channel features, and another at the bottleneck (S3) with 8 groups processing 256‑channel features. Grouped deformable convolutions reduce parameters from C²·9 to (C²/G)·9, preserving real‑time feasibility. The scalar γ is initialized to zero, allowing the network to gradually incorporate semantic gradients as alignment stabilizes.

Semantic features from DINOv3 layers 8 (high‑frequency texture) and 11 (global object semantics) are injected at different depths: shallow features guide local texture synthesis, while deeper features provide global object consistency. No upsampling of semantics is performed at the highest resolution; the original ContextNet handles fine edges directly from RGB inputs, avoiding noisy high‑resolution semantic maps.

The training objective combines the original RIFE losses (reconstruction, Laplacian pyramid, and distillation) with two new terms:

- Semantic Consistency Loss (L_sem): L1 distance between DINO features of the predicted frame and ground‑truth frame, encouraging the output to retain the same semantic layout.

- Offset Regularization (L_reg): L1 penalty on the predicted deformable offsets at both DSF insertion points, preventing excessive warping.

Loss weights are set to λ_rec = 0.1, λ_dis = 0.01, λ_tea = 0.1, λ_sem = 0.5, λ_reg = 1e‑4, deliberately emphasizing semantic fidelity over pure pixel‑wise accuracy.

Experiments.

SG‑RIFE is trained on the Video90K dataset (≈51k triplets, 448×256 resolution) with standard augmentations. A two‑stage training schedule is employed: first 5 epochs for the adapters and DSF, then 25 epochs jointly fine‑tuning FusionNet. Optimization uses AdamW (lr = 2e‑4, cosine annealing) with gradient clipping (norm = 1.0) on a single NVIDIA L4 GPU.

Evaluation on the SNU‑FILM benchmark (four difficulty splits based on motion magnitude) shows:

- Perceptual metrics: SG‑RIFE achieves FID = 4.557 (Easy), 8.678 (Medium), 17.896 (Hard), 36.272 (Extreme), outperforming diffusion baselines Consec. BB (4.791, 9.039, 18.589, 36.631) and TLB‑VFI (4.658, 4.658, 17.470, 29.868). LPIPS and FloLPIPS are similarly competitive.

- Pixel‑level metrics: PSNR drops modestly (e.g., –0.339 dB on Hard) compared to vanilla RIFE, reflecting the perception‑distortion trade‑off.

- Runtime: 0.05 s per 480×720 frame on an L4 GPU, roughly 2–3× faster than other real‑time baselines (RIFE 0.01 s, IFRNet 0.10 s) and >50× faster than diffusion models (2.6 s for Consec. BB, 0.69 s for TLB‑VFI).

Qualitative results demonstrate that semantic injection restores object boundaries and fine textures in occluded regions where flow alone fails, while the deformable alignment prevents mis‑registration artifacts.

Contributions and Impact.

- Introduces a practical method to leverage frozen foundation‑model semantics for low‑level video synthesis without heavy computational overhead.

- Proposes Split‑FAPM and DSF as lightweight, differentiable interfaces between high‑dimensional semantic embeddings and flow‑based VFI pipelines.

- Shows that semantic consistency can shift the perception‑distortion frontier, achieving diffusion‑level visual quality at real‑time speeds.

- Provides a training recipe that requires only a single modest GPU, making the approach accessible for broader deployment.

The work opens avenues for extending semantic‑guided fine‑tuning to other low‑level tasks (denoising, super‑resolution) and to multimodal video generation scenarios where object‑level consistency is crucial.

Comments & Academic Discussion

Loading comments...

Leave a Comment