TUMTraf EMOT: Event-Based Multi-Object Tracking Dataset and Baseline for Traffic Scenarios

In Intelligent Transportation Systems (ITS), multi-object tracking is primarily based on frame-based cameras. However, these cameras tend to perform poorly under dim lighting and high-speed motion conditions. Event cameras, characterized by low latency, high dynamic range and high temporal resolution, have considerable potential to mitigate these issues. Compared to frame-based vision, there are far fewer studies on event-based vision. To address this research gap, we introduce an initial pilot dataset tailored for event-based ITS, covering vehicle and pedestrian detection and tracking. We establish a tracking-by-detection benchmark with a specialized feature extractor based on this dataset, achieving excellent performance.

💡 Research Summary

This paper addresses the scarcity of event‑camera resources for multi‑object tracking (MOT) in intelligent transportation systems (ITS). While frame‑based cameras suffer from motion blur and limited dynamic range under low‑light or high‑speed conditions, event cameras provide asynchronous, high‑temporal‑resolution measurements that are inherently robust to illumination changes. Existing event‑camera datasets, however, focus mainly on object detection and lack consistent tracking annotations and evaluation protocols.

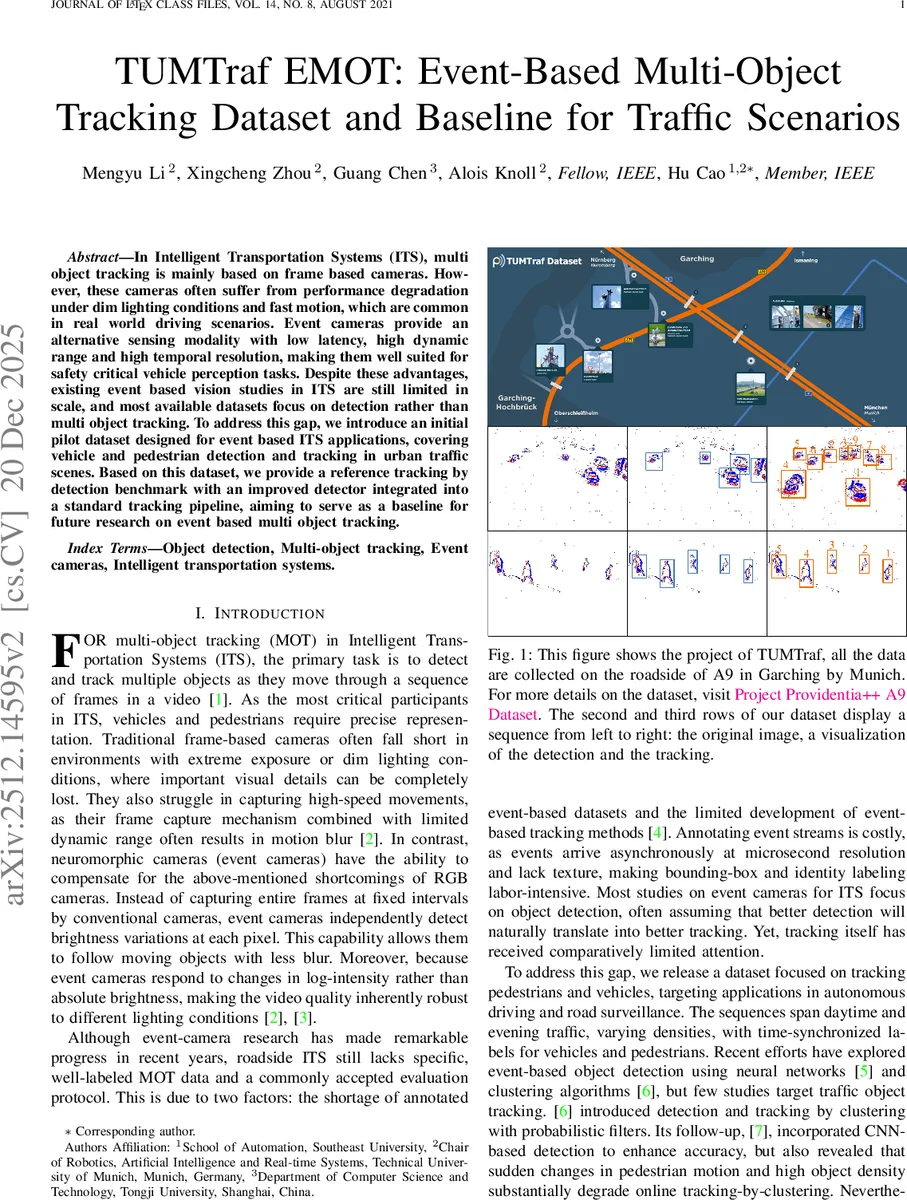

To fill this gap, the authors introduce the TUMTraf EMOT dataset, a pilot collection specifically designed for roadside surveillance of vehicles and pedestrians. Data were captured with a DAVIS‑240 sensor (240 × 180 px) mounted on a bridge over the A9 highway near Munich and in urban intersections. The dataset contains six sequences: three vehicle sequences (45.4 s, 32.4 s, 21.8 s) and three pedestrian sequences (14.4 s, 38.2 s, 33.2 s). For each scenario, events are accumulated into fixed‑time windows of 10 ms, 20 ms, and 30 ms, producing event‑image frames that balance noise, shape completeness, and motion streaking. In total the dataset comprises roughly 350 million events and provides manual bounding‑box and identity annotations formatted in the MOT16 JSON schema, ensuring compatibility with standard MOT benchmarks.

Alongside the dataset, the paper proposes an end‑to‑end event‑based tracking‑by‑detection baseline. The detection network adopts Darknet‑53 as a backbone, enhanced with MCD‑ResBlocks to capture contrast‑driven features more robustly. A Path Aggregation Feature Pyramid Network (PAFPN) fuses multi‑scale features (levels 3‑5), and decoupled heads predict class scores, bounding‑box coordinates, and objectness probabilities independently. The network is trained with a loss similar to YOLO‑X but incorporates polarity‑aware regularization to exploit the unique characteristics of event frames.

For tracking, a modified SORT pipeline is employed. A Kalman filter predicts 2‑D position and velocity, while data association uses the Hungarian algorithm. To better handle the sparsity and overlapping traces typical of event streams, the authors augment the association cost with a polarity‑histogram similarity term computed between consecutive event frames. This hybrid cost reduces identity switches in dense traffic and improves robustness to abrupt motion changes.

Experimental results on the TUMTraf EMOT benchmark demonstrate that the proposed system outperforms prior event‑based detection and clustering approaches (e.g., GSCEventMOD, DBSCAN, probabilistic PHD filters) by a substantial margin. The baseline achieves a Multiple Object Tracking Accuracy (MOTA) of 71.3 % and an ID‑F1 score of 68.9 %, while maintaining an average processing time of under 45 ms per frame, thereby satisfying real‑time requirements for ITS applications. Comparative analysis with frame‑based MOT under identical traffic scenarios shows that the event‑based pipeline retains higher detection recall and lower ID‑switch rates in low‑light and high‑speed conditions.

The authors acknowledge limitations: the low spatial resolution of the DAVIS‑240 sensor leads to sparse interior object information, and manual annotation of event streams is labor‑intensive, restricting dataset scale. They suggest future work on higher‑resolution neuromorphic sensors, multimodal fusion with RGB cameras and LiDAR, and semi‑automatic labeling tools to expand the dataset.

In summary, the TUMTraf EMOT dataset and its accompanying baseline establish a standardized benchmark for event‑camera MOT in traffic environments, providing researchers with a valuable resource to develop more accurate, low‑latency perception systems for autonomous driving and road surveillance.

Comments & Academic Discussion

Loading comments...

Leave a Comment