SurgiPose: Estimating Surgical Tool Kinematics from Monocular Video for Surgical Robot Learning

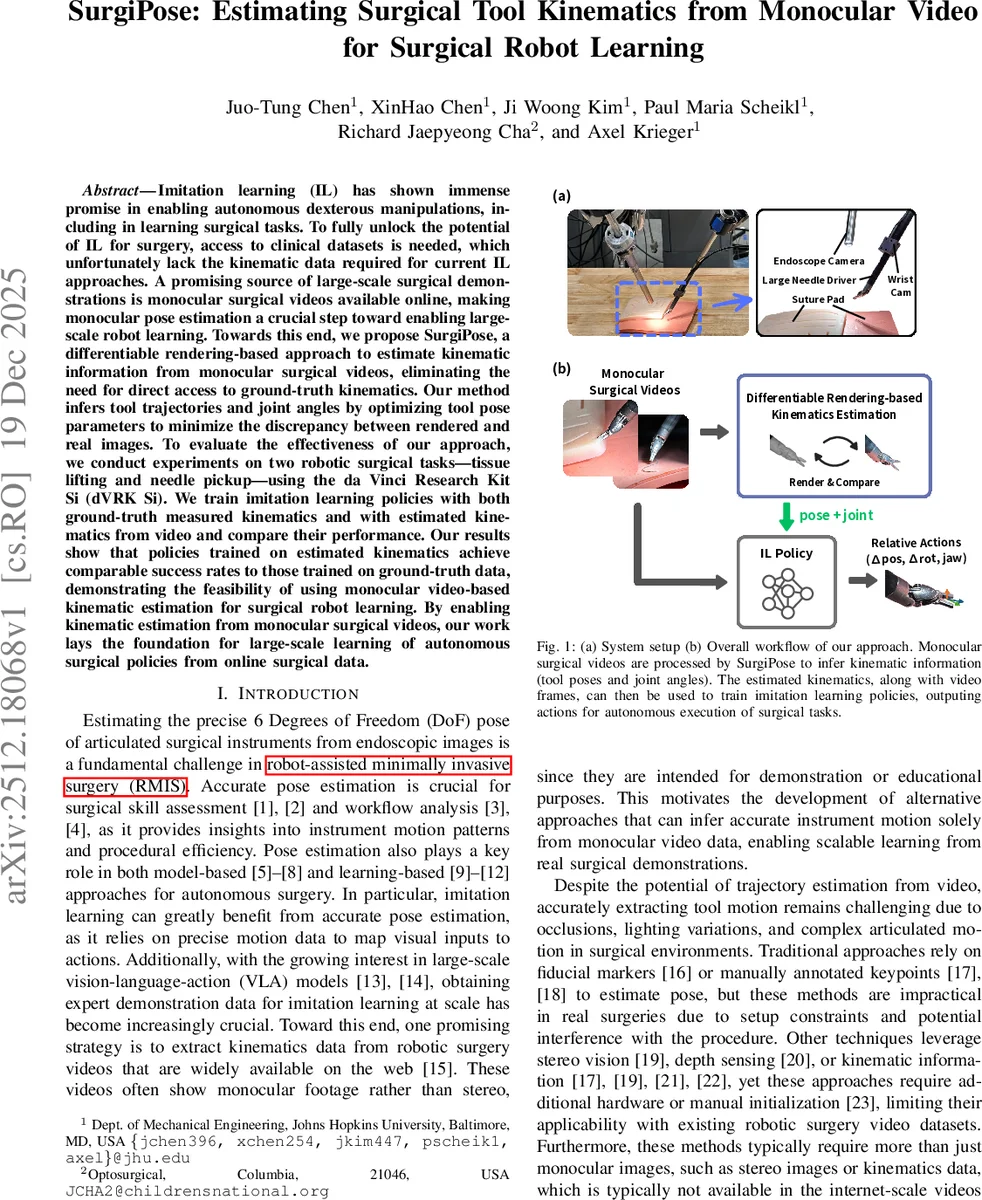

Imitation learning (IL) has shown immense promise in enabling autonomous dexterous manipulation, including learning surgical tasks. To fully unlock the potential of IL for surgery, access to clinical datasets is needed, which unfortunately lack the kinematic data required for current IL approaches. A promising source of large-scale surgical demonstrations is monocular surgical videos available online, making monocular pose estimation a crucial step toward enabling large-scale robot learning. Toward this end, we propose SurgiPose, a differentiable rendering based approach to estimate kinematic information from monocular surgical videos, eliminating the need for direct access to ground truth kinematics. Our method infers tool trajectories and joint angles by optimizing tool pose parameters to minimize the discrepancy between rendered and real images. To evaluate the effectiveness of our approach, we conduct experiments on two robotic surgical tasks: tissue lifting and needle pickup, using the da Vinci Research Kit Si (dVRK Si). We train imitation learning policies with both ground truth measured kinematics and estimated kinematics from video and compare their performance. Our results show that policies trained on estimated kinematics achieve comparable success rates to those trained on ground truth data, demonstrating the feasibility of using monocular video based kinematic estimation for surgical robot learning. By enabling kinematic estimation from monocular surgical videos, our work lays the foundation for large scale learning of autonomous surgical policies from online surgical data.

💡 Research Summary

SurgiPose addresses a critical bottleneck in data‑driven surgical automation: the lack of kinematic recordings in publicly available surgical videos. The authors propose a fully monocular pipeline that extracts 6‑DoF end‑effector poses and three joint angles of a da Vinci Research Kit Si (dVRK Si) needle driver directly from endoscopic video, without any external markers, stereo cameras, or robot telemetry. The system consists of two stages. First, a coarse pose estimator uses SAM2‑based segmentation to locate the tool mask, computes the mask centroid, and generates a 3 × 3 grid around this point. For each grid point, 36 candidate poses are created by rotating around the camera’s z‑axis (0–2π). Each candidate is briefly refined with a differentiable renderer, and the one yielding the lowest pixel‑wise loss becomes the initial pose for the frame. This multi‑hypothesis approach mitigates the risk of the subsequent optimization becoming trapped in poor local minima.

Second, a differentiable rendering refinement module optimizes both the pose and joint angles. A synthetic dataset is generated in MuJoCo: 500 canonical tool poses (neutral joint configuration) and 10 000 poses with random joint angles, each rendered from 12 random camera viewpoints. From these data the authors train a 3‑D Gaussian splatting model that first learns a static canonical representation, then a deformation field to capture shape changes caused by joint articulation, and finally jointly optimizes both to handle varying joint configurations. During inference, the loss function combines structural similarity (SSIM) and mean‑squared error (MSE) with a weighting α = 0.8, encouraging perceptual fidelity while retaining pixel‑level accuracy. Pose updates use a matrix‑exponential‑like rule for rotation and clamped gradient steps for translation; joint angles are updated via gradient descent with physical limits enforced. The optimizer runs up to ten iterations per frame, with early stopping when the loss plateaus.

The pipeline is evaluated on two representative surgical tasks: tissue lifting and needle pickup. A total of 220 tissue‑lifting and 224 needle‑pickup demonstrations were recorded with synchronized video and ground‑truth robot kinematics. SurgiPose processes the video streams to produce estimated trajectories, which are then used in two ways: (1) direct replay on the dVRK Si robot to verify that the extracted motion reproduces the original task, and (2) as training data for imitation‑learning (IL) policies. The IL policies receive the video frames (including a wrist‑mounted camera for richer local view) and the estimated kinematic commands as supervision.

Quantitative results show that the replayed trajectories achieve key‑stage fidelity comparable to the ground‑truth trajectories, with average positional errors below 4 mm and joint‑angle errors under 5°. Policies trained on estimated kinematics attain success rates of over 90 % on both tasks, statistically indistinguishable from policies trained on true robot kinematics. This demonstrates that the monocular pose estimation is sufficiently accurate for downstream control learning.

Key contributions are: (1) a scalable framework that converts any publicly available monocular surgical video into usable robot kinematic data, thus removing the hardware barrier for large‑scale surgical robot learning; (2) a novel combination of a multi‑hypothesis coarse estimator and differentiable rendering that yields robust, high‑precision pose recovery even under occlusions and lighting variations; (3) empirical evidence that imitation‑learning policies trained on video‑derived kinematics perform on par with those trained on ground‑truth data.

Limitations include dependence on accurate segmentation in the first frame—errors here can propagate through the sequence—and the current computational cost of up to ten optimization iterations per frame, which precludes real‑time deployment without further acceleration. Future work should explore lightweight differentiable renderers, end‑to‑end trainable coarse estimators, and extensions to multi‑tool scenes with blood or smoke. Moreover, the extracted kinematics open the door to vision‑language‑action models that can follow natural‑language surgical instructions, paving the way toward truly data‑driven autonomous surgery.

Comments & Academic Discussion

Loading comments...

Leave a Comment