Map2Video: Street View Imagery Driven AI Video Generation

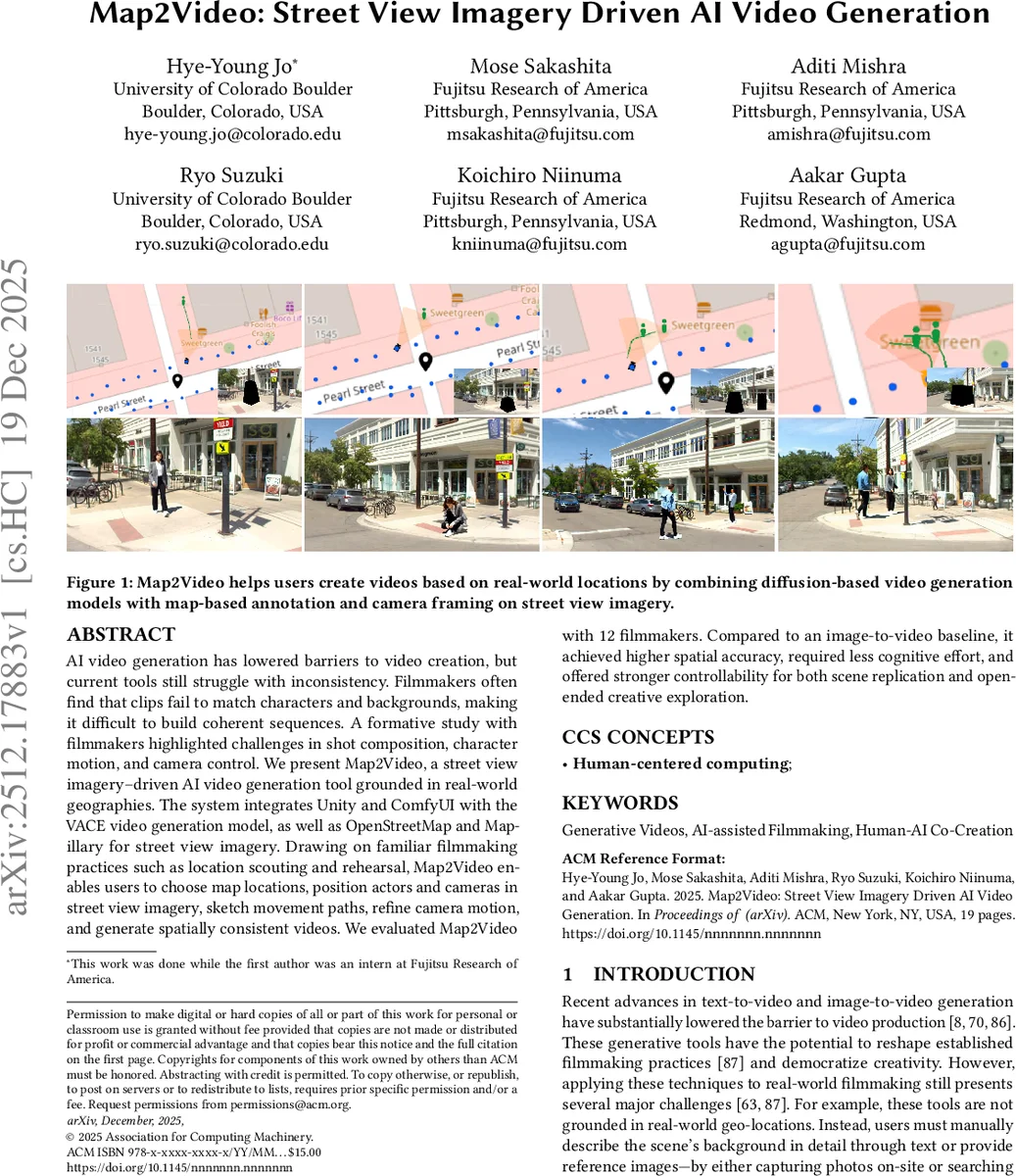

AI video generation has lowered barriers to video creation, but current tools still struggle with inconsistency. Filmmakers often find that clips fail to match characters and backgrounds, making it difficult to build coherent sequences. A formative study with filmmakers highlighted challenges in shot composition, character motion, and camera control. We present Map2Video, a street view imagery-driven AI video generation tool grounded in real-world geographies. The system integrates Unity and ComfyUI with the VACE video generation model, as well as OpenStreetMap and Mapillary for street view imagery. Drawing on familiar filmmaking practices such as location scouting and rehearsal, Map2Video enables users to choose map locations, position actors and cameras in street view imagery, sketch movement paths, refine camera motion, and generate spatially consistent videos. We evaluated Map2Video with 12 filmmakers. Compared to an image-to-video baseline, it achieved higher spatial accuracy, required less cognitive effort, and offered stronger controllability for both scene replication and open-ended creative exploration.

💡 Research Summary

Map2Video introduces a workflow for AI‑driven video generation that grounds the output in real‑world geography, using map data from OpenStreetMap and street view imagery (SVI) from Mapillary. The authors first conducted a formative survey of 34 AI‑assisted filmmakers and a small prototype study with five participants, which surfaced three recurring pain points: maintaining visual and spatial consistency across generated clips, limited control over shot composition, and inadequate camera motion tools. These findings motivated the design of a six‑step, map‑centric authoring interface that mirrors traditional filmmaking practices such as location scouting, rehearsal, and walk‑throughs.

The system integrates Unity for 3D scene management and camera manipulation, ComfyUI for orchestrating the VACE video generation model, and external APIs for map and street‑level imagery. Users begin by selecting a location on a map (Location Scouting), which loads a corresponding street‑view panorama. They then place green‑box masks (Mask Positioning) to indicate where actors or objects will appear. By sketching trajectories directly on the map (Movement Sketching), users define temporal paths for those masks, which are synchronized with a live camera view. The Camera Walkthrough step lets users fine‑tune camera position, pan, zoom, and rotation in a Unity viewport, providing an intuitive “virtual dolly” experience. A natural‑language prompt (Prompting Scene) describes character appearance and actions; this text, together with the mask and camera metadata, is fed to the VACE model via ComfyUI. Finally, the Video In‑painting stage generates each frame, preserving the original street‑view background while in‑painting only the masked regions, thereby ensuring spatial continuity.
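The Movement Sketching and Video In‑painting steps can be illustrated with a minimal Python sketch. It is not the authors' implementation and does not reflect how Unity, ComfyUI, or VACE work internally; the function names `resample_path` and `composite`, the use of 2D pixel coordinates, and the binary mask are all assumptions made for illustration. The first function turns a sketched polyline into one mask position per frame, evenly spaced by arc length. The second shows the idea of keeping the street‑view background wherever the mask is off.

```python
from math import hypot
from typing import List, Tuple

Point = Tuple[float, float]


def resample_path(points: List[Point], num_frames: int) -> List[Point]:
    """Return num_frames positions spaced evenly by arc length along a polyline.

    Hypothetical helper: stands in for converting a sketched movement path
    into per-frame mask positions.
    """
    if num_frames < 1:
        raise ValueError("num_frames must be at least 1")
    if not points:
        raise ValueError("path needs at least one point")
    if len(points) == 1 or num_frames == 1:
        return [points[0]] * num_frames

    # Cumulative distance travelled at each sketched vertex.
    cumulative = [0.0]
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        cumulative.append(cumulative[-1] + hypot(x1 - x0, y1 - y0))
    total = cumulative[-1]
    if total == 0.0:
        return [points[0]] * num_frames

    frames: List[Point] = []
    seg = 0  # index of the segment that contains the current target distance
    for i in range(num_frames):
        target = total * i / (num_frames - 1)
        while seg < len(points) - 2 and cumulative[seg + 1] < target:
            seg += 1
        seg_len = cumulative[seg + 1] - cumulative[seg]
        t = 0.0 if seg_len == 0.0 else (target - cumulative[seg]) / seg_len
        (x0, y0), (x1, y1) = points[seg], points[seg + 1]
        frames.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return frames


def composite(background, generated, mask):
    """Keep background pixels where mask is 0; take generated pixels where it is 1.

    Hypothetical helper: images are nested lists of pixel values with equal shape.
    """
    return [
        [g if m else b for b, g, m in zip(b_row, g_row, m_row)]
        for b_row, g_row, m_row in zip(background, generated, mask)
    ]


if __name__ == "__main__":
    sketch = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)]
    print(resample_path(sketch, 5))
    print(composite([[1, 1], [1, 1]], [[9, 9], [9, 9]], [[0, 1], [0, 0]]))
```

Spacing by arc length rather than by vertex index keeps the actor's apparent speed constant even when the sketch has unevenly placed points; a real tool might instead expose easing or per-segment timing.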

A user study with 12 professional filmmakers compared Map2Video against a baseline image‑to‑video pipeline across two tasks: (1) replication of existing film scenes and (2) open‑ended creative production. In the replication task, participants required 1.2× fewer fine‑tuning iterations and rated spatial accuracy at 4.3/5, significantly higher than the baseline. NASA‑TLX scores indicated a 22% reduction in perceived cognitive load. In the open‑ended task, the User Experience Questionnaire (UEQ) showed strong gains in efficiency, autonomy, and excitement (all >0.8). In qualitative feedback, participants reported that map‑based grounding removed the need for manually curated reference images and that the combined sketch‑and‑prompt input matched filmmakers’ mental models, reducing iteration time.

Key contributions include: (1) the concept of SVI‑driven AI video generation, (2) a human‑centered six‑step interface that maps familiar pre‑visualization practices onto generative AI, (3) an integrated prototype combining Unity, ComfyUI, VACE, OpenStreetMap, and Mapillary, and (4) empirical evidence that grounding generation in real‑world imagery improves spatial consistency and lowers cognitive effort. Two limitations are noted: the system cannot be used where street‑view coverage is unavailable, and the current mask‑based in‑painting struggles with complex lighting changes and large crowds. Future work proposes extending the approach with satellite or 3D map priors, richer illumination modeling, and tighter integration with LLM‑driven script planning to create a full end‑to‑end AI‑assisted filmmaking pipeline.
