Calibratable Disambiguation Loss for Multi-Instance Partial-Label Learning

Multi-instance partial-label learning (MIPL) is a weakly supervised framework that extends the principles of multi-instance learning (MIL) and partial-label learning (PLL) to address the challenges of inexact supervision in both instance and label spaces. However, existing MIPL approaches often suffer from poor calibration, undermining classifier reliability. In this work, we propose a plug-and-play calibratable disambiguation loss (CDL) that simultaneously improves classification accuracy and calibration performance. The loss has two instantiations: the first one calibrates predictions based on probabilities from the candidate label set, while the second one integrates probabilities from both candidate and non-candidate label sets. The proposed CDL can be seamlessly incorporated into existing MIPL and PLL frameworks. We provide a theoretical analysis that establishes the lower bound and regularization properties of CDL, demonstrating its superiority over conventional disambiguation losses. Experimental results on benchmark and real-world datasets confirm that our CDL significantly enhances both classification and calibration performance.

💡 Research Summary

Multi-Instance Partial-Label Learning (MIPL) tackles a dual inexactness problem: it is unknown both which instances inside a bag carry the label and which label in the candidate set is the true one. While recent MIPL methods have focused on label disambiguation, they have largely ignored model calibration, resulting in over-confident or under-confident predictions that are unsuitable for high-stakes applications such as pathology image analysis.

In this paper, the authors introduce a plug-and-play Calibratable Disambiguation Loss (CDL) that simultaneously improves classification accuracy and calibration quality. CDL comes in two instantiations. The first, CDL-C, operates only on the candidate label set: it penalizes the gap between the highest predicted probability and the second-highest probability within the candidate set, encouraging the model to be decisive among candidates while still preserving uncertainty. The second, CDL-CN, also incorporates the non-candidate label set by contrasting the highest candidate probability with the highest probability assigned to any non-candidate label, thereby suppressing spurious confidence on irrelevant classes. Both variants extend focal loss (FL) and inverse focal loss (IFL) to the weakly supervised MIPL setting by weighting all candidate labels (with epoch-dependent weights w^{(t)}_{i,c}) rather than treating a single surrogate label as ground truth.

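Theoretical analysis establishes that CDL provides a tighter lower bound on the expected loss than conventional disambiguation losses. CDL also includes a regularization term, λ·(p_max−p_second)^2 for CDL-C or λ·(p_max−p_nonmax)^2 for CDL-CN, where p_max is the highest candidate probability, p_second is the second-highest candidate probability, and p_nonmax is the highest non-candidate probability. This term constrains the model's parameter space, mitigating over-fitting and excessive confidence.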
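To make the structure described above concrete, the following is a minimal sketch of a loss with the same ingredients: a focal-style term over weighted candidate labels plus a squared probability-gap regularizer. It is an illustration built only from this summary, not the authors' exact formulation. The function name `cdl_sketch`, the arguments `candidate_mask` and `weights`, and the fallback of a zero reference probability when no second candidate or non-candidate label exists are all assumptions.

```python
import numpy as np


def cdl_sketch(probs, candidate_mask, weights, gamma=2.0, lam=0.5, variant="C"):
    """Illustrative gap-regularized disambiguation loss for a single bag.

    probs          : predicted class probabilities, shape (num_classes,)
    candidate_mask : 1 for candidate labels, 0 for non-candidate labels
    weights        : per-label disambiguation weights (hypothetical stand-in
                     for the epoch-dependent weights in the summary)
    variant        : "C" uses the second-highest candidate probability,
                     "CN" uses the highest non-candidate probability
    """
    probs = np.asarray(probs, dtype=float)
    mask = np.asarray(candidate_mask, dtype=bool)
    weights = np.asarray(weights, dtype=float)
    eps = 1e-12  # avoids log(0)

    # Focal-style term summed over candidate labels only.
    p_cand = probs[mask]
    focal = -np.sum(weights[mask] * (1.0 - p_cand) ** gamma * np.log(p_cand + eps))

    # Probability gap used by the regularizer.
    sorted_cand = np.sort(p_cand)[::-1]
    p_max = sorted_cand[0]
    if variant == "C":
        # Assumption: with a single candidate there is no runner-up, so use 0.
        p_ref = sorted_cand[1] if sorted_cand.size > 1 else 0.0
    else:
        non_cand = probs[~mask]
        # Assumption: if every label is a candidate, use 0 as the reference.
        p_ref = non_cand.max() if non_cand.size else 0.0

    return focal + lam * (p_max - p_ref) ** 2


probs = [0.6, 0.3, 0.1]
mask = [1, 1, 0]
weights = [0.5, 0.5, 0.0]
loss_c = cdl_sketch(probs, mask, weights, variant="C")
loss_cn = cdl_sketch(probs, mask, weights, variant="CN")
```

In this toy example the two variants share the same focal-style term and differ only in the regularizer: the gap is 0.6 − 0.3 for the candidate-only variant and 0.6 − 0.1 for the variant that also looks at non-candidate labels.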

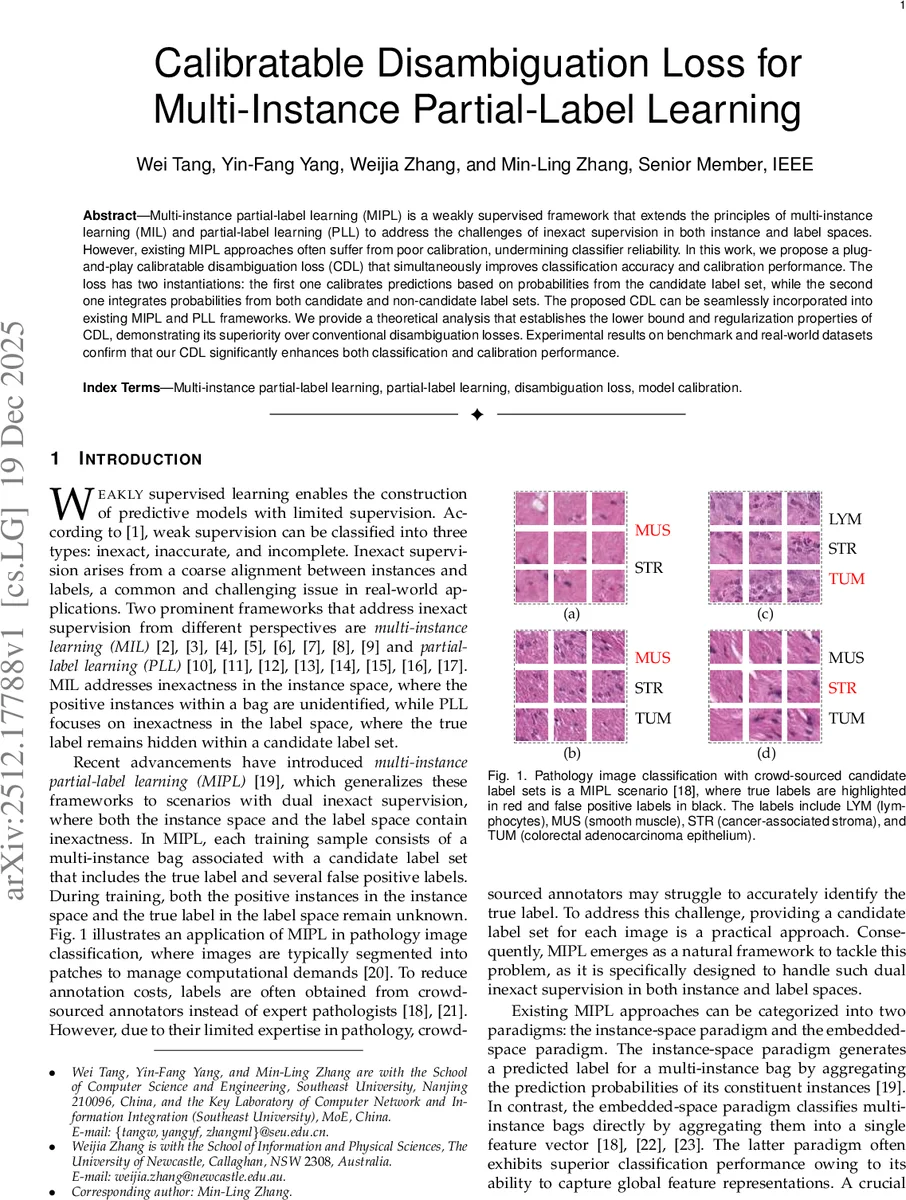

Empirical evaluation is conducted on five benchmark datasets (including C-KMeans, FMNIST-MIPL, and CIFAR-10-MIPL) and a real-world pathology image dataset with crowd-sourced candidate label sets. CDL is integrated into existing MIPL frameworks such as DEMIPL, ELIMIPL, and MIPLMA, as well as into the Scaled Additive Attention Mechanism (SAM) used in recent PLL methods. Results show consistent gains: classification accuracy improves by 2–4 percentage points, while Expected Calibration Error (ECE) drops from above 20% to below 10% (as low as 1.96%). Reliability diagrams show that the confidence scores of CDL-enhanced models align closely with empirical accuracy across all bins. Ablation studies confirm the importance of the regularization weight λ and the focusing parameter γ, with λ≈0.5 and γ≈2 yielding the best trade-off.
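For readers unfamiliar with the calibration metric quoted above, here is a minimal sketch of the standard equal-width-bin ECE computation. The summary does not state the number of bins or the binning scheme used in the paper, so `n_bins=10` and the function name `expected_calibration_error` are assumptions.

```python
import numpy as np


def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width-bin ECE: weighted mean of |accuracy - confidence| per bin.

    confidences : max predicted probability per sample, values in [0, 1]
    correct     : 1 if the prediction was right, 0 otherwise
    """
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)

    # Interior bin edges; each sample gets a bin index in [0, n_bins - 1].
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_ids = np.digitize(confidences, edges[1:-1], right=True)
    bin_ids = np.clip(bin_ids, 0, n_bins - 1)

    ece = 0.0
    for b in range(n_bins):
        in_bin = bin_ids == b
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())
            ece += in_bin.mean() * gap  # weight by the fraction of samples in the bin
    return ece


ece_example = expected_calibration_error([0.9, 0.9, 0.6, 0.6], [1, 1, 1, 0])
```

In the example, the two samples at confidence 0.9 are both correct (gap 0.1) and the two at confidence 0.6 are half correct (gap 0.1), so each bin contributes 0.5 × 0.1 to the total.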

The authors also discuss practical considerations. CDL adds negligible computational overhead when the candidate set is modest, but large candidate sets may increase cost. The CN variant can be slightly less effective when candidate labels are highly imbalanced, suggesting future work on adaptive weighting or sampling strategies.

In summary, this work is the first to address calibration in MIPL explicitly. By leveraging probability gaps within and across candidate/non‑candidate label sets, CDL provides a theoretically sound and empirically validated loss that can be seamlessly plugged into any existing MIPL or PLL pipeline, delivering both higher predictive performance and trustworthy confidence estimates—an essential step toward deploying weakly supervised models in critical domains such as medical diagnosis.
