Generative Human-Object Interaction Detection via Differentiable Cognitive Steering of Multi-modal LLMs

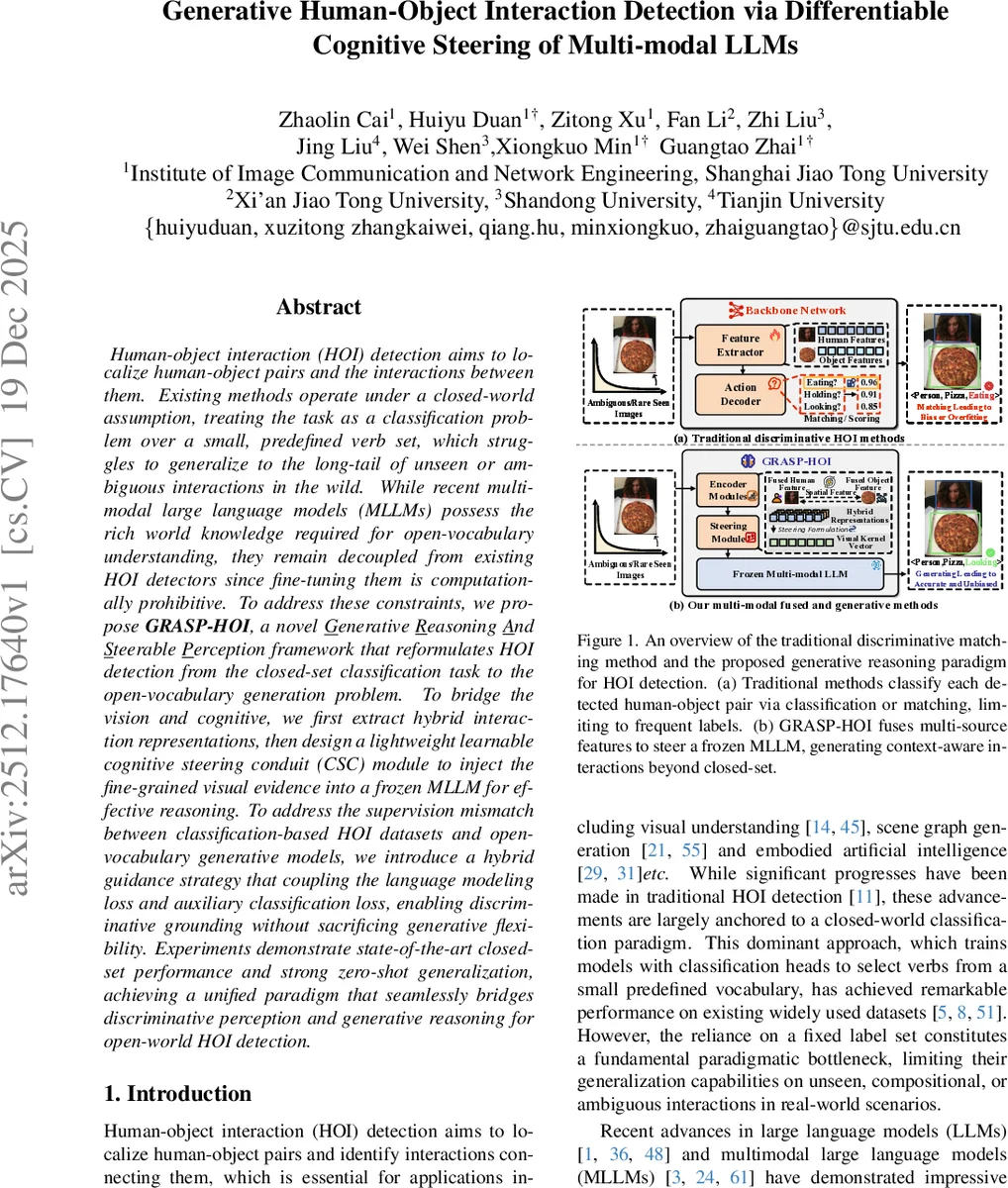

Human-object interaction (HOI) detection aims to localize human-object pairs and the interactions between them. Existing methods operate under a closed-world assumption, treating the task as a classification problem over a small, predefined verb set, which struggles to generalize to the long-tail of unseen or ambiguous interactions in the wild. While recent multi-modal large language models (MLLMs) possess the rich world knowledge required for open-vocabulary understanding, they remain decoupled from existing HOI detectors since fine-tuning them is computationally prohibitive. To address these constraints, we propose \GRASP-HO}, a novel Generative Reasoning And Steerable Perception framework that reformulates HOI detection from the closed-set classification task to the open-vocabulary generation problem. To bridge the vision and cognitive, we first extract hybrid interaction representations, then design a lightweight learnable cognitive steering conduit (CSC) module to inject the fine-grained visual evidence into a frozen MLLM for effective reasoning. To address the supervision mismatch between classification-based HOI datasets and open-vocabulary generative models, we introduce a hybrid guidance strategy that coupling the language modeling loss and auxiliary classification loss, enabling discriminative grounding without sacrificing generative flexibility. Experiments demonstrate state-of-the-art closed-set performance and strong zero-shot generalization, achieving a unified paradigm that seamlessly bridges discriminative perception and generative reasoning for open-world HOI detection.

💡 Research Summary

The paper introduces GRASP‑HOI (Generative Reasoning And Steerable Perception for Human‑Object Interaction detection), a novel framework that reconceptualizes HOI detection from a closed‑set classification problem into an open‑vocabulary generative task. Traditional HOI detectors rely on a predefined verb set and treat interaction recognition as multi‑class classification, which limits their ability to handle long‑tail, unseen, or ambiguous interactions. While multimodal large language models (MLLMs) possess extensive world knowledge and can reason in an open‑world setting, directly fine‑tuning them is computationally prohibitive and risks catastrophic forgetting of their pretrained knowledge.

GRASP‑HOI bridges this gap by (1) constructing a Hybrid Interaction Representation that fuses entity‑level evidence (instance tokens from a detector and appearance features from RoI‑pooling) with pair‑level geometric cues, (2) employing a Salience Adjudication Transformer (SAT) and an Orchestration Gate to contextualize and rank candidate human‑object pairs, and (3) introducing a lightweight, learnable Cognitive Steering Conduit (CSC) that injects the selected visual evidence into a frozen MLLM as a conditioning visual kernel. The CSC converts each adjudicated candidate token into a compact evidence vector, which is transformed into a sequential visual kernel that guides the MLLM’s text decoder. This enables the frozen language model to generate interaction verbs that are grounded in the visual evidence while retaining its broad semantic knowledge.

To reconcile the supervision mismatch between classification‑oriented HOI datasets and generative language models, the authors propose a Hybrid Guidance Objective that combines a language modeling loss (encouraging the MLLM to generate the correct verb sequence) with an auxiliary classification loss (ensuring that the generated verb aligns with the ground‑truth class). The joint loss balances generative flexibility with discriminative grounding.

Experiments on the standard HOI benchmarks HICO‑DET and V‑COCO demonstrate that GRASP‑HOI achieves state‑of‑the‑art performance in the closed‑set setting, surpassing prior methods such as PPDM and InteractNet. More importantly, in zero‑shot and open‑vocabulary evaluations, the model shows strong generalization to unseen verb‑object combinations, confirming that the CSC effectively leverages the MLLM’s world knowledge for novel interaction reasoning. The CSC adds only a few million parameters, and the MLLM remains frozen, resulting in modest training costs compared to full fine‑tuning.

Key contributions include: (i) reframing HOI detection as a generative, open‑vocabulary problem; (ii) designing the CSC as a differentiable interface that steers a frozen MLLM with structured visual evidence; (iii) formulating a hybrid loss that jointly optimizes language generation and classification; and (iv) delivering superior closed‑set accuracy and robust zero‑shot performance. The work opens avenues for integrating large generative models into visual reasoning tasks such as robot manipulation, video understanding, and complex human behavior analysis, where grounded yet flexible language generation is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment