RecipeMasterLLM: Revisiting RoboEarth in the Era of Large Language Models

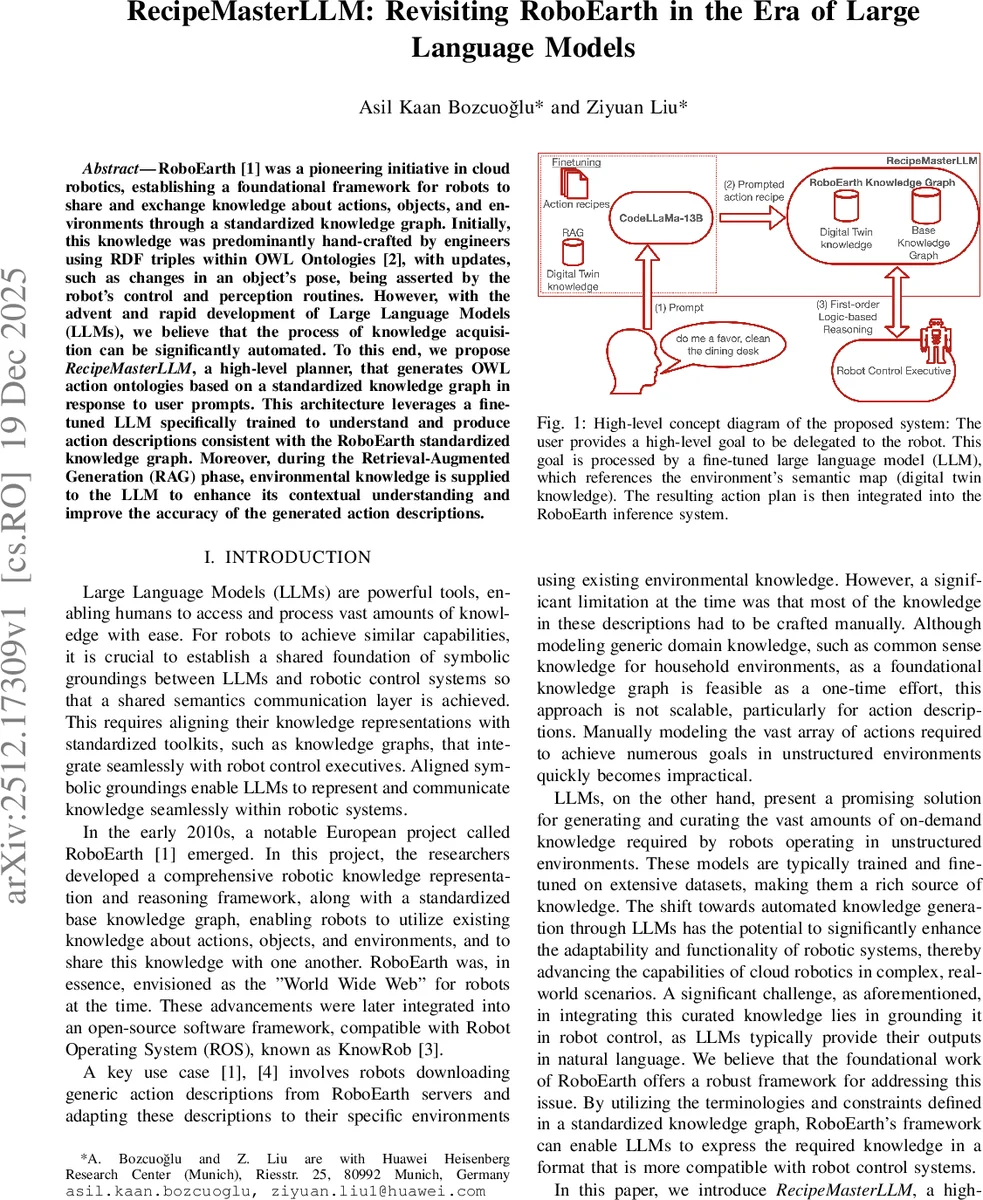

RoboEarth was a pioneering initiative in cloud robotics, establishing a foundational framework for robots to share and exchange knowledge about actions, objects, and environments through a standardized knowledge graph. Initially, this knowledge was predominantly hand-crafted by engineers as RDF triples within OWL ontologies, while updates, such as changes in an object's pose, were asserted by the robot's control and perception routines. However, with the advent and rapid development of Large Language Models (LLMs), we believe that the process of knowledge acquisition can be significantly automated. To this end, we propose RecipeMasterLLM, a high-level planner that generates OWL action ontologies based on a standardized knowledge graph in response to user prompts. This architecture leverages a fine-tuned LLM specifically trained to understand and produce action descriptions consistent with the RoboEarth standardized knowledge graph. Moreover, during the Retrieval-Augmented Generation (RAG) phase, environmental knowledge is supplied to the LLM to enhance its contextual understanding and improve the accuracy of the generated action descriptions.

💡 Research Summary


The paper revisits the RoboEarth cloud‑robotics platform in the context of today’s large language models (LLMs) and proposes a high‑level planner called RecipeMasterLLM. RoboEarth originally provided a standardized knowledge graph (RKG) based on OWL/RDF that allowed robots to share information about actions, objects, and environments. However, the original system relied on manually crafted RDF triples and hand‑written “action recipes,” which limited scalability.

RecipeMasterLLM aims to automate the acquisition of these action recipes by leveraging an open‑source LLM, CodeLlama‑13B, fine‑tuned on a small but carefully curated dataset of 350 action recipes (drawn both from RoboEarth and from GPT‑4‑generated examples). The fine‑tuning uses QLoRA, which quantizes the frozen base model to 4‑bit precision and trains only small low‑rank adapters on top of it, dramatically reducing memory requirements and enabling training on modest hardware.
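The memory savings from 4‑bit quantization can be made concrete with a back‑of‑the‑envelope calculation. The sketch below is not from the paper: it assumes 13 billion parameters, 16 bits per parameter in fp16 and 4 bits in quantized form, and it ignores quantization constants, activations, optimizer state and the KV cache. The LoRA part assumes a hypothetical 5120 × 5120 projection (the hidden size of 13B Llama‑family models) adapted at rank 16; the adapter settings the authors actually used are not stated here.

```python
# Back-of-the-envelope numbers for QLoRA on a 13B model (not from the paper).
# Assumptions: 13e9 parameters; fp16 = 16 bits/param; 4-bit = 4 bits/param.
# Quantization constants, activations, optimizer state and KV cache are ignored.


def weight_memory_gb(n_params: float, bits_per_param: float) -> float:
    """Storage for the weights alone, in decimal gigabytes."""
    return n_params * bits_per_param / 8 / 1e9


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters of a rank-r LoRA update B @ A added to a frozen
    d_out x d_in weight, with A of shape (rank, d_in) and B of shape (d_out, rank)."""
    return rank * (d_in + d_out)


N_PARAMS = 13e9
fp16_gb = weight_memory_gb(N_PARAMS, 16)
four_bit_gb = weight_memory_gb(N_PARAMS, 4)

# Hypothetical layer: a 5120 x 5120 projection (hidden size of 13B
# Llama-family models) adapted at rank 16.
full_matrix = 5120 * 5120
adapter = lora_params(5120, 5120, rank=16)

print(f"fp16 weights:  {fp16_gb:.1f} GB")
print(f"4-bit weights: {four_bit_gb:.1f} GB")
print(f"LoRA adapter:  {adapter} trainable params "
      f"({adapter / full_matrix:.2%} of the frozen matrix)")
```

Under these assumptions the stored weights shrink from about 26 GB to about 6.5 GB, and each adapted matrix trains well under 1% of its original parameter count; together these effects are what make fine‑tuning a 13B model practical on modest hardware.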

The architecture consists of two main stages. In the first stage, the fine‑tuned model learns the syntax and semantics of RoboEarth’s OWL action ontology, allowing it to output structured TBox and ABox assertions. In the second stage, a Retrieval‑Augmented Generation (RAG) pipeline supplies up‑to‑date environmental context (the “digital twin”) to the model. LlamaIndex together with the bge‑small‑en‑v1.5 embedding model indexes the semantic map of the robot’s surroundings; at inference time, the most relevant triples are retrieved and injected into the prompt, ensuring that generated recipes are grounded in the current state of the world.
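The retrieve‑then‑inject pattern can be illustrated with a deliberately simple stand‑in. In this sketch the semantic map is a hypothetical list of (subject, predicate, object) strings, a bag‑of‑words cosine similarity replaces the bge‑small‑en‑v1.5 dense embeddings, and the prompt template is invented for illustration; none of it reflects the LlamaIndex API or the authors' actual prompt.

```python
# Sketch of retrieval-augmented prompting over a semantic map.
# Assumptions (not from the paper): triples are plain strings, bag-of-words
# cosine similarity stands in for bge-small-en-v1.5 embeddings, and the
# prompt template is hypothetical. This does not use the LlamaIndex API.
import math
import re
from collections import Counter

Triple = tuple[str, str, str]

# Hypothetical excerpt of the robot's semantic map (the "digital twin").
SEMANTIC_MAP: list[Triple] = [
    ("Cup1", "rdf:type", "Cup"),
    ("Cup1", "isOnTopOf", "Table1"),
    ("Sink1", "rdf:type", "Sink"),
    ("Fridge1", "rdf:type", "Refrigerator"),
    ("Chair1", "isNextTo", "Table1"),
]


def tokens(text: str) -> Counter:
    """Lower-cased word counts; camelCase is split and digits dropped,
    so 'isOnTopOf Table1' yields is, on, top, of, table."""
    text = re.sub(r"([a-z])([A-Z])", r"\1 \2", text)
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, triples: list[Triple], k: int = 3) -> list[Triple]:
    """Return the k triples most similar to the query."""
    q = tokens(query)
    ranked = sorted(triples, key=lambda t: cosine(q, tokens(" ".join(t))),
                    reverse=True)
    return ranked[:k]


def build_prompt(goal: str, context: list[Triple]) -> str:
    """Inject the retrieved facts ahead of the user's goal."""
    facts = "\n".join(f"{s} {p} {o} ." for s, p, o in context)
    return f"Environment facts:\n{facts}\n\nTask: {goal}\nAction recipe (OWL):"


goal = "pick up the cup from the table and place it in the sink"
context = retrieve(goal, SEMANTIC_MAP)
print(build_prompt(goal, context))
```

A dense embedding model would also match paraphrases (for example "mug" against Cup1), which this lexical version cannot; what carries over is the structure of ranking map facts against the goal and prepending only the top few to the prompt, so the model is grounded without being retrained.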

When a user issues a high‑level natural‑language goal (e.g., “pick up the cup from the table and place it in the sink”), the model produces an ordered list of OWL actions such as approachTable1, graspCup1, moveTowardsSink1, placeCupOnSink1. These actions are asserted into the RoboEarth Knowledge Graph. The graph is queried by the Robot Control Executive (RCE) using SWI‑Prolog; the RCE extracts required parameters, checks capability compatibility, and sequentially invokes the low‑level robot controllers. Algorithm 1 in the paper outlines this loop, including safety aborts on capability mismatches.
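The assert, query, check and execute loop can be sketched in plain Python. Everything here is an assumption for illustration: the predicate names (subAction and objectActedOn loosely follow KnowRob‑style naming, while the integer stepIndex is a hypothetical simplification of how an OWL recipe would encode ordering), the action‑to‑capability table, and the stub controllers. A list comprehension stands in for the SWI‑Prolog query, and the capability check runs over the whole recipe before any motion, which is one possible reading of the safety abort in Algorithm 1 rather than a transcription of it.

```python
# Hypothetical sketch of the assert -> query -> check -> execute loop.
# Assumptions (not from the paper): predicate names, the integer stepIndex
# used for ordering, the capability table and the stub controllers.
# A list comprehension stands in for the SWI-Prolog query.
from dataclasses import dataclass
from typing import Callable

Triple = tuple[str, str, str]


@dataclass
class Step:
    name: str         # e.g. "graspCup1"
    action_type: str  # e.g. "Grasp"
    target: str       # e.g. "Cup1"


# Illustrative mapping from action class to the capability it requires.
REQUIRED_CAPABILITY = {
    "Approach": "navigation",
    "MoveTowards": "navigation",
    "Grasp": "manipulation",
    "Place": "manipulation",
}


def assert_recipe(recipe_id: str, steps: list[Step]) -> list[Triple]:
    """Flatten an ordered, LLM-generated recipe into knowledge-graph triples."""
    kb: list[Triple] = []
    for i, s in enumerate(steps):
        kb += [(recipe_id, "subAction", s.name),
               (s.name, "type", s.action_type),
               (s.name, "objectActedOn", s.target),
               (s.name, "stepIndex", str(i))]
    return kb


def query_recipe(kb: list[Triple], recipe_id: str) -> list[Step]:
    """Recover the ordered steps of a recipe by pattern matching on triples."""
    def value(subj: str, pred: str) -> str:
        return next(o for s, p, o in kb if s == subj and p == pred)

    names = [o for s, p, o in kb if s == recipe_id and p == "subAction"]
    names.sort(key=lambda n: int(value(n, "stepIndex")))
    return [Step(n, value(n, "type"), value(n, "objectActedOn")) for n in names]


def execute(steps: list[Step], capabilities: set[str],
            controllers: dict[str, Callable[[str], bool]]) -> tuple[bool, list[str]]:
    """Abort before any motion if a step needs a missing capability or
    controller; otherwise run the steps in order, stopping on failure."""
    log: list[str] = []
    for step in steps:
        needed = REQUIRED_CAPABILITY.get(step.action_type)
        if needed not in capabilities or step.action_type not in controllers:
            log.append(f"abort: {step.name} requires {needed or 'an unknown capability'}")
            return False, log
    for step in steps:
        ok = controllers[step.action_type](step.target)
        log.append(f"{step.name}: {'ok' if ok else 'failed'}")
        if not ok:
            return False, log
    return True, log


def stub_controller(target: str) -> bool:
    """Placeholder for a low-level controller; always reports success."""
    return True


generated = [
    Step("approachTable1", "Approach", "Table1"),
    Step("graspCup1", "Grasp", "Cup1"),
    Step("moveTowardsSink1", "MoveTowards", "Sink1"),
    Step("placeCupOnSink1", "Place", "Sink1"),
]
kb = assert_recipe("PutCupInSink", generated)
steps = query_recipe(kb, "PutCupInSink")
controllers = {action: stub_controller for action in REQUIRED_CAPABILITY}

print(execute(steps, {"navigation", "manipulation"}, controllers))
print(execute(steps, {"navigation"}, controllers))  # base without an arm
```

With both capabilities the four steps run in order; with navigation only, the loop aborts at graspCup1 before the base has moved. Checking up front trades a little latency for never leaving a recipe half‑executed because of a predictable capability gap.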

Key contributions highlighted by the authors are: (1) a pipeline that bridges user prompts, LLM‑generated structured knowledge, and a mature robotic knowledge graph; (2) the use of a small, open‑source LLM fine‑tuned with QLoRA, demonstrating that large proprietary models are not strictly necessary for this task; (3) integration of RAG to make the planner environment‑aware without re‑training the LLM for every new scene.

The related‑work section positions RecipeMasterLLM among recent efforts such as InterPreT, LASP, D‑AG‑Plan, and SMART‑LLM, noting that most prior work focuses either on natural‑language‑to‑code translation or on error diagnosis, whereas this work directly generates OWL‑compatible action recipes that can be consumed by an existing inference engine.

However, the paper lacks an empirical evaluation. No quantitative metrics (e.g., recipe correctness, execution success rate, latency) are reported, making it difficult to assess real‑world performance. The authors acknowledge that building and maintaining the digital twin knowledge base may be costly in practice, and that verification of LLM‑generated recipes (logical consistency, safety) is not fully addressed. Additionally, the current implementation is limited to a single 13B‑parameter model, raising questions about scalability to more complex multi‑robot or multi‑modal scenarios.

Future work suggested includes (a) adding automated formal verification (type checking, simulation‑based validation) of generated recipes; (b) extending RAG to incorporate multimodal sensory data (vision, force); (c) benchmarking on multi‑robot collaborative tasks; and (d) exploring larger or more specialized LLMs for richer reasoning.

In summary, RecipeMasterLLM demonstrates a promising integration of LLM‑driven knowledge generation with the mature, ontology‑based RoboEarth framework. By automating the creation of action recipes and grounding them in up‑to‑date environmental context, the approach could significantly improve the adaptability and scalability of cloud‑robotics systems, provided that rigorous validation and performance studies are conducted in future iterations.
