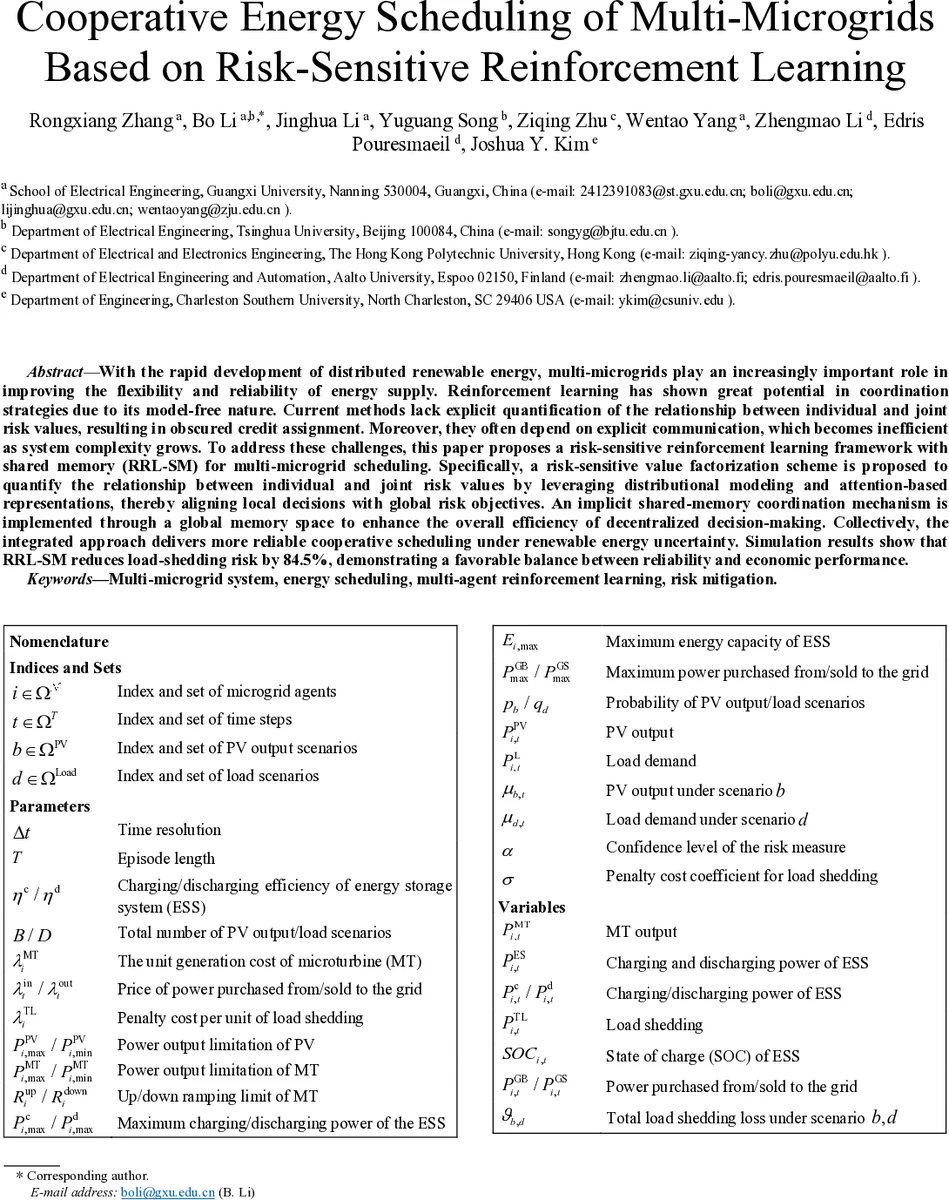

Cooperative Energy Scheduling of Multi-Microgrids Based on Risk-Sensitive Reinforcement Learning

With the rapid development of distributed renewable energy, multi-microgrids play an increasingly important role in improving the flexibility and reliability of energy supply. Reinforcement learning has shown great potential in coordination strategies due to its model-free nature. Current methods lack explicit quantification of the relationship between individual and joint risk values, resulting in obscured credit assignment. Moreover, they often depend on explicit communication, which becomes inefficient as system complexity grows. To address these challenges, this paper proposes a risk-sensitive reinforcement learning framework with shared memory (RRL-SM) for multi-microgrid scheduling. Specifically, a risk-sensitive value factorization scheme is proposed to quantify the relationship between individual and joint risk values by leveraging distributional modeling and attention-based representations, thereby aligning local decisions with global risk objectives. An implicit shared-memory coordination mechanism is implemented through a global memory space to enhance the overall efficiency of decentralized decision-making. Collectively, the integrated approach delivers more reliable cooperative scheduling under renewable energy uncertainty. Simulation results show that RRL-SM reduces load-shedding risk by 84.5%, demonstrating a favorable balance between reliability and economic performance.

💡 Research Summary

The paper addresses the growing need for coordinated energy scheduling among multiple microgrids (MGs) in a renewable‑rich power system. While model‑free reinforcement learning (RL) has shown promise for decentralized control, existing multi‑agent RL (MARL) approaches suffer from two critical shortcomings: (1) they lack an explicit, quantitative link between the risk incurred by individual MGs and the overall system risk, making credit assignment ambiguous; and (2) they rely on explicit inter‑agent communication, which becomes a scalability bottleneck as the number of MGs and the dimensionality of the problem increase.

To overcome these limitations, the authors propose a novel framework called Risk‑Sensitive Reinforcement Learning with Shared Memory (RRL‑SM). The framework integrates two core mechanisms. First, a risk‑sensitive value factorization scheme is introduced. Each MG learns a distributional value function that captures the full return distribution rather than just its expectation. From this distribution, Conditional Value at Risk (CVaR) is extracted to quantify the tail risk associated with load shedding. A multi‑head attention‑based mixer network then receives the risk‑sensitive returns of all agents and learns a dynamic mapping that aligns individual risk contributions with a global risk objective. The attention weights adapt to the current system state (e.g., sudden PV drop or load surge), automatically emphasizing agents that are currently more critical to risk mitigation.
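The two steps in this paragraph, extracting CVaR from a return distribution and mixing per-agent risk values with attention weights, can be illustrated with a minimal sketch. It assumes the distribution is represented by equally weighted quantile samples and that attention scores are already given; the function names `cvar_lower_tail` and `attention_mix`, the toy numbers, and the single-head, parameter-free mixing are illustrative assumptions, not the authors' network.

```python
import math

def cvar_lower_tail(quantiles, alpha):
    """Mean of the worst alpha-fraction of equally weighted return quantiles."""
    ordered = sorted(quantiles)
    k = max(1, math.ceil(alpha * len(ordered)))  # number of tail samples kept
    return sum(ordered[:k]) / k

def attention_mix(agent_risks, scores):
    """Combine per-agent risk values using softmax weights over the scores.

    Hypothetical single-head mixer with no learned parameters; the scores
    stand in for state-dependent attention logits.
    """
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    joint = sum(w * r for w, r in zip(weights, agent_risks))
    return joint, weights

# Toy return quantiles for three microgrids (assumed values).
quantiles = {
    "mg1": [-8.0, -4.0, 0.0, 1.0, 2.0, 3.0, 4.0, 5.0],
    "mg2": [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0, 4.0, 5.0],
    "mg3": [-1.0, 0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
}
risks = [cvar_lower_tail(q, alpha=0.25) for q in quantiles.values()]

# A higher score for mg1 mimics the mixer emphasizing a stressed agent.
joint_risk, weights = attention_mix(risks, scores=[2.0, 0.0, 0.0])
print(risks, joint_risk, weights)
```

Because softmax weights are non-negative, the mixed value never decreases when any individual risk value increases, which is the kind of monotonic link between local and joint values that value-factorization methods typically rely on.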

Second, the framework replaces point‑to‑point messaging with an implicit shared‑memory coordination mechanism. All agents write their local observations (ESS state of charge, turbine output, local load, etc.) and chosen actions into a global memory space. Other agents read from this memory asynchronously, thereby obtaining a holistic view of the system without explicit message exchanges. This design dramatically reduces communication overhead, latency, and network congestion, enabling the approach to scale to large MG networks.
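As a rough illustration of the write/read pattern described above, the sketch below implements a blackboard-style memory in which each agent overwrites its own slot and reads everyone else's. The class name `SharedMemory`, its methods, and the observation fields are hypothetical; the summary does not specify the memory layout or how reads are encoded for the policy.

```python
import copy
import threading

class SharedMemory:
    """Blackboard-style store: one slot per agent, guarded by a lock."""

    def __init__(self):
        self._slots = {}
        self._lock = threading.Lock()

    def write(self, agent_id, observation, action):
        """Overwrite this agent's slot with its latest observation and action."""
        entry = {"obs": copy.deepcopy(observation), "action": action}
        with self._lock:
            self._slots[agent_id] = entry

    def read_others(self, agent_id):
        """Return a snapshot of every slot except the caller's own."""
        with self._lock:
            return {
                other: copy.deepcopy(entry)
                for other, entry in self._slots.items()
                if other != agent_id
            }

memory = SharedMemory()
# Assumed observation fields: ESS state of charge and local load in kW.
memory.write("mg1", {"soc": 0.55, "load_kw": 120.0}, action=1)
memory.write("mg2", {"soc": 0.80, "load_kw": 95.0}, action=0)

view = memory.read_others("mg1")
print(sorted(view))  # only mg2's slot is visible to mg1
```

Readers receive copies rather than references, so an agent that reads asynchronously cannot corrupt another agent's slot; no agent addresses a message to any other agent.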

Algorithmically, RRL‑SM follows a centralized training with decentralized execution (CTDE) paradigm. A central trainer aggregates experiences from all MGs, updates a joint policy and the shared value network, and distributes the updated parameters back to the agents. The policy gradient is corrected by the risk‑sensitive factorization term, ensuring that local policies are optimized not only for economic cost but also for risk reduction. To guarantee fairness and incentivize cooperation, the authors compute a Shapley‑value based cost allocation after each episode, attributing marginal contributions of each MG to the coalition’s total utility (operational cost plus CVaR‑weighted load‑shedding penalty). This post‑processing step discourages free‑riding and aligns individual incentives with the collective goal.
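The Shapley-value allocation mentioned above can be sketched with an exact computation that averages each microgrid's marginal cost over all join orders. The `shapley_values` helper and the toy coalition cost table are assumptions for illustration; in the paper the coalition value would combine operational cost with the CVaR-weighted load-shedding penalty, which is not reproduced here.

```python
from itertools import permutations
from math import factorial

def shapley_values(players, coalition_cost):
    """Exact Shapley allocation: average marginal cost over all join orders.

    coalition_cost maps a frozenset of players to that coalition's total cost.
    The enumeration is factorial in the number of players, so it is only
    practical for small coalitions.
    """
    players = list(players)
    totals = {p: 0.0 for p in players}
    for order in permutations(players):
        members = frozenset()
        for p in order:
            joined = members | {p}
            totals[p] += coalition_cost(joined) - coalition_cost(members)
            members = joined
    n_orders = factorial(len(players))
    return {p: t / n_orders for p, t in totals.items()}

# Toy cost table (assumed numbers): cooperating costs 24, less than 10 + 20.
costs = {
    frozenset(): 0.0,
    frozenset({"mg1"}): 10.0,
    frozenset({"mg2"}): 20.0,
    frozenset({"mg1", "mg2"}): 24.0,
}
allocation = shapley_values(["mg1", "mg2"], lambda s: costs[s])
print(allocation)
```

In this toy case the cooperation saving of 6 is split equally, so each microgrid pays 3 less than its stand-alone cost, and the shares sum to the grand-coalition cost of 24.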

The authors evaluate RRL‑SM on a realistic multi‑MG test system comprising several geographically proximate MGs, each equipped with photovoltaic (PV) generation, a micro‑turbine (MT), an energy storage system (ESS), and residential loads. Uncertainty is modeled through multiple PV output and load scenarios. Baselines include standard value‑factorization MARL methods (QMIX, VDN) and a risk‑aware Trust‑Region Policy Optimization (TRPO) approach. The results show that RRL‑SM reduces load‑shedding risk by 84.5% relative to the best baseline and lowers total operational cost by 7–12%. The shared‑memory mechanism adds near‑zero communication latency, and the attention‑based mixer captures dynamic inter‑agent dependencies, as evidenced by closer alignment between individual CVaR contributions and the global risk metric.

In summary, the paper makes three principal contributions: (1) a risk‑sensitive value factorization that quantitatively links individual and joint risk profiles via distributional RL and attention; (2) an implicit shared‑memory coordination architecture that eliminates explicit communication bottlenecks; and (3) a Shapley‑value based fair cost allocation that sustains cooperative behavior. By jointly addressing risk quantification, coordination efficiency, and incentive compatibility, RRL‑SM offers a practical and scalable solution for reliable, cost‑effective energy scheduling in future high‑renewable, multi‑microgrid power systems.
