Anatomical Region-Guided Contrastive Decoding: A Plug-and-Play Strategy for Mitigating Hallucinations in Medical VLMs

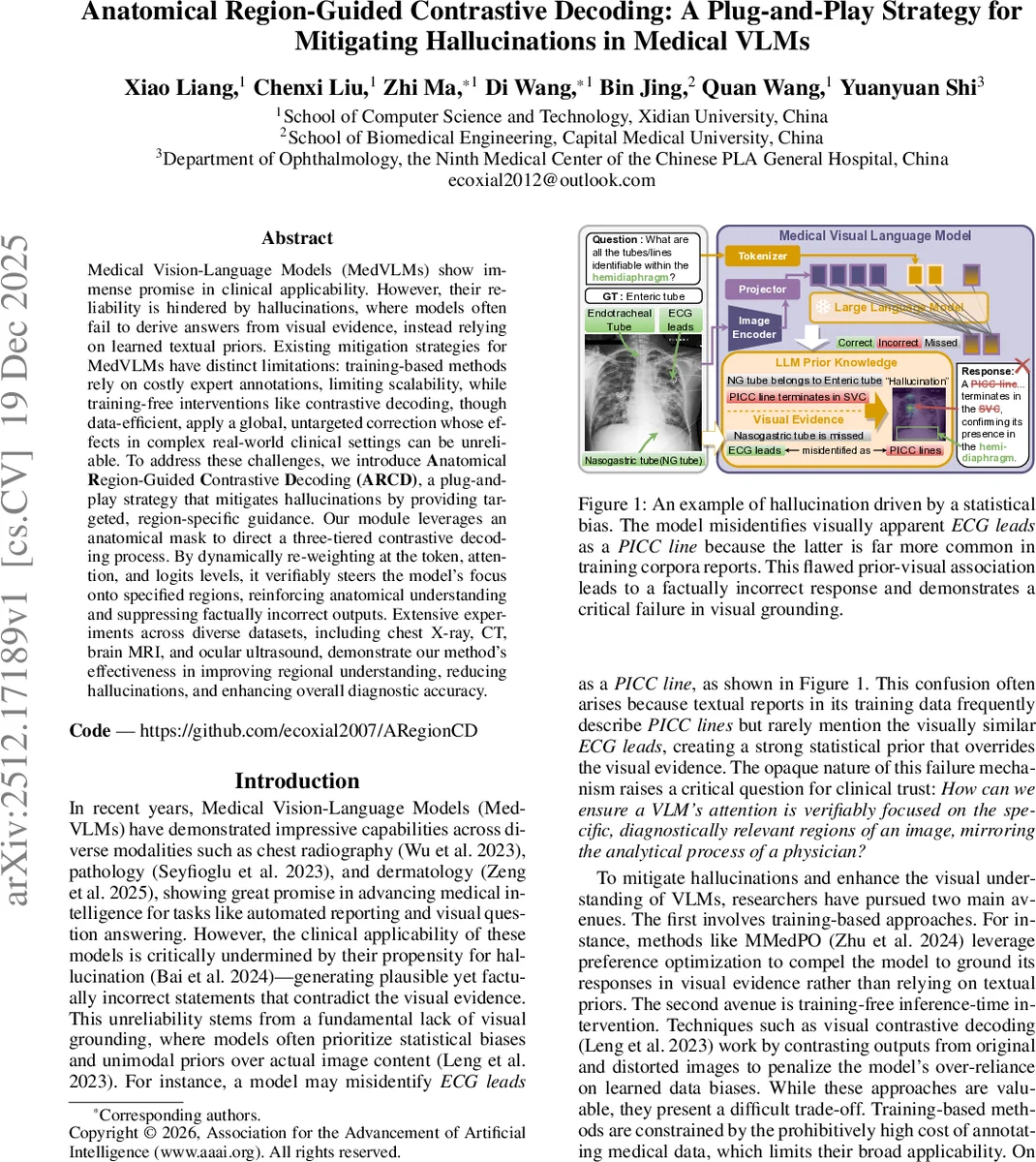

Medical Vision-Language Models (MedVLMs) show immense promise in clinical applicability. However, their reliability is hindered by hallucinations, where models often fail to derive answers from visual evidence, instead relying on learned textual priors. Existing mitigation strategies for MedVLMs have distinct limitations: training-based methods rely on costly expert annotations, limiting scalability, while training-free interventions like contrastive decoding, though data-efficient, apply a global, untargeted correction whose effects in complex real-world clinical settings can be unreliable. To address these challenges, we introduce Anatomical Region-Guided Contrastive Decoding (ARCD), a plug-and-play strategy that mitigates hallucinations by providing targeted, region-specific guidance. Our module leverages an anatomical mask to direct a three-tiered contrastive decoding process. By dynamically re-weighting at the token, attention, and logits levels, it verifiably steers the model’s focus onto specified regions, reinforcing anatomical understanding and suppressing factually incorrect outputs. Extensive experiments across diverse datasets, including chest X-ray, CT, brain MRI, and ocular ultrasound, demonstrate our method’s effectiveness in improving regional understanding, reducing hallucinations, and enhancing overall diagnostic accuracy.

💡 Research Summary

The paper tackles the pervasive problem of hallucinations in medical vision‑language models (MedVLMs), where generated answers rely on learned textual priors rather than visual evidence. Existing solutions fall into two categories: (1) training‑based methods that require costly expert annotations and (2) training‑free contrastive decoding (e.g., VCD) that applies a global, untargeted correction. Both have drawbacks—high annotation cost or insufficient regional specificity.

To overcome these limitations, the authors propose Anatomical Region‑Guided Contrastive Decoding (ARCD), a plug‑and‑play, inference‑time strategy that leverages an anatomical segmentation mask to guide the decoding process at three hierarchical levels: token, attention, and logits.

Method Overview

- Dynamic Attention Mask Generation – A binary segmentation mask S (produced by an expert or a pre‑trained segmenter such as MedSAM or PSPNet) is down‑sampled to match the token grid of the Vision Transformer (ViT‑L/14) used in the MedVLM. Two masks are created: a global mask M_g and a local mask M_l, each flattened and concatenated with newline separators to form a composite mask M =

Comments & Academic Discussion

Loading comments...

Leave a Comment