PhysFire-WM: A Physics-Informed World Model for Emulating Fire Spread Dynamics

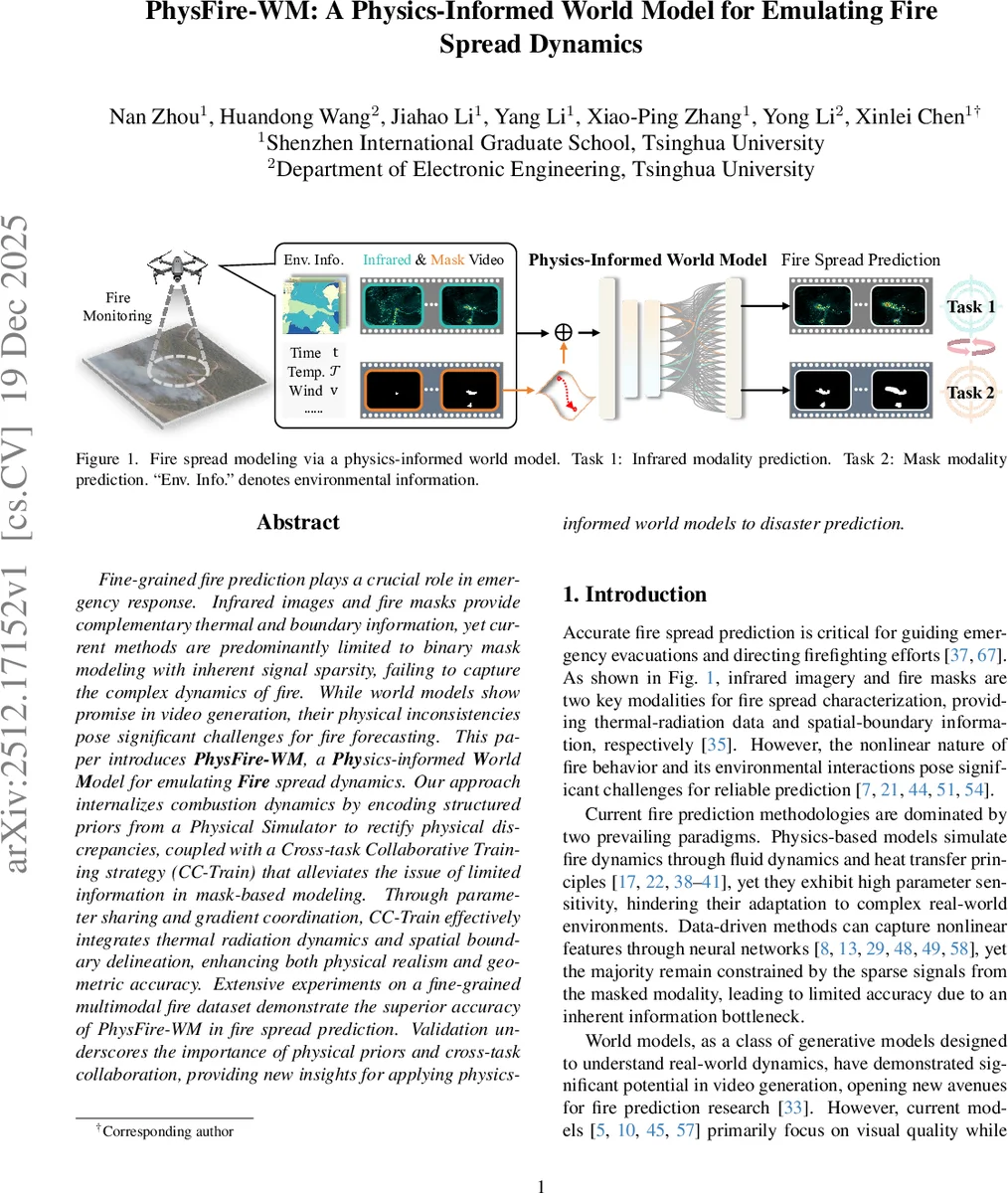

Fine-grained fire prediction plays a crucial role in emergency response. Infrared images and fire masks provide complementary thermal and boundary information, yet current methods are predominantly limited to binary mask modeling with inherent signal sparsity, failing to capture the complex dynamics of fire. While world models show promise in video generation, their physical inconsistencies pose significant challenges for fire forecasting. This paper introduces PhysFire-WM, a Physics-informed World Model for emulating Fire spread dynamics. Our approach internalizes combustion dynamics by encoding structured priors from a Physical Simulator to rectify physical discrepancies, coupled with a Cross-task Collaborative Training strategy (CC-Train) that alleviates the issue of limited information in mask-based modeling. Through parameter sharing and gradient coordination, CC-Train effectively integrates thermal radiation dynamics and spatial boundary delineation, enhancing both physical realism and geometric accuracy. Extensive experiments on a fine-grained multimodal fire dataset demonstrate the superior accuracy of PhysFire-WM in fire spread prediction. Validation underscores the importance of physical priors and cross-task collaboration, providing new insights for applying physics-informed world models to disaster prediction.

💡 Research Summary

PhysFire‑WM introduces a novel physics‑informed world model for fine‑grained fire spread prediction, addressing two fundamental challenges: (C1) ensuring physical consistency with the underlying combustion dynamics, and (C2) exploiting the complementary information of infrared (thermal radiation) and fire‑mask (spatial boundary) modalities. The authors first construct a physical simulator (Pϕ) that numerically solves the heat‑balance partial differential equation governing temperature evolution, wind advection, terrain effects, and fuel consumption. The simulator outputs structured physical priors—spatiotemporal tensors encoding temperature gradients, wind fields, and combustion rates—which are later injected as conditional tokens into a diffusion‑transformer (DiT) backbone.
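The summary does not give the exact heat-balance equation or its discretization, so the following is only a minimal sketch of what one simulator step could look like. It assumes a generic advection-diffusion-reaction form, dT/dt = κ∇²T − u·∇T + Q·r·F − h(T − T_amb) with fuel decay dF/dt = −r·F once a cell exceeds an ignition temperature. The function name `simulate_step`, every coefficient value, the uniform wind, and the omission of terrain are illustrative assumptions, not the authors' simulator.

```python
def simulate_step(T, F, u, v, dt=0.1, dx=1.0, kappa=0.5, heat=1000.0,
                  rate=0.2, cooling=0.05, t_amb=300.0, t_ign=500.0):
    """One explicit Euler step for temperature T and fuel F (2D lists).

    All coefficients are hypothetical placeholders. Wind (u, v) is uniform:
    u acts along columns (index j), v along rows (index i).
    Boundary cells are left unchanged.
    """
    rows, cols = len(T), len(T[0])
    T_new = [row[:] for row in T]  # copy so the inputs are not mutated
    F_new = [row[:] for row in F]
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            t = T[i][j]
            # Diffusion: five-point Laplacian.
            lap = (T[i + 1][j] + T[i - 1][j] + T[i][j + 1] + T[i][j - 1]
                   - 4.0 * t) / dx ** 2
            # Advection: first-order upwind differences, chosen by wind sign.
            dtdx = (t - T[i][j - 1]) / dx if u >= 0 else (T[i][j + 1] - t) / dx
            dtdy = (t - T[i - 1][j]) / dx if v >= 0 else (T[i + 1][j] - t) / dx
            # Combustion: fuel burns only above the ignition temperature.
            burn = rate * F[i][j] if t > t_ign else 0.0
            d_temp = (kappa * lap - u * dtdx - v * dtdy
                      + heat * burn - cooling * (t - t_amb))
            T_new[i][j] = t + dt * d_temp
            F_new[i][j] = F[i][j] - dt * burn
    return T_new, F_new


# Usage: a single hot cell in an ambient field, wind blowing toward +j.
T0 = [[300.0] * 21 for _ in range(21)]
F0 = [[1.0] * 21 for _ in range(21)]
T0[10][10] = 800.0
T1, F1 = simulate_step(T0, F0, u=2.0, v=0.0)
```

With these placeholder values the explicit scheme stays within the usual stability limits (κ·dt/dx² = 0.05 and |u|·dt/dx = 0.2). After one step the cell downwind of the ignition point is warmer than the cell upwind of it, which is the kind of directional structure a physical prior is meant to carry.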

The backbone is based on the WAN architecture, a DiT‑based video generation model, fine‑tuned with Low‑Rank Adaptation (LoRA) for parameter efficiency. A multimodal tokenizer (Eη) unifies four input streams—video frames, binary masks, textual prompts, and the physical priors—into a single token sequence via a context adapter that decouples reactive (modifiable) and inactive (preserved) regions according to the mask.
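The mask-based decoupling described above can be illustrated with a small sketch. It assumes the convention that a mask value of 1 marks a modifiable (reactive) pixel and 0 marks a preserved (inactive) pixel; the summary does not state the convention, and the helper name `decouple_by_mask` is hypothetical.

```python
def decouple_by_mask(frame, mask):
    """Split a 2D frame into reactive and inactive parts.

    Assumed convention: mask == 1 marks modifiable pixels, mask == 0 marks
    preserved pixels. Each output keeps its own region and zeros the rest.
    """
    reactive = [[p * m for p, m in zip(f_row, m_row)]
                for f_row, m_row in zip(frame, mask)]
    inactive = [[p * (1 - m) for p, m in zip(f_row, m_row)]
                for f_row, m_row in zip(frame, mask)]
    return reactive, inactive


# Usage: the two parts are complementary and sum back to the original frame.
frame = [[0.2, 0.8], [0.5, 0.1]]
mask = [[1, 0], [0, 1]]
reactive, inactive = decouple_by_mask(frame, mask)
```

Because the two parts never overlap, the model can be asked to regenerate the reactive region while the inactive region passes through as fixed context.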

To bridge the information gap inherent in binary‑mask‑only modeling, the paper proposes Cross‑Task Collaborative Training (CC‑Train). Two tasks are learned jointly: infrared video prediction (Task 1) and fire‑mask prediction (Task 2). Shared model parameters and a weighted loss L = λ₁L_IR + λ₂L_mask enable gradient coordination, so that thermal distribution consistency and boundary geometric precision reinforce each other. The physical priors act as a regularizer, guiding the cross‑attention layers to respect energy conservation, wind direction, and terrain‑induced acceleration, thereby preventing physically implausible artifacts such as up‑wind fire propagation.
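As a rough illustration of the weighted objective L = λ₁L_IR + λ₂L_mask, the sketch below combines two per-task losses over flat lists of values. The summary specifies only the weighted sum, so the choice of mean squared error for the infrared task and binary cross-entropy for the mask task is an assumption (a diffusion model would more typically use a denoising objective), and the names `cc_train_loss`, `lam_ir`, and `lam_mask` are hypothetical.

```python
import math


def mse(pred, target):
    """Mean squared error over two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def bce(prob, label, eps=1e-7):
    """Binary cross-entropy; probabilities are clipped away from 0 and 1."""
    total = 0.0
    for p, y in zip(prob, label):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(prob)


def cc_train_loss(ir_pred, ir_true, mask_prob, mask_true,
                  lam_ir=1.0, lam_mask=1.0):
    """Weighted sum of the two task losses (assumed loss forms)."""
    return lam_ir * mse(ir_pred, ir_true) + lam_mask * bce(mask_prob, mask_true)


# Usage: toy values for two infrared pixels and two mask pixels.
loss = cc_train_loss([1.0, 2.0], [1.0, 4.0], [0.5, 0.5], [1, 0],
                     lam_ir=1.0, lam_mask=0.5)
```

Since both terms depend on the same shared parameters, the gradient of the total is λ₁∇L_IR + λ₂∇L_mask, so the weights directly set how strongly each task steers the shared backbone.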

Experiments are conducted on a custom high-resolution multimodal fire dataset (512 × 512, 10 fps, 5 000 time steps) covering diverse vegetation, wind, and terrain conditions. Evaluation metrics include PSNR and SSIM for video quality, IoU and Dice for mask accuracy, and an Energy Conservation Error (ECE) to quantify physical fidelity. PhysFire-WM outperforms state-of-the-art video-generation world models (Sora, Genie, Cosmos) and data-driven fire predictors (UNet-Heat, Transformer-Fire), with gains of 2.1 dB in PSNR, 0.03 in SSIM, and 0.07 in IoU, while achieving the lowest ECE (4.3 %). Visual inspection shows fire fronts advancing with the wind and thermal fields diminishing as fuel is consumed, consistent with the simulated physics.
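For reference, three of the metrics named above have standard definitions that can be sketched over flat lists of pixel values. The handling of empty masks (returning 1.0) and the default `max_val=1.0` are conventions assumed here. SSIM is left out for brevity, and ECE is left out because the summary does not define it.

```python
import math


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_val^2 / MSE)."""
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical inputs
    return 10.0 * math.log10(max_val ** 2 / mse)


def iou(pred_mask, true_mask):
    """Intersection over union of two binary masks."""
    inter = sum(1 for p, t in zip(pred_mask, true_mask) if p and t)
    union = sum(1 for p, t in zip(pred_mask, true_mask) if p or t)
    return inter / union if union else 1.0  # both masks empty: assumed 1.0


def dice(pred_mask, true_mask):
    """Dice coefficient: 2 * |A and B| / (|A| + |B|)."""
    inter = sum(1 for p, t in zip(pred_mask, true_mask) if p and t)
    total = sum(1 for p in pred_mask if p) + sum(1 for t in true_mask if t)
    return 2.0 * inter / total if total else 1.0  # both masks empty: assumed 1.0


# Usage: two 4-pixel masks that agree on one fire pixel.
score_iou = iou([1, 1, 0, 0], [1, 0, 1, 0])
score_dice = dice([1, 1, 0, 0], [1, 0, 1, 0])
```

IoU and Dice are monotonically related (Dice = 2·IoU / (1 + IoU)), so they rank predictions identically on a single mask pair; reporting both mainly eases comparison with prior work.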

Ablation studies confirm that removing the physical priors leads to increased physical violations (e.g., reverse‑wind spread), while omitting CC‑Train degrades boundary precision. The combination of both components yields the best performance, demonstrating their synergistic effect.

The authors discuss limitations: the fidelity of the physical simulator directly influences prior quality, and complex terrain or atmospheric conditions increase computational cost. The current framework handles only infrared and mask modalities; extending to smoke, gas, or acoustic sensors could further enrich predictions.

In conclusion, PhysFire‑WM successfully integrates structured physical knowledge with a cross‑modal collaborative training scheme, delivering fire spread forecasts that are both physically plausible and geometrically accurate. The work provides a scalable blueprint for applying physics‑informed world models to broader disaster‑prediction tasks such as floods or landslides.
