AdaTooler-V: Adaptive Tool-Use for Images and Videos

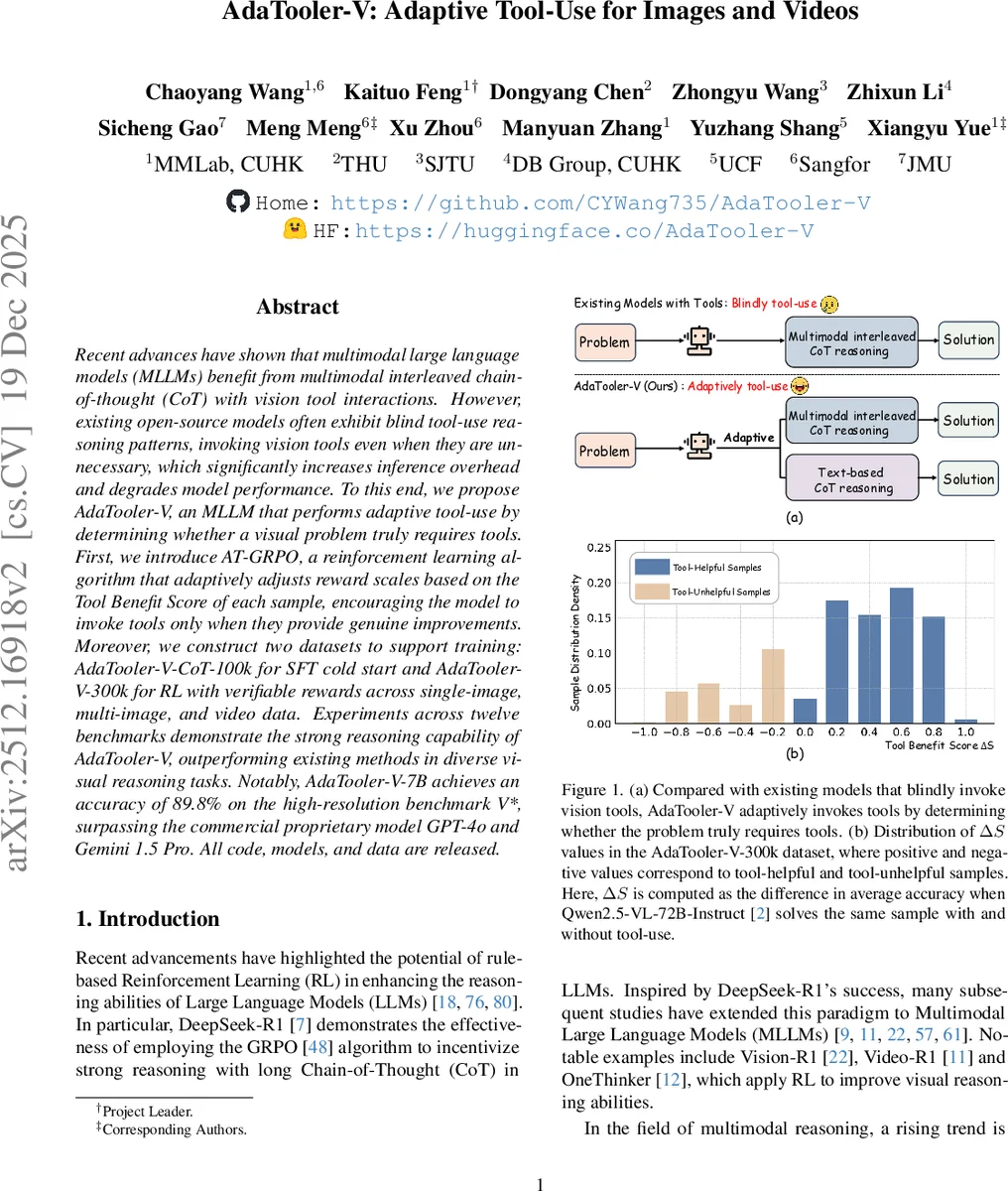

Recent advances have shown that multimodal large language models (MLLMs) benefit from multimodal interleaved chain-of-thought (CoT) with vision tool interactions. However, existing open-source models often exhibit blind tool-use reasoning patterns, invoking vision tools even when they are unnecessary, which significantly increases inference overhead and degrades model performance. To this end, we propose AdaTooler-V, an MLLM that performs adaptive tool-use by determining whether a visual problem truly requires tools. First, we introduce AT-GRPO, a reinforcement learning algorithm that adaptively adjusts reward scales based on the Tool Benefit Score of each sample, encouraging the model to invoke tools only when they provide genuine improvements. Moreover, we construct two datasets to support training: AdaTooler-V-CoT-100k for SFT cold start and AdaTooler-V-300k for RL with verifiable rewards across single-image, multi-image, and video data. Experiments across twelve benchmarks demonstrate the strong reasoning capability of AdaTooler-V, outperforming existing methods in diverse visual reasoning tasks. Notably, AdaTooler-V-7B achieves an accuracy of 89.8% on the high-resolution benchmark V*, surpassing the commercial proprietary model GPT-4o and Gemini 1.5 Pro. All code, models, and data are released.

💡 Research Summary

AdaTooler‑V introduces a novel approach to multimodal large language models (MLLMs) that intelligently decides when to invoke visual tools during reasoning. The authors identify a critical flaw in existing “thinking with images” systems: they indiscriminately call vision tools (e.g., cropping, frame extraction) even for queries that can be solved with pure text‑based chain‑of‑thought (CoT), leading to unnecessary computational overhead, over‑thinking, and sometimes degraded accuracy.

To address this, the paper proposes two key innovations. First, a Tool Benefit Score (ΔS) is defined for each sample as the difference in average accuracy when the same question is answered with and without tool usage. Positive ΔS indicates genuine benefit, while negative ΔS signals that tools are harmful. Second, the authors develop AT‑GRPO (Adaptive Tool‑use GRPO), a reinforcement‑learning algorithm that modifies the standard GRPO reward scaling by multiplying the reward with the sample‑specific ΔS. This dynamic scaling rewards tool invocations only when they provide measurable improvement and penalizes redundant or detrimental calls, thereby teaching the policy model a meta‑decision: “should I use a tool?”.

Training proceeds in two stages. The supervised fine‑tuning (SFT) stage uses AdaTooler‑V‑CoT‑100k, a curated dataset of 100 k multi‑turn tool‑interaction trajectories that embed both text‑based CoT and tool‑augmented reasoning patterns. This stage establishes strong baseline reasoning and tool‑calling syntax. The subsequent reinforcement‑learning (RL) stage leverages AdaTooler‑V‑300k, comprising 300 k samples spanning single‑image, multi‑image, and video modalities, and covering tasks such as visual mathematics, logical reasoning, spatial understanding, and counting. Each sample is annotated with its ΔS, enabling AT‑GRPO to apply precise, sample‑aware rewards.

Extensive evaluation across twelve diverse benchmarks—including image VQA, video QA, visual math, spatial reasoning, and visual counting—demonstrates that AdaTooler‑V consistently outperforms prior state‑of‑the‑art methods. Notably, the 7‑billion‑parameter model achieves 89.8 % accuracy on the high‑resolution V* benchmark, surpassing proprietary systems like GPT‑4o and Gemini 1.5 Pro. Moreover, the adaptive tool‑use strategy reduces average tool invocations by roughly 30 %, yielding significant inference‑time savings without sacrificing accuracy. Ablation studies confirm that removing the ΔS‑based scaling (i.e., using vanilla GRPO) leads to tool over‑use and a 3‑5 % drop in overall performance.

Qualitative examples illustrate the model’s flexibility: for a multi‑image clock‑reading problem, AdaTooler‑V solves it entirely with text‑based CoT, while for a video‑based chronological reasoning task it iteratively extracts relevant frames, zooms in on details, and refines its answer, showcasing seamless integration of tool calls when needed.

In summary, AdaTooler‑V provides the first concrete framework for end‑to‑end learning of “when to use tools” in multimodal LLMs. By quantifying tool utility with ΔS and embedding this signal into the RL reward, the system achieves both higher accuracy and lower computational cost. The released code, models, and datasets (AdaTooler‑V‑CoT‑100k and AdaTooler‑V‑300k) open avenues for extending adaptive tool‑use to richer toolsets (e.g., OCR, 3D rendering) and more complex real‑world applications, marking a significant step toward practical, efficient multimodal AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment