CLUENet: Cluster Attention Makes Neural Networks Have Eyes

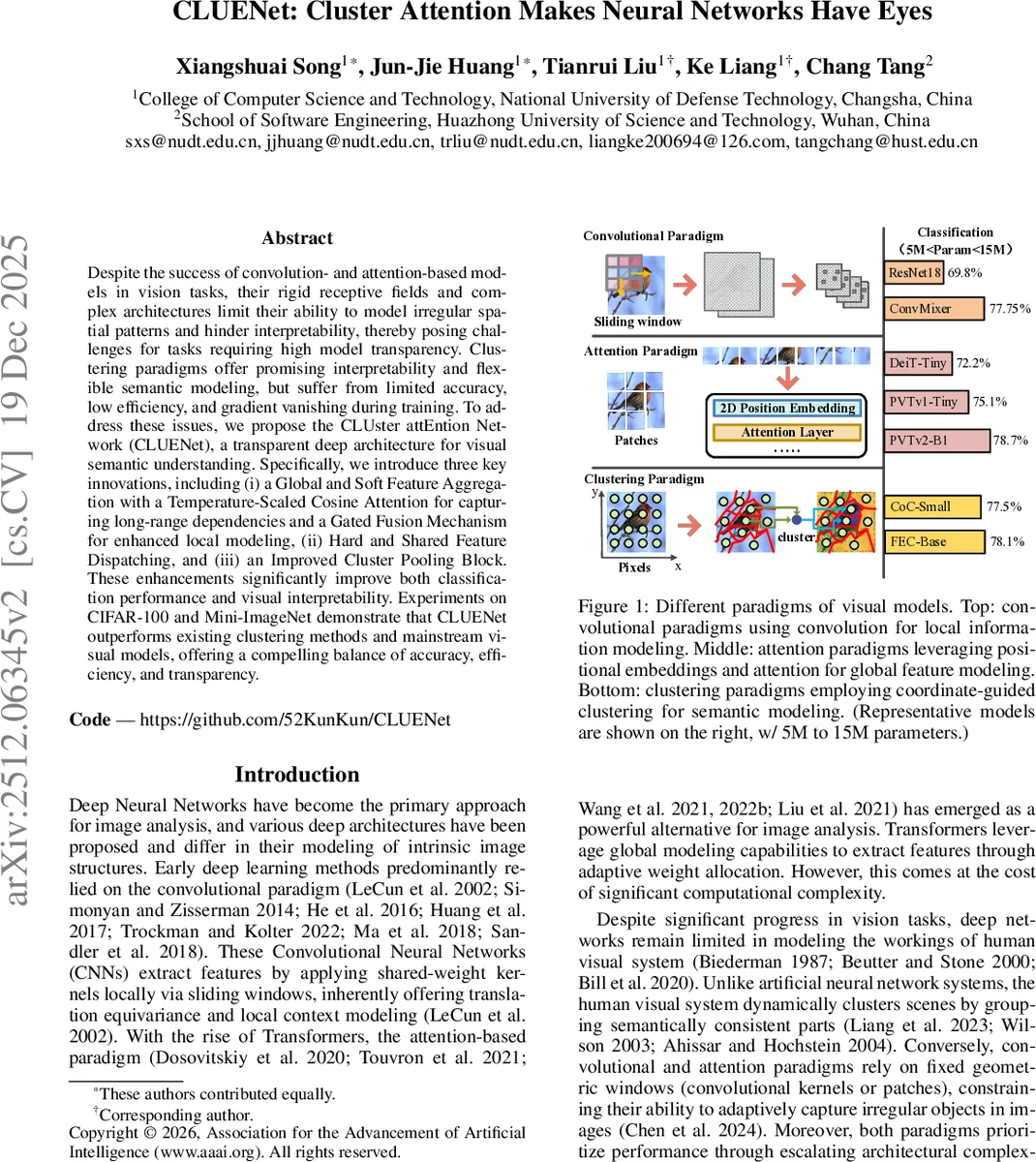

Despite the success of convolution- and attention-based models in vision tasks, their rigid receptive fields and complex architectures limit their ability to model irregular spatial patterns and hinder interpretability, therefore posing challenges for tasks requiring high model transparency. Clustering paradigms offer promising interpretability and flexible semantic modeling, but suffer from limited accuracy, low efficiency, and gradient vanishing during training. To address these issues, we propose CLUster attEntion Network (CLUENet), an transparent deep architecture for visual semantic understanding. We propose three key innovations include (i) a Global Soft Aggregation and Hard Assignment with a Temperature-Scaled Cosin Attention and gated residual connections for enhanced local modeling, (ii) inter-block Hard and Shared Feature Dispatching, and (iii) an improved cluster pooling strategy. These enhancements significantly improve both classification performance and visual interpretability. Experiments on CIFAR-100 and Mini-ImageNet demonstrate that CLUENet outperforms existing clustering methods and mainstream visual models, offering a compelling balance of accuracy, efficiency, and transparency.

💡 Research Summary

**

The paper introduces CLUENet, a novel deep architecture for visual semantic understanding that leverages a clustering paradigm to achieve both high accuracy and intrinsic interpretability. Traditional convolutional networks and Vision Transformers excel at performance but suffer from rigid receptive fields and opaque internal mechanisms, making it difficult to model irregular spatial patterns and to provide transparent explanations. Existing clustering‑based models (e.g., CoC, FEC, ClusterFormer) address interpretability but lag behind state‑of‑the‑art accuracy, are limited to local windows, and suffer from gradient‑vanishing in their pooling layers.

CLUENet tackles these shortcomings through three main technical contributions:

-

Global Soft Feature Aggregation (GSFA) with Temperature‑Scaled Cosine Attention – Cluster centers are initialized via adaptive pooling and then updated by attending to all pixel features across the image. Cosine similarity is scaled by a learnable temperature τ and passed through a softmax, producing a similarity matrix that weights the aggregation of value‑space features. This global soft assignment captures long‑range dependencies and yields smooth, continuous clusters.

-

Gated Fusion Mechanism – To preserve local detail that could be washed out by global averaging, a lightweight gating network (sigmoid‑activated two‑layer MLP) blends the soft‑aggregated cluster representations with locally computed grid‑based centers. The gate learns per‑cluster weights, allowing the model to adaptively balance global context and fine‑grained information.

-

Hard and Shared Feature Dispatching (HSFD) – After soft aggregation, a hard assignment step converts the similarity matrix into a one‑hot mapping, enforcing exclusive pixel‑to‑cluster relationships that are easy to interpret. Crucially, the hard assignment matrix is shared among all blocks within the same stage, reducing redundant computation and stabilizing training.

-

Improved Cluster Pooling (ICP) – Instead of directly averaging features in value space (which can cause gradient vanishing), CLUENet first projects pixel features into a similarity space, performs clustering and pooling there, and finally maps the pooled representation back to the feature space via a perceptron. This design maintains gradient flow and improves learning dynamics.

The overall architecture follows a four‑stage pyramid (down‑sampling ratios 1/4, 1/8, 1/16, 1/32). Each stage contains a Positional‑aware Feature Embedding (PFE) block that injects learnable positional information using depth‑wise convolutions, followed by a series of GFC blocks (GSFA + HSFD) and an ICP block.

Experimental results demonstrate that CLUENet achieves state‑of‑the‑art performance among clustering‑based methods and competes favorably with mainstream CNNs and ViTs of comparable size. On CIFAR‑100, CLUENet reaches 76.55 % top‑1 accuracy (≈3 pp higher than the best prior clustering model). On Mini‑ImageNet, it attains 82.44 % top‑1 (≈2.6 pp improvement). The model uses 5–15 M parameters and 1.2–2.0 G FLOPs, comparable to ResNet‑18 or ViT‑Tiny, while providing explicit cluster visualizations that correspond to semantically meaningful image regions.

Limitations include: (i) the current design is limited to 2‑D images; extending to 3‑D point clouds or video sequences would require additional temporal or spatial mechanisms, (ii) the global soft attention’s memory cost scales with image size (O(H·W·m)), potentially problematic for very high‑resolution inputs, and (iii) the temperature τ and gating parameters can be sensitive during early training, necessitating careful hyper‑parameter tuning.

Future directions suggested by the authors involve multi‑scale clustering to capture semantics at various resolutions simultaneously, integrating temporal consistency for video, and designing hybrid loss functions that jointly optimize soft and hard assignments for a smoother accuracy‑interpretability trade‑off.

In summary, CLUENet presents a compelling synthesis of clustering theory and modern attention mechanisms, delivering a model that “has eyes” – it can both see (high performance) and explain (transparent clustering) – thereby advancing the quest for truly interpretable deep vision systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment