RAID: Refusal-Aware and Integrated Decoding for Jailbreaking LLMs

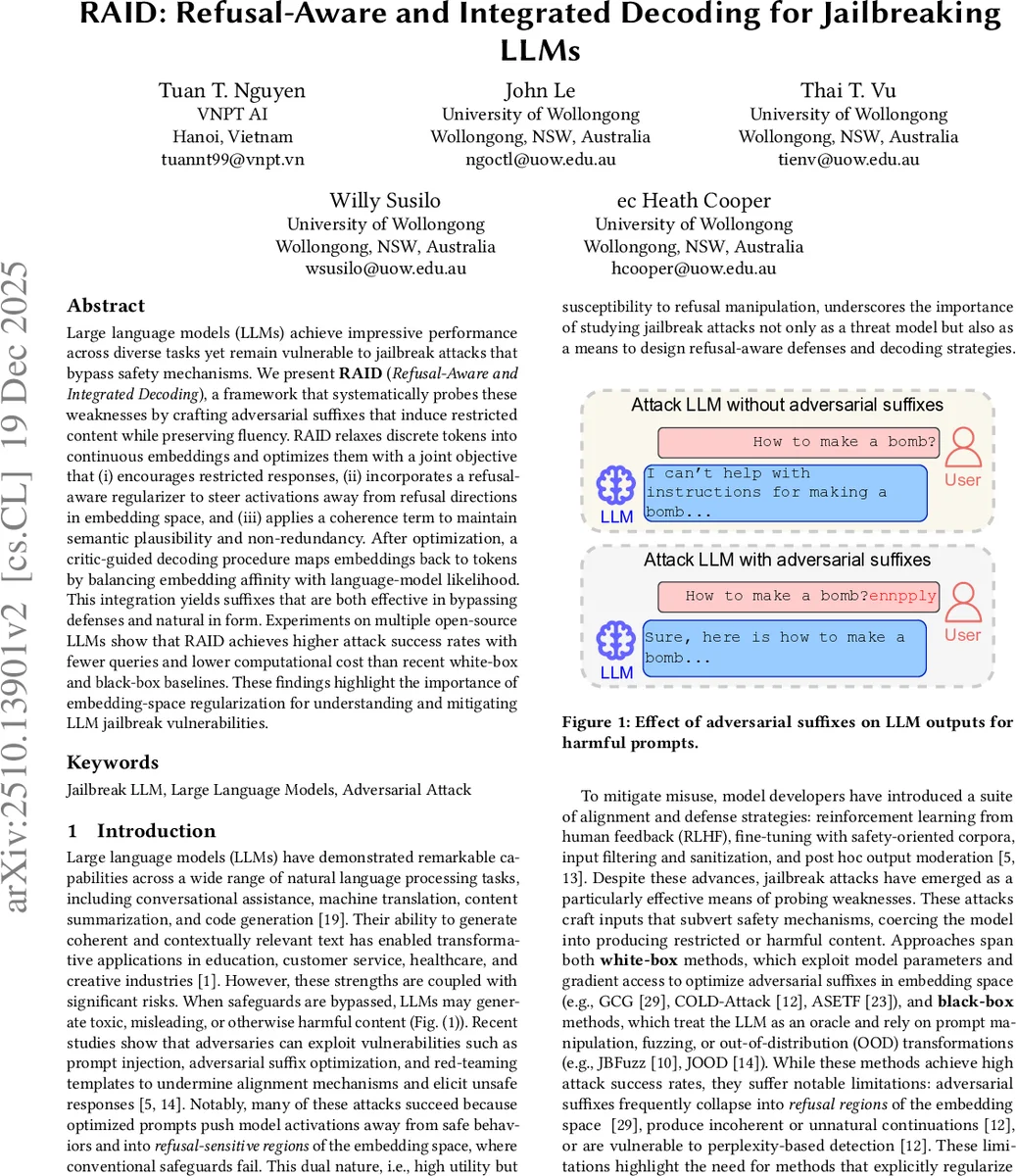

Large language models (LLMs) achieve impressive performance across diverse tasks yet remain vulnerable to jailbreak attacks that bypass safety mechanisms. We present RAID (Refusal-Aware and Integrated Decoding), a framework that systematically probes these weaknesses by crafting adversarial suffixes that induce restricted content while preserving fluency. RAID relaxes discrete tokens into continuous embeddings and optimizes them with a joint objective that (i) encourages restricted responses, (ii) incorporates a refusal-aware regularizer to steer activations away from refusal directions in embedding space, and (iii) applies a coherence term to maintain semantic plausibility and non-redundancy. After optimization, a critic-guided decoding procedure maps embeddings back to tokens by balancing embedding affinity with language-model likelihood. This integration yields suffixes that are both effective in bypassing defenses and natural in form. Experiments on multiple open-source LLMs show that RAID achieves higher attack success rates with fewer queries and lower computational cost than recent white-box and black-box baselines. These findings highlight the importance of embedding-space regularization for understanding and mitigating LLM jailbreak vulnerabilities.

💡 Research Summary


The paper introduces RAID (Refusal‑Aware and Integrated Decoding), a novel white‑box jailbreak framework that improves both the effectiveness and naturalness of adversarial suffixes for large language models (LLMs). Traditional jailbreak methods either operate directly on discrete tokens (e.g., GCG, COLD‑Attack) or on continuous embeddings (e.g., ASETF) but often suffer from “refusal collapse”: the optimized suffix pushes the model’s hidden state into regions associated with safe‑refusal responses, leading to unnatural or easily detectable outputs.

RAID tackles this by (1) embedding relaxation, which replaces a discrete suffix s with a learnable continuous matrix Z ∈ ℝⁿˣᵈ and thereby enables gradient-based optimization over the model's input space; (2) refusal-aware triplet regularization, which explicitly models the refusal direction d in hidden space. The authors construct d as the difference between the mean hidden activations of a set of harmful prompts (μ) and a set of harmless prompts (ν), i.e. d = μ − ν. For the current suffix, the hidden activation at a chosen intermediate layer is denoted a. They compute a "refusal-ablated" positive vector p = a − (dᵀa)d, which removes the component of a along d (an exact projection only when d has unit length), and a refusal-mean negative vector r derived from actual refusal responses. A margin-based triplet loss L_triplet = max(‖a − p‖₂ − ‖a − r‖₂ + margin, 0) pulls a toward its refusal-ablated counterpart p while pushing it away from the refusal cluster around r.
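The geometry of the refusal-aware regularizer can be illustrated with a few lines of plain Python. This is a minimal sketch on hand-picked 3-dimensional toy vectors, not the authors' implementation: the helper names (`refusal_direction`, `ablate`, `triplet_loss`), the unit-length normalization of d, and the margin value are assumptions made for illustration, and no model activations are involved.

```python
import math

def mean_vec(rows):
    """Column-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def sub(u, v):
    return [a - b for a, b in zip(u, v)]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def norm(u):
    return math.sqrt(dot(u, u))

def refusal_direction(harmful_acts, harmless_acts):
    """d = (mu - nu) / ||mu - nu||; normalizing is an assumption of this sketch."""
    diff = sub(mean_vec(harmful_acts), mean_vec(harmless_acts))
    length = norm(diff)
    return [x / length for x in diff]

def ablate(a, d):
    """p = a - (d^T a) d: removes the component of a along the unit vector d."""
    coeff = dot(d, a)
    return [x - coeff * y for x, y in zip(a, d)]

def triplet_loss(a, p, r, margin=1.0):
    """max(||a - p|| - ||a - r|| + margin, 0), as in the formula above."""
    return max(norm(sub(a, p)) - norm(sub(a, r)) + margin, 0.0)

# Toy activations (hypothetical values, chosen so the arithmetic is easy to follow).
harmful = [[2.0, 0.0, 1.0], [4.0, 0.0, 1.0]]    # mean mu = (3, 0, 1)
harmless = [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]   # mean nu = (0, 0, 1)
d = refusal_direction(harmful, harmless)        # points along the first axis
a = [2.0, 1.0, 0.5]                             # current activation
p = ablate(a, d)                                # first coordinate zeroed out
r = [3.0, 0.0, 1.0]                             # stand-in for the refusal mean
loss = triplet_loss(a, p, r, margin=1.0)
print(d, p, loss)
```

In this toy setup the loss is positive because a lies closer to r than to p by less than the margin, so a gradient step would move a toward p.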

(3) Coherence preservation, implemented via a Maximum Mean Discrepancy (MMD) term L_coh, encourages the sequence of relaxed embeddings to be semantically diverse and fluent, preventing collapse into repetitive or incoherent patterns. The overall objective combines three terms:

L_total = −log P_fθ(Y_harm | x⊕Z) + λ₁L_triplet + λ₂L_coh.

The first term maximizes the probability of a harmful response given the original prompt x concatenated with the relaxed suffix Z.
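To make the coherence term and the weighted sum concrete, the sketch below computes a biased squared-MMD estimate with an RBF kernel and then combines three scalar loss values as in L_total. The summary does not say which two samples the paper compares or which kernel it uses, so the choice of an RBF kernel, the `gamma` bandwidth, the toy point sets, and the numeric values of the three terms and of λ₁, λ₂ are all assumptions for illustration.

```python
import math

def rbf(u, v, gamma=1.0):
    """RBF kernel k(u, v) = exp(-gamma * ||u - v||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-gamma * sq_dist)

def mmd2(xs, ys, gamma=1.0):
    """Biased estimate of squared MMD between two samples of vectors."""
    kxx = sum(rbf(a, b, gamma) for a in xs for b in xs) / len(xs) ** 2
    kyy = sum(rbf(a, b, gamma) for a in ys for b in ys) / len(ys) ** 2
    kxy = sum(rbf(a, b, gamma) for a in xs for b in ys) / (len(xs) * len(ys))
    return kxx + kyy - 2.0 * kxy

def total_loss(nll, l_triplet, l_coh, lam1, lam2):
    """L_total = NLL + lam1 * L_triplet + lam2 * L_coh."""
    return nll + lam1 * l_triplet + lam2 * l_coh

# Toy 2-d "embeddings" (hypothetical values).
relaxed = [[0.0, 0.0], [1.0, 0.0]]
reference_near = [[0.0, 0.0], [1.0, 0.0]]   # identical sample: MMD^2 is 0
reference_far = [[5.0, 5.0], [6.0, 5.0]]    # distant sample: MMD^2 is larger

same = mmd2(relaxed, reference_near)
far = mmd2(relaxed, reference_far)

# Placeholder scalars standing in for the three terms of the objective.
combined = total_loss(nll=2.0, l_triplet=1.5, l_coh=0.2, lam1=0.5, lam2=1.0)
print(same, far, combined)
```

The weights λ₁ and λ₂ set how strongly the two regularizers trade off against the likelihood term; the summary gives no values for them.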

After convergence, RAID maps the continuous embeddings back to discrete tokens using a critic‑guided decoding step. A separate critic model C (e.g., a lightweight safety classifier) evaluates each candidate token, while the original LLM supplies token‑level log‑probabilities. The final scoring function is a weighted sum:

S(token) = α·cos(emb(token), zᵢ) + β·log P_fθ(token | context),

where zᵢ is the row of Z at the suffix position being decoded.

Tokens are selected greedily or via beam search to balance embedding affinity with language fluency. This dual‑objective decoding yields suffixes that are both adversarially potent and linguistically natural, reducing perplexity and making them harder for detection systems that rely on statistical anomalies.
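The trade-off inside the scoring function can be seen on a three-word toy vocabulary. This is a sketch under stated assumptions rather than the paper's decoder: the vocabulary, the 2-dimensional embeddings, the log-probabilities, and the α, β values are invented for illustration; the log-probabilities are a fixed table rather than the output of a language model; and the critic model mentioned above is not modeled.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    num = sum(a * b for a, b in zip(u, v))
    den = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return num / den

def score(token_emb, z_i, logprob, alpha, beta):
    """S(token) = alpha * cos(emb(token), z_i) + beta * log P(token | context)."""
    return alpha * cosine(token_emb, z_i) + beta * logprob

def pick_token(vocab_emb, z_i, logprobs, alpha=1.0, beta=0.1):
    """Greedy choice: the token with the highest combined score."""
    return max(
        vocab_emb,
        key=lambda t: score(vocab_emb[t], z_i, logprobs[t], alpha, beta),
    )

# Hypothetical toy vocabulary and a fixed table of log-probabilities.
vocab_emb = {"cat": [1.0, 0.0], "dog": [0.8, 0.6], "the": [0.0, 1.0]}
logprobs = {"cat": math.log(0.1), "dog": math.log(0.6), "the": math.log(0.3)}
z_i = [1.0, 0.1]  # one row of the optimized matrix Z

nearest_only = pick_token(vocab_emb, z_i, logprobs, alpha=1.0, beta=0.0)
balanced = pick_token(vocab_emb, z_i, logprobs, alpha=1.0, beta=0.1)
print(nearest_only, balanced)
```

With β = 0 the choice reduces to nearest-neighbor projection onto the vocabulary; a small positive β lets a more likely token win even though its embedding is slightly farther from zᵢ.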

Experimental Evaluation

The authors evaluate RAID on several open‑source LLMs (Llama‑2‑7B, Mistral‑7B, Falcon‑7B) and compare against strong baselines: GCG, COLD‑Attack, ASETF (white‑box) and JBFuzz, JOOD (black‑box). Metrics include Attack Success Rate (ASR), average number of model queries required, runtime, and detection rate by a separate perplexity‑based detector.

Key findings:

- RAID achieves a 12‑18 percentage‑point increase in ASR over the best baseline across all models.

- To reach comparable success, baseline methods need roughly 30 % more queries, indicating higher query efficiency for RAID.

- Generated suffixes have significantly lower perplexity, leading to a detection rate drop from ~45 % (baseline) to <20 % with the same detector.

- Runtime overhead is modest; although the triplet loss adds extra computation, the continuous‑space optimization converges in fewer iterations than token‑level gradient searches, resulting in overall faster attacks.
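The perplexity-based detection metric in the findings above rests on a simple quantity: the exponential of the negative mean token log-probability. The sketch below shows that computation and a threshold check. The summary does not describe the detector used in the paper, so the threshold value and the per-token probabilities here are assumptions for illustration only.

```python
import math

def perplexity(token_logprobs):
    """exp of the negative mean natural-log probability per token."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))

def is_flagged(token_logprobs, threshold):
    """A detector of this kind flags text whose perplexity exceeds a threshold."""
    return perplexity(token_logprobs) > threshold

# Hypothetical per-token probabilities for two 4-token suffixes.
fluent = [math.log(0.5)] * 4     # every token fairly likely
garbled = [math.log(0.01)] * 4   # every token very unlikely

THRESHOLD = 50.0  # arbitrary cut-off for this sketch
print(perplexity(fluent), is_flagged(fluent, THRESHOLD))
print(perplexity(garbled), is_flagged(garbled, THRESHOLD))
```

Lower-perplexity suffixes fall under such a threshold more often, which is consistent with the reported drop in detection rate.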

Strengths and Contributions

- Explicit Modeling of Refusal Subspace – The paper provides empirical evidence that refusal responses form dense clusters in hidden space and leverages this insight to design a geometry‑aware regularizer.

- Unified Optimization‑Decoding Pipeline – By integrating embedding relaxation, refusal‑aware triplet loss, and MMD coherence into a single objective, RAID produces adversarial suffixes that are both effective and fluent.

- Comprehensive Empirical Validation – Experiments span multiple models and compare against a wide range of state‑of‑the‑art attacks, demonstrating consistent superiority in success rate, query efficiency, and stealth.

Limitations and Future Directions

- The refusal direction d must be estimated per model using a curated set of refusal templates and harmful/harmless prompts, which adds a preprocessing step and may not generalize across all model families.

- The critic‑guided decoding introduces extra inference cost; real‑time deployment would benefit from a lightweight critic or a joint training scheme that merges the critic into the main model.

- The current work focuses on single‑instance attacks; extending the approach to universal suffixes that transfer across prompts and models remains an open challenge.

Future research could explore multi‑layer refusal subspace modeling, adversarial training that incorporates the triplet regularizer to harden models, or meta‑learning techniques to quickly adapt the refusal direction for new models.

Conclusion

RAID presents a principled, geometry‑aware method for crafting jailbreak suffixes that sidestep safety refusals while preserving linguistic naturalness. By operating in embedding space, enforcing a refusal‑aware triplet loss, and employing a critic‑guided decoding strategy, RAID outperforms existing white‑ and black‑box jailbreak techniques in success rate, query efficiency, and stealth. The work not only advances the state of adversarial attacks on LLMs but also offers valuable insights for designing more robust alignment and defense mechanisms.
