The Diffusion Duality

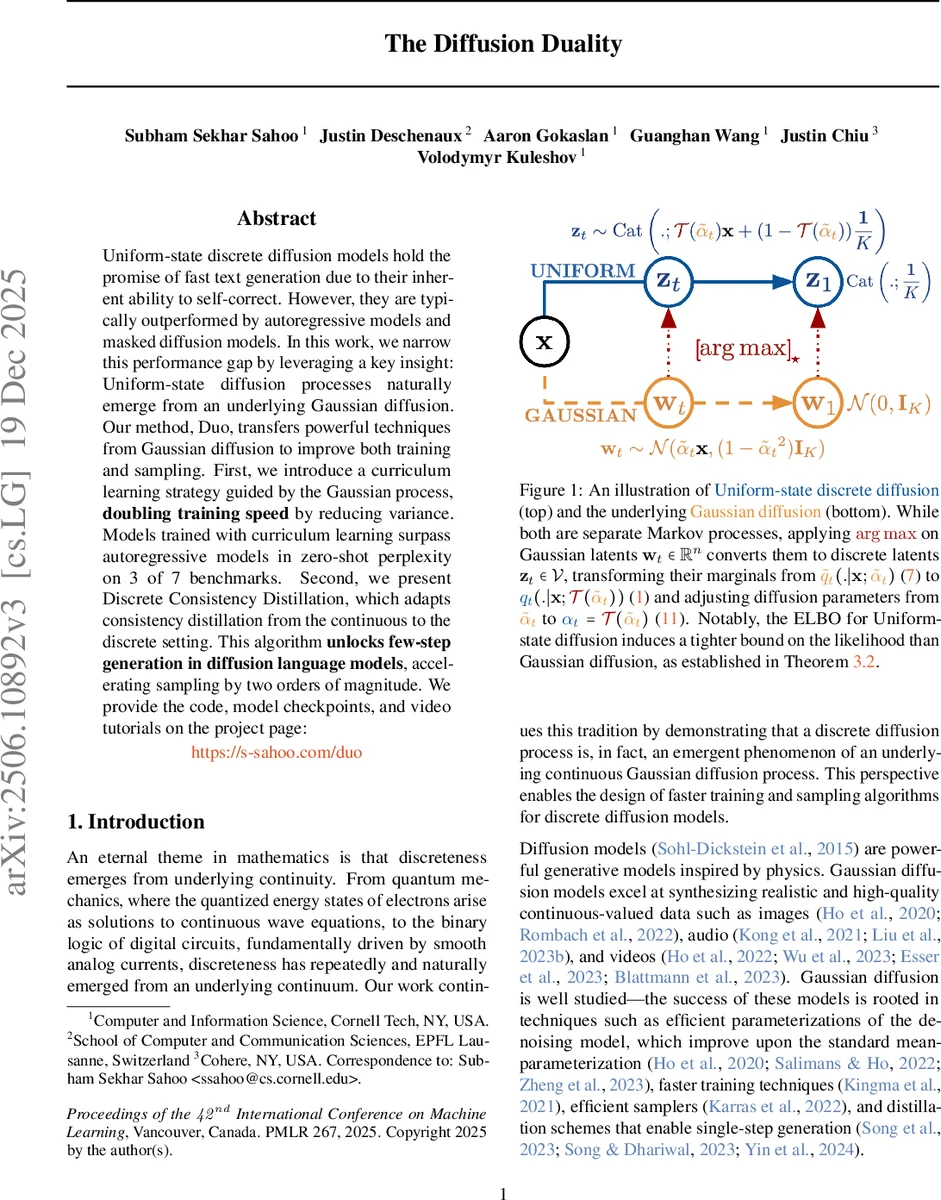

Uniform-state discrete diffusion models hold the promise of fast text generation due to their inherent ability to self-correct. However, they are typically outperformed by autoregressive models and masked diffusion models. In this work, we narrow this performance gap by leveraging a key insight: Uniform-state diffusion processes naturally emerge from an underlying Gaussian diffusion. Our method, Duo, transfers powerful techniques from Gaussian diffusion to improve both training and sampling. First, we introduce a curriculum learning strategy guided by the Gaussian process, doubling training speed by reducing variance. Models trained with curriculum learning surpass autoregressive models in zero-shot perplexity on 3 of 7 benchmarks. Second, we present Discrete Consistency Distillation, which adapts consistency distillation from the continuous to the discrete setting. This algorithm unlocks few-step generation in diffusion language models by accelerating sampling by two orders of magnitude. We provide the code, model checkpoints, and video tutorials on the project page: http://s-sahoo.github.io/duo

💡 Research Summary

The paper “The Diffusion Duality” introduces a theoretical and practical bridge between uniform‑state discrete diffusion models (USDMs) for text and the well‑studied continuous Gaussian diffusion framework. The authors prove that applying an arg‑max operation to the latent vectors of a Gaussian diffusion process yields exactly the marginal distribution of a USDM, with a deterministic transformation T that maps the Gaussian diffusion coefficient α̃ₜ to the discrete diffusion coefficient αₜ. This “diffusion duality” shows that USDMs can be viewed as the discretized push‑forward of a Gaussian diffusion, establishing a rigorous connection between the two domains.

Leveraging this insight, the authors transfer two powerful techniques from Gaussian diffusion to the discrete setting. First, they design a curriculum learning schedule guided by the Gaussian process: early training steps use high‑noise (large α̃ₜ) samples, and the noise level is gradually reduced as training progresses. This reduces variance in the training objective and effectively doubles training speed without sacrificing model quality. Second, they adapt consistency distillation—originally developed for continuous diffusion—to the discrete case. By using a teacher model (typically an EMA of the student) to generate a low‑noise latent via a deterministic Probability‑Flow ODE step, the student is trained to map a higher‑noise latent directly to the low‑noise one. The loss combines an L₂ distance between teacher and student outputs with a time‑dependent weighting λ(t). This “Discrete Consistency Distillation” reduces the number of reverse‑diffusion steps (NFEs) from the typical 1024 down to as few as 8, achieving a two‑order‑of‑magnitude speed‑up in sampling while preserving text quality.

The paper also provides a likelihood analysis. Theorem 3.2 proves that, for the same data distribution, the marginal log‑likelihood of the discrete diffusion model is always at least as high as that of its underlying Gaussian counterpart, implying that the discretized model can capture the data distribution more tightly.

Empirically, the authors evaluate their Duo framework on seven zero‑shot language generation benchmarks. With curriculum learning, the USDMs surpass autoregressive (AR) baselines on three datasets in perplexity, demonstrating competitive performance. When combined with discrete consistency distillation, the models achieve fast few‑step generation: in low‑NFE regimes (≤8 steps) they outperform Masked Discrete Diffusion Models (MDMs), which traditionally require many more steps or predictor‑corrector loops. Overall, training time is halved and sampling speed is increased by roughly 100× compared to standard USDM training and inference.

In summary, the work establishes a mathematically sound “diffusion duality” that unifies continuous and discrete diffusion processes, and it translates two key advances—curriculum learning and consistency distillation—from the Gaussian world to discrete text generation. This results in faster training, dramatically accelerated sampling, and competitive or superior performance relative to both AR models and existing discrete diffusion approaches, marking a significant step toward making diffusion‑based language models practical for real‑world applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment