On Dequantization of Supervised Quantum Machine Learning via Random Fourier Features

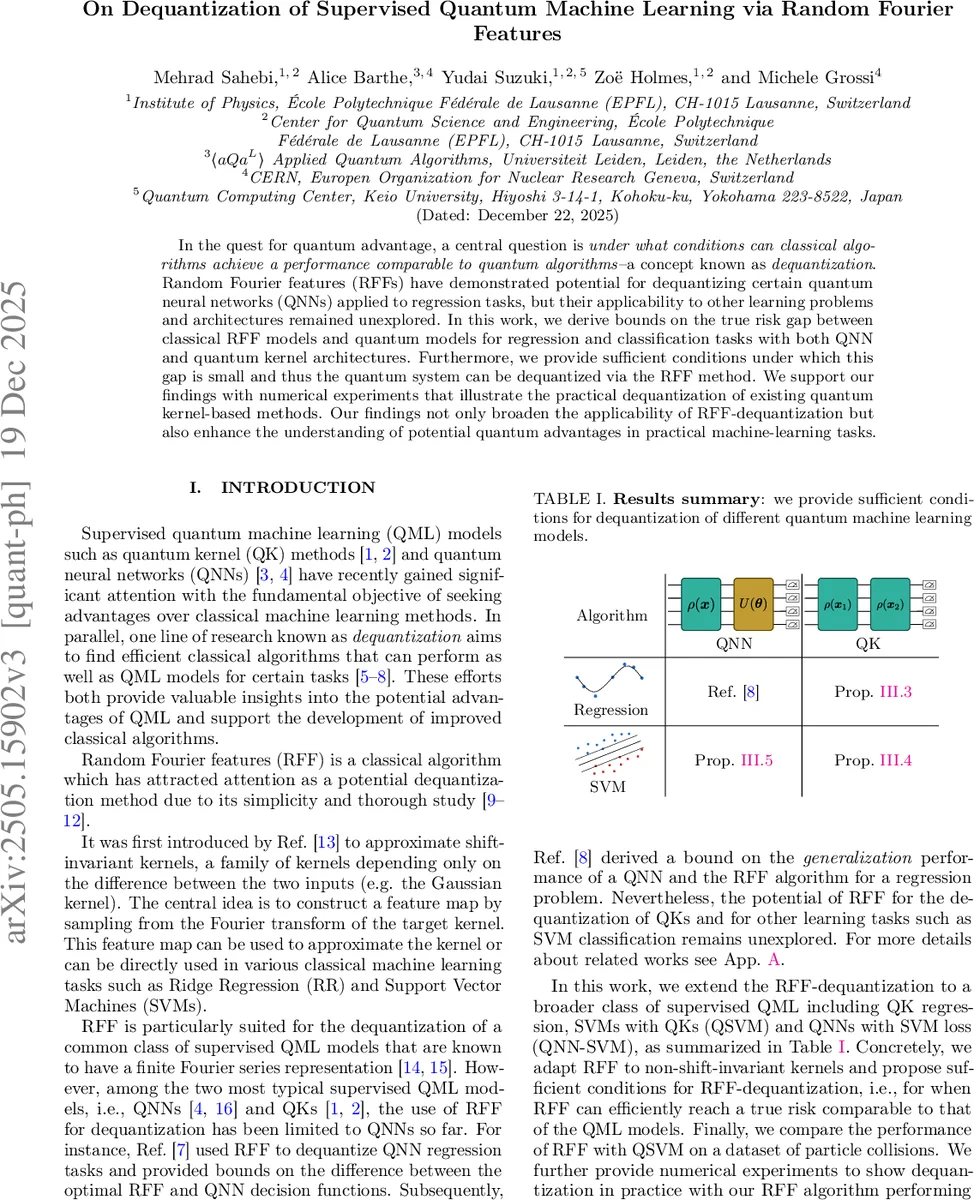

In the quest for quantum advantage, a central question is under what conditions can classical algorithms achieve a performance comparable to quantum algorithms–a concept known as dequantization. Random Fourier features (RFFs) have demonstrated potential for dequantizing certain quantum neural networks (QNNs) applied to regression tasks, but their applicability to other learning problems and architectures remained unexplored. In this work, we derive bounds on the true risk gap between classical RFF models and quantum models for regression and classification tasks with both QNN and quantum kernel architectures. Furthermore, we provide sufficient conditions under which this gap is small and thus the quantum system can be dequantized via the RFF method. We support our findings with numerical experiments that illustrate the practical dequantization of existing quantum kernel-based methods. Our findings not only broaden the applicability of RFF-dequantization but also enhance the understanding of potential quantum advantages in practical machine-learning tasks.

💡 Research Summary

The paper investigates the conditions under which classical algorithms can match the performance of quantum supervised learning models—a process known as dequantization—by leveraging Random Fourier Features (RFF). While prior work demonstrated that RFF can dequantize quantum neural networks (QNNs) for regression, the applicability to quantum kernel (QK) methods and classification tasks remained open. This work fills that gap by deriving risk‑gap bounds for both regression and support‑vector‑machine (SVM) classification when the quantum model is either a QNN or a QK.

The authors begin by formalizing the supervised learning setting, introducing kernel methods, and reviewing the classical RFF construction based on Bochner’s theorem for shift‑invariant kernels. They then describe the quantum setting: QKs are defined via fidelity of quantum states prepared by Hamiltonian encodings, and QNNs are expressed as parameterized circuits with data‑encoding layers. Crucially, under Hamiltonian encoding, both QNN outputs and QK kernels admit a Fourier series representation. For QNNs this yields a cosine‑sine feature map (Eq. 8), directly aligning with the RFF construction. For QKs, the authors prove (Lemma III.1) that any fidelity‑based kernel can be written as a double sum over frequencies with a positive‑semidefinite matrix F; the diagonal of F defines a probability mass function q over the frequency set Ω.

Using this representation, they propose a generalized RFF algorithm (Algorithm 3 in the appendix) that samples frequencies from the eigenstructure of F, thereby approximating non‑stationary kernels. The required number of samples scales as D = O(|Ω|² d ε⁻² log (1/ε²)), matching the classic bound for stationary kernels but highlighting an exponential dependence on the input dimension d through |Ω|.

The core theoretical contribution is a set of sufficient conditions for dequantization (Definition II.3). For regression, three conditions are identified:

- Sampling efficiency – the distribution p used by RFF must be easy to sample.

- Concentration – the maximum probability p_max must satisfy p_max⁻¹ = poly(d), i.e., p should not be overly flat.

- Alignment – p_ω should be proportional to the magnitude of the optimal quantum model’s Fourier coefficients |c_ω|.

These conditions guarantee that the true risk of the RFF estimator, R

Comments & Academic Discussion

Loading comments...

Leave a Comment