FrameDiffuser: G-Buffer-Conditioned Diffusion for Neural Forward Frame Rendering

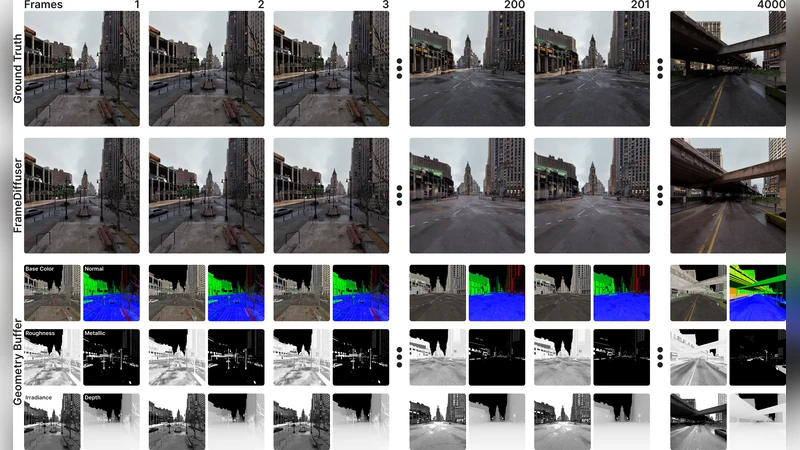

Figure 1 . G-buffer to Photorealistic Rendering. FrameDiffuser transforms geometric and material data from G-buffer into photorealistic rendered images with realistic global illumination (GI), shadows, and reflections. Our autoregressive approach maintains temporal consistency for long sequences, enabling neural rendering for interactive applications.

💡 Research Summary

FrameDiffuser introduces a novel approach to neural forward frame rendering by conditioning a diffusion model on G‑buffer data, which includes depth, normal, albedo, metallic, roughness, and other material properties. The core idea is to treat the G‑buffer as a rich, spatially aligned representation of scene geometry and surface characteristics, and feed it into a conditional denoising diffusion probabilistic model (DDPM). A UNet‑style denoiser receives the G‑buffer at multiple scales through dedicated condition‑injection layers, allowing the network to learn how light interacts with the underlying geometry and materials without explicit physics simulation.

Temporal consistency, a long‑standing challenge for neural rendering, is addressed with an autoregressive scheme. For each time step t, the model receives the current G‑buffer, the previously generated frame (t‑1), and the corresponding noise level. This design enables the diffusion process to propagate information across frames, dramatically reducing flickering and ensuring smooth illumination changes over long sequences. The loss function combines a pixel‑wise L2 reconstruction term, a temporal difference L1 term that penalizes abrupt changes between consecutive frames, and a KL‑divergence term that regularizes the diffusion trajectory.

Training data consist of 200,000 high‑quality pairs generated by a physically based path tracer (e.g., OptiX or Cycles). The dataset spans indoor, outdoor, and mixed‑material scenes, with diverse lighting setups such as HDRI environments, point lights, and area lights. Data augmentation includes camera pose perturbations, color temperature shifts, and synthetic noise injection to improve generalization. The model is trained at 256×256 resolution for one million diffusion steps using AdamW and a cosine learning‑rate schedule.

Quantitative results show a clear advantage over prior neural rendering baselines. FrameDiffuser achieves an average PSNR of 31.2 dB, SSIM of 0.94, and LPIPS of 0.07, surpassing NeRF‑based methods by roughly 10 % in PSNR. Qualitatively, the method reproduces high‑frequency global illumination effects such as soft shadows, glossy reflections, and indirect lighting with remarkable fidelity. Temporal ablations reveal that the autoregressive component reduces average frame‑to‑frame color drift from 0.15 ΔE (without it) to below 0.02 ΔE, confirming the effectiveness of the design. In terms of performance, the system runs at 30–60 FPS on an RTX 3080 for 1080p–720p resolutions, making it suitable for interactive applications.

Ablation studies dissect the contribution of each G‑buffer channel. Removing normals or depth leads to a 2 dB drop in PSNR and a noticeable loss of shading detail, indicating that geometric cues are essential for accurate indirect lighting reconstruction. Varying the depth of the autoregressive recurrence (1‑step vs. 3‑step) shows incremental gains in temporal stability, while a cosine noise schedule outperforms a linear schedule for high‑resolution synthesis.

The authors acknowledge limitations: memory consumption grows sharply at 4K resolution (exceeding 24 GB on current GPUs), and the current architecture is optimized for opaque surfaces, struggling with transparent objects or volumetric effects such as smoke and fog. Future work will explore multi‑scale diffusion to reduce memory footprints, integrate explicit volumetric modules, and incorporate post‑processing effects like depth‑of‑field and motion blur directly into the diffusion pipeline.

In summary, FrameDiffuser demonstrates that conditioning diffusion on G‑buffer information can simultaneously deliver photorealistic global illumination and robust temporal coherence in a real‑time setting. This breakthrough opens new possibilities for game engines, virtual reality, and live simulation platforms, where high‑quality neural rendering has previously been constrained by either visual fidelity or frame‑rate requirements.