Exploiting Domain Properties in Language-Driven Domain Generalization for Semantic Segmentation

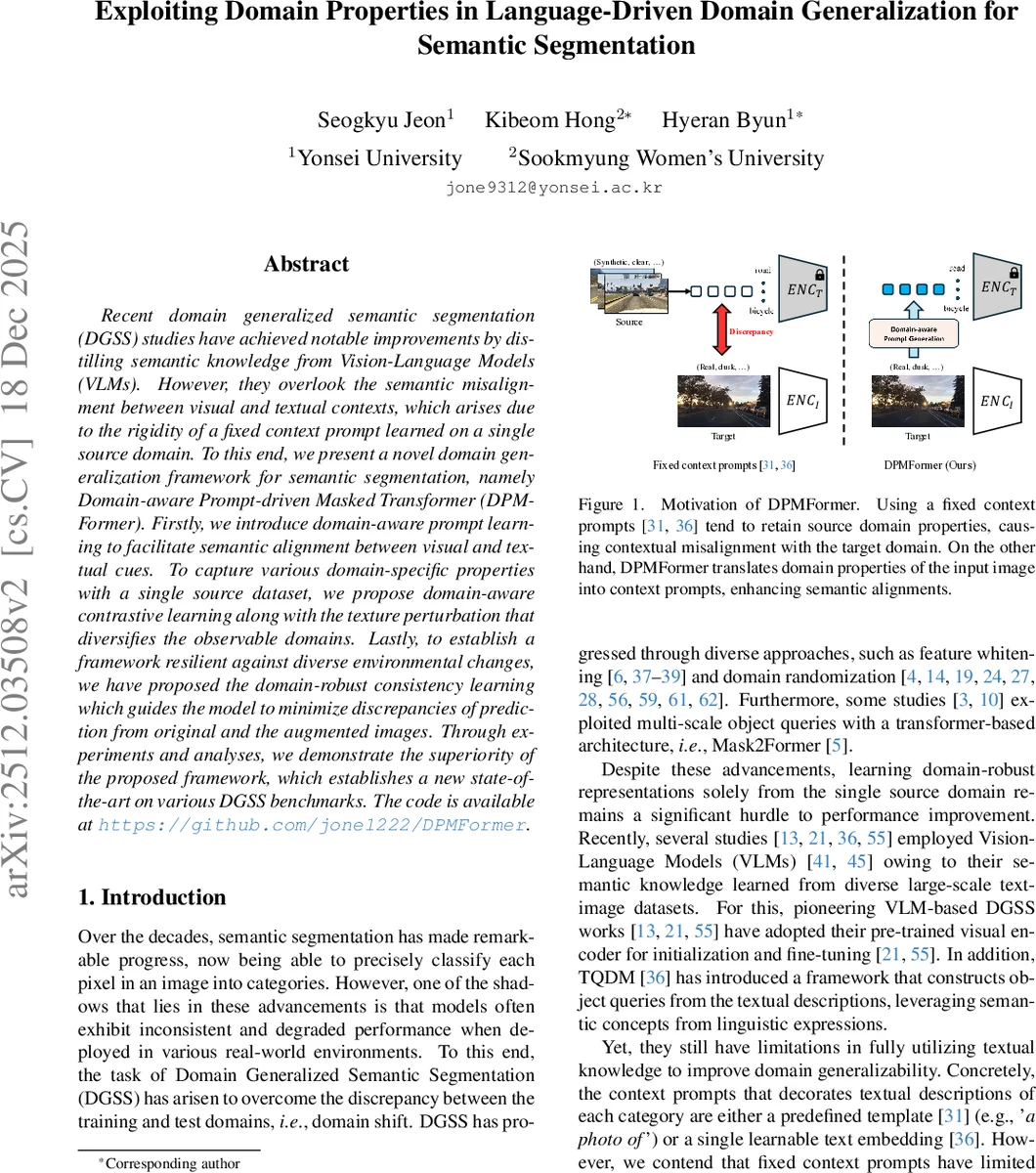

Recent domain generalized semantic segmentation (DGSS) studies have achieved notable improvements by distilling semantic knowledge from Vision-Language Models (VLMs). However, they overlook the semantic misalignment between visual and textual contexts, which arises due to the rigidity of a fixed context prompt learned on a single source domain. To this end, we present a novel domain generalization framework for semantic segmentation, namely Domain-aware Prompt-driven Masked Transformer (DPMFormer). Firstly, we introduce domain-aware prompt learning to facilitate semantic alignment between visual and textual cues. To capture various domain-specific properties with a single source dataset, we propose domain-aware contrastive learning along with the texture perturbation that diversifies the observable domains. Lastly, to establish a framework resilient against diverse environmental changes, we have proposed the domain-robust consistency learning which guides the model to minimize discrepancies of prediction from original and the augmented images. Through experiments and analyses, we demonstrate the superiority of the proposed framework, which establishes a new state-of-the-art on various DGSS benchmarks. The code is available at https://github.com/jone1222/DPMFormer.

💡 Research Summary

The paper tackles the challenging problem of Domain Generalized Semantic Segmentation (DGSS), where a model must be trained on a single source domain yet perform robustly on multiple unseen target domains. Recent DGSS works have leveraged Vision‑Language Models (VLMs) such as CLIP to import rich semantic knowledge, but they typically rely on a fixed textual prompt (e.g., “a photo of …”) that is learned only on the source data. This static prompt becomes misaligned when the visual appearance of target images differs dramatically (e.g., night, rain, fog), limiting the benefit of VLMs.

To overcome this limitation, the authors propose DPMFormer (Domain‑aware Prompt‑driven Masked Transformer), a framework built on the Mask2Former architecture and enriched with three novel components:

-

Domain‑aware Prompt Learning – An auxiliary network hθ extracts a global visual token from a frozen CLIP backbone and converts it into a domain‑specific embedding πx. This embedding is added to the learnable base prompt p, producing a dynamic prompt px = p + πx that is fed to the CLIP text encoder together with each class name. Consequently, the textual representation of a class is conditioned on the visual domain of the current image (e.g., “car at night”), aligning language and vision more tightly.

-

Texture Perturbation & Contrastive Learning – To expose the model to diverse domain styles while preserving semantic content, the authors apply strong photometric transformations (color jitter, Gaussian blur, noise) to generate a perturbed version x′ of each training image x. Both x and x′ are processed in the same batch. A domain‑aware contrastive loss (Eq. 1) encourages prompts derived from images of the same domain (both original or both perturbed) to be similar, while pushing apart prompts from different domains. This forces πx to capture genuine domain characteristics rather than spurious image‑specific details.

-

Domain‑robust Consistency Learning – The model is further regularized to produce consistent predictions across the original‑perturbed pair. Two consistency terms are introduced: (a) class‑level consistency (KL or L2 between class logits) and (b) mask‑level consistency (pixel‑wise cross‑entropy or IoU loss between predicted masks). Importantly, these losses are applied at every layer of the transformer decoder, preventing early‑layer domain bias from propagating downstream.

The overall training objective combines the standard segmentation loss with the contrastive and consistency terms, weighted by hyper‑parameters.

Experimental validation is performed with GTAV as the sole source dataset and several challenging benchmarks as targets: BDD100K (day/night, weather variations), Cityscapes, Mapillary, and others. DPMFormer consistently outperforms prior VLM‑based DGSS methods such as TQDM and DAP, achieving 2–4 percentage‑point gains in mean IoU, with especially large improvements (≈5 pp) on night and adverse weather conditions. Ablation studies confirm that each component—dynamic prompts, texture perturbation with contrastive learning, and multi‑layer consistency—contributes significantly; removing any of them degrades performance noticeably.

Key contributions of the work are:

- Introducing a mechanism that injects image‑derived domain information directly into textual prompts, thereby resolving the semantic misalignment between visual and linguistic modalities in DGSS.

- Leveraging simple yet effective texture perturbations to synthesize virtual domains from a single source, and using a contrastive objective to make the learned prompts truly domain‑aware.

- Designing a comprehensive consistency regularization that operates at both class and mask levels across all decoder layers, ensuring robust predictions under severe domain shifts.

Overall, DPMFormer presents a compelling solution that bridges the gap between language‑driven semantic knowledge and the practical need for domain‑agnostic segmentation, setting a new state‑of‑the‑art for single‑source DGSS.

Comments & Academic Discussion

Loading comments...

Leave a Comment