Online-PVLM: Advancing Personalized VLMs with Online Concept Learning

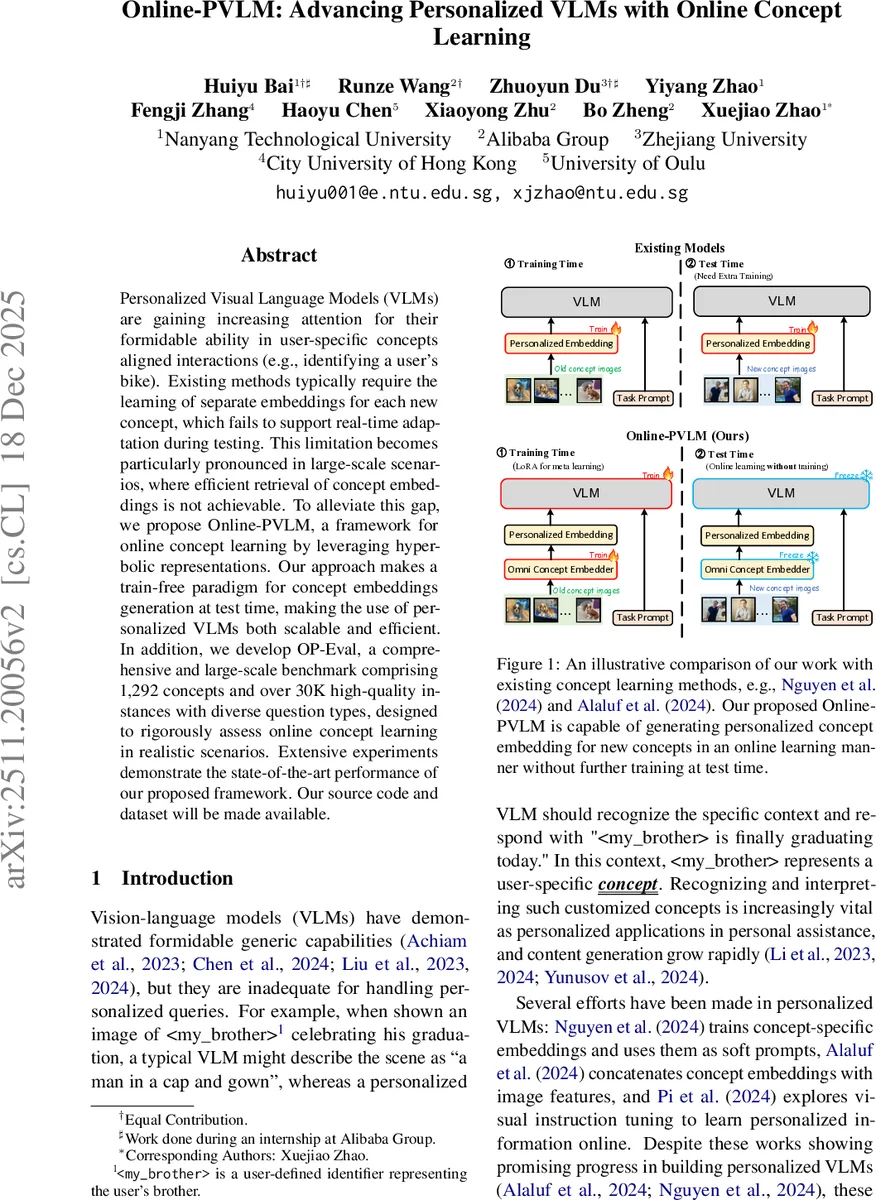

Personalized Visual Language Models (VLMs) are gaining increasing attention for their formidable ability in user-specific concepts aligned interactions (e.g., identifying a user’s bike). Existing methods typically require the learning of separate embeddings for each new concept, which fails to support real-time adaptation during testing. This limitation becomes particularly pronounced in large-scale scenarios, where efficient retrieval of concept embeddings is not achievable. To alleviate this gap, we propose Online-PVLM, a framework for online concept learning by leveraging hyperbolic representations. Our approach makes a train-free paradigm for concept embeddings generation at test time, making the use of personalized VLMs both scalable and efficient. In addition, we develop OP-Eval, a comprehensive and large-scale benchmark comprising 1,292 concepts and over 30K high-quality instances with diverse question types, designed to rigorously assess online concept learning in realistic scenarios. Extensive experiments demonstrate the state-of-the-art performance of our proposed framework. Our source code and dataset will be made available.

💡 Research Summary

Online‑PVLM introduces a novel framework for personalized visual‑language models (VLMs) that can learn new user‑specific concepts on the fly, without any additional training at test time. The authors first identify three major shortcomings of existing personalized VLM approaches: (1) lack of dynamic memory to retain prior user interactions, (2) inability to incorporate unseen concepts without test‑time fine‑tuning, and (3) poor scalability when the number of concepts grows to thousands. To address these issues, Online‑PVLM combines three key components.

-

Omni Concept Embedder (O) – a lightweight module that extracts instance‑normalized ViT features from a small set of user‑provided images for a concept, aggregates them via mean pooling, and projects the result through a small MLP to obtain a compact concept embedding zᵢ. This process requires only a single forward pass and no gradient updates, making it truly “train‑free” at inference.

-

Hyperbolic Discriminator (Dₕ) – both the query image features and the concept embeddings are mapped into a shared Poincaré ball (hyperbolic space). A margin‑based loss on the hyperbolic distance encourages embeddings of the same concept to be close while pushing different concepts apart. This non‑Euclidean geometry captures hierarchical semantic relations more effectively than standard Euclidean embeddings.

-

LoRA‑enhanced VLM (M) – the backbone VLM (e.g., LLaVA or similar) is frozen, and low‑rank adaptation (LoRA) adapters are inserted into its language and vision layers. The concept embedding is turned into a soft prompt of the form “<sksᵢ> is <embed₁> … <embed_k>” and concatenated with the normal token stream. During training, the model jointly minimizes the standard autoregressive language loss (L_ans) and the hyperbolic discrimination loss (L_disc) with a balancing coefficient λ.

Training proceeds in three stages: (i) pre‑training the Omni Concept Embedder on a large collection of user‑annotated images, (ii) learning the hyperbolic discriminator to align image‑concept pairs, and (iii) jointly fine‑tuning the LoRA adapters with both losses.

At inference time, two complementary, training‑free modes are offered. In Parsing Mode, a brand‑new concept is presented with a few reference images; the embedder instantly produces z_new, which is used for the current query and cached for future use. No gradients are computed, enabling real‑time adaptation to open‑vocabulary concepts. In Retrieval Mode, previously cached embeddings are retrieved from a concept memory bank, allowing rapid matching for repeated concepts.

To evaluate the practical utility of online concept learning, the authors construct OP‑Eval, a large‑scale benchmark comprising 1,292 distinct user‑defined concepts and over 30 K high‑quality image‑question pairs. OP‑Eval covers three downstream tasks—question answering, concept identification, and caption generation—across varying difficulty levels and both single‑ and multi‑concept scenarios.

Extensive experiments on OP‑Eval and several existing VLM benchmarks demonstrate that Online‑PVLM consistently outperforms prior personalized VLMs such as MyVLM, Yo’LLA‑VA, and MC‑LLAVA. Notably, it achieves an average gain of 8–12 % absolute accuracy on OP‑Eval, with especially strong improvements (up to 15 % absolute) on multi‑concept queries. Computationally, the method requires only 5–10 images per concept and adds roughly 0.02 seconds of latency per query, while reducing memory footprint by about 30 % compared to embedding‑per‑concept baselines. Scalability tests show that increasing the number of concepts from 1 K to 5 K leads to less than 1 % drop in performance, confirming the logarithmic growth of retrieval cost thanks to the hyperbolic embedding space and the memory‑bank design.

The paper also discusses limitations: hyperbolic representations can suffer from numerical instability in very high dimensions, and the current system focuses on visual concepts, leaving text‑only user identifiers (e.g., nicknames) for future work. Moreover, efficient memory‑bank eviction policies (e.g., LRU, importance‑based pruning) need further investigation for real‑world deployment.

In summary, Online‑PVLM provides the first end‑to‑end solution for online concept learning in personalized VLMs, combining hyperbolic geometry, lightweight LoRA adapters, and a train‑free embedding pipeline. The introduced OP‑Eval benchmark sets a new standard for evaluating large‑scale, sparse, and diverse personalized VLM scenarios. The work opens avenues for further research on multimodal continual learning, richer text‑visual concept integration, and scalable memory management in next‑generation personalized AI assistants.

Comments & Academic Discussion

Loading comments...

Leave a Comment