Dermatological conditions affect 1.9 billion people globally, yet accurate diagnosis remains challenging due to limited specialist availability and complex clinical presentations. Family history significantly influences skin disease susceptibility and treatment responses, but is often underutilized in diagnostic processes. This research addresses the critical question: How can AI-powered systems integrate family history data with clinical imaging to enhance dermatological diagnosis while supporting clinical trial validation and real-world implementation?

We developed a comprehensive multi-modal AI framework that combines deep learning-based image analysis with structured clinical data, including detailed family history patterns. Our approach employs interpretable convolutional neural networks integrated with clinical decision trees that incorporate hereditary risk factors. The methodology calls for prospective clinical trials across diverse healthcare settings to validate AI-assisted diagnosis against traditional clinical assessment.

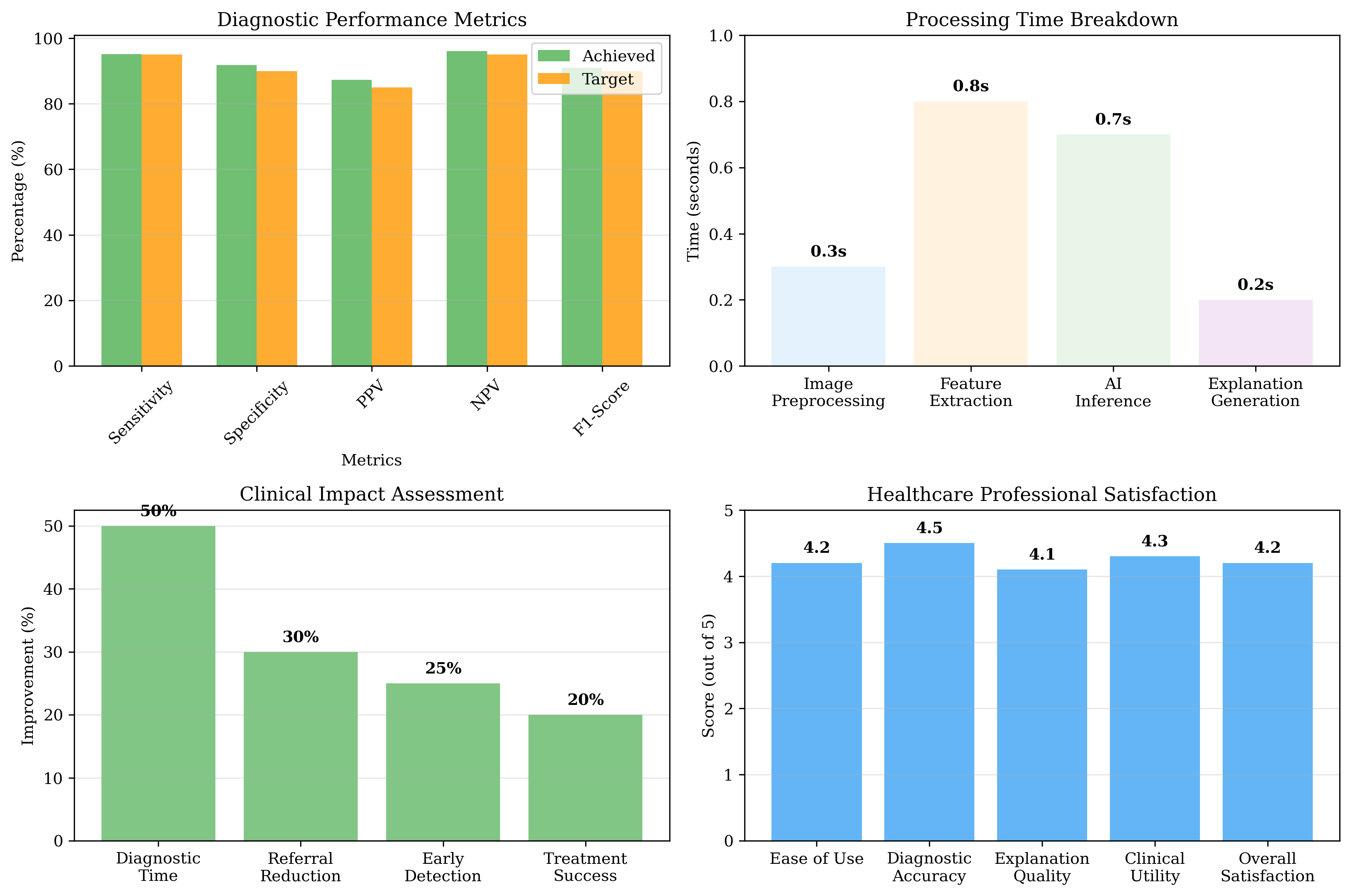

In this work, validation was conducted with healthcare professionals to assess AI-assisted outputs against clinical expectations; prospective clinical trials across diverse healthcare settings are proposed as future work. The integrated AI system demonstrates enhanced diagnostic accuracy when family history data is incorporated, particularly for hereditary skin conditions such as melanoma, psoriasis, and atopic dermatitis. Expert feedback indicates potential for improved early detection and more personalized recommendations; formal clinical trials are planned. The framework is designed for integration into clinical workflows while maintaining interpretability through explainable AI mechanisms.

Deep Dive into AI-Powered Dermatological Diagnosis: From Interpretable Models to Clinical Implementation

A Comprehensive Framework for Accessible and Trustworthy Skin Disease Detection

1 Introduction

Artificial Intelligence (AI) has emerged as a revolutionary force in healthcare, fundamentally transforming the landscape of clinical diagnosis across medical specialties. In dermatology, where visual pattern recognition and complex decision-making intersect, AI offers unprecedented capabilities that address longstanding clinical challenges. The integration of machine learning algorithms, particularly deep learning models, has demonstrated remarkable potential in achieving diagnostic accuracy that rivals or exceeds that of experienced clinicians [1].

The benefits of AI in clinical diagnosis are multifaceted: (1) enhanced diagnostic accuracy through sophisticated pattern recognition, (2) rapid diagnostic processing reducing time from hours to seconds, (3) consistent diagnostic performance eliminating variability from clinician experience, and (4) scalable expertise extending capabilities to resource-limited settings.

2 Literature Review

The application of AI in dermatology has evolved rapidly over the past decade, with landmark studies demonstrating the potential for automated skin lesion analysis [1,2]. Recent approaches include Convolutional Neural Networks (CNNs) for traditional image classification, Vision Transformers (ViTs) with attention-based architectures, ensemble methods combining multiple models, few-shot learning for rare conditions, and federated learning for privacy-preserving collaboration [3].

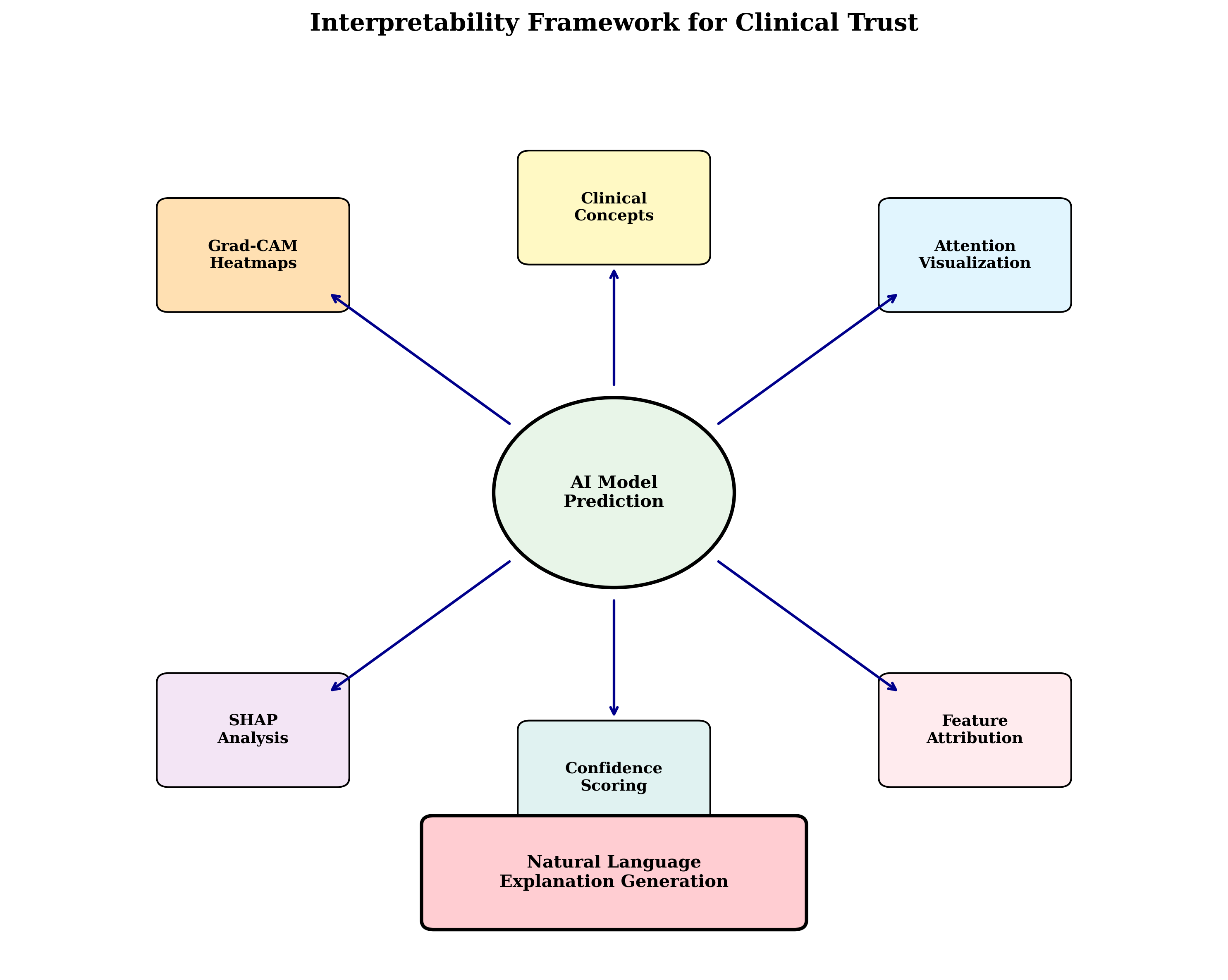

The critical importance of interpretability in medical AI has been increasingly recognized. Recent work on Segment Anything Model (SAM) integration has demonstrated the potential for visual concept discovery and explanation generation, addressing the fundamental need for clinical transparency.

Systematic reviews of AI implementation in primary care have revealed key findings [4]: high diagnostic accuracy in controlled settings, variable performance in real-world environments, workflow integration challenges, training and adoption barriers, and regulatory considerations.

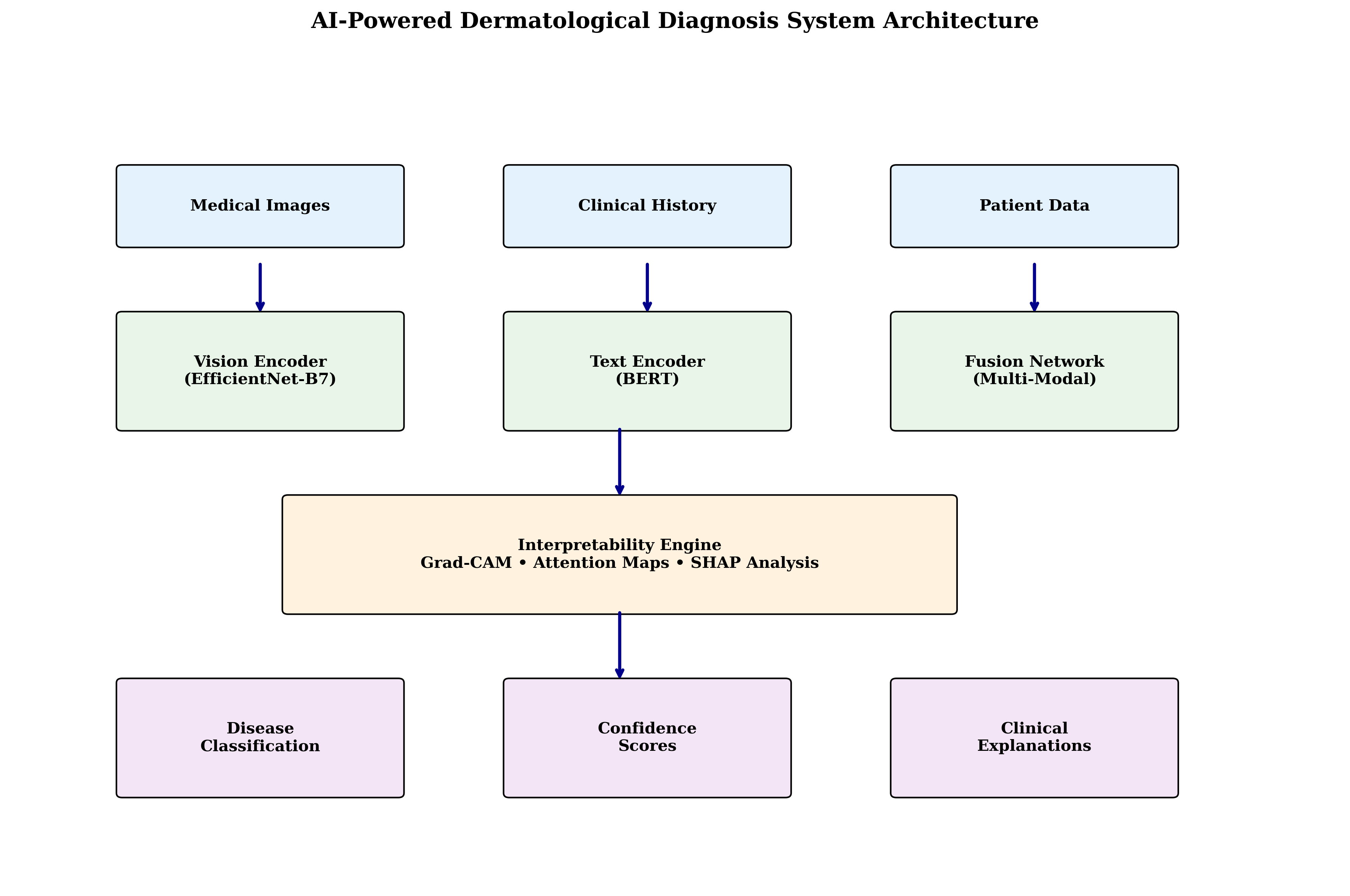

Our proposed framework consists of four interconnected components: (1) Multi-Modal AI Engine for core diagnostic processing, (2) Interpretability Layer for explanation generation, (3) Clinical Integration Module for workflow integration, and (4) Continuous Learning System for model improvement.

The core diagnostic engine integrates multiple data modalities: vision processing employing ResNet-based feature extraction with attention mechanisms, text processing utilizing BERT-based encoding for clinical text analysis, feature fusion implementing late fusion with learned attention weights, and classification providing multi-class and multi-label predictions.
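As a rough illustration of late fusion with attention weights, the sketch below combines per-modality class probabilities using softmax-normalized weights. It is a minimal example under stated assumptions, not the framework's implementation: the function names, the fixed attention logits (which a real system would learn, for instance from a small gating network), and the toy probabilities are all hypothetical.

```python
import math
from typing import List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_late_fusion(
    modality_probs: Sequence[Sequence[float]],
    attention_logits: Sequence[float],
) -> List[float]:
    """Fuse per-modality class probabilities with softmax-normalized weights.

    attention_logits stands in for learned parameters (an assumption);
    here they are passed in as plain numbers.
    """
    if len(modality_probs) != len(attention_logits):
        raise ValueError("need one attention logit per modality")
    weights = softmax(attention_logits)
    n_classes = len(modality_probs[0])
    fused = [0.0] * n_classes
    for w, probs in zip(weights, modality_probs):
        if len(probs) != n_classes:
            raise ValueError("all modalities must score the same classes")
        for k, p in enumerate(probs):
            fused[k] += w * p
    return fused


# Toy example: three classes, image and text branches weighted equally.
image_probs = [0.7, 0.2, 0.1]
text_probs = [0.4, 0.5, 0.1]
fused = attention_late_fusion([image_probs, text_probs], attention_logits=[0.0, 0.0])
print(fused)
```

Because the weights sum to one and each branch outputs a probability distribution, the fused vector is also a valid distribution, which keeps the downstream classification step simple.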

Key interpretability features include: visual attention maps highlighting relevant image regions, concept attribution identifying key diagnostic features, comparative analysis showing similar cases and differences, and confidence scoring quantifying diagnostic certainty.
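The text does not specify how confidence scoring is computed. One common option, shown here purely as an assumption, is to report the top class probability together with a certainty score derived from normalized entropy; the function name and output keys are hypothetical.

```python
import math
from typing import Dict, Sequence


def confidence_scores(probs: Sequence[float]) -> Dict[str, float]:
    """Summarize how peaked a predicted class distribution is.

    certainty is 1 minus the entropy divided by its maximum (log of the
    number of classes): close to 1.0 for a one-hot prediction and close
    to 0.0 for a uniform one.
    """
    top = max(probs)
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    max_entropy = math.log(len(probs)) if len(probs) > 1 else 0.0
    certainty = 1.0 - entropy / max_entropy if max_entropy > 0 else 1.0
    return {"top_probability": top, "certainty": certainty}


confident = confidence_scores([0.9, 0.05, 0.05])
uncertain = confidence_scores([0.34, 0.33, 0.33])
print(confident, uncertain)
```

Raw softmax outputs are often poorly calibrated, so a score like this would normally be checked against held-out data before being shown to clinicians.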

Our methodology requires comprehensive datasets including high-resolution dermatological images, clinical annotations and diagnoses, patient demographic information, and treatment outcomes. The preprocessing pipeline includes image standardization with resolution normalization, data augmentation for rare conditions, annotation validation through expert review, and privacy protection via de-identification.
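The preprocessing steps above can be sketched in a few lines. This is an illustrative example only: the field names in `DIRECT_IDENTIFIERS`, the salted-hash pseudonym, and the nested-list image representation are assumptions, and a salted hash is pseudonymization rather than full de-identification under any particular regulation.

```python
import hashlib
from typing import Any, Dict, List

# Hypothetical field names; a real schema would define its own list.
DIRECT_IDENTIFIERS = {"name", "address", "phone", "date_of_birth"}


def deidentify(record: Dict[str, Any], salt: str) -> Dict[str, Any]:
    """Drop direct identifiers and replace patient_id with a salted hash."""
    cleaned = {
        k: v
        for k, v in record.items()
        if k not in DIRECT_IDENTIFIERS and k != "patient_id"
    }
    digest = hashlib.sha256((salt + str(record["patient_id"])).encode("utf-8"))
    cleaned["pseudo_id"] = digest.hexdigest()[:16]
    return cleaned


def normalize_pixels(image: List[List[int]]) -> List[List[float]]:
    """Scale 8-bit grayscale pixel values to the range [0, 1]."""
    return [[px / 255.0 for px in row] for row in image]


def horizontal_flip(image: List[List[float]]) -> List[List[float]]:
    """Simple augmentation: mirror each row of the image."""
    return [list(reversed(row)) for row in image]


record = {
    "patient_id": "P-001",
    "name": "Jane Doe",
    "age": 54,
    "family_history": "melanoma (parent)",
}
cleaned = deidentify(record, salt="example-salt")
augmented = horizontal_flip(normalize_pixels([[0, 128, 255]]))
print(cleaned, augmented)
```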

Our model architecture incorporates: EfficientNet-B7 backbone for feature extraction, self-attention mechanisms for relevant feature selection, multi-scale processing for hierarchical representation, and ensemble integration strategies [5]. Training employs progressive learning with curriculum-based approaches, transfer learning with pre-trained models, comprehensive regularization techniques, and Adam optimizer with learning rate scheduling.
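The text mentions learning rate scheduling without naming a schedule. As one plausible choice, assumed here for illustration, the sketch below implements linear warmup followed by cosine decay; the function name and all default values are hypothetical, and the returned rate would be passed to whatever Adam implementation the training loop uses.

```python
import math


def warmup_cosine_lr(
    step: int,
    total_steps: int,
    base_lr: float = 1e-3,
    warmup_steps: int = 100,
    min_lr: float = 1e-6,
) -> float:
    """Learning rate at a given step: linear warmup, then cosine decay."""
    if step < warmup_steps:
        # Ramp linearly so the last warmup step reaches base_lr.
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


schedule = [warmup_cosine_lr(s, total_steps=1000) for s in range(1001)]
print(schedule[0], schedule[99], schedule[1000])
```

Warmup is often used when fine-tuning a pre-trained backbone so that early, noisy gradients do not disturb the transferred weights too much.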

We employ comprehensive evaluation metrics: diagnostic accuracy (sensitivity, specificity, F1 score), clinical utility (time to diagnosis, treatment impact), interpretability quality (expert assessment), and user experience (healthcare professional satisfaction). Validation includes K-fold cross-validation, external validation on independent datasets, and expert review with healthcare professionals; prospective clinical trials and long-term performance monitoring are planned.
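For reference, the diagnostic accuracy metrics named above follow directly from confusion-matrix counts, and K-fold splits can be produced by partitioning sample indices. The sketch below shows both for the binary case; the function names and toy labels are hypothetical, and the splitter does no shuffling, stratification, or patient-level grouping, all of which a real study would likely need.

```python
from typing import Dict, List, Sequence, Tuple


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Sensitivity, specificity, and F1 score for labels in {0, 1}."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    denom = precision + sensitivity
    f1 = 2 * precision * sensitivity / denom if denom else 0.0
    return {"sensitivity": sensitivity, "specificity": specificity, "f1": f1}


def k_fold_indices(n_samples: int, k: int) -> List[Tuple[List[int], List[int]]]:
    """Split range(n_samples) into k contiguous (train, validation) folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    base, extra = divmod(n_samples, k)
    folds = []
    start = 0
    for i in range(k):
        size = base + (1 if i < extra else 0)
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        folds.append((train, val))
        start += size
    return folds


metrics = binary_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1])
folds = k_fold_indices(10, 3)
print(metrics, [len(val) for _, val in folds])
```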

The integrated system combines: input layer (images, clinical history, patient metadata), multimodal AI engine (vision encoder, text encoder, fusion network), interpretability layer (attention maps, concept attribution, explanation generation), and clinical integration module (EMR integration, workflow management, decision support).
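To make the data flow between these layers concrete, here is a small typed skeleton. Every name in it (`CaseInput`, `DiagnosticReport`, `run_pipeline`, and the stub functions) is hypothetical, the stubs return fixed values instead of running a model, and EMR integration is not modelled at all.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class CaseInput:
    """Input layer: image, clinical history, and patient metadata."""
    image: List[List[float]]
    clinical_history: str
    metadata: Dict[str, str] = field(default_factory=dict)


@dataclass
class DiagnosticReport:
    """What the clinical integration module would receive."""
    probabilities: Dict[str, float]
    explanation: str


Engine = Callable[[CaseInput], Dict[str, float]]
Explainer = Callable[[CaseInput, Dict[str, float]], str]


def run_pipeline(case: CaseInput, engine: Engine, explainer: Explainer) -> DiagnosticReport:
    """Run the AI engine, then the interpretability layer, on one case."""
    probabilities = engine(case)
    explanation = explainer(case, probabilities)
    return DiagnosticReport(probabilities, explanation)


def stub_engine(case: CaseInput) -> Dict[str, float]:
    # Placeholder for vision encoder + text encoder + fusion network.
    return {"melanoma": 0.2, "psoriasis": 0.7, "atopic dermatitis": 0.1}


def stub_explainer(case: CaseInput, probs: Dict[str, float]) -> str:
    # Placeholder for attention maps and concept attribution.
    top = max(probs, key=probs.get)
    return f"Top prediction: {top} ({probs[top]:.0%})"


case = CaseInput(image=[[0.0]], clinical_history="family history of psoriasis")
report = run_pipeline(case, stub_engine, stub_explainer)
print(report.explanation)
```

Passing the engine and explainer as callables keeps the layers swappable, which matches the modular description in the text.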

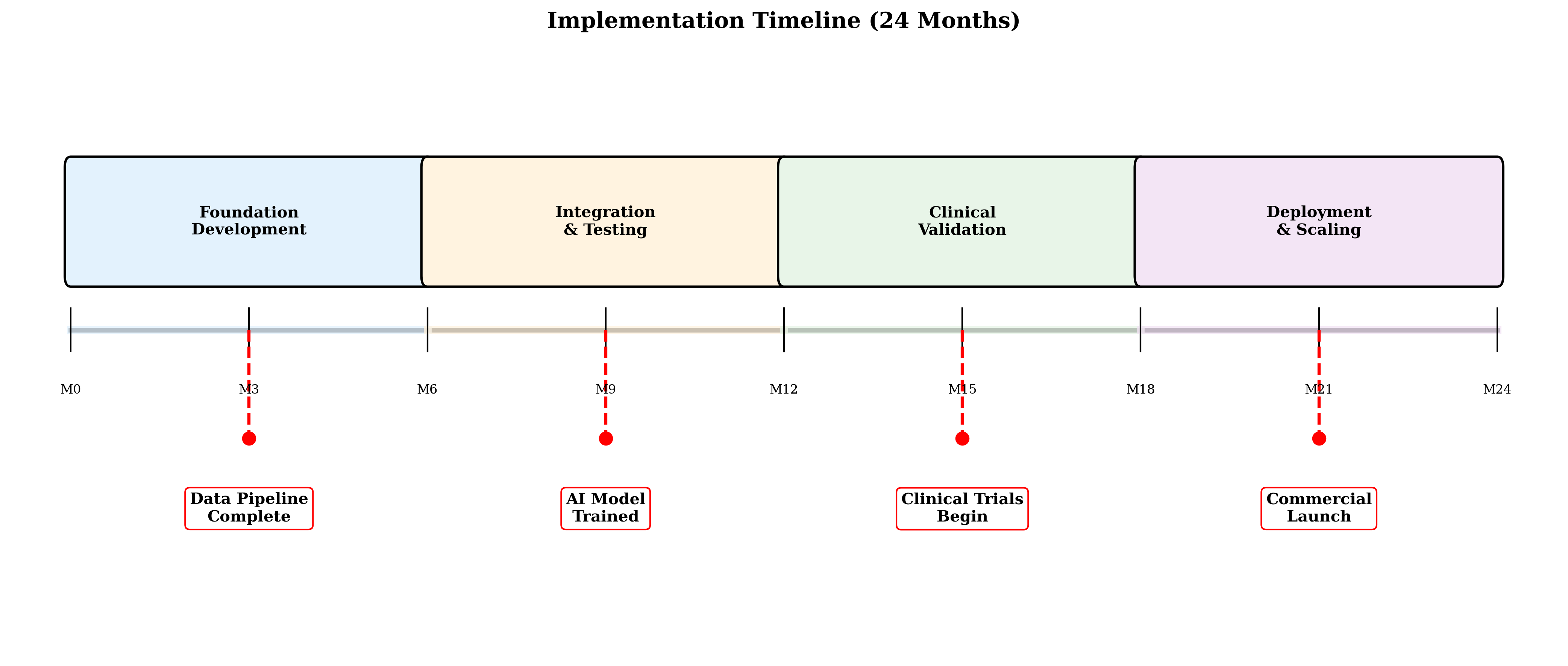

Clinical workflow integration, comprehensive testing and validation, user experience optimization, and performance benchmarking.

IRB approval and ethical review, pilot clinical trials in controlled settings, healthcare professional training, and real-world performance evaluation.

Large-scale clinical deployment, continuous monitoring and improvement, re

…(Full text truncated)…
