When & How to Write for Personalized Demand-aware Query Rewriting in Video Search

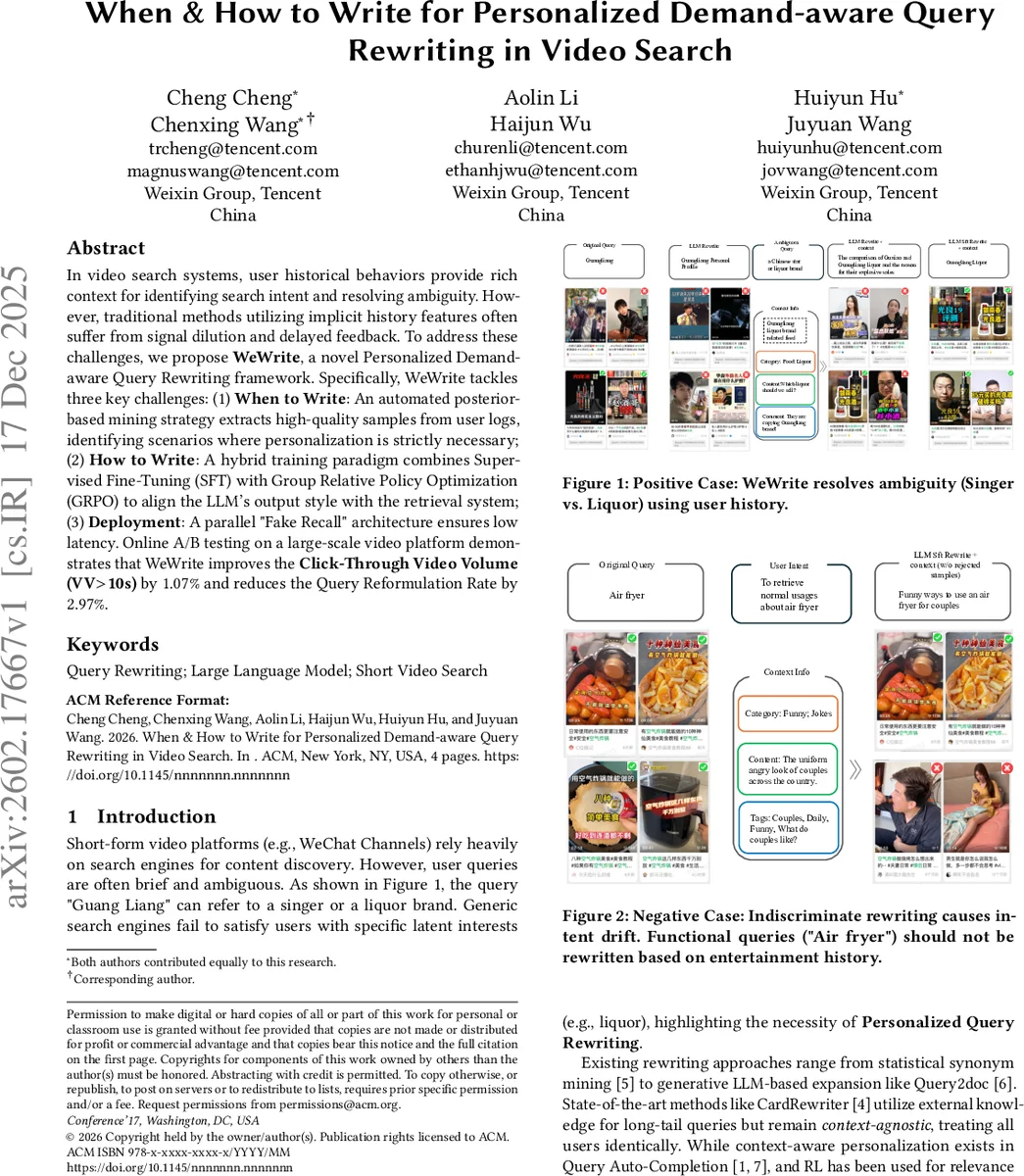

In video search systems, user historical behaviors provide rich context for identifying search intent and resolving ambiguity. However, traditional methods utilizing implicit history features often suffer from signal dilution and delayed feedback. To address these challenges, we propose WeWrite, a novel Personalized Demand-aware Query Rewriting framework. Specifically, WeWrite tackles three key challenges: (1) When to Write: An automated posterior-based mining strategy extracts high-quality samples from user logs, identifying scenarios where personalization is strictly necessary; (2) How to Write: A hybrid training paradigm combines Supervised Fine-Tuning (SFT) with Group Relative Policy Optimization (GRPO) to align the LLM’s output style with the retrieval system; (3) Deployment: A parallel “Fake Recall” architecture ensures low latency. Online A/B testing on a large-scale video platform demonstrates that WeWrite improves the Click-Through Video Volume (VV$>$10s) by 1.07% and reduces the Query Reformulation Rate by 2.97%.

💡 Research Summary

WeWrite addresses two fundamental challenges in short‑form video search: (1) deciding when a query should be personalized and (2) determining how to generate a rewrite that is both semantically appropriate and retrieval‑friendly. Traditional implicit‑history methods suffer from signal dilution and delayed feedback, leading to either over‑personalization (intent drift) or missed personalization opportunities.

When to Write – Posterior‑based Sample Mining

The authors exploit posterior user signals—immediate query reformulations, dwell time, and click behavior—to automatically construct a high‑quality training set. Positive samples are extracted when an original query (Qₒᵣᵢg) yields little interaction (dwell < τₛₕₒᵣₜ = 2.4 s) and the subsequent reformulated query (Qₙₑₓₜ) leads to a long dwell (> τᵥₐₗᵢd = 10 s). A two‑stage filter ensures demand‑awareness: (i) a coarse Context Overlap filter retains only pairs where at least one newly introduced term appears in the user’s recent video titles/tags; (ii) a fine‑grained LLM‑based intent verifier (teacher model Qwen3‑32B) performs binary classification to confirm that the reformulation is truly grounded in the user’s context. Negative samples are collected from sessions where the original query already satisfies the user (long dwell, no reformulation) and are labeled with a special

How to Write – Style‑aligned LLM Fine‑tuning

WeWrite adopts a two‑stage training pipeline. First, Supervised Fine‑Tuning (SFT) uses the mined positive and negative pairs to teach a base LLM (e.g., Qwen) to output either a rewritten query or

Deployment – Fake Recall & Fusion Architecture

Real‑time video search imposes strict latency budgets, making direct LLM inference infeasible in the critical path. WeWrite introduces a parallel “Fake Recall” architecture. A pre‑built key‑value store I_fake maps popular queries to their top‑K (K = 50) documents, constructed from interaction‑rich head queries and retrieval‑based mining of long‑tail logs. When a request arrives, the traditional text/vector recall pipeline runs concurrently with the LLM rewrite path. If the LLM‑generated query hits I_fake, the cached document list is retrieved instantly; a lightweight relevance filter then removes any mismatched items before merging the fake candidates with the main recall results. Because LLM inference proceeds asynchronously, the overall end‑to‑end latency remains unchanged, achieving zero‑perceived‑latency personalization.

Experimental Validation

An online A/B test on a large‑scale video platform deployed the optimal model (Qwen3‑4B + SFT + GRPO). Results showed a 1.07 % lift in Click‑Through Video Volume for sessions with stay time > 10 s (VV > 10 s) and a 2.97 % reduction in Query Reformulation Rate. These gains demonstrate that precise “when” detection prevents unnecessary rewrites, while GRPO‑aligned “how” generation produces queries that are both user‑specific and index‑compatible.

Contributions and Impact

- Posterior‑based automatic mining provides a data‑driven criterion for triggering personalization, mitigating intent drift.

- The hybrid SFT + GRPO training aligns LLM output style with retrieval system metrics, addressing the zero‑recall problem common in generative rewriting.

- The Fake Recall parallel architecture enables deployment of large LLMs in latency‑critical production environments without sacrificing response time.

Overall, WeWrite offers a practical blueprint for integrating large language models into personalized search pipelines, balancing personalization benefits with system efficiency. Its methodology can be extended to other multimodal retrieval scenarios where user context is rich but real‑time constraints are strict.

Comments & Academic Discussion

Loading comments...

Leave a Comment