Characterizing Mamba's Selective Memory using Auto-Encoders

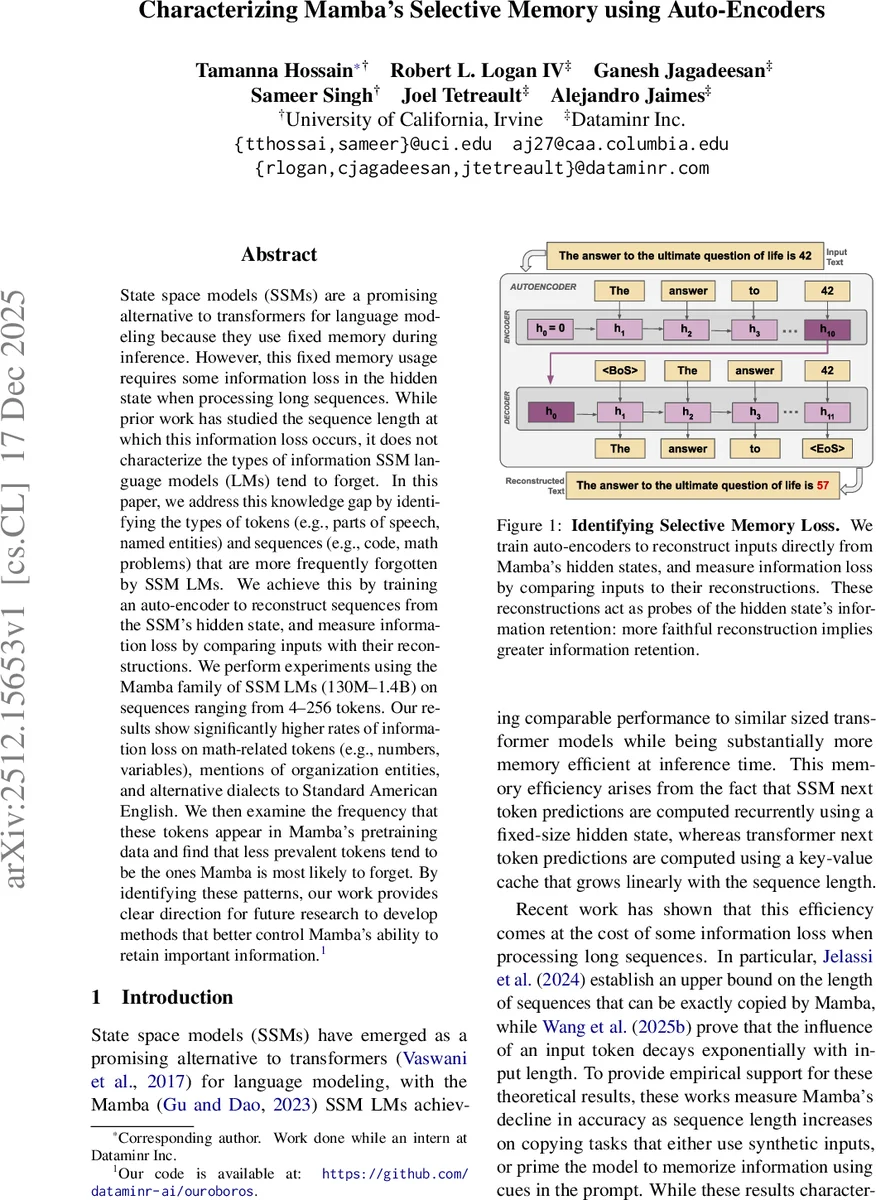

State space models (SSMs) are a promising alternative to transformers for language modeling because they use fixed memory during inference. However, this fixed memory usage requires some information loss in the hidden state when processing long sequences. While prior work has studied the sequence length at which this information loss occurs, it does not characterize the types of information SSM language models (LMs) tend to forget. In this paper, we address this knowledge gap by identifying the types of tokens (e.g., parts of speech, named entities) and sequences (e.g., code, math problems) that are more frequently forgotten by SSM LMs. We achieve this by training an auto-encoder to reconstruct sequences from the SSM’s hidden state, and measure information loss by comparing inputs with their reconstructions. We perform experiments using the Mamba family of SSM LMs (130M–1.4B) on sequences ranging from 4–256 tokens. Our results show significantly higher rates of information loss on math-related tokens (e.g., numbers, variables), mentions of organization entities, and alternative dialects to Standard American English. We then examine the frequency that these tokens appear in Mamba’s pretraining data and find that less prevalent tokens tend to be the ones Mamba is most likely to forget. By identifying these patterns, our work provides clear direction for future research to develop methods that better control Mamba’s ability to retain important information.

💡 Research Summary

This paper investigates the selective forgetting behavior of Mamba, a state‑space model (SSM)‑based language model that uses a fixed‑size hidden state during inference. While prior work has quantified the sequence length at which Mamba’s performance degrades, it has not examined what kinds of information are most likely to be lost when processing natural language. To fill this gap, the authors propose a probing methodology that reconstructs input texts directly from Mamba’s hidden states using an auto‑encoder, and then measures reconstruction quality with two complementary metrics: (i) Omission Rate, which captures token‑level dropout, and (ii) ROUGE‑F1, which captures sequence‑level fidelity.

Methodology

The encoder is a frozen, pretrained Mamba model that maps an input token sequence into two latent components: a convolutional state (β_C) and an SSM recurrent state (β_S). The decoder re‑uses the same Mamba architecture, initializing its own convolutional and recurrent states with β_C and β_S, respectively, and then autoregressively generates a reconstruction of the original text. Separate auto‑encoders are trained for each fixed sequence length (4, 8, 16, 32, 64, 128, 256 tokens) to control for length‑related confounds. Training uses 200 K instances (≈282 M tokens) from the “Pile Uncopyrighted” dataset, optimizing a cross‑entropy loss between the original and reconstructed tokens while keeping the encoder frozen.

Key Findings

-

Length and Model‑Size Effects – Reconstruction quality declines sharply as sequence length grows. For the smallest 130 M‑parameter model, ROUGE‑F1 drops from 98.6 % at length 4 to 85.0 % at length 128 and 66.6 % at length 256. Larger models (370 M, 790 M, 1.4 B) mitigate this decay, especially for longer sequences, confirming earlier theoretical results that longer contexts demand higher capacity. Interestingly, the 790 M model sometimes outperforms the 1.4 B model, indicating non‑monotonic scaling due to factors such as initialization and time‑dependent SSM parameters.

-

Token‑Level Forgetting – Tokens belonging to mathematical expressions (numbers, variables), organization named entities, and non‑standard dialects (e.g., African American Vernacular English) exhibit significantly higher omission rates. A concrete example is the number “42” being reconstructed as “57”. These errors appear even for relatively short contexts (≥ 16 tokens).

-

Sequence‑Level Forgetting – Entire sequences that are mathematically dense, contain code snippets, or otherwise have complex structural patterns suffer larger reconstruction errors, suggesting that the SSM’s fixed hidden state struggles to compress highly structured information.

-

Correlation with Pre‑training Frequency – By sampling a 178 M‑token subset of the Pile, the authors show that the categories most prone to forgetting are also the rarest in the pre‑training corpus. Organization mentions and mathematical tokens constitute less than 0.3 % of the sampled tokens, yet they have the highest omission rates. This points to a data‑distribution bias: SSMs inherit the rarity‑driven forgetting behavior of their training data.

Implications

- Data‑Centric Remedies: To improve recall of rare tokens and dialects, future pre‑training pipelines could oversample under‑represented categories or employ token‑weighted loss functions that amplify gradients for scarce tokens.

- Architectural Enhancements: Augmenting the fixed‑size SSM hidden state with external memory (e.g., a key‑value cache) or hybridizing with transformer layers may alleviate the exponential decay of token influence observed in prior work.

- Evaluation Framework: The auto‑encoder reconstruction probe provides a general, prompt‑free way to assess memory fidelity across arbitrary natural inputs, complementing earlier “copy‑task” or “prompt‑cue” benchmarks.

Conclusion

The study delivers a nuanced picture of Mamba’s selective memory: while the model can retain most information for short contexts, it systematically forgets rare, mathematically oriented, or dialect‑specific tokens, especially as context length grows. These findings highlight the intertwined roles of model capacity, training data distribution, and SSM architecture in shaping long‑range memory. The proposed probing methodology and the identified failure modes offer concrete directions for future research aimed at balancing the computational efficiency of SSMs with robust information retention.

Comments & Academic Discussion

Loading comments...

Leave a Comment