Optimizing Bloom Filters for Modern GPU Architectures

Bloom filters are a fundamental data structure for approximate membership queries, with applications ranging from data analytics to databases and genomics. Several variants have been proposed to accommodate parallel architectures. GPUs, with massive thread-level parallelism and high-bandwidth memory, are a natural fit for accelerating these Bloom filter variants potentially to billions of operations per second. Although CPU-optimized implementations have been well studied, GPU designs remain underexplored. We close this gap by exploring the design space on GPUs along three dimensions: vectorization, thread cooperation, and compute latency. Our evaluation shows that the combination of these optimization points strongly affects throughput, with the largest gains achieved when the filter fits within the GPU’s cache domain. We examine how the hardware responds to different parameter configurations and relate these observations to measured performance trends. Crucially, our optimized design overcomes the conventional trade-off between speed and precision, delivering the throughput typically restricted to high-error variants while maintaining the superior accuracy of high-precision configurations. At iso error rate, the proposed method outperforms the state-of-the-art by $11.35\times$ ($15.4\times$) for bulk filter lookup (construction), respectively, achieving above $92%$ of the practical speed-of-light across a wide range of configurations on a B200 GPU. We propose a modular CUDA/C++ implementation, which will be openly available soon.

💡 Research Summary

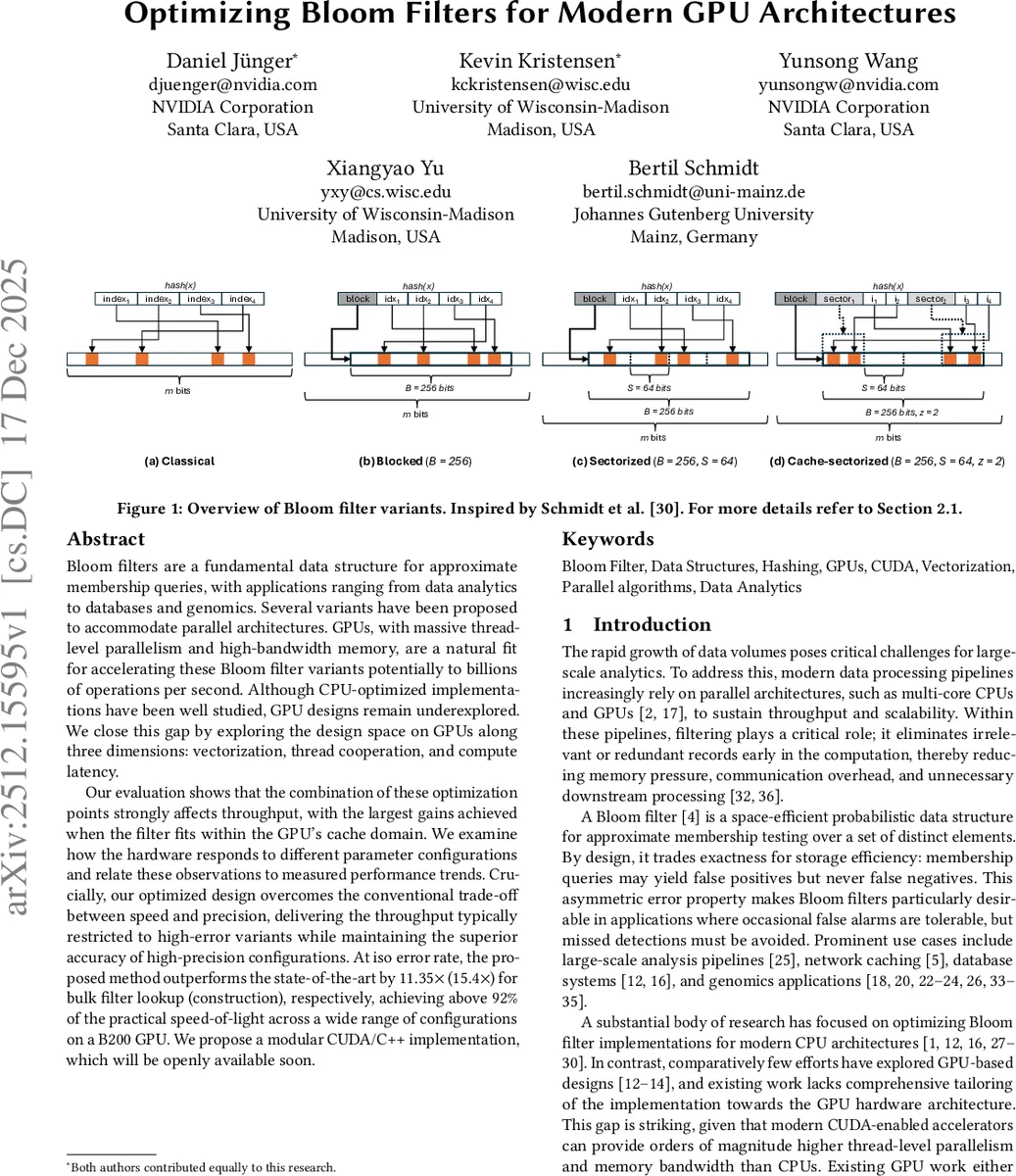

The paper addresses the under‑explored problem of implementing Bloom filters efficiently on modern GPUs. Bloom filters are widely used for approximate membership queries in analytics, databases, and genomics, but most prior work focuses on CPU optimizations. The authors examine several Bloom filter variants—Classical Bloom Filter (CBF), Blocked Bloom Filter (BBF), Register‑Blocked Bloom Filter (RBBF), Sectorized Bloom Filter (SBF), and Cache‑Sectorized Bloom Filter (CSBF)—and analyze how their memory access patterns interact with GPU architecture. They identify three key dimensions for optimization: vectorization, warp‑level cooperation, and compute latency hiding.

Vectorization exploits the fact that words within a block are stored contiguously (vertical) and can be processed independently (horizontal). By using wide load instructions (16 B on older CUDA GPUs, 32 B on Blackwell), the implementation coalesces memory accesses, reducing the number of high‑latency DRAM transactions. Warp‑cooperative execution assigns an entire warp to a single block, allowing threads to share a single hash computation and to perform atomic bit updates without redundant work. Compute latency is reduced by employing branch‑free multiplicative hashing, which generates fingerprints in a pipeline that can be overlapped with memory operations, thus hiding latency via instruction‑level parallelism.

The authors implement these ideas in a modular CUDA/C++ library and evaluate it on several NVIDIA GPUs (B200, H200 SXM, RTX PRO 6000). Experiments cover both cache‑resident configurations (filter fits entirely within the L2 cache) and DRAM‑resident configurations. In the cache‑resident regime, the optimized design reaches more than 92 % of the measured “speed‑of‑light” bound, indicating that memory latency is effectively eliminated. In the DRAM‑resident regime, where bandwidth becomes the bottleneck, the same design still achieves up to 15.4× higher lookup throughput and 11.35× higher construction throughput compared with the state‑of‑the‑art GPU implementation (WarpCore BBF). Importantly, these gains are obtained without sacrificing false‑positive rate; at iso‑error rates the new method matches or exceeds the accuracy of high‑precision configurations while delivering the speed of high‑error variants.

The paper also provides a systematic exploration of the hyper‑parameter space (block size, number of hash functions, sector count, etc.), showing how performance scales with filter size and how the optimal configuration shifts depending on whether the workload is compute‑bound or memory‑bound. The authors demonstrate that when the filter size exceeds the L2 cache, performance is fundamentally limited by random‑access DRAM bandwidth, but their coalesced access pattern still extracts the majority of the available bandwidth. Across all tested GPUs, the proposed optimizations consistently outperform prior work, confirming that careful alignment of data layout, thread cooperation, and instruction scheduling can unlock the full potential of GPUs for Bloom filter workloads. The authors plan to release the library as open source, enabling immediate acceleration for a broad range of applications that rely on fast approximate set membership testing.

Comments & Academic Discussion

Loading comments...

Leave a Comment