DeX-Portrait: Disentangled and Expressive Portrait Animation via Explicit and Latent Motion Representations

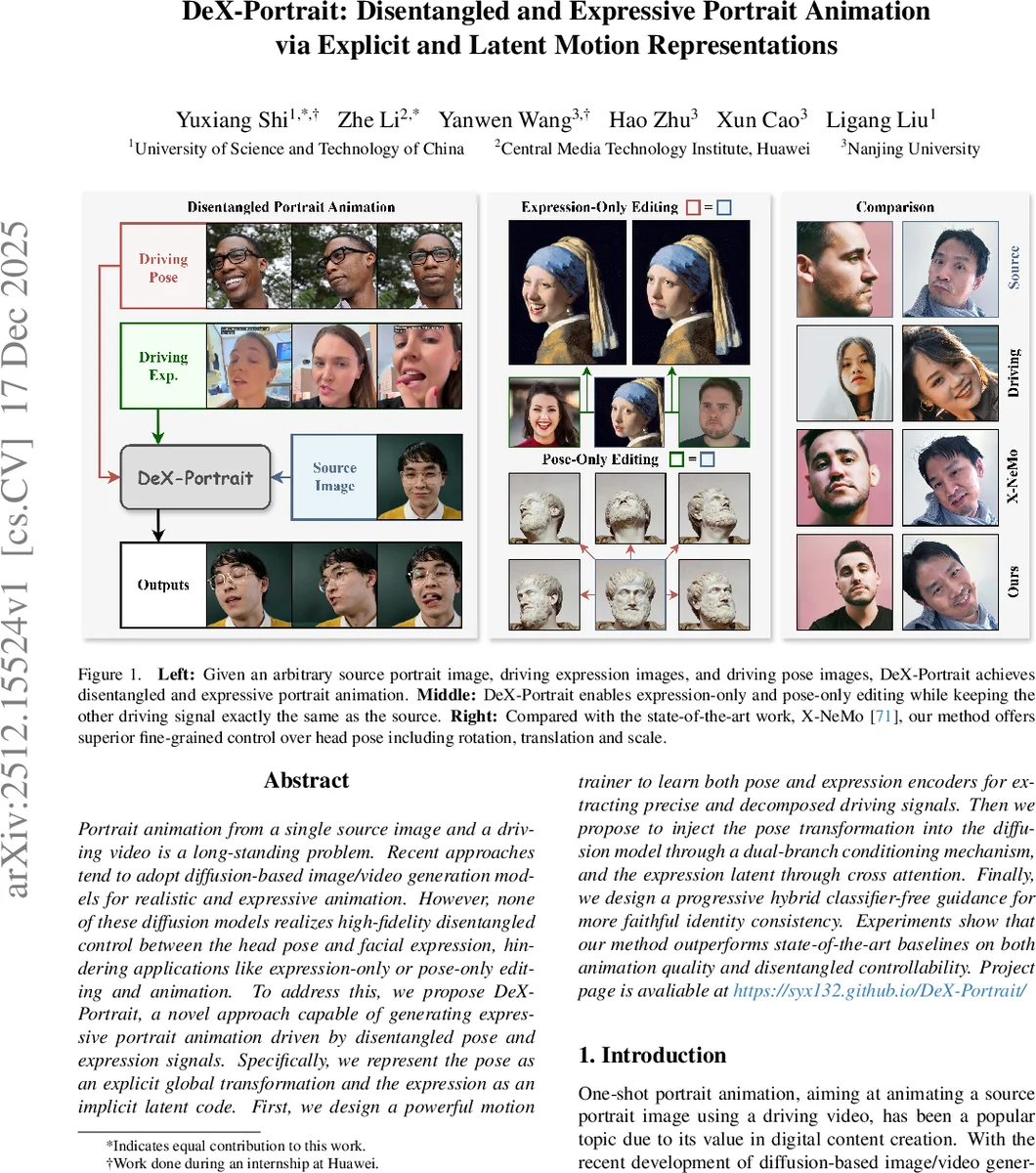

Portrait animation from a single source image and a driving video is a long-standing problem. Recent approaches tend to adopt diffusion-based image/video generation models for realistic and expressive animation. However, none of these diffusion models realizes high-fidelity disentangled control between the head pose and facial expression, hindering applications like expression-only or pose-only editing and animation. To address this, we propose DeX-Portrait, a novel approach capable of generating expressive portrait animation driven by disentangled pose and expression signals. Specifically, we represent the pose as an explicit global transformation and the expression as an implicit latent code. First, we design a powerful motion trainer to learn both pose and expression encoders for extracting precise and decomposed driving signals. Then we propose to inject the pose transformation into the diffusion model through a dual-branch conditioning mechanism, and the expression latent through cross attention. Finally, we design a progressive hybrid classifier-free guidance for more faithful identity consistency. Experiments show that our method outperforms state-of-the-art baselines on both animation quality and disentangled controllability.

💡 Research Summary

The paper “DeX-Portrait: Disentangled and Expressive Portrait Animation via Explicit and Latent Motion Representations” addresses a critical limitation in current diffusion-based portrait animation: the entanglement of head pose and facial expression. In existing state-of-the-art models, controlling the rotation of the head often leads to unintended changes in facial expressions, making it nearly impossible to perform isolated editing tasks such as “pose-only” or “expression-only” animation.

To overcome this, the authors introduce DeX-Portrait, a framework that decomposes motion into two distinct and decoupled representations. The first is an “explicit” representation for pose, modeled as a global 3D transformation matrix. By treating pose as a geometric transformation, the model can precisely manipulate the spatial orientation of the head without altering the underlying facial features. The second is an “implicit” representation for expression, encoded as a latent code. This allows the model to capture the complex, non-linear, and subtle nuances of facial muscle movements through learned patterns in a latent space.

The architecture relies on a specialized “Motion Trainer” designed to train encoders capable of extracting these decomposed signals from a driving video. The integration into the diffusion model is achieved through a “dual-branch conditioning mechanism.” The pose transformation is injected via a branch that handles spatial warping and geometric shifts, while the expression latent is integrated through a cross-attention mechanism, similar to how text prompts are processed in text-to-image models.

Furthermore, the paper introduces “Progressive Hybrid Classifier-Free Guidance” (CFG) to tackle the challenge of identity preservation. A common issue in animation is that the subject’s identity drifts as the motion intensity increases. The proposed hybrid guidance strategy dynamically balances the influence of the driving signal and the original source image throughout the diffusion denoising process, ensuring that the animated character remains highly consistent with the source identity.

Experimental results demonstrate that DeX-Portrait significantly outperforms existing baselines in both animation fidelity and disentangled controllability. The ability to independently manipulate pose and expression marks a substantial step forward for applications in digital human creation, visual effects, and interactive media, where precise and granular control over facial performance is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment