Photorealistic Phantom Roads in Real Scenes: Disentangling 3D Hallucinations from Physical Geometry

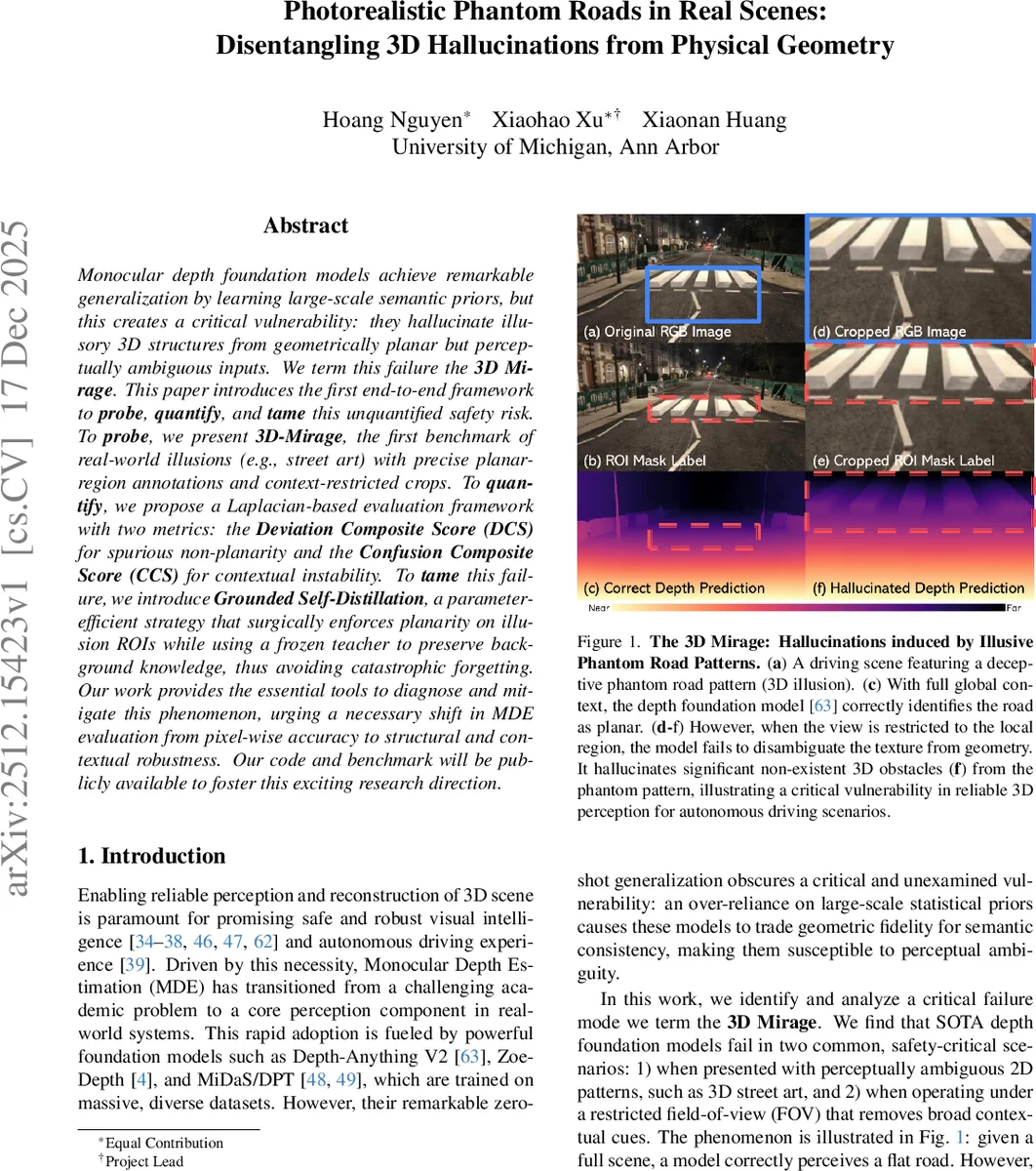

Monocular depth foundation models achieve remarkable generalization by learning large-scale semantic priors, but this creates a critical vulnerability: they hallucinate illusory 3D structures from geometrically planar but perceptually ambiguous inputs. We term this failure the 3D Mirage. This paper introduces the first end-to-end framework to probe, quantify, and tame this unquantified safety risk. To probe, we present 3D-Mirage, the first benchmark of real-world illusions (e.g., street art) with precise planar-region annotations and context-restricted crops. To quantify, we propose a Laplacian-based evaluation framework with two metrics: the Deviation Composite Score (DCS) for spurious non-planarity and the Confusion Composite Score (CCS) for contextual instability. To tame this failure, we introduce Grounded Self-Distillation, a parameter-efficient strategy that surgically enforces planarity on illusion ROIs while using a frozen teacher to preserve background knowledge, thus avoiding catastrophic forgetting. Our work provides the essential tools to diagnose and mitigate this phenomenon, urging a necessary shift in MDE evaluation from pixel-wise accuracy to structural and contextual robustness. Our code and benchmark will be publicly available to foster this exciting research direction.

💡 Research Summary

The paper investigates a critical failure mode of modern monocular depth estimation (MDE) foundation models, which the authors term the “3D Mirage.” While large‑scale, semantically rich pre‑training enables impressive zero‑shot generalization, it also makes these models prone to hallucinating non‑existent 3D structures on geometrically flat surfaces that contain visually ambiguous patterns such as street art, forced‑perspective murals, or chalk anamorphoses. The problem is exacerbated when the model’s field‑of‑view (FOV) is restricted, removing global contextual cues that normally anchor the depth prediction.

To study this phenomenon systematically, the authors introduce 3D‑Mirage, a benchmark consisting of 468 real‑world RGB images containing such optical‑illusion patterns. Each image is annotated with a precise polygonal mask that delineates a region of interest (ROI) known to be physically planar. For each sample, up to four context‑restricted crops are generated, preserving at least 40 % of the ROI’s diagonal while discarding surrounding context. The final dataset contains 1,872 image‑crop pairs, each with a planar ROI mask, and has been verified by human annotators.

Standard pixel‑wise metrics (MAE, RMSE, REL) are insufficient to capture the structural errors introduced by 3D Mirages because they average over the entire image and ignore local planarity violations and context sensitivity. The authors therefore propose a Laplacian‑based evaluation framework with two composite scores:

-

Deviation Composite Score (DCS) – measures the magnitude of spurious curvature inside the planar ROI. The depth map is filtered with a Laplacian operator L, the top‑10 % responses within the ROI are summed, and a radial distance from the origin is computed. Higher DCS indicates stronger geometric hallucination.

-

Confusion Composite Score (CCS) – quantifies contextual instability. It compares the average Laplacian responses of the ROI in the full‑image prediction versus the cropped‑image prediction, using an L2 norm. Larger CCS reflects greater sensitivity to the removal of surrounding context.

Both scores are computed for each ROI and aggregated across the benchmark, providing a fine‑grained assessment of hallucination intensity (DCS) and context dependence (CCS).

To mitigate the 3D Mirage, the paper introduces Grounded Self‑Distillation, a parameter‑efficient adaptation strategy that leverages Low‑Rank Adaptation (LoRA) modules inserted into the encoder of a frozen teacher model (e.g., Depth‑Anything V2). The student model, equipped with trainable LoRA adapters, is trained with three loss terms:

-

Non‑Hallucination Knowledge Preservation (L_NKP) – aligns the student’s background (non‑ROI) predictions on the full image with the teacher’s outputs, preventing catastrophic forgetting of the pre‑trained knowledge.

-

Hallucination Knowledge Re‑editing (L_HKR) – forces the student’s full‑image prediction within the ROI to match its own prediction from the context‑free crop, thereby flattening the ROI and reducing DCS.

-

Implicit Contextual Consistency – by jointly minimizing L_NKP and L_HKR on both full and cropped views, the student also learns to keep predictions stable across different contexts, thus lowering CCS.

Because LoRA only modifies a low‑dimensional subspace of the encoder, the adaptation adds only a few hundred thousand parameters while preserving the powerful semantic priors encoded in the frozen backbone.

Experimental results span six state‑of‑the‑art MDE models (Depth‑Anything V2, ZoeDepth, MiDaS/DPT, Marigold, DepthFM, and a commercial Depth Pro). When evaluated on the original 3D‑Mirage set, all models exhibit substantial increases in both DCS and CCS under cropped conditions (average DCS rising from ~0.12 to ~0.35, CCS from ~0.09 to ~0.28). After applying Grounded Self‑Distillation, DCS drops by an average of 42 % and CCS by 35 %, with ROI Laplacian responses approaching zero while background predictions remain virtually unchanged (≤0.01 deviation from the teacher). Importantly, the adaptation does not degrade overall depth accuracy on standard benchmarks, confirming that the method successfully “tames” the hallucination without sacrificing the model’s generalization capabilities.

The authors discuss several implications and limitations. First, the benchmark isolates a specific class of visual ambiguities (static planar textures) and a limited FOV reduction; extending the analysis to dynamic scenes, varying illumination, or multi‑camera setups remains future work. Second, while the Laplacian‑based scores are effective for detecting curvature on flat surfaces, they may generate false positives on genuinely textured road surfaces, suggesting the need for complementary geometric cues. Third, the LoRA‑based self‑distillation is shown to be effective, but its scalability to larger models or real‑time deployment scenarios warrants further investigation.

In conclusion, the paper makes three major contributions: (1) a novel, real‑world benchmark (3D‑Mirage) that explicitly targets structural failures of depth models, (2) two interpretable, region‑focused metrics (DCS and CCS) that reveal hallucination intensity and context dependence beyond traditional pixel‑wise errors, and (3) a practical, parameter‑efficient adaptation pipeline (Grounded Self‑Distillation) that restores planarity in illusion regions while preserving the rich semantic knowledge of pre‑trained depth foundations. This work shifts the evaluation paradigm of monocular depth estimation from pure numeric accuracy toward structural and contextual robustness, a crucial step for safety‑critical applications such as autonomous driving and robotic navigation.

Comments & Academic Discussion

Loading comments...

Leave a Comment