ERIENet: An Efficient RAW Image Enhancement Network under Low-Light Environment

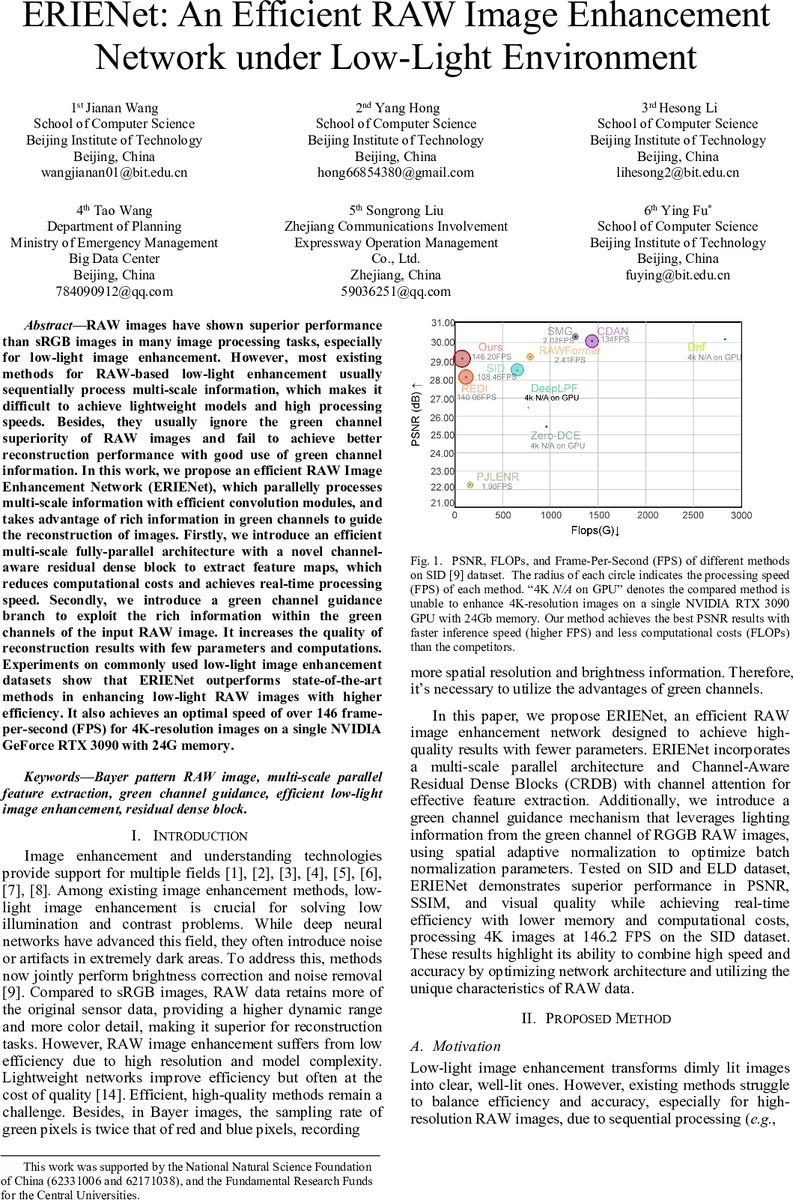

RAW images have shown superior performance to sRGB images in many image processing tasks, especially low-light image enhancement. However, most existing RAW-based low-light enhancement methods process multi-scale information sequentially, which makes it difficult to achieve lightweight models and high processing speeds. They also tend to overlook the advantage of the green channels in RAW images, and so fail to exploit green channel information for better reconstruction. In this work, we propose an efficient RAW Image Enhancement Network (ERIENet), which processes multi-scale information in parallel with efficient convolution modules and uses the rich information in the green channels to guide image reconstruction. First, we introduce an efficient multi-scale fully parallel architecture with a novel channel-aware residual dense block to extract feature maps, which reduces computational cost and achieves real-time processing speed. Second, we introduce a green channel guidance branch to exploit the rich information within the green channels of the input RAW image. It improves reconstruction quality at little cost in parameters and computation. Experiments on commonly used low-light image enhancement datasets show that ERIENet outperforms state-of-the-art methods in enhancing low-light RAW images with higher efficiency. It also reaches a speed of over 146 frames per second (FPS) for 4K-resolution images on a single NVIDIA GeForce RTX 3090 with 24 GB of memory.

💡 Research Summary

The paper presents ERIENet, an Efficient RAW Image Enhancement Network designed for low‑light photography. Unlike most existing approaches that first convert RAW data to sRGB and then apply enhancement, ERIENet operates directly on the Bayer‑RGGB RAW format, preserving the sensor’s full dynamic range and color fidelity. The authors identify two major obstacles to practical deployment: (1) RAW images are typically high‑resolution, leading to prohibitive computational cost; (2) the green channel in Bayer patterns is sampled twice as often as red or blue, yet most methods ignore this valuable information.

To address these issues, ERIENet introduces three core innovations. First, a multi‑scale parallel feature extraction backbone downsamples the input RAW image by factors of 4, 8, and 16, and processes each scale in an independent branch. This fully parallel design eliminates the sequential bottleneck of traditional pyramid networks, allowing the lowest‑resolution branch (16×) to carry the bulk of the computation while still benefiting from high‑resolution detail supplied by the other branches.
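As a rough illustration of how the three branch inputs could be prepared, the NumPy sketch below packs an RGGB mosaic into four planes and downsamples it by factors of 4, 8, and 16. The packing step, the use of average pooling, and the names `pack_rggb`, `avg_pool`, and `multi_scale_inputs` are assumptions made for this example; the summary does not say how ERIENet downsamples.

```python
import numpy as np

def pack_rggb(raw):
    """Pack an (H, W) RGGB Bayer mosaic into a (4, H/2, W/2) array: R, G1, G2, B."""
    return np.stack([
        raw[0::2, 0::2],  # R
        raw[0::2, 1::2],  # G1 (green on the red rows)
        raw[1::2, 0::2],  # G2 (green on the blue rows)
        raw[1::2, 1::2],  # B
    ])

def avg_pool(x, factor):
    """Downsample a (C, H, W) array by averaging factor x factor blocks.

    Assumes H and W are divisible by factor.
    """
    c, h, w = x.shape
    blocks = x.reshape(c, h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(2, 4))

def multi_scale_inputs(x, factors=(4, 8, 16)):
    """Build one input per branch. Each entry depends only on x,
    so the branches can be computed independently of one another."""
    return {f: avg_pool(x, f) for f in factors}

raw = np.random.rand(64, 64)
scales = multi_scale_inputs(pack_rggb(raw))
for factor, branch_input in scales.items():
    print(factor, branch_input.shape)
```

Because every branch input is derived directly from the packed image rather than from the previous scale, no branch has to wait for another, which is the property the parallel design relies on.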

Second, each branch is built from a novel Channel‑aware Residual Dense Block (CRDB). CRDB retains the dense connectivity of a Residual Dense Block but incorporates Efficient Channel Attention (ECA) – a lightweight 1‑D convolution that adaptively re‑weights channel responses without adding significant parameters. The block concatenates the outputs of several ReLU‑Conv layers, compresses them with a 1×1 convolution, and then applies ECA. This yields strong feature representation with only 1.13 G FLOPs and 39 M parameters for the whole network.
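The channel re-weighting step can be sketched in a few lines of NumPy. This follows the usual ECA recipe (global average pooling, a 1-D convolution across the channel axis, a sigmoid gate); the kernel values, the zero padding, and the function name `eca` are assumptions for illustration, and the dense convolution layers of the CRDB are not shown.

```python
import numpy as np

def eca(features, kernel):
    """Efficient Channel Attention on a (C, H, W) feature map.

    kernel: 1-D weights of odd length k, shared across all channels,
    so the module adds only k parameters.
    """
    kernel = np.asarray(kernel, dtype=float)
    c = features.shape[0]
    pooled = features.mean(axis=(1, 2))            # one descriptor per channel, shape (C,)
    padded = np.pad(pooled, len(kernel) // 2)      # zero-pad so the output keeps length C
    # 1-D cross-correlation along the channel axis: each channel mixes with its neighbours.
    mixed = np.array([padded[i:i + len(kernel)] @ kernel for i in range(c)])
    weights = 1.0 / (1.0 + np.exp(-mixed))         # sigmoid gate in (0, 1)
    return features * weights[:, None, None]       # re-weight each channel

features = np.random.rand(8, 4, 4)
out = eca(features, kernel=[0.25, 0.5, 0.25])
print(out.shape)
```

The parameter count depends only on the kernel length, not on the number of channels, which is why the attention step is cheap compared with the convolutions around it.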

Third, the network exploits the superior sampling of the green channel through a Green Channel Guidance (GCG) branch. The GCG extracts illumination‑sensitive scaling (γ) and shifting (β) parameters from the green channel via 3×3 convolutions and injects them into a Spatially‑Adaptive Normalization (SAN) layer placed inside the CRDB of the 16× branch. By modulating the normalized features with γ and β, the network can dynamically emphasize illumination cues, leading to better recovery of dark‑area details, reduced color bias, and more natural textures.
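A minimal NumPy sketch of this kind of modulation is shown below. It assumes per-channel normalization statistics, a single 3×3 convolution for each of γ and β, a green map already resized to the feature resolution, and the form `gamma * normalized + beta`; none of these details are confirmed by the summary, and `conv3x3` and `green_guided_norm` are hypothetical names.

```python
import numpy as np

def conv3x3(x, weights):
    """Zero-padded 3x3 convolution from one (H, W) plane to (C, H, W).

    weights: (C, 3, 3), one filter per output channel.
    """
    h, w = x.shape
    padded = np.pad(x, 1)
    out = np.zeros((weights.shape[0], h, w))
    for dy in range(3):
        for dx in range(3):
            out += weights[:, dy, dx, None, None] * padded[dy:dy + h, dx:dx + w]
    return out

def green_guided_norm(features, green, w_gamma, w_beta, eps=1e-5):
    """Normalize (C, H, W) features, then scale and shift them per pixel
    with maps predicted from the green plane (assumed to be H x W)."""
    mean = features.mean(axis=(1, 2), keepdims=True)
    std = features.std(axis=(1, 2), keepdims=True)
    normalized = (features - mean) / (std + eps)
    gamma = conv3x3(green, w_gamma)   # spatially varying scale
    beta = conv3x3(green, w_beta)     # spatially varying shift
    return gamma * normalized + beta

channels = 4
features = np.random.rand(channels, 6, 6)
green = np.random.rand(6, 6)          # e.g. the mean of the two green planes (assumption)
w_gamma = np.random.randn(channels, 3, 3) * 0.1
w_beta = np.random.randn(channels, 3, 3) * 0.1
print(green_guided_norm(features, green, w_gamma, w_beta).shape)
```

Unlike ordinary normalization layers, where the scale and shift are learned constants per channel, here they vary with position and follow the green-channel content.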

The loss function combines three terms: an L1 pixel loss, a Wavelet‑SSIM loss, and a Wavelet‑MSE loss. Using a three‑level Haar wavelet decomposition, the authors enforce similarity both in the spatial domain (L1) and in multi‑scale frequency domains (wavelet losses), encouraging the network to preserve high‑frequency details while suppressing noise.
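To make the wavelet part of the loss concrete, here is a hedged NumPy sketch of a three-level orthonormal Haar decomposition with an L1 term and a wavelet-domain MSE term. The Wavelet-SSIM term is omitted for brevity, and the weight `wavelet_weight` and all function names are assumptions; the summary does not give the actual weighting.

```python
import numpy as np

def haar_level(x):
    """One level of the orthonormal 2-D Haar transform of an (H, W) array.

    Returns the low-pass band and a tuple of three detail bands,
    each of size (H/2, W/2). Assumes H and W are even.
    """
    a, b = x[0::2, 0::2], x[0::2, 1::2]
    c, d = x[1::2, 0::2], x[1::2, 1::2]
    low = (a + b + c + d) / 2
    details = ((a + b - c - d) / 2, (a - b + c - d) / 2, (a - b - c + d) / 2)
    return low, details

def haar_decompose(x, levels=3):
    """Return all detail bands from every level plus the final low-pass band."""
    bands, low = [], x
    for _ in range(levels):
        low, details = haar_level(low)
        bands.extend(details)
    bands.append(low)
    return bands

def wavelet_mse(pred, target, levels=3):
    """Sum of per-band mean squared errors in the Haar domain."""
    pairs = zip(haar_decompose(pred, levels), haar_decompose(target, levels))
    return sum(np.mean((p - t) ** 2) for p, t in pairs)

def sketch_loss(pred, target, wavelet_weight=1.0):
    """L1 pixel loss plus weighted wavelet MSE (Wavelet-SSIM term not included)."""
    return np.mean(np.abs(pred - target)) + wavelet_weight * wavelet_mse(pred, target)

target = np.random.rand(16, 16)
pred = target + 0.05 * np.random.randn(16, 16)
print(sketch_loss(pred, target))
```

Averaging the error per band, rather than over all coefficients at once, gives the small coarse bands the same weight as the large fine ones, which is one simple way to balance scales.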

Experiments are conducted on two benchmark datasets: SID (Sony α7S II and Fujifilm X-Trans RAW images) and ELD (indoor scenes captured at ISO 800-3200). Quantitative results show that ERIENet achieves PSNR/SSIM/LPIPS of 29.12 dB / 0.797 / 0.259 on SID and 28.80 dB / 0.819 / 0.281 on ELD, outperforming nine state-of-the-art methods (both RAW-based and sRGB-based). Notably, ERIENet processes 4K RAW images at over 146 FPS on an NVIDIA RTX 3090, a speed that none of the compared methods reach. Ablation studies confirm that each component (parallel multi-scale extraction, GCG-SAN, and CRDB) contributes significantly to the final performance, with the green-channel guidance alone improving PSNR by more than 1 dB.
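For readers unfamiliar with the headline metric, PSNR is computed from the mean squared error between the enhanced image and the reference. The sketch below assumes images scaled to the range [0, 1]; the function name `psnr` and the `max_value` parameter are chosen for this example and do not come from the paper's evaluation code.

```python
import numpy as np

def psnr(pred, target, max_value=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_value^2 / MSE)."""
    diff = np.asarray(pred, dtype=float) - np.asarray(target, dtype=float)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

target = np.zeros((8, 8))
pred = np.full((8, 8), 0.1)   # constant error of 0.1, so MSE = 0.01
print(psnr(pred, target))     # about 20 dB
```

Because the scale is logarithmic, a gain of 1 dB corresponds to roughly a 21% reduction in mean squared error.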

The authors acknowledge that ERIENet is currently tailored to the Bayer RGGB pattern; extending the design to other CFA layouts (e.g., X‑Trans) and to video sequences would be valuable future work. Nonetheless, the paper demonstrates that a carefully engineered architecture can simultaneously achieve real‑time throughput, low memory footprint, and high visual quality for low‑light RAW enhancement. ERIENet therefore offers a practical solution for applications such as night‑time photography, surveillance, and mobile image processing where both speed and fidelity are critical.
