BookReconciler: An Open-Source Tool for Metadata Enrichment and Work-Level Clustering

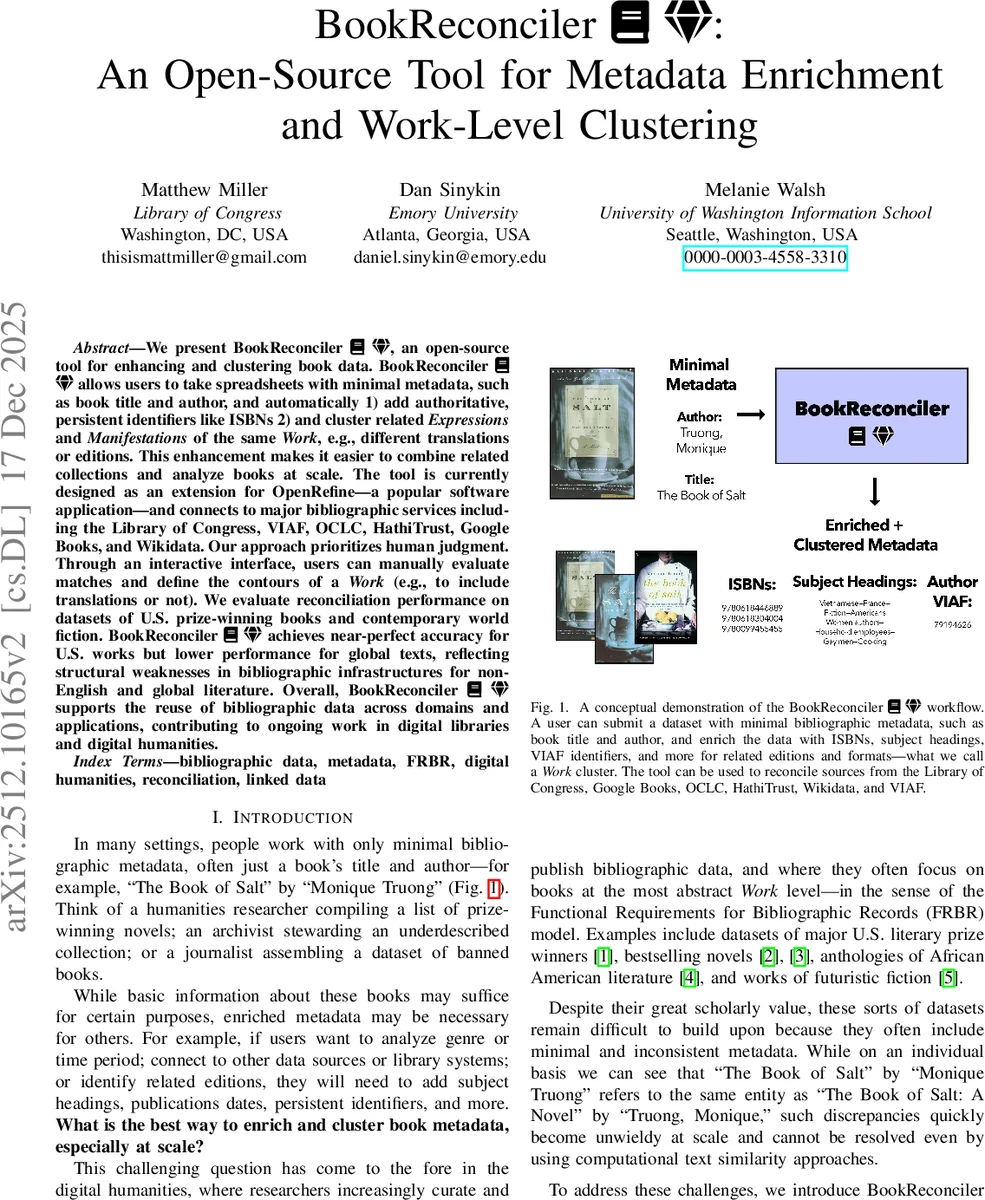

We present BookReconciler, an open-source tool for enhancing and clustering book data. BookReconciler allows users to take spreadsheets with minimal metadata, such as book title and author, and automatically 1) add authoritative, persistent identifiers like ISBNs 2) and cluster related Expressions and Manifestations of the same Work, e.g., different translations or editions. This enhancement makes it easier to combine related collections and analyze books at scale. The tool is currently designed as an extension for OpenRefine – a popular software application – and connects to major bibliographic services including the Library of Congress, VIAF, OCLC, HathiTrust, Google Books, and Wikidata. Our approach prioritizes human judgment. Through an interactive interface, users can manually evaluate matches and define the contours of a Work (e.g., to include translations or not). We evaluate reconciliation performance on datasets of U.S. prize-winning books and contemporary world fiction. BookReconciler achieves near-perfect accuracy for U.S. works but lower performance for global texts, reflecting structural weaknesses in bibliographic infrastructures for non-English and global literature. Overall, BookReconciler supports the reuse of bibliographic data across domains and applications, contributing to ongoing work in digital libraries and digital humanities.

💡 Research Summary

BookReconciler is an open‑source extension for the data‑cleaning platform OpenRefine that automates the enrichment and work‑level clustering of book metadata. Users start with a spreadsheet that may contain only a title and an author name. By selecting the column(s) to reconcile and optionally adding auxiliary properties such as author full name or publication year, the tool queries six major bibliographic services—Library of Congress, Google Books, VIAF, OCLC WorldCat, Wikidata, and HathiTrust—through their public APIs (or database dumps in the case of HathiTrust). Candidate matches are scored using a Levenshtein distance metric (0‑100) and the highest‑scoring result is chosen for each row. When a service supplies a persistent work identifier (e.g., OCLC Work ID), BookReconciler groups all returned records that share that identifier, thereby clustering different expressions (translations, reprints, adaptations) and manifestations of the same intellectual work.

A key design principle is the human‑in‑the‑loop interface. After the automated reconciliation step, each reconciled cell displays a hover preview; clicking opens a Flask‑based web view that visualises the entire work cluster. Users can manually accept or reject individual matches, decide whether to include translations, and otherwise shape the definition of a “Work” to suit their research goals. The enriched fields—ISBNs, subject headings, genres, publication dates, descriptions—can then be imported back into the OpenRefine table via its “Data Extension” feature, with options for handling multi‑value cells (concatenation or row explosion).

The authors evaluate BookReconciler on two distinct corpora. The first consists of 691 U.S. literary‑prize winners spanning 1918‑2020, a dataset dominated by English‑language works. Using Google Books alone yields a 98 % correct‑match rate; aggregating results from all six services raises accuracy to 99 %. Performance degrades for poetry and for books with variant author name representations (e.g., “W. S. Merwin” vs “William Stanley Merwin”). The second corpus contains 1,139 titles of contemporary world fiction published 2012‑2023, representing 13 countries, nine languages, and five continents. Here the best single‑service accuracy drops to 63 % (Google Books), while other services achieve between 0 % and 36 %. The overall “all‑services” accuracy mirrors the Google Books figure at 63 %. These results highlight the strong bias of major authority services toward English‑language, U.S.-centric collections and expose structural gaps in the global bibliographic infrastructure.

The paper discusses several limitations. Reliance on external APIs makes the tool vulnerable to service outages, rate limits, and incomplete coverage; HathiTrust, for example, lacks a public API and must be accessed via periodic database dumps. The Levenshtein‑based string similarity, while effective for minor typographical variations, struggles with semantic ambiguities such as translated titles, homographs, or divergent naming conventions. Moreover, the current set of services provides limited multilingual coverage, which directly explains the lower performance on the world‑fiction dataset.

Future work is outlined along three axes. First, expanding the repertoire of authority services to include non‑English national catalogs (e.g., France’s data.bnf.fr, Australia’s Trove, Japan’s NDL Linked Open Data) would improve coverage for multilingual collections. Second, integrating large language models (LLMs) as a secondary similarity layer could capture semantic relationships that pure string metrics miss; however, the authors stress that any LLM‑driven suggestions must remain subject to human verification to avoid propagating systematic biases. Third, the authors propose to translate the abstract FRBR model into concrete clustering rules and to publish a practical guide for libraries and digital‑humanities scholars, thereby fostering broader adoption of work‑level metadata practices.

In conclusion, BookReconciler demonstrates that a modest, user‑friendly interface built on top of OpenRefine can substantially lower the barrier to enriching sparse bibliographic datasets and to constructing work‑level clusters. Its near‑perfect performance on well‑documented U.S. literary data validates the technical approach, while the challenges observed with global, multilingual corpora point to systemic gaps in existing bibliographic infrastructures. By open‑sourcing the tool under an MIT license, providing Docker deployment, and offering tutorial material, the authors invite community contributions to sustain and extend the platform. The system thus represents a valuable addition to the digital‑humanities and library‑science toolkits, enabling more interoperable, richly described book datasets for research, curation, and public communication.

Comments & Academic Discussion

Loading comments...

Leave a Comment