SparseWorld-TC: Trajectory-Conditioned Sparse Occupancy World Model

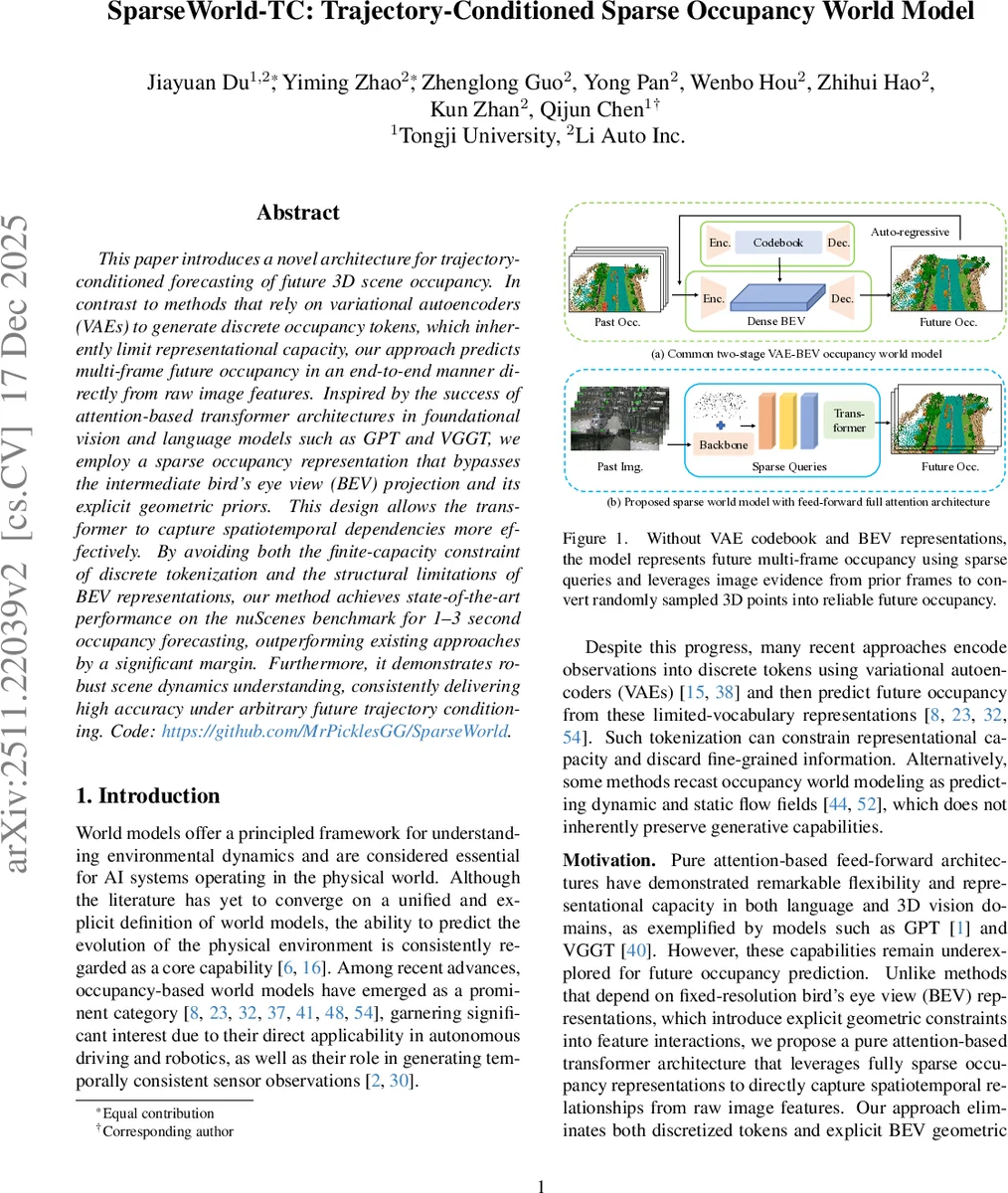

This paper introduces a novel architecture for trajectory-conditioned forecasting of future 3D scene occupancy. In contrast to methods that rely on variational autoencoders (VAEs) to generate discrete occupancy tokens, which inherently limit representational capacity, our approach predicts multi-frame future occupancy in an end-to-end manner directly from raw image features. Inspired by the success of attention-based transformer architectures in foundational vision and language models such as GPT and VGGT, we employ a sparse occupancy representation that bypasses the intermediate bird’s eye view (BEV) projection and its explicit geometric priors. This design allows the transformer to capture spatiotemporal dependencies more effectively. By avoiding both the finite-capacity constraint of discrete tokenization and the structural limitations of BEV representations, our method achieves state-of-the-art performance on the nuScenes benchmark for 1-3 second occupancy forecasting, outperforming existing approaches by a significant margin. Furthermore, it demonstrates robust scene dynamics understanding, consistently delivering high accuracy under arbitrary future trajectory conditioning.

💡 Research Summary

SparseWorld‑TC introduces a trajectory‑conditioned 3D occupancy forecasting framework that departs from the two dominant paradigms in current occupancy‑based world modeling: discrete tokenization via variational autoencoders (VAEs/VQ‑VAEs) and intermediate bird’s‑eye‑view (BEV) representations. Both paradigms impose restrictive bottlenecks—VAEs limit representational capacity through a finite vocabulary and discard fine‑grained geometry, while BEV projects the 3D scene onto a 2‑D grid, imposing explicit geometric priors that hinder flexible spatiotemporal interaction.

The core contribution of the paper is a sparse occupancy representation built around “anchors”. An anchor consists of a set of randomly initialized 3‑D query points and an associated learnable feature vector. Each feature vector is processed by two lightweight multilayer perceptrons (MLPs) to predict per‑point offsets (Δp) and semantic class logits (s). Starting from noisy, uniformly distributed points, the model iteratively refines them into a coherent occupancy field through attention‑driven interactions.

The architecture is a pure transformer that relies exclusively on attention mechanisms—self‑attention for intra‑anchor communication and deformable cross‑attention for fusing image evidence. Deformable attention projects each anchor’s centroid into multi‑scale image feature maps (produced by a ResNet or ViT backbone) using known camera intrinsics, extrinsics, and ego‑motion. Sampling offsets are derived from the mean and standard deviation of the points within an anchor, allowing the model to aggregate features across multiple camera views when fields of view overlap. This design eliminates the need for any BEV or voxel grid, preserving full 3‑D geometry while keeping computational cost manageable.

Trajectory conditioning is achieved by embedding the future ego‑vehicle trajectory as a sequence of way‑points, each containing planar position (x, y), heading θ, and timestamp t. Position embeddings are generated by applying a relative pose transformation followed by an MLP, while temporal embeddings use classic sinusoidal encodings processed through a linear layer. The two embeddings are fused via a spatial‑temporal embedding module that learns affine transformations between adjacent frames, effectively injecting both spatial layout and temporal ordering into the trajectory feature vectors. These trajectory embeddings are concatenated with the anchor and sensor embeddings before entering the transformer, enabling the model to steer future occupancy evolution according to the planned path.

Training is performed on the nuScenes dataset, targeting 1‑ to 3‑second ahead occupancy prediction. The loss combines regression on point offsets with cross‑entropy on semantic logits, encouraging the random points to converge to accurate occupancy predictions. Quantitative results show that SparseWorld‑TC outperforms state‑of‑the‑art VAE‑based methods (e.g., Occupancy‑World, DOME) by 4–6 percentage points in mean Intersection‑over‑Union (mIoU), with particularly large gains on dynamic objects such as vehicles and pedestrians. Moreover, when the conditioning trajectory is arbitrarily altered, the model maintains consistent performance, demonstrating robust trajectory‑aware forecasting.

Ablation studies confirm the importance of each component: removing deformable attention degrades performance due to loss of fine‑grained image cues; replacing sparse anchors with dense BEV features reduces accuracy on small objects; and omitting trajectory embeddings leads to a noticeable drop in conditional prediction quality.

Limitations include sensitivity to the density and distribution of the initial random points, and the current focus on camera imagery without explicit integration of LiDAR or radar modalities. Long‑term forecasting beyond three seconds also shows room for improvement. Future work could explore adaptive point sampling strategies, multimodal sensor fusion, and large‑scale pre‑training to further boost generalization.

In summary, SparseWorld‑TC presents a novel paradigm for occupancy world modeling that bypasses both VAE tokenization and BEV geometric priors. By leveraging a sparse point‑based representation together with a fully attention‑driven transformer, it achieves state‑of‑the‑art accuracy in short‑term 3‑D occupancy forecasting while providing flexible, trajectory‑conditioned control—an essential capability for safe and efficient autonomous driving.

Comments & Academic Discussion

Loading comments...

Leave a Comment