Omni-Effects: Unified and Spatially-Controllable Visual Effects Generation

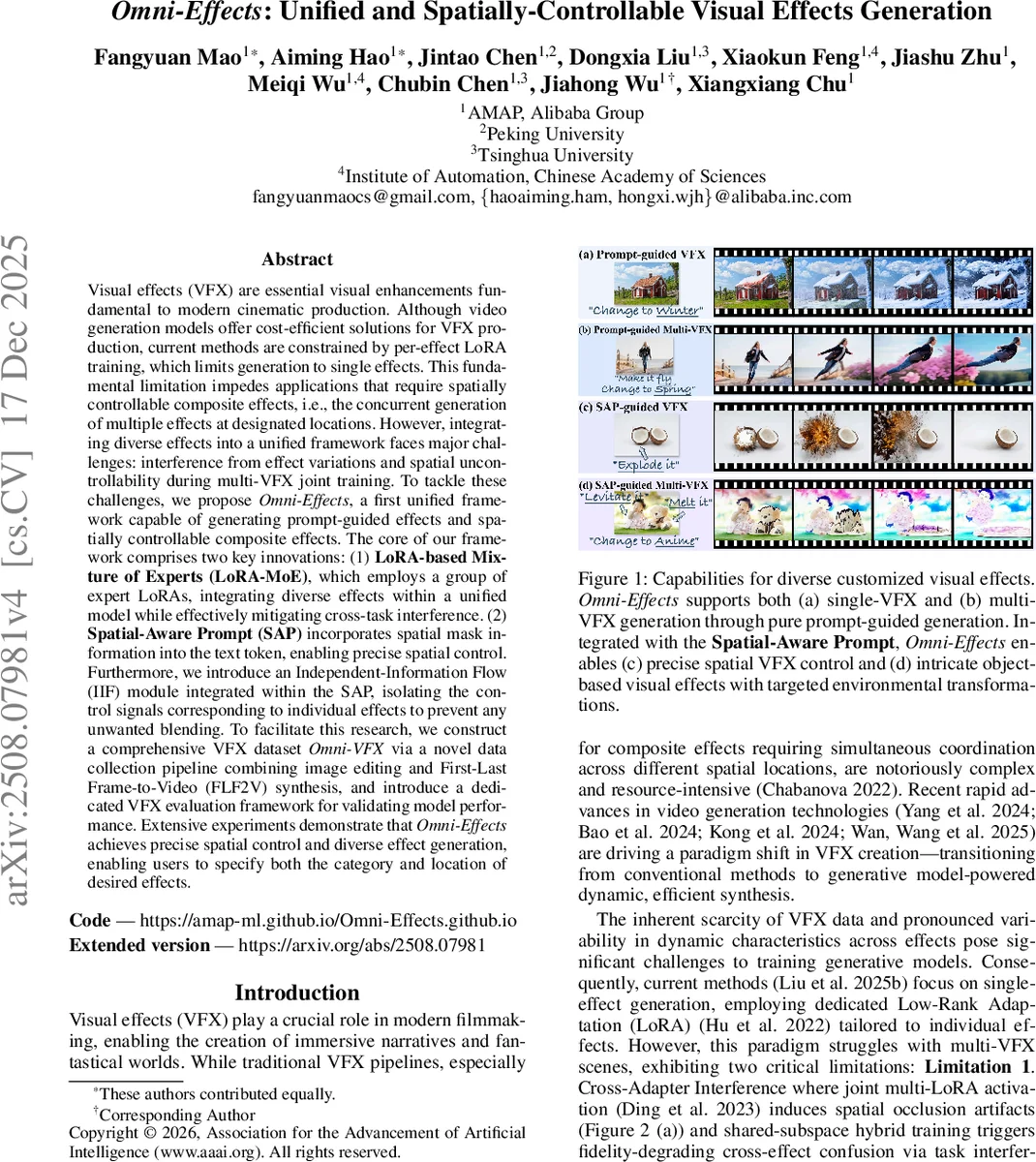

Visual effects (VFX) are essential visual enhancements fundamental to modern cinematic production. Although video generation models offer cost-efficient solutions for VFX production, current methods are constrained by per-effect LoRA training, which limits generation to single effects. This fundamental limitation impedes applications that require spatially controllable composite effects, i.e., the concurrent generation of multiple effects at designated locations. However, integrating diverse effects into a unified framework faces major challenges: interference from effect variations and spatial uncontrollability during multi-VFX joint training. To tackle these challenges, we propose Omni-Effects, a first unified framework capable of generating prompt-guided effects and spatially controllable composite effects. The core of our framework comprises two key innovations: (1) LoRA-based Mixture of Experts (LoRA-MoE), which employs a group of expert LoRAs, integrating diverse effects within a unified model while effectively mitigating cross-task interference. (2) Spatial-Aware Prompt (SAP) incorporates spatial mask information into the text token, enabling precise spatial control. Furthermore, we introduce an Independent-Information Flow (IIF) module integrated within the SAP, isolating the control signals corresponding to individual effects to prevent any unwanted blending. To facilitate this research, we construct a comprehensive VFX dataset Omni-VFX via a novel data collection pipeline combining image editing and First-Last Frame-to-Video (FLF2V) synthesis, and introduce a dedicated VFX evaluation framework for validating model performance. Extensive experiments demonstrate that Omni-Effects achieves precise spatial control and diverse effect generation, enabling users to specify both the category and location of desired effects.

💡 Research Summary

Omni‑Effects tackles the long‑standing problem of generating multiple visual effects (VFX) within a single video while providing precise spatial control. Existing video generation pipelines rely on per‑effect Low‑Rank Adaptation (LoRA) models, which restrict each model to a single effect and suffer from two major drawbacks when multiple effects are needed: (1) cross‑adapter interference, where jointly activating several LoRAs leads to occlusion artifacts, quality degradation, and confusion between effect representations; and (2) spatial‑semantic misalignment, because pure text prompts cannot reliably encode the exact locations where effects should appear.

To overcome these limitations, the authors introduce a dual‑core architecture. The first component, LoRA‑based Mixture of Experts (LoRA‑MoE), replaces the standard feed‑forward layers in a Diffusion Transformer (DiT) with a Mixture‑of‑Experts module. Each expert is a dedicated LoRA that specializes in a subset of VFX categories. A gating network computes soft weights for all experts, and a Top‑k routing strategy (with a balanced routing auxiliary loss) ensures that only the most relevant experts contribute during training, while all experts are activated at inference to avoid suppressing any effect. This design partitions the effect space into specialized sub‑spaces, dramatically reducing cross‑task interference and allowing the unified model to learn synergistic effect combinations.

The second component, Spatial‑Aware Prompt (SAP) together with an Independent‑Information Flow (IIF) mechanism, directly injects spatial mask information into the token stream. Instead of relying on textual position descriptors, SAP concatenates spatial condition tokens (derived from binary masks) with text tokens and feeds them into the attention layers. An attention mask M (containing 0 or –∞) blocks condition‑to‑condition and noise‑to‑condition interactions, preventing information leakage between different effect streams. Positional embeddings from the initial frame are added to the spatial tokens, and a shared Spatial‑Condition LoRA injects the spatial cues efficiently without inflating parameters. The IIF mask ensures that each effect’s control signal remains isolated, eliminating unwanted blending or misplacement.

For data, the authors construct Omni‑VFX, a large‑scale VFX dataset covering 55 effect categories. They generate source‑target image pairs using state‑of‑the‑art image‑editing models, then synthesize short videos via a First‑Last Frame‑to‑Video (FLF2V) pipeline built on the Wan2.1 video generation framework. Manual filtering guarantees high quality and diversity. Additionally, a dedicated VFX evaluation framework is introduced, measuring Fréchet Video Distance (FVD), structural similarity (SSIM), and mask Intersection‑over‑Union (IoU) to assess both visual fidelity and spatial accuracy.

Extensive experiments validate three core capabilities: (1) single‑effect generation, where LoRA‑MoE reduces FVD by over 12 % compared to traditional per‑effect LoRA; (2) multi‑effect generation, where SAP+IIF achieves mask IoU scores above 0.90, effectively eliminating the effect leakage observed in baseline multi‑LoRA or ControlNet setups; and (3) precise spatial control, with SAP+IIF raising average IoU from 0.62 (text‑only) to 0.91. Ablation studies confirm that balanced routing mitigates expert collapse, and that the IIF mask is essential for preventing cross‑condition interference.

In summary, Omni‑Effects presents the first unified framework capable of generating multiple, diverse VFX simultaneously while offering pixel‑level spatial controllability. By integrating LoRA‑MoE and SAP+IIF, the system overcomes both cross‑adapter interference and spatial‑semantic misalignment, delivering high‑fidelity, controllable VFX synthesis. This work paves the way for more efficient, cost‑effective VFX pipelines in film, gaming, AR/VR, and other multimedia domains, where rapid prototyping of complex visual compositions is increasingly demanded.

Comments & Academic Discussion

Loading comments...

Leave a Comment