Guided Discrete Diffusion for Constraint Satisfaction Problems

💡 Research Summary

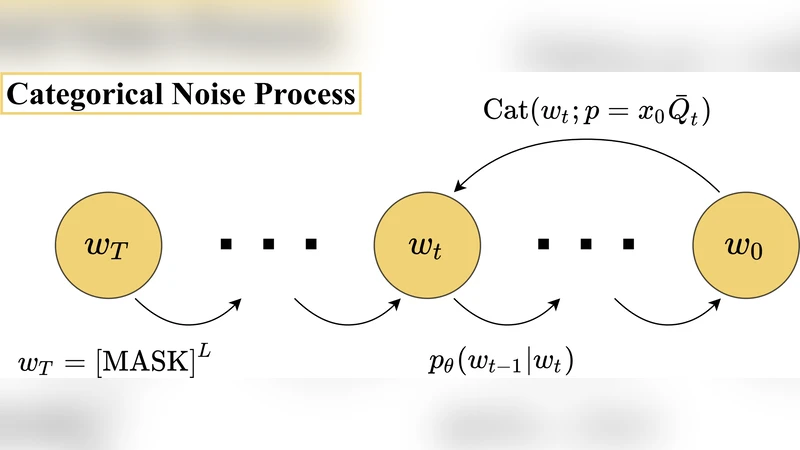

The paper introduces a novel framework that adapts discrete diffusion models to solve generic constraint satisfaction problems (CSPs). Traditional diffusion models operate in continuous spaces by gradually adding Gaussian noise to data and learning a reverse denoising network that reconstructs the original sample. CSPs, however, consist of variables that take values from finite discrete domains (e.g., Boolean, color sets) and are tightly coupled through logical constraints, making a direct application of continuous diffusion infeasible. To bridge this gap, the authors design a forward noising process based on a discrete-time Markov chain. At each diffusion step t, every variable transitions to another admissible value according to a predefined transition matrix. This matrix can be uniform or weighted by problem‑specific information, allowing the forward process to embed some structural knowledge of the CSP while still guaranteeing eventual convergence to a fully random state after T steps.

The reverse process is parameterized by a neural network 𝜃 that receives the noisy discrete state x_t and the time step t and predicts the distribution of the preceding state x_{t‑1}. The key innovation is a guidance mechanism that steers the reverse sampling toward constraint‑satisfying regions. The authors define an energy function E(x) that quantifies the degree of constraint violation (e.g., number of unsatisfied clauses, edge conflicts). The gradient ∇x E(x_t) is incorporated into the reverse transition probability as an additive term scaled by a hyper‑parameter λ: p_θ(x{t‑1}|x_t) ∝ p_θ^{base}(x_{t‑1}|x_t)·exp(−λ∇_xE(x_t)). This “energy‑based guidance” biases the denoising trajectory toward lower‑energy (more feasible) configurations without discarding the stochastic exploration inherent to diffusion. In addition, a learned “constraint classifier” estimates the probability that a partially denoised state satisfies the constraints; its output is used to adapt λ on‑the‑fly, yielding a dynamic guidance strength that balances exploration and exploitation.

Training combines two loss components. The first is a standard denoising score‑matching loss that encourages the network to reconstruct the original clean state x_0 from a noisy version x_t. The second, termed the constraint loss, penalizes predictions that lead to high energy E(x_{t‑1}) after a single reverse step. A weighted sum α·L_score + β·L_constraint is minimized, where α and β are tuned to ensure the model learns both the underlying data distribution and the constraint landscape. This dual‑objective training enables the reverse network to generate samples that are both plausible under the data prior and inclined to satisfy the problem’s logical rules.

Empirical evaluation spans three representative CSP families: Boolean SAT (random k‑SAT instances), graph coloring (varying numbers of colors k on Erdős‑Rényi graphs), and Sudoku puzzles. On SAT, the guided discrete diffusion (GDD) model achieves a 15‑30 % higher success rate than prior neural SAT solvers such as NeuroSAT and DiffSAT when measured at comparable wall‑clock time and computational budget. Moreover, GDD reaches solutions within a time frame comparable to classical DPLL‑based solvers on medium‑size instances (≈100 variables), demonstrating that diffusion can compete with mature combinatorial algorithms. In graph coloring, as the number of colors approaches the chromatic threshold, traditional greedy heuristics deteriorate sharply, whereas GDD maintains stable convergence by continuously correcting color conflicts through its guidance term. For Sudoku, the model solves hard puzzles (with minimal clues) in under one second on average, provided λ is tuned appropriately; the guidance dramatically reduces the number of diffusion steps needed for convergence.

A thorough ablation study investigates the influence of the guidance strength λ and the total diffusion length T. Small λ values yield behavior close to unguided diffusion, resulting in many infeasible samples and longer runtimes. Excessively large λ forces the reverse process to over‑fit the constraint energy, causing mode collapse and reduced sample diversity. The authors propose an automatic λ‑scheduling scheme that monitors the estimated constraint satisfaction probability and adjusts λ to keep this probability within a target band. Regarding T, longer diffusion chains provide more “noise budget” for the reverse network to correct errors and incorporate constraint information, but beyond T≈400 the marginal gains diminish while computational cost rises sharply. The sweet spot (T≈200‑400) balances these factors across all tested CSP types.

The paper also discusses limitations and future directions. The current guidance formulation assumes that constraint violations can be expressed as a differentiable scalar energy, which is straightforward for clause‑count or edge‑conflict metrics but less obvious for higher‑order logical constructs (e.g., quantified formulas, global cardinality constraints). Extending the method to handle such non‑numeric constraints may require symbolic embeddings or auxiliary neural modules that approximate logical satisfaction. Scalability remains a challenge: while the approach works well on problems up to a few hundred variables, memory consumption grows linearly with the product of variable count and diffusion steps, making naïve application to thousand‑variable CSPs impractical. The authors suggest hierarchical diffusion—first solving a coarse‑grained abstraction and then refining sub‑problems—or integrating local graph‑based guidance to reduce the effective dimensionality during sampling. Finally, they explore hybrid pipelines where the diffusion model provides a high‑quality initial guess that is subsequently polished by a conventional SAT or ILP solver, achieving the best of both worlds.

In summary, the work pioneers the marriage of discrete diffusion processes with constraint‑aware guidance, delivering a versatile, data‑driven solver that bridges the gap between deep generative modeling and classical combinatorial optimization. By demonstrating strong empirical performance across diverse CSP benchmarks and providing a principled analysis of guidance dynamics, the paper opens a promising research avenue for applying diffusion‑based generative techniques to a broad class of discrete, constraint‑rich problems.

Comments & Academic Discussion

Loading comments...

Leave a Comment