MemFlow: Flowing Adaptive Memory for Consistent and Efficient Long Video Narratives

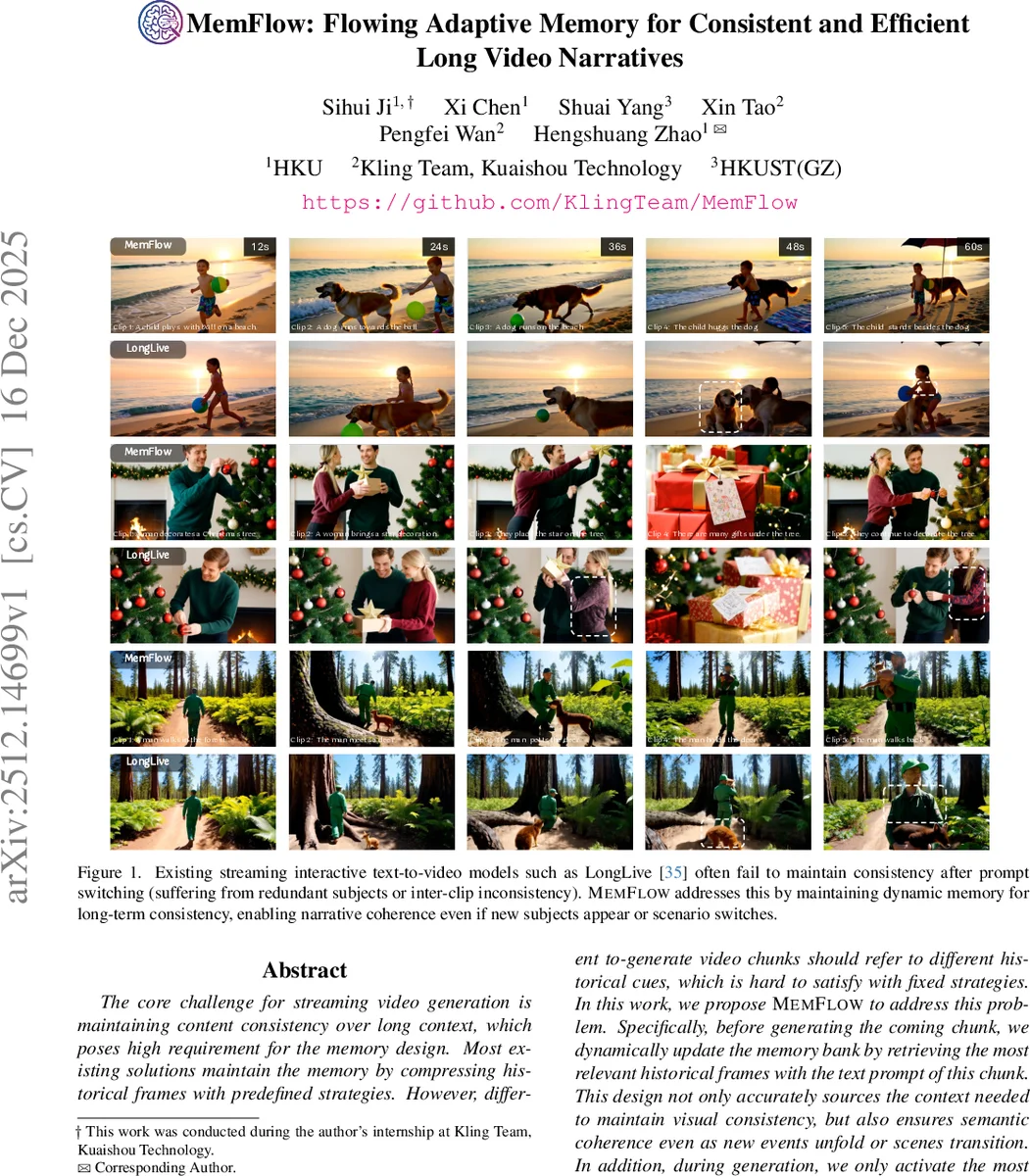

The core challenge for streaming video generation is maintaining the content consistency in long context, which poses high requirement for the memory design. Most existing solutions maintain the memory by compressing historical frames with predefined strategies. However, different to-generate video chunks should refer to different historical cues, which is hard to satisfy with fixed strategies. In this work, we propose MemFlow to address this problem. Specifically, before generating the coming chunk, we dynamically update the memory bank by retrieving the most relevant historical frames with the text prompt of this chunk. This design enables narrative coherence even if new event happens or scenario switches in future frames. In addition, during generation, we only activate the most relevant tokens in the memory bank for each query in the attention layers, which effectively guarantees the generation efficiency. In this way, MemFlow achieves outstanding long-context consistency with negligible computation burden (7.9% speed reduction compared with the memory-free baseline) and keeps the compatibility with any streaming video generation model with KV cache.

💡 Research Summary

The rapid advancement of generative AI has shifted the focus from short-clip synthesis to long-form streaming video generation. However, a fundamental obstacle remains: maintaining “content consistency” across extended durations. As video length increases, maintaining the identity of characters, environmental details, and narrative flow becomes exponentially difficult. Existing streaming video generation frameworks attempt to manage this by compressing historical frames into a fixed memory structure. Yet, these predefined compression strategies suffer from a lack of adaptability; they cannot effectively respond to “semantic shifts,” such as sudden scene transitions or the introduction of new objects, because they rely on static rules rather than context-aware retrieval.

To overcome these limitations, the paper introduces “MemFlow,” a novel framework designed for “Flowing Adaptive Memory.” The core innovation of MemFlow lies in its ability to dynamically align historical memory with the current generative context. Unlike previous methods that use fixed compression, MemFlow utilizes the text prompt of the upcoming video chunk as a query to perform a retrieval-based update of the memory bank. By searching for the most semantically relevant historical frames, MemFlow ensures that the model retains only the essential visual cues necessary for the current segment. This mechanism allows the model to seamlessly navigate through scene changes and new narrative events without losing the thread of the story.

Beyond consistency, MemFlow addresses the critical issue of computational scalability. In long-context generation, the computational cost of the attention mechanism typically grows quadratically with the sequence length. MemFlow mitigates this by implementing an efficient token activation strategy. During the attention process, the model selectively activates only the most relevant tokens from the memory bank for each query. This sparse activation approach prevents the computational explosion associated with long-duration video processing.

The empirical results are highly impressive. MemFlow achieves superior long-context consistency with a negligible impact on performance, incurring only a 7.9% reduction in speed compared to a memory-free baseline. Furthermore, the architecture is designed to be highly compatible with any existing streaming video generation model that utilizes KV caching, making it a plug-and-play solution for the broader research community. In conclusion, MemFlow provides a scalable, efficient, and context-aware solution to the long-context challenge, paving the way for the next generation of continuous, high-fidelity video synthesis.

Comments & Academic Discussion

Loading comments...

Leave a Comment