Segmental Attention Decoding With Long Form Acoustic Encodings

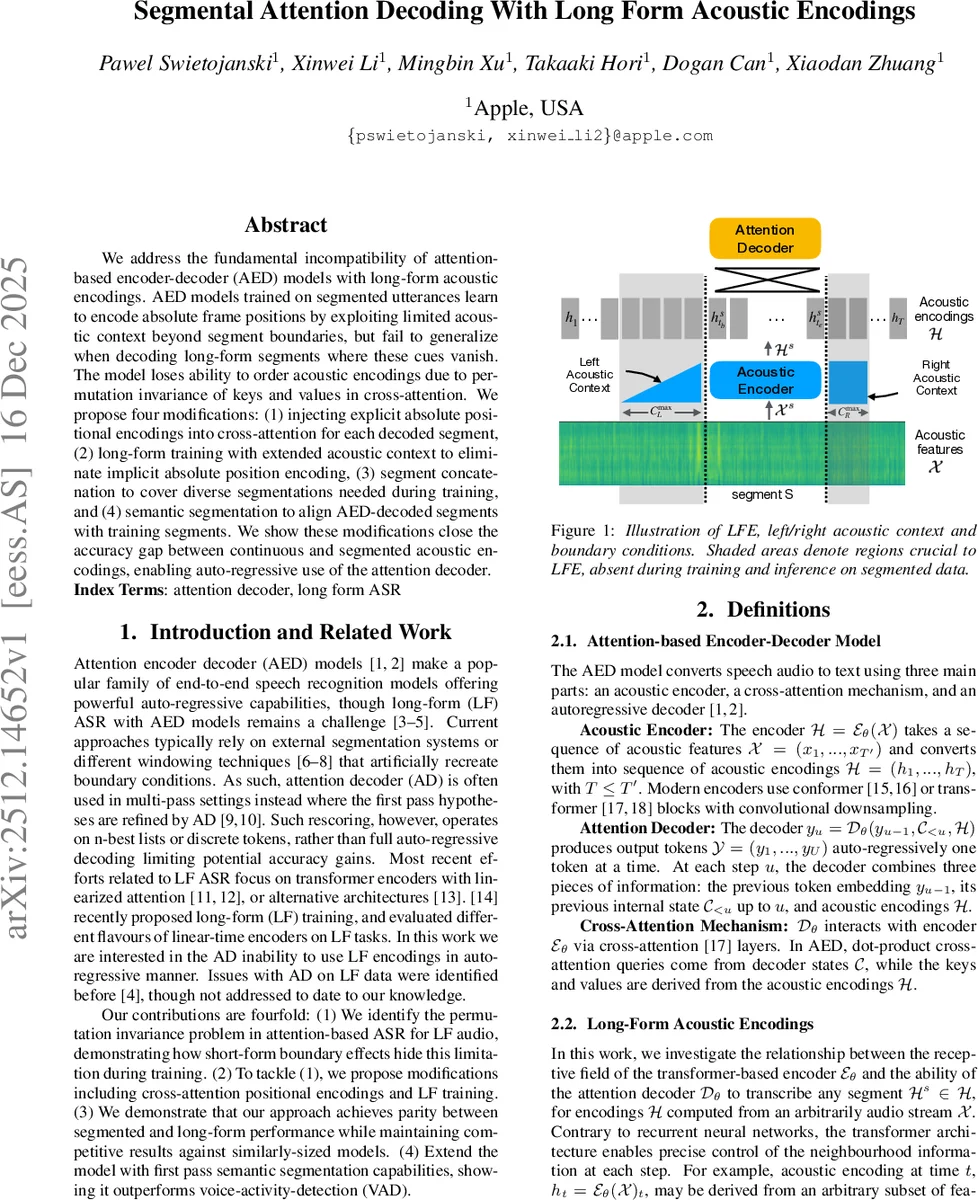

We address the fundamental incompatibility of attention-based encoder-decoder (AED) models with long-form acoustic encodings. AED models trained on segmented utterances learn to encode absolute frame positions by exploiting limited acoustic context beyond segment boundaries, but fail to generalize when decoding long-form segments where these cues vanish. The model loses ability to order acoustic encodings due to permutation invariance of keys and values in cross-attention. We propose four modifications: (1) injecting explicit absolute positional encodings into cross-attention for each decoded segment, (2) long-form training with extended acoustic context to eliminate implicit absolute position encoding, (3) segment concatenation to cover diverse segmentations needed during training, and (4) semantic segmentation to align AED-decoded segments with training segments. We show these modifications close the accuracy gap between continuous and segmented acoustic encodings, enabling auto-regressive use of the attention decoder.

💡 Research Summary

The paper investigates a fundamental mismatch between attention‑based encoder‑decoder (AED) speech‑recognition models and long‑form acoustic encodings (LFE). When AED models are trained on short, segmented utterances, the encoder’s limited left/right context at segment boundaries unintentionally provides each acoustic frame with an implicit absolute position cue. Because cross‑attention is permutation‑invariant, the decoder can rely on these boundary cues to infer temporal order. In a true long‑form scenario, every frame is computed with the full bidirectional context (CmaxL, CmaxR), the boundary cues disappear, and the decoder loses the ability to order the keys and values. This manifests as repeated outputs, failure to emit end‑of‑sentence tokens, and dramatically inflated word‑error rates (WER) on long‑form segments.

To remedy this, the authors propose four complementary modifications:

-

Explicit Positional Encodings (PE) in Cross‑Attention – An absolute positional vector p is added to each segment’s encoder outputs (Hs + p) before they are used as keys and values. This provides a fresh, segment‑local temporal grounding that is independent of the encoder‑input RoPE. Positions are reset for each decoded segment, avoiding unbounded global indices.

-

Long‑Form Training with Expanded Acoustic Context (AC) – During training, each target segment is surrounded by left and right acoustic context (Xs L, Xs R) sufficient to make the central frames true LFEs. The decoder loss is computed only on the central valid frames, forcing the model to rely on the explicit PE and the existing relative positional information rather than on edge artifacts.

-

Segment Concatenation (SC) – Training data are artificially concatenated into longer multi‑segment utterances (up to 2 min 30 s). This exposes the decoder to a wider distribution of segment lengths and boundary patterns, improving robustness to the variability encountered in real long‑form audio.

-

Semantic Segmentation (SS) – A CTC head is equipped with a special “segE” token that marks semantically coherent sentence boundaries. At inference time, the CTC stream provides these tokens, defining the LFEs that the second‑pass attention decoder should process. This aligns decoding boundaries with linguistic units rather than with raw acoustic silence detection (VAD).

The experimental setup uses two model sizes (Ours.base ≈ 90 M parameters, Ours.small ≈ 240 M) built on a CTC‑AED architecture with a fixed 18 M‑parameter transformer decoder and a causal Conformer encoder. Training data combine public corpora (LDC, SpeechOcean, LibriHeavy) and a large‑scale long‑form conversational dataset (SpeechCrawl) that is pseudo‑labeled. Long‑form transformations (AC and SC) are applied to all datasets lacking contiguous recordings.

Results are presented in two tables. Table 1 shows an ablation study where each modification is added sequentially. The baseline (Model 0) suffers a catastrophic 295 % WER on LFEs. Adding SC reduces overall WER but leaves LFEs poor. Adding AC drops LFE WER to 40.3 %, demonstrating that exposing the model to full context is the most impactful single change. Adding PE further halves LFE WER to 14.5 %, confirming that explicit absolute positions help the permutation‑invariant attention recover temporal order. Finally, adding SS yields the best system (Model 5) with LFE WER of 4.7 %—essentially parity with short‑form performance (4.5 %). Table 2 compares the final systems to Whisper baselines on public benchmarks (TED‑LIUM3, Earnings21, CommonVoice, Librispeech). Across all decoding modes (attention rescoring, pure attention, joint CTC‑attention) the proposed models achieve equal or lower WER than Whisper, especially on long‑form tests where Whisper’s performance degrades. Notably, the models maintain low WER even when decoding with larger chunk sizes (3.84 s), indicating robustness to latency‑accuracy trade‑offs.

Key insights include:

- The permutation‑invariance of dot‑product cross‑attention makes AED models fundamentally dependent on any positional signal present in the keys/values. When training data lack a consistent positional signal (as in true LFEs), the decoder fails.

- Providing an explicit, segment‑local absolute positional code restores order information without requiring global position tracking, which would be impractical for streaming.

- Training with full left/right context forces the model to rely on the explicit PE and on the relative positional encodings already present in the encoder inputs, eliminating the shortcut of learning boundary artifacts.

- Segment concatenation enriches the distribution of segment lengths and boundary conditions, further improving generalisation.

- Semantic segmentation via CTC aligns decoding boundaries with linguistic units, enabling the decoder to operate on arbitrary subsets of LFEs without recomputing encoder outputs.

In conclusion, the paper demonstrates that by (1) injecting absolute positional information into cross‑attention, (2) exposing the model to genuine long‑form context during training, (3) diversifying segment lengths through concatenation, and (4) using a CTC‑driven semantic segmentation signal, an AED system can close the performance gap between short‑form and long‑form acoustic encodings. This enables true auto‑regressive decoding on continuous audio streams, opening the door for on‑device, low‑latency, high‑accuracy speech recognition in scenarios such as meetings, broadcasts, and long‑form dictation.

Comments & Academic Discussion

Loading comments...

Leave a Comment